NVIDIA Introduces dual Cortex A9 based Tegra 2

by Anand Lal Shimpi on January 7, 2010 2:00 PM EST

Posted in: Smartphones, Mobile

Atom vs. Cortex A9

With Atom Intel adopted an in-order architecture to save power. With Cortex A9, ARM went out of order to improve performance. Despite the fundamental difference, Atom and ARM's Cortex A9 appear similar from a high level.

Atom has two instruction decoders at the front end, as does the A9. On the A9, the dual decoders feed an instruction queue that can dispatch out of order:

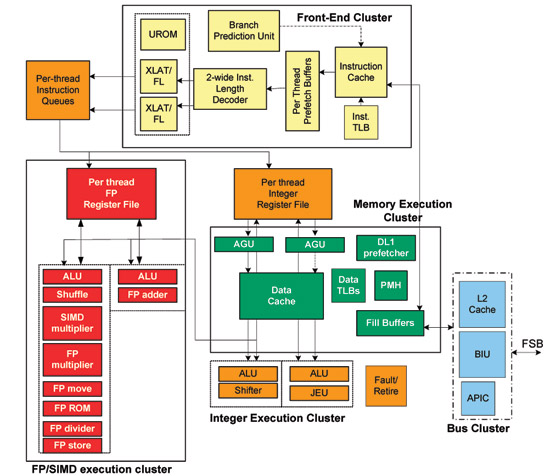

Intel's Atom Architecture

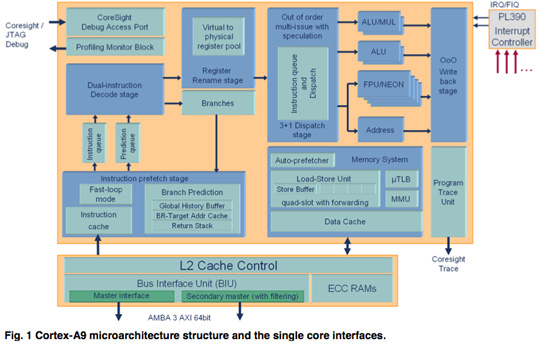

Both architectures appear to have a unified instruction queue that feed four dispatch ports. Atom has two ports that feed AGUs and/or ALUs, and two ports for the FPU (one for SSE and one for FP ops). A9 has two ALU ports, one FPU/NEON port and one AGU port.

Single-core ARM Cortex A9

Atom's advantage here is Hyper-Threading, which lets two threads share its execution resources simultaneously. Cortex A9's advantage is a shallower pipeline and out-of-order execution. Both promote higher IPC but go about it in very different ways. If ARM can get clock speeds high enough, it may actually have the higher performance option.
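To make the in-order versus out-of-order distinction concrete, here is a toy Python sketch. It is not a model of Atom or Cortex A9: the `Instr` type, the `simulate` function, the latencies and the five-instruction program are all made up for illustration, and the model ignores Hyper-Threading, pipeline depth, per-port restrictions and clock speed. It only shows why a dual-issue core that can pick ready instructions from a queue may finish the same code in fewer cycles than one that must issue strictly in program order.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instr:
    dest: str        # register written (assumed to be written only once)
    srcs: tuple      # registers read
    latency: int     # cycles until the result is available


def simulate(program, width=2, out_of_order=False, window=8):
    """Cycles needed to finish `program` on a hypothetical `width`-issue core.

    Assumes every producer appears before its consumers in program order.
    """
    produced = {ins.dest for ins in program}  # registers computed by the program
    ready_at = {}                             # register -> cycle its value is ready
    pending = list(program)
    cycle = 0
    finish = 0
    while pending:
        # An out-of-order core may look at a window of queued instructions;
        # an in-order core only ever considers them in program order.
        candidates = pending[:window] if out_of_order else pending
        issued = []
        for ins in candidates:
            if len(issued) == width:
                break
            operands_ready = all(
                s not in produced or (s in ready_at and ready_at[s] <= cycle)
                for s in ins.srcs
            )
            if operands_ready:
                issued.append(ins)
            elif not out_of_order:
                break  # in-order: a stalled instruction blocks everything behind it
        for ins in issued:
            ready_at[ins.dest] = cycle + ins.latency
            finish = max(finish, cycle + ins.latency)
            pending.remove(ins)
        cycle += 1
    return finish


# Hypothetical instruction stream: a slow load, one dependent op, then independent work.
program = [
    Instr("r1", ("a",), 3),          # load with an assumed 3-cycle latency
    Instr("r2", ("r1",), 1),         # must wait for the load
    Instr("r3", ("b",), 1),          # independent
    Instr("r4", ("c",), 1),          # independent
    Instr("r5", ("r3", "r4"), 1),    # depends only on the independent work
]

print("in-order cycles:    ", simulate(program))
print("out-of-order cycles:", simulate(program, out_of_order=True))
```

On this made-up stream the in-order run takes 6 cycles and the out-of-order run takes 4, because the independent instructions slip past the one waiting on the load. Real gains depend entirely on the code and the memory system, and cycles are only half the story: actual performance is IPC multiplied by clock speed.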

Final Words

Honestly, Tegra 2 is one of the most exciting things I've seen at CES - but it's mostly because of its dual Cortex A9 cores. While I'm excited about improving 3D graphics performance on tablets and smartphones, I believe general purpose performance needs improvement. ARM's Cortex A9 provides that improvement.

The days of me pestering smartphone vendors to drop ARM11 and embrace Cortex A8 are over. It's all about A9 now. NVIDIA delivers one solution with Tegra 2, but TI's OMAP 4 will also ship with a pair of A9s.

The big unknown continues to be actual, comparable power consumption. We're also lacking measurable graphics performance compared to the PowerVR SGX cores (particularly the SGX 540).

If NVIDIA is to be believed, then Tegra 2 is the SoC to get. I suspect that's being somewhat optimistic. But rest assured that if you're buying a smartphone in 2010, it's not Snapdragon that you want but something based on Cortex A9. NVIDIA is a viable option there and we'll have to wait until Mobile World Congress next month to see if there are any promising designs based on Tegra 2.

And just in case I wasn't clear earlier, we will see the first Tegra based Android phone in 2010. I just hope it's good.

55 Comments


T2k - Tuesday, January 12, 2010

http://www.slashgear.com/imagination-technologies-...

Nvidia has nothing against Imagination's new PowerVR chip, period.

Anand is licking the wrong @ss again.

bnolsen - Monday, January 11, 2010

That part is bothersome...the core general purpose cpu being only 10% of the transistors in the package. Makes me wonder if there isn't some better way to design cpus and socs in general.

techadd - Monday, January 11, 2010

Most of the job is now done on specialized processors. Get used to it. The general purpose CPUs are going to matter less and less. They are slow for hard tasks and will be giving way to special gear like video and graphics processors.

jconan - Saturday, January 9, 2010

Is the TEGRA2 CUDA compliant as others have mentioned?

techadd - Sunday, January 10, 2010

I doubt it. That would draw more power. It's good as it is, but I have hopes for future Tegras.

Mike1111 - Saturday, January 9, 2010

Anand, Imagination has their own dedicated HD video decode (VXD) and encode (VXE) processors, just like Nvidia. They offer comparable features (1080p h.264 high profile decode and encode) in a low power envelope. This has nothing to do with the GPU (SGX vs. Nvidia's 2D/3D graphics processor).

VXD390: http://www.imgtec.com/news/Release/index.asp?NewsI...

VXE380: http://www.imgtec.com/news/Release/index.asp?NewsI...

Plus the iPhone 3GS officially supports not only 480p but (720x)576p anamorphic (PAL DVD resolution) with high bitrates (if you go too high you just have to manually restrict your encoder to h.264 level 3.0 or iTunes won't transfer the file). Unofficially the iPhone 3GS supports even 1080p, you just have to know which h.264 options to tweak and how to transfer the file. So the problem with 1080p decode is Apple, not the Samsung SoC. Of course that's nothing compared to the announced Tegra2 SKU, but that's no surprise since it's newer and aimed at tablets/smartbooks etc.

thebeastie - Friday, January 8, 2010

Good article this one, why? Because I had no idea Nvidia were working on a good SoC technology. I simply ignored just about ANYTHING with the word Tegra on it, thinking it was just some power sucking first gut shot thing created by nvidia as a side show.

I was so ultra wrong! This looks truly impressive.

vol7ron - Thursday, January 7, 2010

Anand,

You certainly hyped the A9 up, maybe a little too much. I agree with you and everything, but the repetition of the Cortex A9 support kind of made me a little sick. (please read on)

Personally, I'm happy if there are any improvements, but this still isn't where it should be. What I would like to know, though, is if you plan on doing any performance testing on phone devices in the future?

I believe smartphones/PDAs/pocket pcs - whatever you want to call them - are reaching that last step of maturity and have enough features and variance that they are worthy of testing.

I even started thinking, "should I pay to upgrade my phone?" I have 1 1/2 years left on my contract! Had this been one of my previous, non-touch devices, I would have gladly saved money and waited 'til even after my contract expired. But now, I started thinking that I'm using my phone a lot more than my desktop - the $/time-used would say it'd be a better buy.

Please start doing some in-depth analysis and, if you can, please push the phone manufacturers to include pico-projectors / good external speakers. I for one use my phone to watch my workout videos, it'd be nice just to set it down or let others view things at the same time.

vol7ron

QChronoD - Thursday, January 7, 2010

Dear Santa,

I plan to be very good this year, so please start your elves working on a new phone running Android on a Tegra2 with a 4.5" OLED screen.