AMD Announces ATI Mobility Radeon 5000 Series

by Jarred Walton on January 7, 2010 12:01 AM EST - Posted in Laptops

Performance Preview

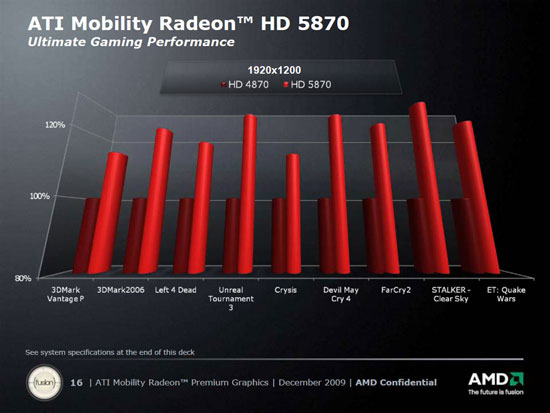

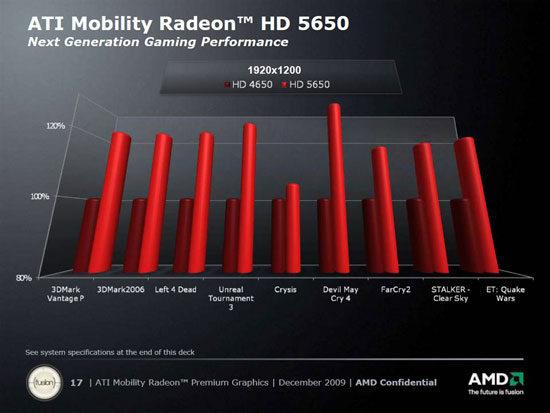

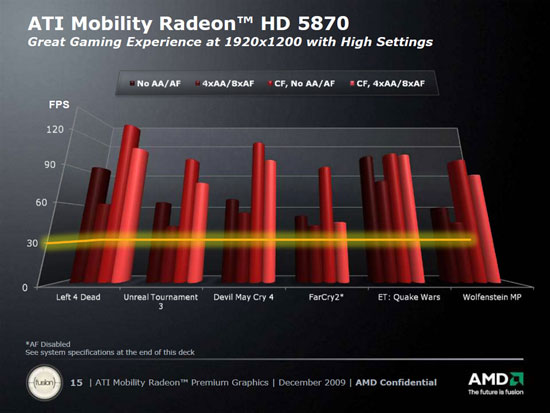

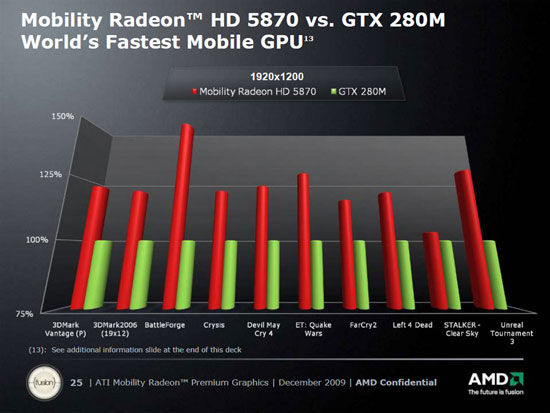

Since we don't have hardware in hand, we are left with the charts that ATI provided. Obviously, you need to take these benchmark results with a huge grain of salt, but for now they're all we have to go on. ATI provided results comparing the performance of their new and old Performance (5650 vs. 4650) and Enthusiast (5870 vs. 4870) solutions, an additional chart looking at WUXGA single-GPU vs. CrossFire performance, and two more charts comparing high-end and midrange ATI vs. NVIDIA performance. Here's what we have to look forward to, based on their testing.

The performance is about what we would expect based on the specifications. Other than the addition of DX11 support, there's nothing truly revolutionary going on. The new 5870 has a higher core clock and more memory bandwidth: 27% more processing power and 11% more bandwidth than the standard HD 4870, to be exact. The average performance improvement appears to be 20-25%, which falls between those two figures and closer to the processing gain. Crysis appears to hit some memory bandwidth constraints, which is why its performance increase isn't as high as in other titles (the 5650 slide shows a clearer example of this).
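One way to see why 20-25% is "in line" with a 27% compute gain and an 11% bandwidth gain is a simple bounding model: a purely shader-bound title would scale with core throughput, a purely bandwidth-bound one with memory bandwidth, and real games land somewhere in between. This is our simplification for illustration, not ATI's methodology; the constants are the percentages quoted above.

```python
# Simplified bounding model (an assumption for illustration, not ATI's method):
# a purely shader-bound title scales with core throughput, a purely
# bandwidth-bound title scales with memory bandwidth, real games fall between.

CORE_GAIN = 1.27       # HD 5870 vs. HD 4870: 27% more processing power
BANDWIDTH_GAIN = 1.11  # HD 5870 vs. HD 4870: 11% more memory bandwidth


def expected_range(core_gain: float, bw_gain: float) -> tuple[float, float]:
    """Return the (lower, upper) speedup bounds under the simple model."""
    return min(core_gain, bw_gain), max(core_gain, bw_gain)


def within(observed: float, bounds: tuple[float, float]) -> bool:
    """True if an observed speedup falls inside the model's bounds."""
    lo, hi = bounds
    return lo <= observed <= hi


bounds = expected_range(CORE_GAIN, BANDWIDTH_GAIN)
for observed in (1.20, 1.25):  # the ~20-25% average read off ATI's chart
    print(f"{observed:.2f}x inside {bounds}? {within(observed, bounds)}")
```

Under this model, a title that lands nearer the 11% end, as Crysis appears to, is leaning on memory bandwidth rather than shader throughput.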

On the midrange ("Performance") parts, the waters are a little murky. The highest-clocked 5600 part (5750 or 5757) can run at 650MHz and provides a whopping 62% boost in core performance relative to the 4650, but that's only 10% more than the HD 4670. With GDDR5, the 5750 also offers a potential 100% increase in memory bandwidth. That said, this slide doesn't show the 5750 or the 4670. Instead, ATI compares the 5650, clocked at 550MHz with 800MHz DDR3, against the 4650 with unspecified clocks (550MHz core and 800MHz DDR3 are typical). That makes the 5650 25% faster in theoretical core performance with the same memory bandwidth. The slide once more shows a 20-25% performance improvement, as expected, except that Crysis clearly hits a memory bottleneck this time. On a side note, running a midrange GPU at 1920x1200 will result in very poor performance in any of these titles, so while the 5650 is 20% faster, we might be looking at 12 FPS vs. 10 FPS.
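The 25% figure follows from the usual way theoretical shader throughput is counted for ATI parts of this generation: stream processors × 2 FLOPs (one multiply-add) × core clock. The sketch below assumes 400 stream processors for the 5650 and 320 for the 4650; those counts are not stated in this article. With both parts at 550MHz, the clock cancels and only the shader-count ratio remains. The 10 FPS baseline is purely illustrative, mirroring the example above.

```python
# Assumed stream-processor counts (not given in this article):
# 400 for the Mobility Radeon HD 5650, 320 for the Mobility Radeon HD 4650.
FLOPS_PER_SP_PER_CLOCK = 2  # one multiply-add per clock counts as 2 FLOPs


def gflops(stream_processors: int, core_mhz: float) -> float:
    """Theoretical single-precision GFLOPS for an ATI GPU of this era."""
    return stream_processors * FLOPS_PER_SP_PER_CLOCK * core_mhz / 1000.0


hd5650 = gflops(400, 550)  # 5650 at 550MHz
hd4650 = gflops(320, 550)  # 4650 at the typical 550MHz
core_gain = hd5650 / hd4650
print(f"5650 vs. 4650 theoretical core: +{(core_gain - 1) * 100:.0f}%")

# A percentage gain on an unplayable baseline is still unplayable.
baseline_fps = 10.0  # illustrative 4650 frame rate at 1920x1200
print(f"{baseline_fps:.0f} FPS * 1.20 = {baseline_fps * 1.20:.0f} FPS")
```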

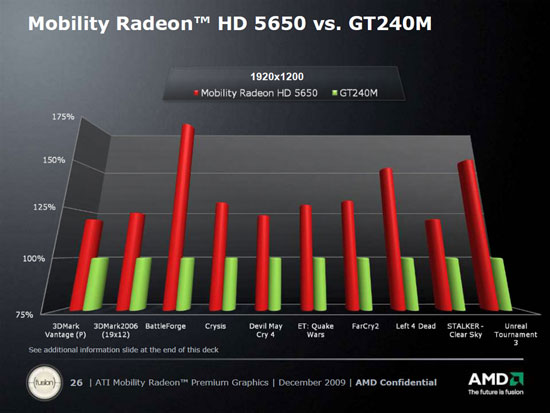

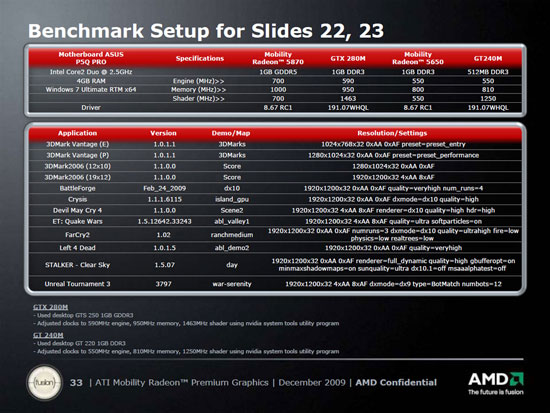

Moving to the ATI vs. NVIDIA slides, it's generally pointless to compare theoretical GFLOPS between the ATI and NVIDIA architectures, as they're not the same. The 5870 has a theoretical ~100% GFLOPS advantage over the GTX 280M but only 5% more bandwidth. The average performance advantage of the 5870 is around 25%, with BattleForge showing a ~55% improvement, so the theoretical 100% GFLOPS advantage clearly isn't showing up here. The midrange showdown between the 5650 and GT 240M shows a somewhat larger average increase of about 30%, with BattleForge, L4D, and UT3 all showing >50% improvements. The theoretical performance of the 5650 is 144% higher than that of the GT 240M, but memory bandwidth is essentially the same (the GT 240M has a 1% advantage). There may be cases where ATI can make better use of its higher theoretical performance, but these results suggest that NVIDIA "GFLOPS" are around 70% more effective than ATI "GFLOPS".
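The "around 70% more effective" estimate can be reproduced from the ratios quoted above. If per-GFLOP efficiency is performance divided by theoretical GFLOPS, then NVIDIA's efficiency divided by ATI's simplifies to (ATI/NVIDIA GFLOPS ratio) / (ATI/NVIDIA performance ratio). The sketch below computes that quotient for both matchups and takes a simple arithmetic mean; the averaging choice is ours, and the performance ratios are the approximate averages from the text, not exact chart values.

```python
# Ratios as read in the text: (ATI/NVIDIA theoretical GFLOPS,
# ATI/NVIDIA average performance). Both are approximate.
MATCHUPS = {
    "HD 5870 vs. GTX 280M": (2.00, 1.25),  # ~100% more GFLOPS, ~25% faster
    "HD 5650 vs. GT 240M": (2.44, 1.30),   # 144% more GFLOPS, ~30% faster
}


def nvidia_per_gflop_advantage(gflops_ratio: float, perf_ratio: float) -> float:
    """(NVIDIA perf per GFLOP) / (ATI perf per GFLOP) = gflops_ratio / perf_ratio."""
    return gflops_ratio / perf_ratio


advantages = {
    name: nvidia_per_gflop_advantage(g, p) for name, (g, p) in MATCHUPS.items()
}
for name, adv in advantages.items():
    print(f"{name}: NVIDIA GFLOPS ~{(adv - 1) * 100:.0f}% more effective")

mean_adv = sum(advantages.values()) / len(advantages)
print(f"Average: ~{(mean_adv - 1) * 100:.0f}% more effective")
```

The two matchups individually work out to roughly 60% and 88%, so "around 70%" is a rough midpoint rather than a constant that holds for every title.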

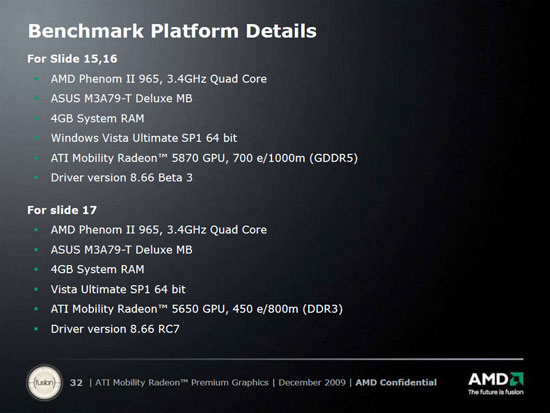

For reference, ATI used a desktop system for the ATI vs. ATI charts and some form of 2.5GHz Core 2 Duo setup for the ATI vs. NVIDIA charts. This isn't necessarily anything fishy, considering there are as yet no laptops with the new ATI hardware and no one offers an identical laptop with support for both ATI and NVIDIA GPUs (well, Alienware has the M17x, but that's about as close as we get). Here are the test details:

The first slide states a clock speed of 450MHz for the 5650, but the second says 550MHz. Given the figures in the ATI performance comparison, we think the 550MHz clock is correct.
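The 550MHz conclusion can be sanity-checked with the numbers above: at equal 550MHz clocks the 5650 has 25% more theoretical core throughput than the 4650, so a 450MHz 5650 would carry only about a 2% theoretical advantage, far from the 20-25% gains in ATI's chart. This sketch assumes the 4650 ran at the typical 550MHz (ATI didn't list its clocks) and, as in the text, treats memory bandwidth as equal.

```python
PER_CLOCK_ADVANTAGE = 1.25     # 5650 vs. 4650 core throughput at equal clocks
HD4650_MHZ = 550               # assumed: typical 4650 clock, not stated by ATI
OBSERVED_RANGE = (1.20, 1.25)  # ~20-25% gains in ATI's 5650 vs. 4650 chart


def theoretical_gain(hd5650_mhz: float) -> float:
    """Theoretical 5650/4650 core-throughput ratio at a given 5650 clock."""
    return PER_CLOCK_ADVANTAGE * hd5650_mhz / HD4650_MHZ


def distance_to_observed(gain: float) -> float:
    """0 if gain is inside the observed range, else distance to its nearest edge."""
    lo, hi = OBSERVED_RANGE
    if lo <= gain <= hi:
        return 0.0
    return min(abs(gain - lo), abs(gain - hi))


candidates = {mhz: theoretical_gain(mhz) for mhz in (450, 550)}
for mhz, gain in candidates.items():
    print(f"{mhz}MHz: +{(gain - 1) * 100:.1f}% theoretical")

best = min(candidates, key=lambda mhz: distance_to_observed(candidates[mhz]))
print(f"Clock most consistent with the chart: {best}MHz")
```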

32 Comments


tntomek - Friday, January 8, 2010

Does anyone have a hint whether these are immediately available? Dell is already selling the new i5 chips but still with the old video cards.

Also, does anyone have a hint of how the video card works in something like a Dell? Is the Intel onboard video totally disabled?

RobotHunter - Saturday, January 9, 2010

I just ordered one yesterday with a $300 off coupon from HP. Core i5 with the 1GB ATI Mobility Radeon HD 5830.

bennyg - Thursday, January 7, 2010

This is what you get when the other mob is rehashing years-old tech. An evolutionary step that's just "enough" to be better, but well below its potential.

pcfxer - Thursday, January 7, 2010

You mean AMD?

JarredWalton - Thursday, January 7, 2010

AMD is the parent company, but ATI is the group within AMD making the GPUs, and the GPUs are still called the "ATI Mobility Radeon". So pretty much you can say AMD or ATI and it's correct.

SlyNine - Saturday, January 9, 2010

I think some people tried to transition to saying AMD, but too many were stuck in their own ways and have an affinity for ATI. I know I feel that way, though I like Nvidia too. Both companies seem to be the epitome of free enterprise: they are very innovative without all the price fixing, unfair business practices and bullcrap you see in other industries.

apriest - Thursday, January 7, 2010

I have spent the last two months in driver hell trying to get two external monitors hooked up to an HP 4510s laptop with an ATI Mobility Radeon HD 4330 for an accounting firm. The laptop has analog and HDMI outputs, but you can't use both at the same time. That leaves USB-based DisplayLink adapters. DisplayLink drivers are the worst on the planet and conflict with HP's video driver. You can't use ATI's official driver without hacking it first with Mobility Modder. If you do that, HP's QuickLaunch application will blue screen. If you remove QuickLaunch, you can't control whether your laptop screen or the other ATI video output is the primary screen, nor can you turn the secondary display on/off. Argh! There is no end to the frustration!

My simple point is, I can't WAIT to have the simplicity of my desktop's triple-head ATI 5870 on a laptop using official ATI drivers. If vendors finally allow at least two digital outputs at the same time as the internal screen using official ATI drivers, it will be nirvana for some of my clients!

Pessimism - Thursday, January 7, 2010

The problem is with the users, not the laptops. For one, I find it hard to justify two monitors, let alone three; that is what Alt-Tab is for. Your brain/eyes can't focus on more than one monitor at a time anyway. People who think multi-display lets them become some superhuman multitasker are fooling themselves. If they still demand three monitors, they should be using a desktop, not a laptop.

The0ne - Friday, January 8, 2010

I think most users wouldn't need a 2nd or even 3rd monitor with their laptop, but in my case I must have a 2nd one, and more importantly a bigger one with a higher resolution. My 17" laptop with 1920x1200 doesn't cut it with my spreadsheets. And although the resolution is nice, things are tiny, and while you can blow things up, that defeats the purpose of having more things in the same amount of space.

In addition to myself, I also set up dual monitors for technicians who use my spreadsheets specifically. It all comes down to being productive.

SlyNine - Friday, January 8, 2010

Total BS. If you believe you can only focus on one monitor at a time, why don't you cut a 24-inch hole in a box that is about an arm's length deep, so all you can see is through the hole in the box?

Walk around like that and see how easy it is.