NVIDIA’s GeForce GT 240: The Card That Doesn't Matter

by Ryan Smith on January 6, 2010 12:00 AM EST. Posted in GPUs

Overclocking

As we briefly mentioned in the introduction, the GT 240 has a 70W TDP, one we believe to be chosen specifically to get as much out of the card as possible without breaking the 75W limit of a PCIe slot. Based on the lower core and shader clock speeds of the GT 240 compared to the GT 220 (not to mention the 8800 GT) we believe it to be a reasonable assumption that the GPU is capable of a great deal more, so long as you’re willing to throw the 75W limit out the window.

There’s also the matter of the RAM. The DDR3 card is a lost cause anyhow thanks to its low performance and the fact that it’s only 10MHz below its RAM’s rated limit, but the GDDR5 cards have great potential. The Samsung chips on those cards are rated for 4000MHz effective, some 17% more than the stock speed of 3400MHz effective. If the memory bus can hold up, then that’s a freebie overclock.
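The headroom figure is simple arithmetic on the two effective data rates quoted above. As a minimal sketch (the function name is ours and purely illustrative), it can be checked like this:

```python
def headroom_percent(stock_mhz: float, rated_mhz: float) -> float:
    """Return how far the rated speed sits above the stock speed, in percent."""
    return (rated_mhz - stock_mhz) / stock_mhz * 100.0


# Figures quoted in the text: 3400MHz effective stock, 4000MHz effective rated.
gddr5_headroom = headroom_percent(3400, 4000)
print(f"GDDR5 headroom over stock: {gddr5_headroom:.1f}%")
```

The unrounded result is a little under 18%, which lines up with the roughly 17% quoted above.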

So with that in mind, we took to overclocking our two GDDR5 cards: The Asus GT 240 512MB GDDR5, and the EVGA GT 240 512MB GDDR5 Superclocked.

For the Asus card, we managed to bring it to 640MHz core, 4000MHz RAM, and 1475MHz shader clock. This is an improvement of 16%, 17%, and 10% respectively. We believe that this card is actually capable of more, but we have encountered an interesting quirk with it.

When we attempt to define custom clock speeds on it, our watt meter shows the power usage of our test rig dropping by a few watts, which under normal circumstances doesn’t make any sense. We suspect that the voltage on the GPU core is being reduced when the card is overclocked, but there’s currently no way to read the GPU voltage of a GT 240, so we can’t confirm this. It does fit all of our data, however, and makes even more sense once we look at the EVGA card.

For the EVGA card, we managed to bring it to 650MHz core, 4000MHz RAM, and 1700MHz shader clock. This is an improvement of 18%, 11%, and 27% respectively.

Unlike with our Asus card, we do not get any anomalous power readings when overclocking the EVGA card, so if our GPU core voltage theory is correct, then this would explain why the two cards overclocked so differently. In any case, the significant shader overclocking should be quite beneficial in what shader-bound situations exist for the GT 240.

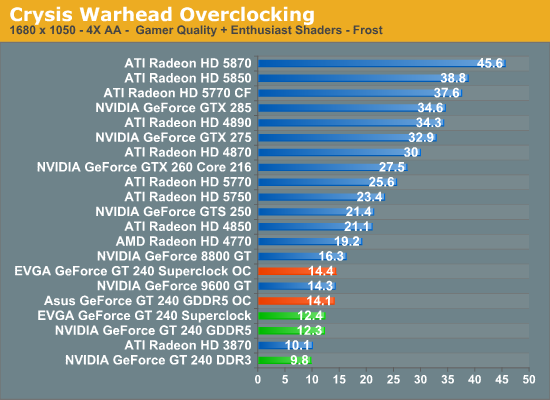

Crysis gives us some very interesting results when overclocked, and this data helps cement the idea that Crysis is ROP-bound on the GT 240. Compared to a stock GT 240, our overclocked Asus and EVGA cards get roughly 15% and 17% more performance. More interestingly, they’re only a few tenths of a frame apart, even though the EVGA card has its shaders clocked significantly higher.

As the performance difference almost perfectly matches the core overclock, this makes Crysis an excellent candidate for proving that the GT 240 can be ROP-bound. It’s a shame that the GT 240’s core doesn’t overclock more than the shaders, as given the ROP weakness we’d rather have more core clockspeed than shader clockspeed in our overclocking efforts.
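To make the ROP-bound argument concrete, here is a rough sketch that compares the Crysis gain against each clock gain. It uses only the rounded percentages quoted in this section, and it assumes the overclock percentages and the Crysis percentages are measured against the same stock baseline; the `scaling` helper is our own illustrative name.

```python
# Percent gains over a stock GT 240, as quoted in the text (rounded figures).
cards = {
    "Asus": {"core": 16, "shader": 10, "crysis": 15},
    "EVGA": {"core": 18, "shader": 27, "crysis": 17},
}


def scaling(perf_gain_pct: float, clock_gain_pct: float) -> float:
    """Fraction of a clock increase that shows up as extra performance."""
    return perf_gain_pct / clock_gain_pct


ratios = {}
for name, gains in cards.items():
    vs_core = scaling(gains["crysis"], gains["core"])
    vs_shader = scaling(gains["crysis"], gains["shader"])
    ratios[name] = (vs_core, vs_shader)
    print(f"{name}: {vs_core:.2f}x of core gain, {vs_shader:.2f}x of shader gain")
```

If performance tracked the shader clock, the two shader ratios would be similar. Instead the core ratios are nearly identical for both cards while the shader ratios are far apart, which is what we would expect if the core clock (and with it the ROPs) is the limiting factor.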

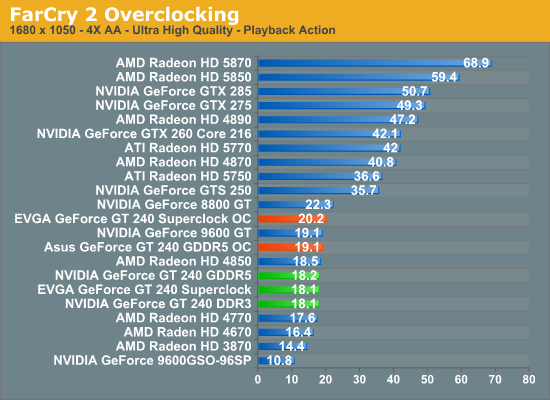

When it comes to Far Cry 2, the significant shader speed differences finally make themselves more apparent. Our cards are still suffering from RAM limitations, but the extra shader speed is still enough to get another 10% out of the EVGA card.

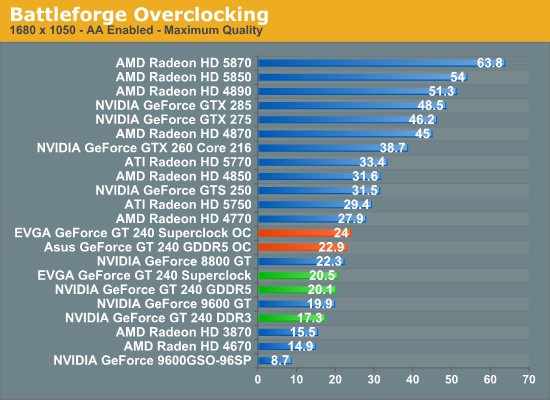

Finally with Battleforge we can see a full stratification of results. The overclocked EVGA card is some 19% faster than a stock GT 240, and 5% faster than the overclocked Asus. Meanwhile the Asus is 14% faster than stock, even with its more limited shader overclock.

On a quick closing note, we’ll talk about power usage. As we mentioned previously, only the EVGA card behaved correctly when overclocking: in overclocking that card we saw a 21W jump from 172W under load to 193W under load. If indeed that card is at or close to 70W under normal circumstances as NVIDIA’s specifications call for, then when overclocked it’s over 90W. It becomes readily apparent here that the clock speeds of the GT 240 were picked by NVIDIA to meet the PCIe power limit rather than the capabilities of the GPU itself.
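The estimate above can be laid out as a short calculation. This is only a sketch under a loud assumption: it attributes the entire change in measured system power to the card itself, which ignores power supply losses and any change in CPU load, so the result should be read as a ballpark rather than a measurement of the card.

```python
PCIE_SLOT_LIMIT_W = 75    # PCIe slot power limit cited in this article
GT240_TDP_W = 70          # NVIDIA's stated TDP for the GT 240
load_stock_w = 172        # measured system power under load, stock clocks
load_overclocked_w = 193  # measured system power under load, overclocked

# Assumption: every extra watt measured for the system is drawn by the card.
delta_w = load_overclocked_w - load_stock_w
estimated_card_w = GT240_TDP_W + delta_w
over_limit_w = estimated_card_w - PCIE_SLOT_LIMIT_W

print(f"Extra system power when overclocked: {delta_w}W")
print(f"Estimated card power when overclocked: {estimated_card_w}W")
print(f"Amount over the PCIe slot limit: {over_limit_w}W")
```

Even allowing for a generous margin of error in that assumption, the overclocked card lands well past what a PCIe slot alone is specified to deliver.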

55 Comments

BernardP - Thursday, January 7, 2010 - link

Despite the fact that it *is* overpriced, I bought the Asus GT240 GDDR5. Why? It fits in my small case while the "green" 9600GT and 9800GT don't. It has "good enough" performance for the light gamer that I am. It is a well-balanced match with my Athlon 64 X2 5400+. I'm staying with my current build and Win XP for the next 2 years, so DX10 or DX11 is not important. It's a near-silent HTPC card, my main use. I favor NVidia drivers, especially their ability to create and scale custom resolutions. Why has ATI still not included this feature in their Catalyst driver? I don't want to fiddle with PowerStrip.

With a bit of fine tuning of the fan speed profile in the Asus SmartDoctor utility, I'm able to keep GPU temps below 56 deg. Celsius while gaming, with little added noise. At idle, the card temp is hovering around 33-34 deg.

Overall, I am very satisfied with my Asus GT240 GDDR5

knowom - Wednesday, January 6, 2010 - link

I really like the low power, heat, and noise on the GT 240; a good fanless one would make an excellent HTPC/DAW candidate. A follow-up review underclocking it and comparing it against a 9600GT and a bunch of integrated graphics and perhaps i5 as well.

philologos - Wednesday, January 6, 2010 - link

I have an aging Dell Dimension E510, for which I bought a Zotac GT240 512MB GDDR5 AMP! edition. I needed a single slot card with very little height, and I also was wary of using the 6-pin connector from my Dynex (aka Be$t Buy) 400w PSU. I really wanted a 5770, but the coolers would have interfered with Dell's CPU cooling "tunnel."

I agree the price should be dropped ten to twenty dollars, but there's been a massive improvement from the 8500GT that it replaced. This should tide me over until I can afford my first home-built. The GT240 might even serve as a PhysX processor if such things don't go the way of the dinosaurs. Basically, I think this card has a definite niche; I didn't look at the 9800GT Greens, unfortunately, but I have doubts one would even fit. There is precious little space for expansion cards in my 'puter.

BelardA - Thursday, January 7, 2010 - link

The 9800GT should fit... even some versions of the ATI 4850.

Dynex PSUs are usually not that good... :(

Check out the 12v rail requirements of the video card, but then again - the GT240 (stupid names) is in the same power class as the ATI 4600s.

Yeah, some people have to bend some metal to make PSUs and cards fit in the Dell E510.

asusmaun - Wednesday, January 6, 2010 - link

Hi! I think the GT 240 models with GDDR5 are extremely sweet and I want to get one. I recommend it!

Look at the fine features:

* Low power / cool temps (69W)

* Quiet

* Small card (eVGA's card is even 1 slot)

* Pure Video 4th and 5th generation (VP4/5). This might be the only card that does VP5 stuff right now.

* Plays most games fine if quality/res not set too high. This is true, more or less, for all graphics cards at some point. You can never keep up with game graphics demands without spending a lot of money. If you can spend that much money, good for you, but many cannot.

* Affordable price for a card with new technology

The next Nvidia model up, the GTS 250 is a huge, hot (145W) card that is old technology dressed up with a new model name (again!). It only can do Pure Video 2nd generation (VP2) video acceleration. Sure, it can play games a little faster, but game performance isn't always the lone recommend factor for choosing your card. And, the GTS 250 does cost more when I looked at prices. If the GT 240 has enough game performance for you right now, then the GTS 250 is not a better card.

I'm not going to talk about Radeon cards because I run on Linux and stay with Nvidia cards. If you are going Nvidia, the GT 240 is in the sweet spot for overall price/performance/features IMO.

If the GT 240 is good enough for you now, the price is maybe low enough also that by the time it seems too slow for you, there will be much better cards with new technology for you to upgrade to later.

To say the card doesn't matter and just not recommend it based on mainly game performance, isn't really looking at this card's features and market from a balanced point of view. The card could be highly recommendable for a computer used for watching movies and some game playing (HTPC or others). This card is just never going to please those kinds of users that are spoiled with the highest-end components all the time - the rest of us have to compromise some and the GT 240 can fit budget and purpose well right now.

This review article, even though it does not recommend the card, might actually cause a lot of people to rush to buy these cards, for fear they will be discontinued! I was actually impressed with the game performance charts. So, this review article may very well still help sell a lot of these cards. :)

AznBoi36 - Wednesday, January 6, 2010 - link

Excellent points all around.

I could see the GT240 as a viable upgrade for those on aging systems (Socket 939/478) and are on a tight budget. Why? Because the CPUs for those platforms most likely aren't fast enough to power many of the newer mainstream cards (ie: keeping the GPU well fed without being CPU limited). Also these users most likely have older monitors @ 1280x1024, and as shown the GT240 has enough oomph to run many of the new games at 1280x1024 with maximum detail and probably some AA/AF.

New build? I can see this possibly going into a HTPC, but not anything else. There's much better cards out there. The 4670 is the better card overall for HTPC and light gaming IMO. Lower price, similar power requirements, heat and noise.

Hauk - Wednesday, January 6, 2010 - link

Man that article title.. ouch! ;)

Spoelie - Thursday, January 7, 2010 - link

It's actually the *message* NVIDIA sent out itself. Not even bothering to send review samples, you're telling the world these cards are low-key and unimportant in the grand scheme of things.

Taft12 - Wednesday, January 6, 2010 - link

"I hesitate to call the GT 240 a “bad” GPU"

You may hesitate but this review clearly shows that the GT240 paired with DDR3 memory indeed makes for a bad GPU. NVidia should have mandated OEMs use GDDR5.

DominionSeraph - Wednesday, January 6, 2010 - link

Your usage of "GPU" shows that you have no idea what one is.

You probably think the "CPU" is the case.