Intel's 8-core Skulltrail Platform: Close to Perfecting the Niche

by Anand Lal Shimpi on February 4, 2008 5:00 AM EST. Posted in: CPUs

Fully Buffered DIMM: An Unnecessary Requirement

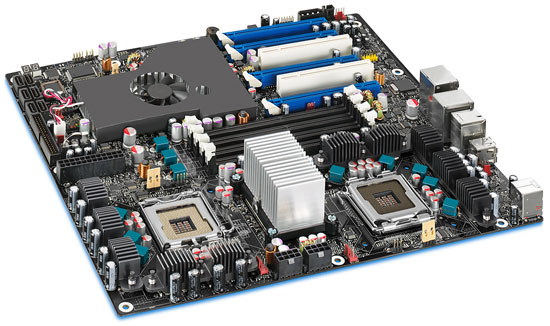

The Intel D5400XS is quite possibly the most impressive part of the entire Skulltrail platform. Naturally it features two LGA-771 sockets, connected to Intel's 5400 chipset via two 64-bit FSB interfaces. The chipset supports the 1600MHz FSB required by the QX9775, but it will work with all other LGA-771 Xeon processors, in case you happen to have some lying around your desk too.

Thanks to the Intel 5400 chipset, the D5400XS can only use Fully Buffered DIMMs. If you're not familiar with FBD, here's a quick refresher taken from our Mac Pro review:

Years ago, Intel saw two problems happening with most mainstream memory technologies: 1) as we pushed for higher speed memory, the number of memory slots per channel went down, and 2) the rest of the world was going serial (USB, SATA and more recently, HyperTransport, PCI Express, etc.) yet we were still using fairly antiquated parallel memory buses.

The number of memory slots per channel isn't really an issue on the desktop; currently, with unbuffered DDR2-800 we're limited to two slots per 64-bit channel, giving us a total of four slots on a motherboard with a dual channel memory controller. With four slots, just about any desktop user's needs can be met with the right DRAM density. It's in the high end workstation and server space that this limitation becomes an issue, as memory capacity can be far more important, often requiring 8, 16, 32 or more memory sockets on a single motherboard. At the same time, memory bandwidth is also important as these workstations and servers will most likely be built around multi-socket multi-core architectures with high memory bandwidth demands, so simply limiting memory frequency in order to support more memory isn't an ideal solution. You could always add more channels, however parallel interfaces by nature require more signaling pins than faster serial buses, and thus adding four or eight channels of DDR2 to get around the DIMMs per channel limitation isn't exactly easy.

Intel's first solution was to totally revamp PC memory technology: instead of going down the path of DDR and eventually DDR2, Intel wanted to move the market to a serial memory technology, RDRAM. RDRAM offered significantly narrower buses (16 bits per channel vs. 64 bits per channel for DDR), much higher bandwidth per pin (at the time a 64-bit wide RDRAM memory controller would offer 6.4GB/s of memory bandwidth, compared to a 64-bit DDR266 interface which at the time could only offer 2.1GB/s of bandwidth) and of course the ease of layout benefits that come with a narrow serial bus.

Unfortunately, RDRAM offered no tangible performance increase, as the demands of processors at the time were nowhere near what the high bandwidth RDRAM solutions could deliver. To make matters worse, RDRAM implementations were plagued by higher latency than their SDRAM and DDR SDRAM counterparts; with no use for the added bandwidth and higher latency, RDRAM systems were no faster, if not slower, than their SDR/DDR counterparts. The final nail in the RDRAM coffin on the PC was the issue of pricing; your choices at the time were these: either spend $1000 on a 128MB stick of RDRAM, or spend $100 on a stick of equally performing PC133 SDRAM. The market spoke and RDRAM went the way of the dodo.

Intel quietly shied away from attempting to change the natural evolution of memory technologies on the desktop for a while. Intel eventually transitioned away from RDRAM, even after its price dropped significantly, embracing DDR and more recently DDR2 as the memory standards supported by its chipsets. Over the past couple of years however, Intel got back into the game of shaping the memory market of the future with this idea of Fully Buffered DIMMs.

The approach is quite simple in theory: what caused RDRAM to fail was the high cost of using a non-mass produced memory device, so why not develop a serial memory interface that uses mass produced commodity DRAMs such as DDR and DDR2? In a nutshell, that's what FB-DIMMs are: regular DDR2 chips on a module with a special chip that communicates over a serial bus with the memory controller.

The memory controller in the system stops having a wide parallel interface to the memory modules; instead, it has a narrow 69-pin interface to a device known as an Advanced Memory Buffer (AMB) on the first FB-DIMM in each channel. The memory controller sends all memory requests to the AMB on the first FB-DIMM on each channel and the AMBs take care of the rest. By fully buffering all requests (data, command and address), the memory controller no longer has a load that significantly increases with each additional DIMM, so the number of memory modules supported per channel goes up significantly. The FB-DIMM spec says that each channel can support up to 8 FB-DIMMs, although current Intel chipsets can only address 4 FB-DIMMs per channel. With a significantly lower pin count, you can cram more channels onto your chipset, which is why the Intel 5000 series of chipsets features four FBD channels.

The AMB has two major roles: to communicate with the chipset's memory controller (or other AMBs) and to communicate with the memory devices on the same module.

When a memory request is made, the first AMB in the chain figures out whether the request is a read/write to its own module or to another module. If it's the former, the AMB parallelizes the request and sends it off to the DDR2 chips on the module. If the request isn't for this specific module, the AMB passes it on to the next AMB and the process repeats.
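The forwarding behaviour described above can be sketched as a toy model. This is only an illustration, not the FB-DIMM protocol: the `AMB` class, the `route` function, and the idea that each module owns one contiguous address range are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class AMB:
    """Toy Advanced Memory Buffer (hypothetical model, not the real protocol)."""
    module_id: int
    base: int          # first address owned by this module (assumed layout)
    size: int          # number of addresses owned by this module
    cells: dict = field(default_factory=dict)  # stands in for the DDR2 chips

    def owns(self, addr: int) -> bool:
        return self.base <= addr < self.base + self.size


def route(chain, op, addr, value=None):
    """Pass a request down the chain; return (result, number of AMBs visited)."""
    for hops, amb in enumerate(chain, start=1):
        if amb.owns(addr):
            # Request is for this module: hand it to the local DRAM.
            if op == "write":
                amb.cells[addr] = value
                return None, hops
            return amb.cells.get(addr), hops
        # Not for this module: fall through to the next AMB in the chain.
    raise ValueError("address not mapped to any module on this channel")


# Four modules on one channel, 1024 addresses each (arbitrary sizes).
chain = [AMB(module_id=i, base=i * 1024, size=1024) for i in range(4)]
route(chain, "write", 2050, 0xAB)
result, hops = route(chain, "read", 2050)
print(f"read {result:#x} after visiting {hops} AMBs")
```

In this model a request for a module further down the chain visits more AMBs before it is serviced, which is one way to picture why populating more FB-DIMMs per channel raises latency.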

As we've seen, the AMB translation process introduces a great deal of latency to all memory accesses (it also adds about 3-6W of power per module), negatively impacting performance. The tradeoff is generally worth it in workstation and server platforms because the ability to use even more memory modules outweighs the latency penalty. The problem with the D5400XS motherboard is that it only features one memory slot per FBD channel, all but ruining the point of even having FBD support in the first place.

Four slots, great. We could've done that with DDR3, guys.

You do get the benefit of added bandwidth since Intel is able to cram four FBD channels into the 5400 chipset; the problem is that the two CPUs on the motherboard can't use all of the bandwidth. Serial buses inherently have more overhead than their parallel counterparts, but the 38.4GB/s of memory bandwidth offered by the chipset is impressive sounding for a desktop motherboard. You only get that full bandwidth if all four memory slots are populated, and doing so increases latency as well.

Some quick math will show you that peak bandwidth between the CPUs and the chipset is far less than the 38.4GB/s offered between the chipset and memory. Even with a 1600MHz FSB we're only talking about 25.6GB/s of bandwidth. We've already seen that the 1333MHz FSB doesn't really do much for a single processor, so a good chunk of that bandwidth will go unused by the four cores connected to each branch.
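The quick math can be written out as a short sketch. The helper name `peak_bandwidth_gbs` is made up for this example, and the calculation assumes one transfer of the full bus width per FSB "MHz" (that is, 1600 million transfers per second), with decimal gigabytes. The 38.4GB/s memory figure is taken as quoted rather than derived.

```python
def peak_bandwidth_gbs(bus_width_bits, mega_transfers_per_sec, links=1):
    """Peak bandwidth in GB/s for `links` identical parallel buses."""
    bytes_per_transfer = bus_width_bits / 8
    return links * bytes_per_transfer * mega_transfers_per_sec / 1000


# Two independent 64-bit front side buses, one per CPU socket.
fsb_1600 = peak_bandwidth_gbs(64, 1600, links=2)
fsb_1333 = peak_bandwidth_gbs(64, 1333, links=2)

# Chipset-to-memory bandwidth as quoted in the text (not derived here).
memory_gbs = 38.4

print(f"2 x 1600MHz FSB: {fsb_1600:.1f} GB/s")
print(f"2 x 1333MHz FSB: {fsb_1333:.1f} GB/s")
print(f"Memory bandwidth the CPUs cannot reach: {memory_gbs - fsb_1600:.1f} GB/s")
```

Under these assumptions, the gap between the memory side and the CPU side is the portion of FBD bandwidth that the two front side buses cannot consume even at their theoretical peak.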

The X38/X48 dual channel DDR3-1333 memory controller would've offered more than enough bandwidth for the two CPUs, without all of the performance and power penalties associated with FBD. Unfortunately a side effect of choosing to stick with a Xeon chipset is that FBD isn't optional - you're stuck with it. As you'll soon see, this is a side effect that does really hurt Skulltrail.

30 Comments


moiettoi - Friday, June 27, 2008 - link

Hi all. This sounds like a great board, and for someone like me who uses 4x 22" monitors and does heaps of multitasking it sounds perfect. I would gladly pay the price asked.

BUT why is such a great board slowed right down by not having DDR3 memory sticks? From what I've read, at the moment there is not that much difference between running this and what I have now, which is a quad core with DDR3 that runs great, though I do overwork it. So bigger would be better.

You would think, and I'm sure they already know, that it would be common sense to make this board with DDR3, as that is its only fault as far as I can see.

We will probably see that board come out soon, or next in line once they have sold enough of these to satisfy their egos.

Great board, but just not yet. I will be waiting for the next one out, which will have to carry DDR3 if they want to go forward in their technology.


VooDooAddict - Thursday, February 7, 2008 - link

For testers of large distributed systems this is an awesome thing to have sitting on your desk. You can have a small server room running on one of these.

The biggest shortfall I see is cramming enough RAM on it.

iSOBigD - Tuesday, February 5, 2008 - link

I'm actually very disappointed with 3D rendering speed. Going from 1 core to 4 cores takes my rendering performance up by close to 400% (16 seconds to 4.something seconds, etc.) in Max with any renderer. (I've tried Scanline, MentalRay and VRay) ...so I'm surprised that going from 4 to 8 gives you 40-60% more speed. That's pretty pathetic, so I suspect the board is to blame, not the software.

martin4wn - Tuesday, February 5, 2008 - link

Actually 40-60% is not disappointing at all, it's quite impressive. You are encountering the realities of Amdahl's law, which is that only the parallel part of the app scales. Here's a simple workthrough:

Say the application is 94% parallel code and 6% serial. As you add cores, say the parallel part scales perfectly, so doubles in speed with every doubling in core count. Now say the runtime on one core is 16 seconds (your example). Of that, 1 second is serial code and the other 15 seconds is parallel code running serially.

Now running on a 4 core machine, you still have the 1s serial, but the parallel part drops to 15/4 = 3.75 seconds. Total runtime 4.75s. Overall scaling is 3.4x. Now go to 8 cores. Total runtime = 1 + 15/8 = 2.88s. Scaling of about 65% going from 4 cores to 8 cores, and overall scaling of about 5.6x.

So the numbers are actually consistent with what you are seeing. It's a great illustration of the power of Amdahl's law: even an app that is 94% parallel still only gains about 65% going from 4 to 8 cores even with perfect scaling, and it's really hard to get good scaling at even moderate core counts. Once you get to 16 or more cores, expect scaling to fall off even more dramatically.
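The workthrough above can be reproduced with a few lines of Python. This is a minimal sketch under the same simplifying assumptions as the comment (1 second of serial work, 15 seconds of perfectly scaling parallel work); the function name `amdahl_runtime` is made up for the example.

```python
def amdahl_runtime(serial_s, parallel_s, cores):
    """Runtime when only the parallel portion is divided across cores."""
    return serial_s + parallel_s / cores


serial_s, parallel_s = 1.0, 15.0   # the 16-second example, split 1s / 15s

t1 = amdahl_runtime(serial_s, parallel_s, 1)
t4 = amdahl_runtime(serial_s, parallel_s, 4)
t8 = amdahl_runtime(serial_s, parallel_s, 8)

for cores in (1, 4, 8, 16):
    t = amdahl_runtime(serial_s, parallel_s, cores)
    print(f"{cores:2d} cores: {t:.3f}s, {t1 / t:.2f}x over one core")

# Relative gain from doubling 4 cores to 8 cores.
gain_4_to_8 = t4 / t8 - 1
print(f"4 -> 8 cores: {gain_4_to_8:.0%} faster")
```

Changing `serial_s` and `parallel_s` shows how quickly the gain from extra cores shrinks as the serial share grows.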

ChronoReverse - Tuesday, February 5, 2008 - link

This is why I'm quite happy with my quad core. What would probably be the useful limit on the desktop would be a quad core with SMT. After that faster individual cores will be needed regardless of how parallel our code gets (face it, you're not getting 90% parallelizable software most of the time and even then 8 cores over 4 isn't getting more than about 50% boost in the best case for 90% parallel code).

FullHiSpeed - Tuesday, February 5, 2008 - link

Why the heck does this D5400XS MB support only the QX9775 CPU??? If you need to use 8 cores you can get a lot more bang for the buck with quad core Xeon 5400 series, with only 80 watts TDP each, up to 3 GHz. For a TOTAL of $508 ($254 each quad) you can have 8 cores @ 2 GHz.

Last month I built a system with a Supermicro X7DWA-N MB ($500) and 4 gig of DDR2 667 ($220) and a single 2.83 GHz Xeon E5440 ($773), which I use to test Gen 2 PCIE, dual channel 8 Gb/s Fibre Channel boards, two boards at once.

Starglider - Tuesday, February 5, 2008 - link

Damnit. AMD could've destroyed this if they'd gotten their act together. Tyan makes a 4 socket Opteron board that fits into an E-ATX form factor: http://www.tyan.com/product_board_detail.aspx?pid=...

I was strongly tempted to get one before the whole Barcelona launch farce. If AMD hadn't made such horrible execution blunders and could have devoted the kind of resources Intel had to a project like this, we could have four Barcelonas running at 3 to 3.6 GHz with eight DDR2 slots all on a dedicated channel. Ah well. Guess I'll be waiting for Nehalem.

enigma1997 - Tuesday, February 5, 2008 - link

Note what Francois said in his Feb 04 reply re memory timing: http://blogs.intel.com/technology/2008/01/skulltra... Do you think it would help the latency and make it closer to DDR2/DDR3 ones? Thanks.

enigma1997 - Tuesday, February 5, 2008 - link

CL3 FBDIMM from Kingston would be "insanely fast"?! Have a read of this article: http://www.tgdaily.com/content/view/34636/135/

Visual - Tuesday, February 5, 2008 - link

I must say, I am very disappointed.

Not from performance - everything is as expected on this front... I didn't even need to see benchmarks about it.

But prices and availability are hell. AMD giving up on QuadFX is hell. Intel not letting us use DDR2 is hell.

I was really hoping I could get a dual-socket board with a couple (or quad) PCI-express x16 slots and standard ram, coupled with a pair of relatively inexpensive quadcore CPUs. Why is that too much to ask?

The ASUS L1N64-SLI WS board has been available for an eon now, costs less than $300 and has quite a good feature set. Quadcore Opterons for the same socket are also available for more than a quarter, some models as cheap as $200-$250.

Unfortunately, for some god-damned reason neither ASUS nor AMD is willing to make this board work with these CPUs. The board works just fine with dual-core Opterons, all the while using standard unbuffered unregistered DDR2 modules, but not with quad cores? WTF.

And that board is old like the world now. I am quite certain AMD could, if they wanted, have a refresh already - using the newest and coolest chipsets with PCIe 2.0, HT 3.0, independent power planes for each cpu, etc.

Intel could also certainly have a dual socket board that works with cheap DDR2, has plenty of PCI-express slots, and takes the cheap $300 quad-core Xeons that are out already instead of the $1500 "extremes".

I feel like the industry is purposely slowing, throttling technological progress. It's like AMD and Intel just don't want to give us the maximum of their real capabilities, because that would devalue their existing products too quickly. They are just standing around idly most of the time, trying to sell out their old tech.

Same as nVidia not letting us have SLI on all boards, or ATI not allowing crossfire on nforce for that matter.

Same as a whole lot of other manufacturers too...

I feel like there is some huge anti-progress conspiracy going on.