The Road to Acquisition

The CPU and the GPU have been on this collision course for quite some time. Although we often refer to the CPU as a general purpose processor and the GPU as a graphics processor, the reality is that they are both general purpose. The GPU is merely a highly parallel general purpose processor, one that is particularly well suited to applications such as 3D gaming. As the GPU became more programmable and thus more general purpose, its highly parallel nature became interesting to new classes of applications: things like scientific computing are now within the realm of possibility for execution on a GPU.

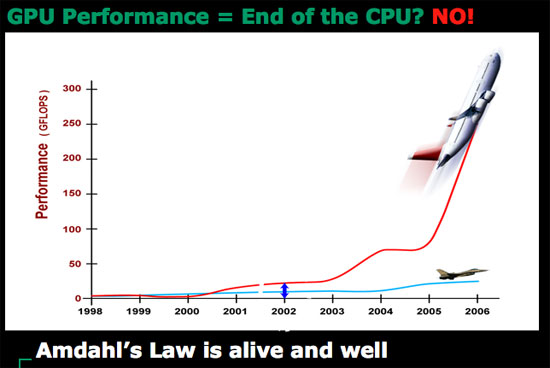

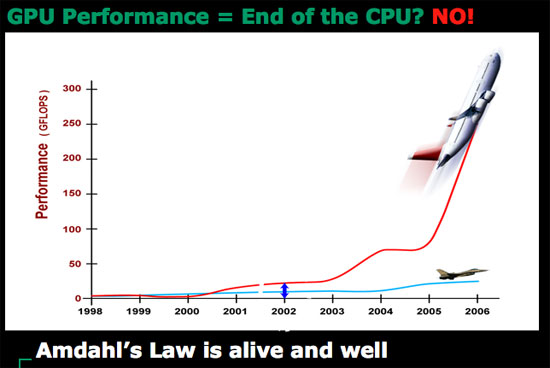

Today's GPUs are vastly superior to what we currently call desktop CPUs when it comes to things like 3D gaming, video decoding and a lot of HPC applications. The problem is that a GPU is fairly worthless at sequential tasks, meaning that it relies on a fast host CPU to handle everything it isn't good at.

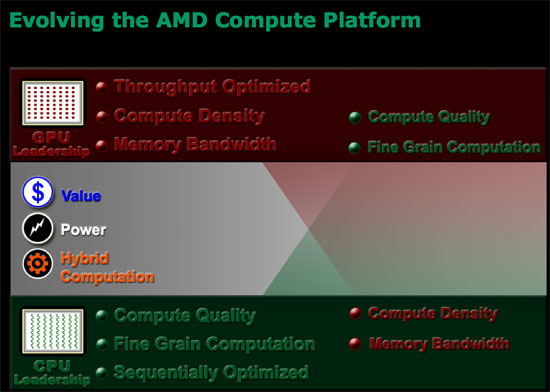

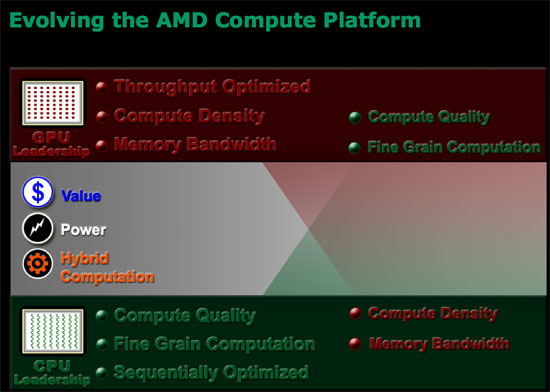

ATI discovered that long term, as the GPU grows in power, it will eventually be bottlenecked by its ability to do high speed sequential processing. In the same vein, the CPU will eventually be bottlenecked by its ability to do highly parallel processing. In other words, GPUs need CPUs and CPUs need GPUs for all workloads going forward. Neither approach on its own will solve every problem and run every program optimally; the combination of the two is what is necessary.
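The bottleneck argument can be made concrete with Amdahl's law, which the article does not name but which describes the same effect: if only part of a workload can be accelerated, the untouched sequential part caps the overall speedup. The sketch below is illustrative only. The function name `amdahl_speedup` and the 90% parallel fraction are assumptions chosen for the example, not figures from ATI or AMD.

```python
def amdahl_speedup(parallel_fraction: float, parallel_speedup: float) -> float:
    """Overall speedup when only `parallel_fraction` of the runtime is accelerated.

    parallel_fraction: share of the original runtime that the parallel
        processor (e.g. a GPU) can take over, between 0.0 and 1.0.
    parallel_speedup: how many times faster that share runs once offloaded.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if parallel_speedup <= 0:
        raise ValueError("parallel_speedup must be positive")
    sequential_fraction = 1.0 - parallel_fraction
    # The sequential share still runs at the original speed.
    return 1.0 / (sequential_fraction + parallel_fraction / parallel_speedup)


if __name__ == "__main__":
    # Hypothetical workload: 90% parallel, 10% sequential.
    for gpu_speedup in (2, 10, 100, 1000):
        overall = amdahl_speedup(0.9, gpu_speedup)
        print(f"parallel part {gpu_speedup:>4}x faster -> overall {overall:.2f}x")
```

Under these assumed numbers the overall speedup never reaches 10x no matter how fast the parallel part becomes, because the remaining 10% of sequential work dominates. Speeding up that sequential portion is the only way past the ceiling, which is the case for pairing a strong sequential processor with a strong parallel one.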

ATI came to this realization originally when looking at the possibilities for using its GPU for general purpose computing (GPGPU), even before AMD began talking to ATI about a potential acquisition. ATI's Bob Drebin (formerly the CTO of ATI, now CTO of AMD's Graphics Products Group) told us that as he began looking at the potential for ATI's GPUs he realized that ATI needed a strong sequential processor.

We wanted to know how Bob's team solved the problem, because obviously they had to come up with a solution other than "get acquired by AMD". Bob didn't answer the question directly; instead he said, with a smile, that ATI tried to pair its GPUs with as low-power a sequential processor as possible but always ran into the same problem: the sequential processor became the bottleneck. In the end, Bob believes that the AMD acquisition made the most sense because the new company is able to combine a strong sequential processor with a strong parallel processor, eventually integrating the two on a single die. We really wanted to know what ATI's "plan B" would have been had the acquisition not worked out, because we're guessing that ATI's backup plan is probably very similar to what NVIDIA has planned for its future.

To understand the point of combining a highly sequential processor like a modern desktop CPU with a highly parallel GPU, you have to look beyond the gaming market, into what AMD is calling stream computing. AMD perceives a number of potential applications that will require a very GPU-like architecture, some of which we already see today. Simply watching an HD-DVD can eat up almost 100% of some of the fastest dual core processors today, while a GPU can perform the same decoding task with much better power efficiency. H.264 encoding and decoding are perfect examples of tasks that are better suited to highly parallel processor architectures than to the architectures desktop CPUs are currently built on. But just as video processing is important, so are general productivity tasks, which is where we need the strengths of present day out-of-order superscalar CPUs. A combined architecture that can excel at both types of applications is clearly a direction that desktop CPUs need to target in order to remain relevant in future applications.

Future applications will easily combine stream computing with more sequential tasks, and we already see some of that now with web browsers. Imagine browsing a site like YouTube except where all of the content is much higher quality and requires far more CPU (or GPU) power to play. You need the strengths of a high powered sequential processor to deal with everything other than the video playback, but then you need the strengths of a GPU to actually handle the video. Examples like this one are overly simple, as it is very difficult to predict the direction software will take when given even more processing power; the point is that CPUs will inevitably have to merge with GPUs in order to handle these types of applications.

55 Comments

osalcido - Sunday, May 13, 2007 - link

Like they price gouged when X2s were introduced? If so, I hope Intel kills them off. If I'm gonna get gouged I'd rather it be by a monopoly so maybe the government will do something about it

Spoelie - Sunday, May 13, 2007 - link

there was really no price gouging as far as I know. AMD was capacity constrained, they were selling every possible cpu they could make at those prices, and even had backorders. I remember that around the summer of 2005, they had sold out their capacity for at least the next 6 months. It would be absolutely economically INSANE to lower prices under those conditions. If you sell every single cpu you can make, you're not gonna lower prices to increase demand..

But well yeah, around feb 2006 came the news of core 2 ;)

Kougar - Sunday, May 13, 2007 - link

I'm not sure, but whatever image protection you are using to protect direct image linking seems to now be breaking ALL images in Opera. I have had problems in the past regarding Anandtech review/article images, but chalked it up to a browser setting I could not pin down. So far it still only happens with Anandtech images, and after a full reinstall of Opera 9.20 I still can't see any images or any image placeholders in this article. I did not know there were even any images until I got to page 7 where the captions were left hanging in midpage. I really hate having to switch to IE7 to read articles, so if this can be easily fixed I'd very much appreciate it. Thanks.

If it helps any, if I am looking for it I can sometimes spot an image start to load, before it is near instantly removed from the page and the text reshuffled to fill the empty void.

bigbrent88 - Sunday, May 13, 2007 - link

I know this may be a simple way to look at AMD's Fusion and future chips based on that idea, but isn't this close to what the Cell already is? Imagine you could remake the Cell with a current C2D (using current power leader) and include more, better SPEs with something like HT in AM2 and all of this is on a smaller die than you could do now. Would that not be the basic first step they are going to take? Many have said the Cell is ahead of its time and I also agree that some design elements are inhibiting its overall power, but the success of Folding shows what the Cell's processing can do in these types of environments and that's what AMD is looking at in the near term. I just can't wait to drop my x2 3800 and get a good upgrade to go along with that new DX10 card sometime in the next year. Bring it AMD!

noxipoo - Friday, May 11, 2007 - link

get everything in focus for christ sakes.

plonk420 - Friday, May 11, 2007 - link

it's interesting how many commercial programs aren't multithreaded. take a look at this year's Breakpoint demos/intros, and just about ALL the top 3 or 5 (or more) take advantage of 2 cores (i don't have more than 2 to know if they would make use of the extra ones or not). check out the Breakpoint 2007 entries at pouet.net and fire something up with a Task Manager open on a second monitor and see for yourself ;)

OcHungry - Friday, May 11, 2007 - link

From the tone of your article I have no doubt AMD is about to put Intel where it belongs, in the so-so technology arena with lots of marketing maneuvering to sell inferior products. I like the Jetliner graph where the Airbus is taking off at a steep angle and the other small jet is going horizontal w/a little inclination. That says it all, and how the 2 (Intel and AMD) are perceived in the technology world. It’s like this: Intel refines the same old, but AMD is into innovation and new things. Good for AMD, it’s about time. The heterogeneous architecture, the Fusion, and Torrenza, are where computing technology should be heading, and AMD is taking the lead, as always. I live in Austin, TX, and have a few friends working @ AMD and tell me: buy AMD shares as much as you can, because good things are about to explode and neither Intel, nor Nvidia can catch up to it, ever.

sandpa - Friday, May 11, 2007 - link

actually they are asking everybody to buy AMD shares so that they can sell off their worthless AMD stock for a better price :) don't listen to them ... they are not your friends. No one will be able to catch up with AMD "ever" ??? yeah keep dreaming fanboi!

OcHungry - Friday, May 11, 2007 - link

Yeah right. Tell that to Fidelity who bought more of AMD shares lately (13% total). And I guess the rise in price yesterday and today were meaningless?

Intel marketing thugs are at work, no change there.

http://www.theinquirer.net/default.aspx?article=39...

yyrkoon - Friday, May 11, 2007 - link

This is exactly what I was thinking while reading, then I ran into the above paragraph, and my suspicions were 'reinforced'. However, if this is the case, I can not help but wonder what will happen to nVidia. Will nVidia end up like 3dfx ? I guess only time will tell. There is a potential problem I am seeing here however, if we do finally get integrated graphics on the CPU die, what next ? Audio ? After a while this could be a problem for the consumer base, and may resemble something along the lines of how a lot of Linux users view Microsoft, with their 'Monopoly'. In the end, 'we' lose flexibility, and possibly the freedom to choose what software will actually run on our hardware. This is not to say I buy into this belief 100%, but it is a distinct possibility.

Apparently Intel suspects something is going on as well. One look at the current prices of the E6600 C2D should confirm this, as it's currently half the price of what it was a month ago. Unless, there is something else I am missing, but the Extreme CPUs still seem to be hovering around ~$1000 usd.

I am very pleased to hear that AMD is continuing support for Socket AM2. It was my previous belief, that they were going to phase this socket out, for a newer socket, and if this was the case a few months ago, I am glad that they listen, and learn. Releasing products that underperform the competition is one thing, but alienating your user base is another . . . That being said, I really do hope that Barcelona/K10/whatever the hell the official name is, will give Intel some very tight competition (at least).

I can completely understand why AMD is being tight lipped, I have suspected the reasons why for some time now, and personally, I believe it to be in their best interest to keep doing so. And yes, it may reflect badly on AMD at this point in time, but what would you prefer ? Intel learning your secrets, and thus rendering them moot, or a few 'whiners', such as ourselves, not knowing what is going on ? They are doing the right thing by them, and that is all that matters. No one, including Intel 'fanboys', wants AMD to go under, they may think so, until it really does happen, then they are locked into whatever Intel deems necessary, which is bad for everyone.

Now, if AMD could come up with something similar to vPro/AMT, or perhaps AMD/Intel could make a remote administration (BIOS, or similar level) 'Standard', I think I would be happy, for at least a little while . . .