Performance Scaling with OCZ's 8800 GTX

by Derek Wilson on February 16, 2007 11:00 AM EST. Posted in GPUs

GeForce 8800 GTX Core Clock Scaling

As we previously mentioned, in order to test core clock scaling, we fixed the memory clock at 1020 MHz and the shader clock at 1350 MHz. We then ran our tests across a range of core clock speeds, and we report performance against core clock speed.

One of the issues with games that don't make use of a timedemo that renders exactly the same thing every time is consistency. Both Oblivion and F.E.A.R. can vary in their results as the action that takes place in each benchmark is never exactly the same twice. These differences are normally minimized in our testing by using multiple runs. Unfortunately, with the detail we wanted to use to look at performance, normal variance was playing havoc with our graphs. For this, we devised a solution.

Our formula for determining average framerate at each clock speed is as follows:

FinalResult = MAX(AvgFPS.run1, AvgFPS.run2, ... , AvgFPS.run5, PreviousClockSpeedResult)

What this means is that the normal fluctuation that could cause a higher clock speed to yield a lower average FPS does not show up in our graphs, while we still maintain a good deal of accuracy. Normally, our tests have a variability of plus or minus 3 to 5 percent. Due to the number of samples we've taken and the fact that previous test results are carried forward, deviation is cut down quite a bit. In actual gameplay, there will be much more fluctuation.
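As a rough sketch of how the formula above could be applied across a sweep of core clocks, the following Python snippet carries each result forward to the next clock speed. The function name, the data layout, and the sample numbers are all assumptions made for illustration; they are not our test harness or our measured data.

```python
def monotonic_results(runs_by_clock):
    """Apply FinalResult = MAX(run averages..., previous clock speed's result).

    runs_by_clock: list of (core_clock_mhz, [AvgFPS per run]) sorted by
    ascending core clock. Returns a list of (core_clock_mhz, final_result).
    """
    results = []
    previous_result = float("-inf")  # the first clock speed has no predecessor
    for core_clock_mhz, run_averages in runs_by_clock:
        final_result = max(max(run_averages), previous_result)
        results.append((core_clock_mhz, final_result))
        previous_result = final_result
    return results


# Hypothetical numbers for illustration only; these are not measured results.
sample_runs = [
    (600, [40.1, 40.8, 40.5, 40.2, 40.6]),
    (610, [40.3, 40.7, 40.4, 40.6, 40.5]),  # best run is below the 600 MHz result
    (620, [41.9, 42.0, 41.7, 41.8, 42.1]),
]

for clock, fps in monotonic_results(sample_runs):
    print(clock, fps)
```

In this made-up data the 610 MHz entry inherits the 600 MHz result, so the curve never dips. The flip side of taking a maximum is that one unusually high run is carried forward to every later clock speed until a higher result replaces it.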

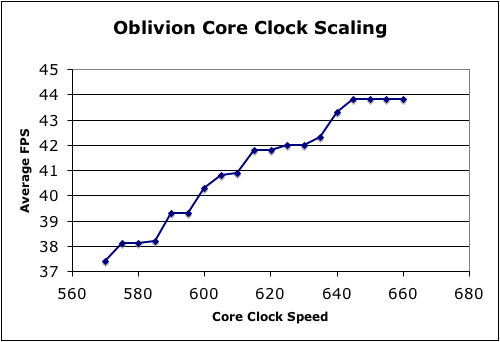

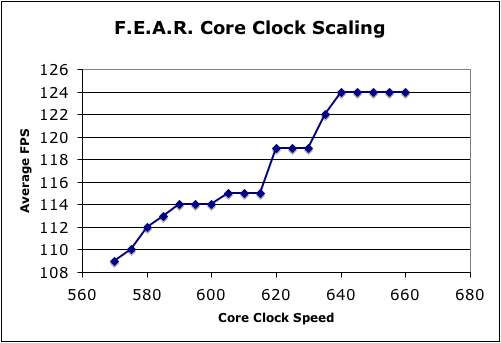

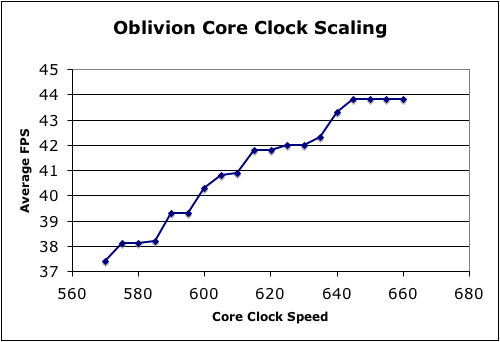

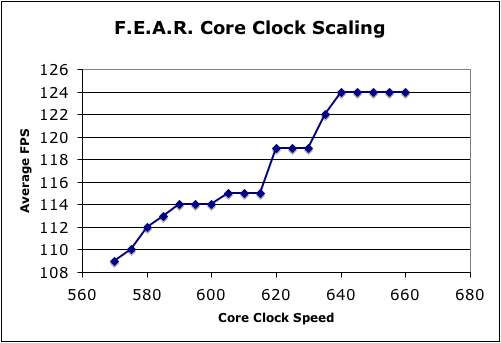

As far as settings go, Oblivion is running with maximum quality settings, the original texture pack, no anisotropic filtering and no antialiasing. We have chosen 1920x1440 in an attempt to test a highly compute-limited resolution without taxing memory as much as something like 2560x1600 would. For F.E.A.R., we are also using 1920x1440. All the quality settings are on maximum with the exception of soft shadows, which we leave disabled.

While the maximum stable core clock speed of our OCZ card is 640 MHz, we were able to squeak out a couple of benchmarks at higher frequencies if we powered down for a while between runs. It seems like aftermarket cooling could help our card maintain higher clock speeds, but we'll have to save that test for another article.

It's clear that there are some granularity issues in setting the core clock frequency of the 8800 GTX. Certainly, this doesn't seem to be nearly the problem we saw with the 7800 GTX, but it is still something to be aware of. In general, we see performance improvements similar to the percent increase of clock speed. This indicates that core clock speed has a fairly direct effect on performance.

The F.E.A.R. graph looks a little more disturbingly discrete, but it is important to note that the built-in benchmark only reports whole numbers rather than true average framerates. Again, for the most part, performance increases keep up with the percent increase in clock speed. This becomes less true at the higher end of the spectrum, which could indicate that extreme overclocking of 8800 GTX cards will have diminishing returns over 660 MHz. Unfortunately, we don't have a card that can handle running at higher speeds at the moment.
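One way to put a number on "performance increases keep up with the percent increase in clock speed" is to divide the percent gain in framerate by the percent gain in core clock. The sketch below does that in Python; the function name and the example figures are hypothetical and are not taken from our graphs.

```python
def scaling_efficiency(base_clock_mhz, base_fps, oc_clock_mhz, oc_fps):
    """Return (percent FPS gain) / (percent core clock gain).

    A value near 1.0 means performance tracks core clock closely;
    a value well below 1.0 suggests diminishing returns.
    """
    if oc_clock_mhz == base_clock_mhz:
        raise ValueError("clock speeds must differ to measure scaling")
    clock_gain = (oc_clock_mhz - base_clock_mhz) / base_clock_mhz
    fps_gain = (oc_fps - base_fps) / base_fps
    return fps_gain / clock_gain


# Hypothetical example: an 8% core clock increase paired with an 8% FPS increase.
ratio = scaling_efficiency(575, 40.0, 621, 43.2)
print(round(ratio, 3))
```

Because the F.E.A.R. benchmark only reports whole numbers, a ratio computed over a small clock step there would be dominated by rounding, so a comparison like this is more meaningful over a wide clock range.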

12 Comments

bigtoe36 - Wednesday, February 28, 2007 - link

Derek,

I think you have the overclocking results a little wrong in the article.

here is what I get your results to be. I removed the GTS card.

Asus 621/1026

BFG 648/972

EVGA 648/1008

Leadtek 621/1026

MSI 648/1044

OCZ 648/1026

Sparkle C 621/918

Sparkle 621/1008

The shader domain will have been the major factor here holding some cards back. Also, did you try manipulating the PCIe bus, as this will usually allow the cards to clock higher?

Overall I get the MSI card on top and the OCZ card second from your testing, which is not at all bad.

Beenthere - Saturday, February 17, 2007 - link

OCZ's PSUs and other products haven't been top shelf like their memory originally was, so I'd take any claims with a large grain of salt.

Many PC hardware companies have discovered how technically challenged most consumers are and thus have decided to profit from this. OCZ and other companies know that most hardware review sites will shill for them just to get free hardware to review. Few sites have the integrity to write an honest review lambasting a company for a crappy product. Instead the reviewer generally notes the positives and ignores the blemishes or outright malfunctions. When consumers purchase the product and find it's a piece of garbage, that's when the shit hits the fan.

DerekWilson - Monday, February 19, 2007 - link

Personally, I really like OCZ PSUs ... I use them in my personal and test systems.

There really was no reason to tear apart OCZ for their 8800 GTX. While there is nothing incredibly noteworthy about the part, there weren't any problems. While we can't say all OCZ 8800 GTX cards will overclock as well as the one we had, we were able to achieve higher clock speeds than a few others we've tested.

We will certainly tear into hardware that deserves it.

lopri - Friday, February 16, 2007 - link

I feel the pain of reviewing vendor cards. All of them essentially being reference cards, there is only so much a reviewer can explore. I'd have liked some more comments regarding warranty and customer service experience, but with the card being supplied by OCZ that would have also been somewhat pointless.

However, the good part of the review, where core clock / shader clock scaling was discussed in depth, is very informative. I've been aware of this behavior myself via RivaTuner monitoring and AT's review confirms it. For anyone who hasn't understood this before, this information should be taken very seriously if s/he intends to overclock 8800 series cards. Basically, most (if not all) GTX cards are capable of a 600+MHz core clock, and the performance jump occurs at 620~621MHz and 636~637MHz. This means an overclock of 630MHz will net you the same FPS as that of 620MHz.

The most interesting bit for me was that after the last performance jump @649MHz(?), no amount of overclocking will bring extra performance gain. I didn't know about this and thank Derek for this finding. Again, this is very interesting. I would like to know why this is the case, as well as the exact formula NV utilizes for this clock speed jump. Maybe in the next review? :)

adholmes - Friday, February 16, 2007 - link

The idea that all of these cards are reference designs got me thinking. I wonder, if the review was done with 5 different cards from the same manufacturer, would the results be the same? Are the differences we observe due to vendor implementation, memory selection, etc., or just natural manufacturing variances?

DerekWilson - Friday, February 16, 2007 - link

we'd love to get nvidia's formula for clocking their cards, but I don't think they'll give us the timing diagram :-)

And I wouldn't say that there would be *no* amount of overclocking that would bring more performance -- we just don't have a card that will go high enough ...

similar to previous cards, it looks like the gap widens as clock speed increases -- in other words, the higher you go, the more you need to increase clock speed to hit the next performance bump.

We might see something in the mid 670s ...

I think aftermarket cooling would help -- which I might try. That would certainly make it interesting -- if water or phase change cooling could get you enough clock head room to reach the next plateau.

lopri - Friday, February 16, 2007 - link

The reason why I thought there was some kind of 'ceiling' is the rumor that was touted before the G80's debut. If I remember correctly, there was something along the lines of:

- Up to 1.5GHz Shader Core Clock

Since the shader clock rises with core clock, if the shader clock's theoretical limit is 1.5GHz by design - again, this is guesswork on my part - wouldn't there be some sort of ceiling?

Anyway, I think NV should disclose this mystery regarding the whole clock domain thingy. :D

abhaxus - Friday, February 16, 2007 - link

But 640mhz was 5th out of 9 in your chart, and 1020mhz was 4th out of 9. I just hate it when statistics are misused. The numbers LOOK high but in fact were close to median results in your small sample. In fact the core clock was below average... Nitpicky I know, but maybe it's flattering for you guys to know people read your articles closely enough to notice crap like that? I hope so.

I liked the review and was very interested in the scaling of the GTX (although I am now leaning toward a 320mb GTS for my 1680x1050 LCD).

DerekWilson - Friday, February 16, 2007 - link

640 MHz is actually 4th ... you're including the XFX 8800 GTS overclocked to 654 -- which shouldn't count in a comparison of 8800 GTX cards.

1020 MHz more or less == 1021 MHz ... in fact, I'm absolutely positive that the OCZ 8800 GTX would run stable at 1021 -- I just didn't bother to bump the clock speed up that little bit. I suppose it's a slight difference in method between the way Josh and I determine clock speed. That extra MHz isn't going to do anything for anyone ... (it's cool to know that something happening an extra million times per second isn't going to have any impact on the outcome isn't it).

Anyway, I do appreciate that you guys keep up with that sort of thing -- I know I did back when I was just reading the site. :-)

If you're still interested in GTX scaling, please stay tuned -- I didn't originally include Vista because NVIDIA's driver didn't properly support overclocking, but with the BIOS editor I can just change the clock speeds at a low level. Combine that with the fact that NVIDIA just released a DX10 demo that uses all kinds of neat shader centered features and I'm really excited about doing some clock scaling with DX10 code.

http://www.nzone.com/object/nzone_cascades_home.ht...

Wish I'd had this before I finished testing for this article, but I think I can definitely find some other things to talk about with DX10, Vista, and overclocking.

abhaxus - Saturday, February 17, 2007 - link

putting it that way... yes, it is actually very cool. At least cool in the way we nerds think about things.

When you do that scaling with DX10 I would really really really love you guys if you ran some stuff on the GTS 320mb to see if there's a big disadvantage with the lower memory with dx10 shaders. As I said, I have a 22" WS LCD and so far it seems like the 320mb version is more than enough for what I do in current games. It would be nice to know if it does well in the future also.