AnandTech Exclusive: CrossFire Benchmarks Revealed

by Derek Wilson on July 22, 2005 6:00 AM EST - Posted in GPUs

The System

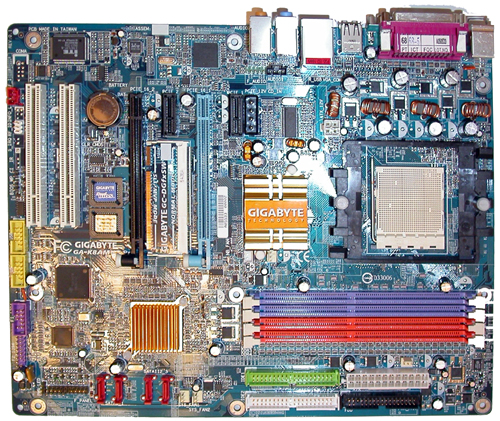

The GA-K8AMVP Pro is based on ATI's latest RD480 chipset paired with a ULi south bridge and features CrossFire support. We'll be taking a closer look at this board as well, as Gigabyte tells us that it can even support 2x 3D1 cards. The board looks very similar to Gigabyte's NF4 SLI boards, including the selector paddle for configuring the PCI Express ports. Flipped to single GPU mode, the paddle gives the first PCI Express slot 16 lanes; flipped to multi GPU mode, it runs each slot at x8.
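To put the paddle's two settings in perspective, here is a rough sketch of the per-slot bandwidth each mode implies. It assumes first-generation PCI Express signalling at roughly 250 MB/s per lane in each direction, a figure that does not come from our testing; the `PADDLE_MODES` table and `bandwidth_by_slot` helper are hypothetical names used only for illustration.

```python
# Assumption: PCI Express 1.x, roughly 250 MB/s per lane per direction.
MB_PER_LANE_PER_DIRECTION = 250

# Lane allocation per paddle position, as described in the text.
# The second slot's width in single GPU mode is not stated, so it is omitted.
PADDLE_MODES = {
    "single": {"slot1": 16},
    "multi": {"slot1": 8, "slot2": 8},
}


def bandwidth_by_slot(mode):
    """Return approximate one-way bandwidth in MB/s for each slot in a mode."""
    lanes_by_slot = PADDLE_MODES[mode]
    return {
        slot: lanes * MB_PER_LANE_PER_DIRECTION
        for slot, lanes in lanes_by_slot.items()
    }


if __name__ == "__main__":
    for mode in PADDLE_MODES:
        print(mode, bandwidth_by_slot(mode))
```

Under that assumption, each card in multi GPU mode gets about half the link bandwidth that a lone card sees in the x16 slot. Whether that halving matters in practice depends on the game and is not something this arithmetic can show.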

The board itself is stable and runs well. We had no problems with the platform while running our tests, and performance seems to be very solid. On this board, the master card had to be plugged into the slot closest to the CPU.

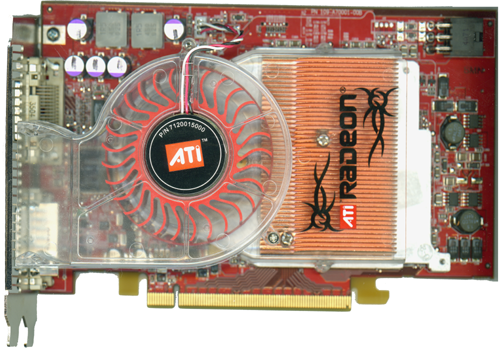

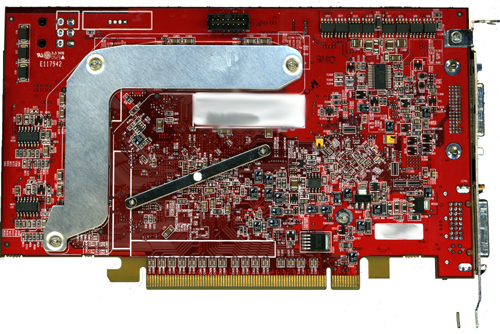

Our CrossFire master card looked very similar to a regular X850 XT. The port furthest from the motherboard connects to the dongle, which plugs into the monitor as well as the port closest to the motherboard on the slave card.

The card really does look a lot like a normal X850 XT, but we can see that the solder points for the Rage Theater chip are missing and there are quite a few components on the board in its place. All this circuitry (along with a couple of surface-mount LEDs) is likely part of the hardware needed to combine the output of both cards for final display. NVIDIA's parts don't need quite as many additional board components, as the GPU has die space committed to multi-chip rendering and all the work is done on the GPU and in the frame buffers.

That's not to say that ATI's solution is less adequate. Since the DVI port is inherently digital, the external dongle does nothing to lower image quality like the old analog dongle that 3dfx used to employ for SLI.

Before we get to the benchmarks, we will note again that with the early hardware and drivers, we had some trouble with some of the games and settings that we wanted to run. We tested most of the games that we ran in our recent 7800 launch article, but we ended up seeing numbers that didn't make sense. We don't have any reason to think that these problems will remain when the product launches, but it does shorten the list of games that we felt gave a good indication of CrossFire's performance.

For our tests, we used numbers from our original 7800 GTX review. The system (except for the motherboard) is the same as the setup used in the 7800 review (FX-55, 1GB 2:2:2 DDR400, 600W PSU, 120GB HD).

And what are our results? Take a look.

65 Comments


Hasse - Wednesday, July 27, 2005 - link

Sigh.. Read comment to first post..

DerekWilson - Thursday, July 28, 2005 - link

We're all still getting used to the new design :-)

To actually reply -- the CrossFire card can work alone. You don't need another card. I know this because it was running by itself while I was trying various methods to coax it into submission :-) ... Also, even if Gigabyte's hardware is close to complete, ATI is in charge of the driver for the mobo and the graphics card, as well as getting a solid video BIOS out to their customers. These things are among the last in the pipe to be fully optimized.

I don't think I could give up the F520 even for desk space. I'd rather type on an LCD panel any day, but if I am gonna play or test games, there's just no substitute for a huge CRT.

DanaGoyette - Monday, July 25, 2005 - link

Want to know why 1280x1024 always looks wrong on CRTs? It's because IT IS wrong! 800x600, 1024x768, 1600x1200 -- all are 4:3 aspect ratio, but 1280x1024? That's 5:4! To get 4:3, you need to use 1280x960! That should be benchmarked too, if possible.
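The aspect ratio arithmetic here is easy to check by reducing each resolution by its greatest common divisor. The following is a minimal sketch; `aspect_ratio` is a hypothetical helper name, and the resolution list is just the set mentioned in this comment.

```python
from math import gcd


def aspect_ratio(width, height):
    """Reduce a resolution to its simplest width:height ratio."""
    divisor = gcd(width, height)
    return width // divisor, height // divisor


if __name__ == "__main__":
    resolutions = [(800, 600), (1024, 768), (1280, 960), (1280, 1024), (1600, 1200)]
    for w, h in resolutions:
        ratio_w, ratio_h = aspect_ratio(w, h)
        print(f"{w}x{h} -> {ratio_w}:{ratio_h}")
```

Every resolution in the list reduces to 4:3 except 1280x1024, which reduces to 5:4.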

Whoever invented that resolution should be forced to watch a CRT flickering at 30 Hz interlaced for the rest of his life! Or at least create more standard resolutions with the SAME aspect ratio!

I could rant on this endlessly....

DerekWilson - Tuesday, July 26, 2005 - link

There are a great many LCD panels that use 1280x1024 -- both for desktop systems and on notebooks. The resolution people play games on should match the aspect ratio of the display device. At the same time, there is no point in testing both 1280x960 and 1280x1024 because their performance would be very similar. We test 1280x1024 because it is the larger of the two resolutions (and by our calculations, the more popular).

Derek Wilson
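The "larger of the two" point in the reply above can be made concrete by comparing pixel counts. This is only a rough sketch: it assumes pixel count is a reasonable first-order proxy for rendering load, which will not hold for every game or setting, and `pixel_count` is a hypothetical helper name.

```python
def pixel_count(width, height):
    """Total number of pixels rendered per frame at a given resolution."""
    return width * height


if __name__ == "__main__":
    larger = pixel_count(1280, 1024)
    smaller = pixel_count(1280, 960)
    # Fraction of additional pixels at 1280x1024 relative to 1280x960.
    extra = larger / smaller - 1
    print(f"1280x1024: {larger} pixels")
    print(f"1280x960:  {smaller} pixels")
    print(f"extra pixels at 1280x1024: {extra:.1%}")
```

The gap works out to one fifteenth more pixels at 1280x1024, which is consistent with the expectation that the two resolutions would perform very similarly.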

Hasse - Wednesday, July 27, 2005 - link

True! I'd have to agree with Derek. Most people that play on 1280 use 1280x1024. But it is also true that 960 is the true 4:3... While I don't know why there are 2 versions of 1280 (probably something related to video or TV), I also know that the performance difference is almost zero, try for yourself. Running Counterstrike showed no difference on my computer.

-Hasse

giz02 - Saturday, July 23, 2005 - link

Nothing wrong with 16x12 and SLI'd 7800's. If your monitor can't go beyond that, then surely it would welcome the 8 and 16xAA modes that come for free, besides

vision33r - Saturday, July 23, 2005 - link

For the price of $1000+ to do Crossfire, you could've just bought the 7800GTX and you're done.

coldpower27 - Saturday, July 23, 2005 - link

Well, multi GPU technology to me makes sense simply when you can't get the performance you need out of a single card. I would rather have the 7800 GTX than the dual 6800 Ultra setup, for reduced power consumption. You gotta hand it to NVIDIA for being able to market their SLI setup to the 6600 GT, and soon to be 6600 LE line.

karlreading - Saturday, July 23, 2005 - link

I have to say I'm running an SLI setup. I went from an AGP based (nf3, 754 a64 3200+ hammer) 6800GT system to the current SLI setup (nf4 SLI/s939 3200+ winchester) with dual 6600GTs.

To be honest, SLI ain't all that. I dislike the fact that to use my dual desktop (I have 2*20inch CRTs) I have to turn SLI off in the drivers, which involves a reboot, so that's a hassle. Yes, the dual GTs beat even a 6800 Ultra in non AA settings in Doom 3, but they get creamed with AA on. Even the 6600GTs I have are feeling CPU strangulation, so you need a decent CPU to feed them. And not all games are SLI ready. To be honest, apart from the nice view in the case of two cards in there (looks trick), I would rather have a decent single card setup than an SLI setup.

CrossFire is looking strong though! ATI needed it and now they have it, the playing field is much more level! karlos!

AnnoyedGrunt - Saturday, July 23, 2005 - link

Also, as far as XFire competing with SLI, it seems to offer nothing really different from SLI, and in that sense only competes by being essentially the same thing but for ATI cards. However, NV is clearly concerned about it (as evidenced by their AA improvements, the reduction on the SLI chipset prices, and the improvements in default game support). So, ATI's entry into the dual card arena has really improved the features for all users, which is why this heated competition between the companies is so good for consumers. Hopefully ATI will get their XFire issues resolved quickly, although I agree with the sentiment that the R520 is far more important than XFire (at least in the graphics arena - in the chipset arena ATI probably can't compete without XFire).

-D'oh!