AMD Teases FSR 2.0: Temporal Upscaling Tech for Games Coming in Q2

by Ryan Smith on March 17, 2022 9:01 AM EST

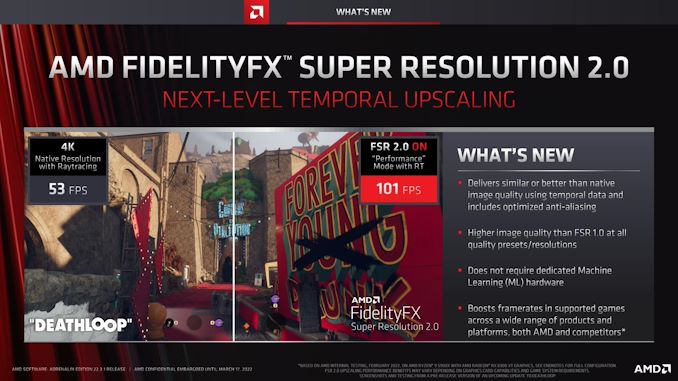

Alongside their spring driver update, AMD this morning is also unveiling the first nugget of information about the next generation of their FidelityFX Super Resolution (FSR) technology. Dubbed FSR 2.0, the next generation of AMD’s upscaling technology will be taking the logical leap into adding temporal data, giving FSR more data to work with, and thus improving its ability to generate details. And, while AMD is being coy with details for today’s early teaser, at a high level this technology should put AMD much closer to competing with NVIDIA’s temporal-based DLSS 2.0 upscaling technology, as well as Intel’s forthcoming XeSS upscaling tech.

AMD’s current version of FSR, which is now being referred to as FSR 1.0, was released last summer by the company. Implemented as a compute shader, FSR 1.0 was a (relatively) simple spatial upscaler, which could only use data from the current frame for generating a higher resolution frame. Spatial upscaling’s simplicity is great for compatibility (see: today’s RSR announcement), but it’s limited by the data it has access to, which leaves room for more advanced multi-frame techniques to generate more detailed images. For that reason, AMD has been very careful with their image quality claims for FSR 1.0, treating it more like a supplement to other upscaling methods than a rival to NVIDIA’s class-leading DLSS 2.0.

However, it’s been clear from the start that if AMD wanted to truly go toe-to-toe with NVIDIA’s upscaling technology, they’d need to develop their own temporal-based upscaling tech, and that’s exactly what AMD is doing. Set to launch in Q2 (Computex, anyone?), AMD is developing and will be releasing a new generation of FSR that incorporates both spatial and temporal data for more detailed images.

Given that FSR 2.0 is an AMD technology, the company isn’t really beating around the bush here as to why they’re doing it: using temporal data allows for higher quality images. And while it goes beyond the scope of today’s teaser from AMD, like DLSS (and XeSS), they are clearly going to be relying on motion vectors as the heart of their temporal data. This means that, like DLSS/XeSS, developers will need to build FSR 2.0 into their engines in order to provide FSR with the necessary motion vector data. That is a notable trade-off from the free-wheeling FSR 1.0, but it is nonetheless a good trade-off to make if it can produce better upscaled images.
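To make the role of motion vectors a bit more concrete, here is a minimal sketch of generic temporal accumulation, the basic idea behind temporal AA and temporal upscalers in general. It is not AMD’s algorithm, whose details have not been disclosed. The function names, the integer-pixel motion vectors, and the fixed blend weight `alpha` are all assumptions made for illustration; real implementations work with sub-pixel motion, jittered samples, and history rejection.

```python
# Illustrative sketch only: generic temporal accumulation, NOT FSR 2.0.
# Assumptions: single-channel frames stored as lists of rows, integer-pixel
# motion vectors, and a fixed blend weight (all hypothetical simplifications).

def reproject(history, motion, x, y):
    """Fetch last frame's value for the surface now visible at pixel (x, y)."""
    dx, dy = motion[y][x]        # offset from this pixel to its previous position
    px, py = x + dx, y + dy
    height, width = len(history), len(history[0])
    if 0 <= px < width and 0 <= py < height:
        return history[py][px]
    return None                  # the pixel was off-screen last frame


def temporal_accumulate(current, history, motion, alpha=0.1):
    """Blend the current frame with motion-reprojected history."""
    output = []
    for y, row in enumerate(current):
        out_row = []
        for x, sample in enumerate(row):
            previous = reproject(history, motion, x, y)
            if previous is None:
                out_row.append(sample)   # no usable history: keep the new sample
            else:
                out_row.append(alpha * sample + (1 - alpha) * previous)
        output.append(out_row)
    return output
```

The per-pixel `motion` input is the part an engine has to supply, which is why a temporal upscaler needs to be integrated by the game’s developers rather than applied blindly to a finished frame.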

And although FSR 2.0 won’t launch for a few months, AMD is already taking some efforts to underscore how it will be different from DLSS 2.0. In particular, AMD’s technique does not require machine learning hardware (e.g., tensor/matrix cores) on the rendering GPU. That is especially important for AMD, since they don’t have that kind of hardware on RDNA 2 GPUs. As a result, conceptually, FSR 2.0 can be used on a much wider array of hardware, including older AMD GPUs and rival GPUs, a sharp departure from DLSS 2.0’s NVIDIA-only compatibility.

Though even if AMD doesn’t require dedicated ML hardware in client GPUs, this doesn’t mean they aren’t using ML as part of the upscaling process. To be sure, as temporal AA/upscaling has been the subject of research in games for over a decade now, there are multiple temporal-style methods that don’t rely on ML. At the same time, however, the image quality benefits of using a neural network have proven to be significant, which is why both DLSS and XeSS incorporate neural networks. So at this point I would be more surprised if AMD didn’t use one.

If AMD is using a neural network, then at a high level this sounds quite similar to Intel’s universal version of XeSS, which runs inference on a neural net as a pixel shader, making heavy use of the DP4a instruction to get the necessary performance. These days DP4a support is found in the past few generations of discrete GPUs, making its presence near-ubiquitous. And while DP4a doesn’t offer the kind of performance that dedicated ML hardware does – or the same range of precisions, for that matter – it’s a faster way to do math that’s still good enough to enable temporal upscaling and improve on FSR 1.0’s image quality.
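For readers unfamiliar with DP4a, the operation itself is simple: it takes two groups of four 8-bit integers, multiplies them pairwise, and adds the four products to a 32-bit accumulator in a single instruction. The sketch below models that arithmetic in plain Python for the signed variant. The function name and the wraparound-on-overflow behavior are assumptions for illustration, as exact overflow and signedness rules vary by API and hardware.

```python
# Illustrative model of a signed DP4a operation (hypothetical helper, not a GPU API).
# Assumption: the 32-bit accumulator wraps around on overflow.

def dp4a(a, b, acc):
    """Return acc + dot(a, b) for two 4-element vectors of signed 8-bit ints."""
    if len(a) != 4 or len(b) != 4:
        raise ValueError("DP4a operates on exactly four elements per operand")
    for value in (*a, *b):
        if not -128 <= value <= 127:
            raise ValueError("operands must fit in a signed 8-bit integer")
    total = acc + sum(x * y for x, y in zip(a, b))
    total &= 0xFFFFFFFF                      # keep the low 32 bits
    return total - (1 << 32) if total >= (1 << 31) else total


print(dp4a([1, 2, 3, 4], [5, 6, 7, 8], 10))  # 1*5 + 2*6 + 3*7 + 4*8 + 10 = 80
```

Neural network inference with 8-bit quantized weights is dominated by exactly this kind of multiply-accumulate, which is why a single-instruction version helps even on GPUs without dedicated matrix hardware.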

Update: According to a report from Computerbase.de, AMD has confirmed to the news site that they are not using any kind of neural network. So it seems AMD is indeed going with a purely non-ML (and DP4a-free) temporal upscaling implementation. Color me surprised.

As for licensing, AMD is also confirming today that FSR 2.0 will be released as an open source project on their GPUOpen portal, similar to how FSR 1.0 was released last year. So developers will have full access to the source code for the image upscaling technology, to implement and modify as they see fit.

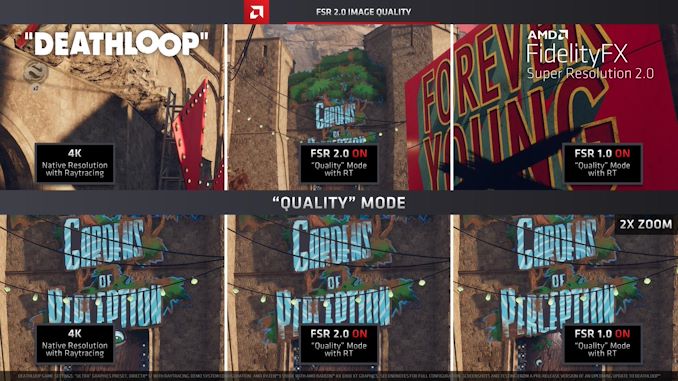

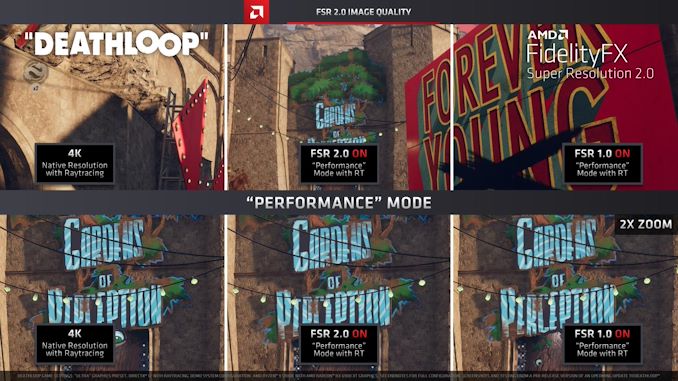

Finally, as part of AMD’s teaser the company has released a set of PNG screenshots of Deathloop rendered with both FSR 1.0 and an early version of FSR 2.0. Though early screenshots should always be taken with a grain of salt – they’ve been cherry-picked for a reason – the difference between FSR 1.0 and FSR 2.0 in performance mode is easy enough to pick up on.

Meanwhile the difference versus native is less clear (which is the idea), though it should be noted that even native 4K is already running temporal AA here.

Native (4K) vs. FSR 2.0

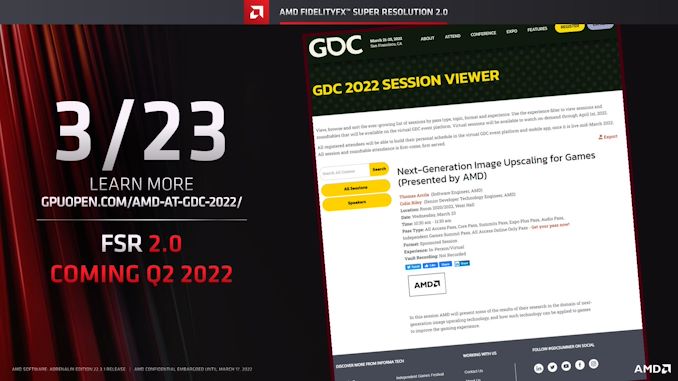

Ultimately, today’s announcement is a teaser for more information to come. At next week’s GDC, AMD will be hosting a session on the 23rd called “Next-Generation Image Upscaling for Games”, where AMD will be presenting their research into image upscaling in a developer-oriented context. According to AMD we should expect a little more technical information from that session, while the full details of the technology will be something AMD holds on to until closer to its launch.

If all goes according to plan, FSR 2.0 will launch next quarter.

46 Comments

Makaveli - Thursday, March 17, 2022 - link

installing these drivers now.

linuxgeex - Friday, March 18, 2022 - link

If you have the capability, I'd appreciate if you could report what the input lag difference is between Native and FSR 2.0. I spotted a 1 frame lag for FSR 2.0 in the youtube video. That may just be an editing error. It would be good to know.

cigar3tte - Thursday, March 17, 2022 - link

What lineup of cards will support FSR 2.0? And does it require support from games as well?

NextGen_Gamer - Thursday, March 17, 2022 - link

It explicitly requires support from each individual game, as the article says. For cards, since AMD is not relying on ML hardware (which none of their cards support), a good guess would be full compatibility with RDNA 1 & 2, and most likely Vega as well.

Makaveli - Thursday, March 17, 2022 - link

Also possible this is RDNA 1,2,3 only since Vega is going to be 5 years old this august. If anyone is still on Vega or Polaris with prices finally starting to drop and RDNA 3 coming out this year will will also force prices down its time to upgrade.

vlad42 - Thursday, March 17, 2022 - link

But you are ignoring the fact that Vega was used as the iGPU in all Zen APUs up until Rembrandt/Van Gogh. These are prime candidates for an FSR 2.0 performance uplift, especially in laptops where you cannot simply add a GPU.

Oxford Guy - Thursday, March 17, 2022 - link

OMG. Five whole years? Someone call Methuselah for a reaction vid.

mode_13h - Monday, March 28, 2022 - link

5 years *would* be a long time for GPUs, if not for the sorry state of the GPU market for much of that time.

emn13 - Thursday, March 17, 2022 - link

It's conceivable they add vega support not because vega is particularly relevant, but because they used vega-based tech in a bunch of still far more recent APU's and this _is_ the kind of tech an APU could benefit from. But who knows; they might not.

Spunjji - Monday, March 21, 2022 - link

They've got RDNA 2 APUs coming up for sale, though. They might decide to go for the upgrade incentive over backwards compatibility.

I hope they don't do things that way, but I can't use RSR on an RDNA notebook dGPU because of the Vega iGPU, so they do seem to be moving in that direction.