Arm Announces Neoverse V1, N2 Platforms & CPUs, CMN-700 Mesh: More Performance, More Cores, More Flexibility

by Andrei Frumusanu on April 27, 2021 9:00 AM EST- Posted in

- CPUs

- Arm

- Servers

- Infrastructure

- Neoverse N1

- Neoverse V1

- Neoverse N2

- CMN-700

The Neoverse V1 Microarchitecture: X1 with SVE?

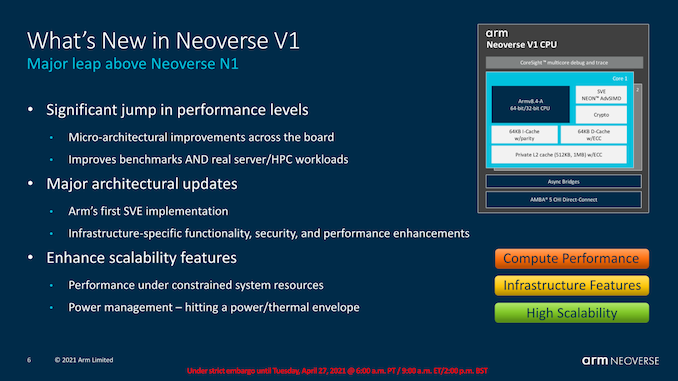

Starting off with the new Neoverse V1, the design is both of a familiar origin, but also has a few distinct features that we see for the first time ever in an Arm CPU. As noted in the introduction, the V1 was designed at the same time as the Cortex-X1 by the same team at Arm’s Austin design centre, with large similarities between the two microarchitectures when it comes to the block structures.

What’s notable about the V1, in comparison to the X1 and of course the predecessor N1, is the fact that this is now an SVE capable processor, with two native 256b SIMD pipelines, and also introducing server-only features such as coherent L1I caches, bFloat16 execution capabilities, and a slew of distinct characteristics we’ll cover in just a bit.

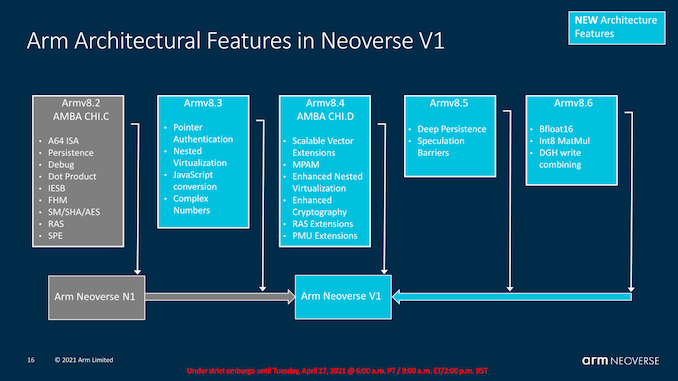

The architectural features of the Neoverse V1 are probably the most complicated in terms of describing – essentially, it’s a v8.4 baseline architecture which also pulls v8.5 and v8.6 features in for the HPC oriented workloads the design is aimed for. Given that we talked about Armv9 only a month ago, this may seem a bit odd, but again we have to remember that the V1 has been designed some time ago and that customers have had the IP for quite a while now, taping in or having already taped out V1 processors.

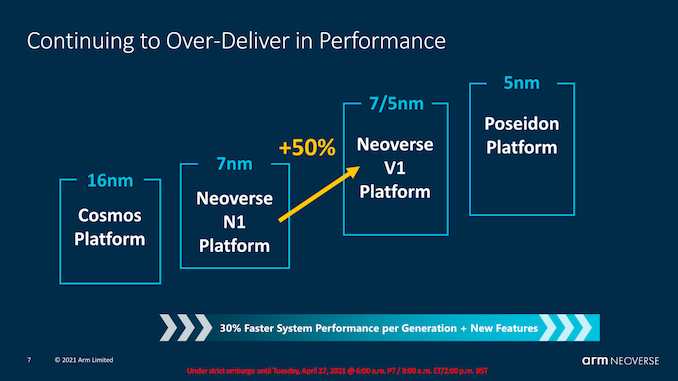

The big promise of the V1 is its extremely large performance jump over the N1, coming in at an IPC increase of +50%. This sounds large, and it is, but it’s also not all that surprising given that the microarchitecture essentially is 2 microarchitecture design generations newer than the N1, even through from a infrastructure product standpoint it’s only one generation newer.

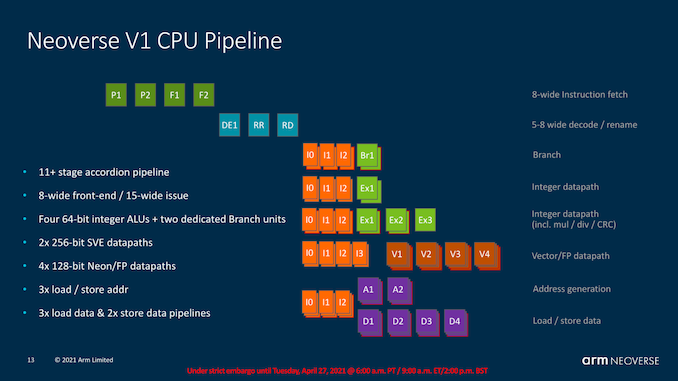

From a high-level pipeline standpoint and microarchitecture view, the Neoverse V1 is very similar to the X1. It’s still an extremely short pipeline design that has a minimum of 11 stages, with Arm putting a lot of focus on this aspect of their microarchitectures to reduce branch misprediction penalties as much as possible. This aspect of the microarchitecture has remained relatively static over the last few iterations of the Austin family of designs starting with the A76, so Arm notes that the frequency capabilities of the V1 is essentially unchanged when compared to the N1, with performance boosts coming solely from increased IPC.

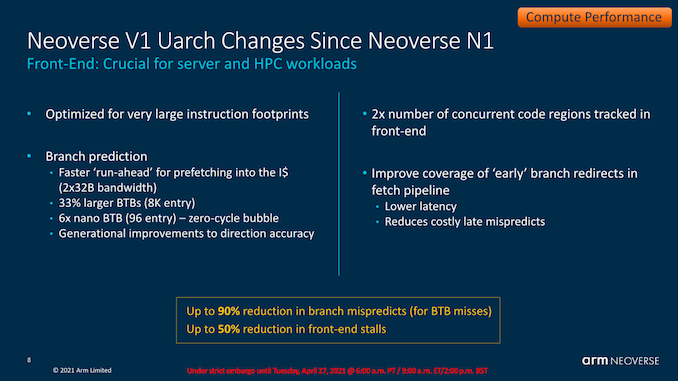

The V1 sees a lot of the front-end improvements we’ve seen with the Cortex-A77 and Cortex-X1 generations, which saw larger front-end branch improvements such as a doubled up bandwidth for the decoupled fetch unit, much larger L2 BTB to up to 8K entries, and a rearranging and resizing of the lower level BTBs, with the L0 (nanoBTB) growing to 96 entries, and the L1 BTB (microBTB) no longer being present when compared to the Neoverse N1.

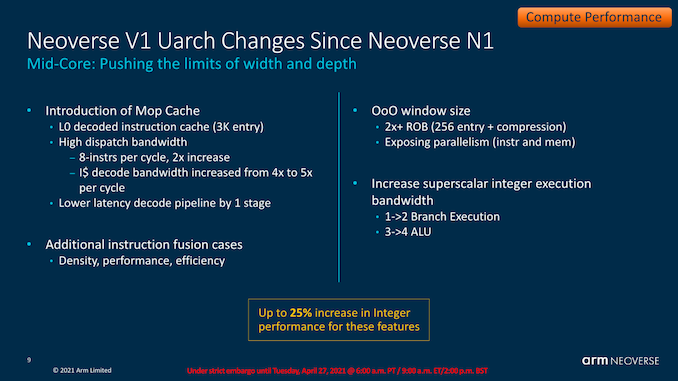

The V1 one when compared to the N1 also adds in new structures that hadn’t been present in the design, such as the introduction of a macro-Op cache of up to 3K decoded instructions. The dispatch bandwidth from the Mop cache is 8-wide, while the actual instruction decoder this generation is 5-wide, much the same as on the X1.

The out-of-order windows size is essentially doubled when compared to the Neoverse N1, with the ROB growing to 256 entries. This is actually a tad larger than what Arm was willing to disclose for the Cortex-X1 where the company had only talked about a “OoO window size of 224”, so in this regard this seems to be a differentiation to what we’ve seen in the X1.

On the back-end integer execution pipelines, the design also pulls in the many changes we’ve seen with the A77 generations, which amongst others include a doubling of the branch execution ports, and a new complex ALU capable of simple instructions such as additions as well as more complex operations such as multiplications and divisions.

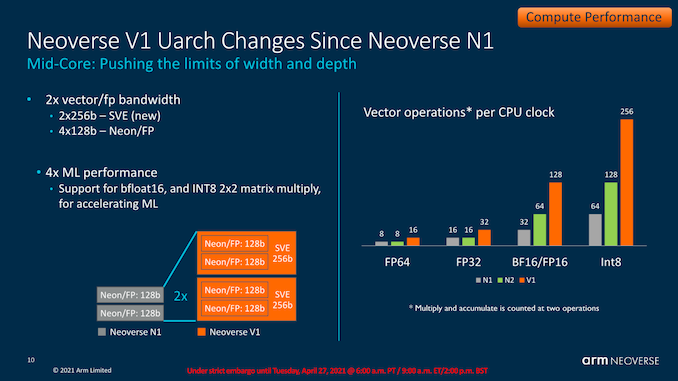

Obviously enough, the new SIMD pipelines are very different on the V1 given that this is Arm’s first ever SVE capable microarchitecture. The design has two pipelines with seemingly two dedicated schedulers, with native capability for 256b wide SVE vectors. The design is fully backwards compatible for 128b NEON/FP operations in which the pipelines then essentially act as 4x128b units, meaning it has the same execution width as the X1 in that regard.

Compared to the N1, the new design also supports new bFloat16 and Int8 data formats which greatly increase the AI and ML inferencing performance capabilities of the core.

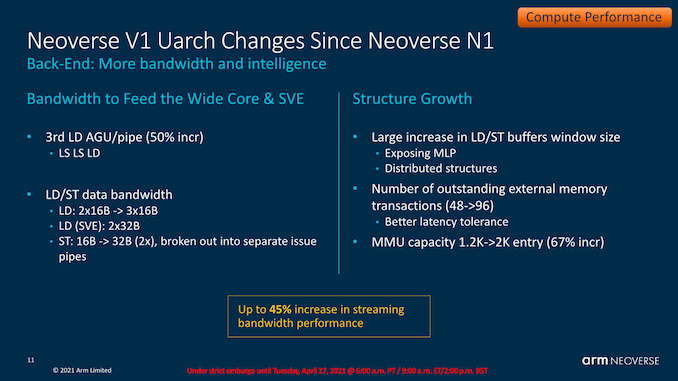

On the memory subsystem side, we also see the increased unit count found on the Cortex-X1, including 2 load/store units and one load unit, meaning the core is capable of up to 3 loads per cycle and 2 stores per cycle maximum. SVE vector bandwidth is 2x32B per cycle for loads, and 32B per cycle for stores.

The core naturally includes the data parallelism improvements seen on the X1 in order to increase MLP (Memory-level parallelism) capabilities.

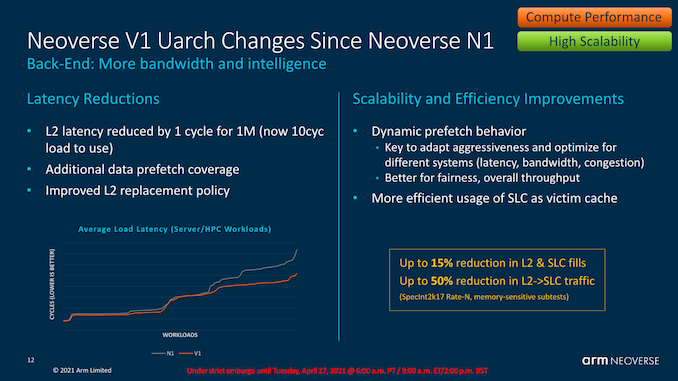

The L2 cache has also adopted a similar design to that of the X1, which is now 1 cycle faster at the same 1MB size, and has double the number of banks in order for increased access parallelism.

Arm here discloses a quite large reduction in the system level latency for the V1. Besides structural improvements, new generation prefetchers are a big part of this, such as the introduction of a new type of temporal prefetcher which is able to latch onto arbitrary access patterns over time and recognise subsequent iterations of the same pattern, and pull the data in.

Arm discloses that the core has new dynamic prefetching behaviour that plays a major role in reducing L2 to interconnect traffic, which is a critical metric in large core count systems where every byte of bandwidth needs to be of actual use and cannot be wasted for wrongly speculated prefetching.

95 Comments

View All Comments

yeeeeman - Tuesday, April 27, 2021 - link

what about a cortex a55 successor?SarahKerrigan - Tuesday, April 27, 2021 - link

I'd expect to see one next month launching alongside Matterhorn.eastcoast_pete - Tuesday, April 27, 2021 - link

Hi Sarah, can you post any links (including rumors) about that? Given ARM's focus on bigger, high performance-oriented designs, the LITTLE cores haven't gotten a lot of love in recent years. The persistence of the in-order designs for ARM LITTLE cores is one of the reasons why I find the dominance of ARM troubling; that clearly stood still because there is nowhere else to turn to for many, i.e. they didn't have to change it. In x86, at least we have two larger players having their own, yet compatible designs.SarahKerrigan - Tuesday, April 27, 2021 - link

I've seen it reported in a few places, including on RWT which is a pain to search - but since task migration generally requires compatible instruction sets between big and little cores, it's pretty clear that Matterhorn will bring a small, low-power friend when it arrives.Raqia - Tuesday, April 27, 2021 - link

I wonder if they could simply repurpose either a refresh of the A73 or A75 as the little core. Surely with the new fabrication processes available, die area relative to a big Matterhorn core should be comparable to A55 vs A78/X1, but the question becomes performance / energy. Integer performance of A75/73 vs. Ice Storm is comparable with the former winning by a bit in FP, but efficiency is light years apart:https://images.anandtech.com/doci/13614/SPEC-perf-...

https://images.anandtech.com/doci/16192/spec2006_A...

SarahKerrigan - Tuesday, April 27, 2021 - link

I think use of a refreshed A65 without multithreading and with the new ops seems more plausible to me.Raqia - Tuesday, April 27, 2021 - link

That could make sense; there's fairly little information on the micro-architecture of the A65 or A65AE at present except that it does do OoOE, and it's unclear what clocks and efficiency it can achieve as well:https://developer.arm.com/documentation/100439/010...

It does sport a bigger maximum L2 configuration than the A55. They do need to up their game here as the A55 makes a pretty poor showing for efficiency compared to Apple's small core (which got even worse in the A14 generation):

https://images.anandtech.com/doci/14072/SPEC2006ef...

At least wattage and hence current draw is low.

SarahKerrigan - Tuesday, April 27, 2021 - link

A65 is E1, which has had a uarch dive on this site.Raqia - Wednesday, April 28, 2021 - link

Got it, thanks for that! The A65 is interesting, without SMT they are quoting a pretty modest bump in integer performance < 20% at a bit more than half the power of A55 at 7nm:https://images.anandtech.com/doci/13959/07_Infra%2...

https://images.anandtech.com/doci/13959/07_Infra%2...

They could probably tune this to be better without SMT, but are you against having SMT for security reasons?

It's still not close to Apple's small cores in performance, but efficiency might be in the same ballpark now. ARM designs are quite good in terms of PPA but even their performance oriented X1 is likely only 70% the die area as a Firestorm core, and their cache hierarchies are more complex as core designs pull double duty for servers parts too.

It probably made sense to have fewer transistors per CPU core as quite a few Android SoC vendors integrated modems on die, but this may change once Qualcomm digests its Nuvia purchase and move to a smaller node. All parties may hit a wall for per core improvements as slowing SRAM density improvements at new nodes bottleneck what gains are gotten from logic density improvements.

Kangal - Thursday, April 29, 2021 - link

TL;DR - ARM needs to focus on a new product stack. It needs to have a diverse ARMv9 lineup of small, medium, large chipset options. With the small chipset being very scalable down to Tiny IoT Sensor level. Whereas the large chipset being scalable up to large supercomputers and servers. Whilst the medium chipset focusing on phones and tablets. As this covers full SoC, it includes both CPUs and GPUs.Long version:

I know making these architectures is a huge challenge, but ARM has been a little lazy in some scenarios. I know they're basically following the money in the industry, and that means chasing the "phablet" market for CPUs and GPUs. But they've been leaving themselves vulnerable to gaps, in either smaller power or larger power systems, that can be exploited by competitors, such as RISC-V. If not, even x86 might poke some wins here and there.

Ages ago, like 2013, they had the A7 (tiny), A15 (small), and A57 (medium) core designs. Basically covering most bases. Along with the Mali-400 iGPU, and 1GB-2GB Shared-RAM, to do some compute tasks. To say ARM was innovative would be a disservice to the technology they brought forward. That's in contrast to x86 Intel's Atom (small) and Intel's Core-i7Y/M (large), as well as Intel Iris Pro iGPU with 8GB Shared-RAM in systems of the time. Then ARM made the leap into 64bit processing around 2016. The lineup evolved into the A35 (tiny), A53 (small), A73 (medium) core designs, running with 1GB-2GB-4GB sRAM, and used modest G31 (tiny) to G51 (small) to G71 (medium) iGPU options. Again, this lineup was very innovative and impressive. Contrast that to the new x86 competition in AMD's 16nm Vega Large-iGPU, and Zen1 Large-CPU.

However... There hasn't been any upgrades for the "tiny" portfolio, being stuck to the offerings of Cortex A35 CPU and G31 GPU ever since. There has been only a slight refresh to the "small" portfolio, upgrading to the Cortex A55 CPU, and the G52 and G57 iGPUs. To the point that they're a joke, and easily surpassable by the competitors. ARM really needs a revolutionary new design here, it needs to be super-efficient. Perhaps something that can scale between both tiny and small categories: with performance ranging from the A55 (or more) at the "tiny" power-level, to the A73 (or more) at the "small" power-level. Basically catching up to Apple, if unable to surpass them.

Whereas the "medium" portfolio has seen very frequent upgrades, in the CPU-side to the Cortex A75, A76, A77, and A78. In the GPU-side we've seen G72, G76, G77, G78 which have been mostly competitive, surpassing some custom implementations (Samsung/MediaTek) and losing to others (Apple/Qualcomm). Not much needs to change here to be honest. We've also seen the emergence of a new "large" category of ARM processors. Firstly popularised by custom implementations from Apple (A10 and onwards), then Samsung (Mongoose M3, and onwards). Now it's supported officially by ARM in the form of the Cortex A77+ and the Cortex A78X / X1. This has been mostly underwhelming and uncompetitive, with Apple being the only one implementing good designs. There hasn't been any new "large" category for iGPUs from ARM or competitors, with the only Large-iGPU exception actually being inside the Apple Silicon M1. ARM (without counting Apple) needs to do better here, and it looks like ARM might already be focussing here in the future with ARMv9. Again contrast this to the x86 markets offering 7nm Large-CPUs of Zen2 and Zen3, with RDNA-1 and RDNA-2 Large-GPUs.