IDF Spring 2005 - Day 3: Justin Rattner's Keynote, Predicting the Future

by Anand Lal Shimpi on March 3, 2005 3:17 PM EST - Posted in Trade Shows

The Super Resolution demo

With the proliferation of broadband comes an increase in expectations for the quality of media you find on the internet. Unfortunately, not everyone has a good digital camera, and not everyone has a good digital video camera. This is especially true as more and more cell phones ship with integrated cameras; movies shot on them usually look pretty poor when viewed at twice their original size.

At the same time, we've all seen TV shows like 24 or CSI where someone sitting at a keyboard can simply "sharpen that up" and make even the blurriest, lowest resolution image clear enough to pick out someone's face. We all usually scoff at the idea and complain about how unrealistic things like that are, but in reality, there is some truth to what's going on.

There is a set of algorithms that analyze motion on a per-pixel basis and perform statistical analysis on a per-frame basis to enhance the resolution of an image or a movie. In today's keynote, this technique was referred to as Super Resolution (no comment).
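The keynote did not describe Intel's actual algorithm, so what follows is only a minimal sketch of one classic multi-frame approach, shift-and-add, under strong simplifying assumptions: every low-resolution frame is a pure point-sampled decimation of the same scene (no blur, noise, or rotation), and each frame's sub-pixel offset is already known instead of being estimated by motion analysis. The function names `simulate_low_res` and `shift_and_add` are hypothetical. Each frame's pixels are placed onto a grid `scale` times finer, samples that land on the same fine pixel are averaged, and any fine pixel no frame covers falls back to a plain upscale of the first frame.

```python
from typing import List, Sequence, Tuple

Frame = List[List[float]]


def simulate_low_res(truth: Frame, scale: int, dy: int, dx: int) -> Frame:
    """Hypothetical camera model: point-sample a high-res image on a grid
    offset by (dy, dx) high-res pixels. No blur or noise is modelled."""
    h, w = len(truth) // scale, len(truth[0]) // scale
    return [[truth[y * scale + dy][x * scale + dx] for x in range(w)] for y in range(h)]


def shift_and_add(frames: Sequence[Frame], shifts: Sequence[Tuple[int, int]], scale: int) -> Frame:
    """Fuse low-res frames onto a grid `scale` times larger in each dimension.

    Assumes each frame's sub-pixel shift (dy, dx), in high-res pixels, is known;
    a real system would have to estimate it with motion analysis.
    """
    h, w = len(frames[0]), len(frames[0][0])
    H, W = h * scale, w * scale
    acc = [[0.0] * W for _ in range(H)]
    count = [[0] * W for _ in range(H)]

    # Drop every low-res sample onto its position in the fine grid.
    for frame, (dy, dx) in zip(frames, shifts):
        for y in range(h):
            for x in range(w):
                Y, X = y * scale + dy, x * scale + dx
                if 0 <= Y < H and 0 <= X < W:
                    acc[Y][X] += frame[y][x]
                    count[Y][X] += 1

    out: Frame = []
    for Y in range(H):
        row = []
        for X in range(W):
            if count[Y][X]:
                # Average all samples that landed on this fine pixel.
                row.append(acc[Y][X] / count[Y][X])
            else:
                # Hole: no frame sampled here, so reuse the enclosing pixel of frame 0.
                row.append(frames[0][Y // scale][X // scale])
        out.append(row)
    return out


if __name__ == "__main__":
    truth = [[float(r * 8 + c) for c in range(8)] for r in range(8)]
    shifts = [(dy, dx) for dy in range(2) for dx in range(2)]
    frames = [simulate_low_res(truth, 2, dy, dx) for dy, dx in shifts]
    print(shift_and_add(frames, shifts, 2) == truth)  # True
```

With all four 2x2 offsets present, the fused grid recovers the 8x8 original exactly; with fewer frames, the uncovered pixels are just enlarged copies, which is one reason practical methods combine many frames with motion estimation and statistical modelling.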

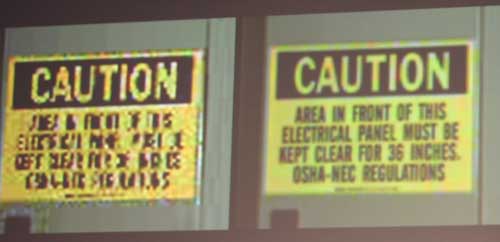

The demo used a loop of roughly 3 seconds of low-resolution video of a caution sign; you can see a capture of the original video below:

Taken on a camera phone, the video was then cleaned up using these Super Resolution techniques, producing the following:

The results were nothing short of impressive, but why the demo? It took current-generation microprocessors about 1 minute to clean up that 3-second video; doing a full-length conversion on more difficult material would require around 1000x the compute power that current platforms offer. Rattner used Super Resolution as an example of what multi-core CPUs will be able to enable by 2015.
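For scale, the timing quoted for the demo clip alone works out as below. This ratio covers only processing this one short clip in real time; the 1000x figure from the keynote refers to full-length conversion of more difficult material, and the keynote gave no breakdown of how it was derived.

```latex
\frac{t_{\text{process}}}{t_{\text{clip}}} = \frac{60\ \text{s}}{3\ \text{s}} = 20
```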

15 Comments


Verdant - Saturday, March 5, 2005

sigh - no compiler is going to magically make software work in parallel. not everything is "parallel-able" (my new word for the day)

some tasks must be serial-processed, it is the nature of computing.

my main point is that i hope we can see individual "cores" keep increasing their speed..

did he say anything about what the highlighted "photonics" box on the slide was about?

mkruer - Friday, March 4, 2005

As if Intel can predict 10 years into the future. They have trouble predicting one year in advance. I seriously doubt that Intel's massive parallelism will be the solution to all their CPU issues. Looking somewhat ahead, I see the parallelism thread dying out at around 8 pipelines, for the simple reason that most “standard” programs (not games or scientific apps) would never use more than eight. Look at RISC: most RISC architectures have 10 threads, and it's been that way for the last 10 years or more. You can only go so wide before the width becomes detrimental to the processing of the instruction.

Locut0s - Thursday, March 3, 2005

#12 Oops, should have read the above posts. Yeah, that makes more sense then.

xsilver - Thursday, March 3, 2005

the super resolution demo requires video, people; it interpolates 60-90 frames into 1 frame, like the guy above said....

and #8... I think they mean 1000x because the image used in the demo is very small... so if you wanted to use it on, say, a face, you would need WAY more computing power... e.g. the stuff on CSI is so bunk....

Locut0s - Thursday, March 3, 2005

Am I the only one who thinks that the "Super Resolution" demo shown there is just a little too good to be true?

xsilver - Thursday, March 3, 2005

"nanoscale thermal pumps" sounds like some tool you need to get botox done :)

sphinx - Thursday, March 3, 2005

All I can say is, we'll see.

DCstewieG - Thursday, March 3, 2005

60 seconds to do 3 seconds of footage. It seems to me that it would need 20x the power to do it in real time. What's this about 1000x?

clarkey01 - Thursday, March 3, 2005

Intel said in early '03 that they would be at 10 GHz (Nehalem) in 2005. So don't hold your breath on their dual-core predictions.

Phlargo - Thursday, March 3, 2005

Didn't Intel originally say that they could scale the P4 architecture to 10 GHz?