Intel’s New 224G PAM4 Transceivers

by Dr. Ian Cutress on August 21, 2020 3:00 PM EST

One battleground in the world of FPGAs is the transceiver – the ability to bring high-speed signals onto (or push them off) an FPGA at low power. In a world where FPGAs offer the ultimate ability in re-programmable logic, having multiple transceivers to bring in the bandwidth is a key part of design. This is why SmartNICs and dense server-to-server interconnect topologies often rely on FPGAs for initial deployment and adaptation, before perhaps moving to an ASIC. As a result, the key FPGA companies that play in this space often treat high-speed transceiver design and adoption as a core part of the product portfolio.

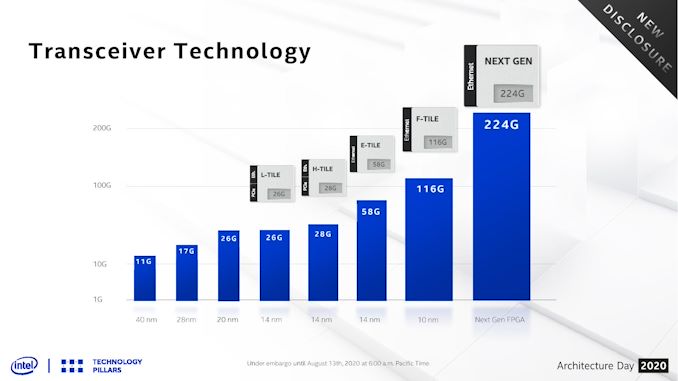

In recent memory, Xilinx and Altera (now Intel) have been going back and forth, talking about 26G transceivers, 28G transceivers, and 56G/58G transceivers, and we were given a glimpse into the 116G transceivers that Intel will implement as an option for its M-Series 10nm Agilex FPGAs back at Arch Day 2018. The Ethernet-based 116G ‘F-Tile’ is a separate chiplet module connected to the central Agilex FPGA through an Embedded Multi-Die Interconnect Bridge (EMIB), as it is built on a different process to the main FPGA.

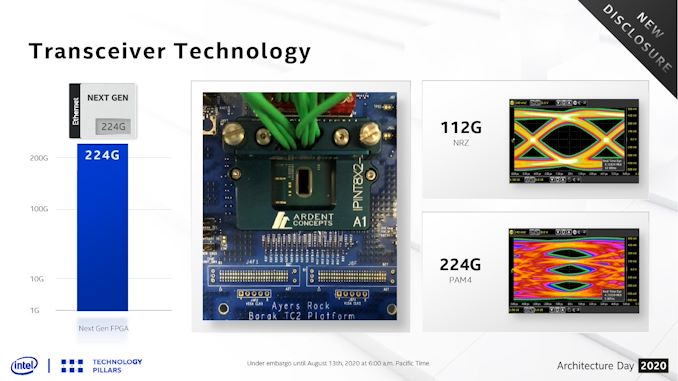

As part of Intel’s Architecture Day 2020, the company announced that it is now working on a new higher speed module, rated at 224G. This module is set to support both 224G in a PAM4 mode (four signal levels, 2 bits per symbol) and 112G in an NRZ mode (two signal levels, 1 bit per symbol). This should enable future generations of the Ethernet protocol stack, and Intel says it will be ready in late 2021/2022 and will be backwards compatible with the Agilex hardened 100/200/400 GbE stack. Intel didn’t go into any detail about bit-error rates or power at this time, but did show a couple of fancy eye diagrams.
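To make the relationship between the two modes concrete, here is a minimal Python sketch of the arithmetic. It assumes the quoted 224G and 112G figures are raw line rates in Gb/s and ignores any encoding or FEC overhead, which Intel did not detail; the function names are hypothetical and chosen only for illustration.

```python
import math


def symbol_rate_gbaud(line_rate_gbps: float, levels: int) -> float:
    """Symbol rate in GBaud for a given raw line rate and number of signal levels.

    Assumption: line_rate_gbps is the raw rate with no encoding/FEC overhead.
    """
    bits_per_symbol = math.log2(levels)  # PAM4: 4 levels -> 2 bits, NRZ: 2 levels -> 1 bit
    return line_rate_gbps / bits_per_symbol


def symbol_period_ps(baud_gbaud: float) -> float:
    """Duration of one symbol (the unit interval) in picoseconds."""
    return 1000.0 / baud_gbaud


if __name__ == "__main__":
    for name, rate, levels in [("PAM4", 224.0, 4), ("NRZ", 112.0, 2)]:
        baud = symbol_rate_gbaud(rate, levels)
        print(f"{name}: {rate:.0f} Gb/s over {levels} levels = "
              f"{baud:.0f} GBaud, {symbol_period_ps(baud):.2f} ps per symbol")
```

Under these assumptions both modes work out to the same symbol rate on the wire, with PAM4 carrying twice the data per symbol.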

Related Reading

- Intel Launches Stratix 10 TX: Leveraging EMIB with 58G Transceivers

- Hot Chips 2020 Live Blog: Intel 10nm Agilex FPGAs (8:30am PT)

- Intel’s EMIB Now Between Two High TDP Die: The New Stratix 10 GX 10M FPGA

- Xilinx Announces World Largest FPGA: Virtex Ultrascale+ VU19P with 9m Cells

- Intel Agilex: 10nm FPGAs with PCIe 5.0, DDR5, and CXL

11 Comments

JayNor - Friday, August 21, 2020 - link

Do PAM4 drivers go on the CPU chips when PCIE6 comes along?

PixyMisa - Friday, August 21, 2020 - link

Yep. GDDR6X is also PAM4, so GPUs will be getting it as well. I wonder if DDR6 will also be PAM4.

And with 224G transceivers on the way, the path is set for PCIe 8.0 and 112GB/s M.2 drives.

rpg1966 - Friday, August 21, 2020 - link

"This module is set to support both 224G in a PAM4 mode (4-bits)..."

PAM4 is 2 bits (4 levels), not 4 bits?

TimSyd - Saturday, August 22, 2020 - link

Correct - PAM4 is 2 bits per symbol.

BTW - I hate to think how much power these things guzzle.

Doing *anything* at 112G/224G on Si is an enormous challenge and throwing power at it is pretty much the only way to move signals around on chip. 1 simple nand/inverter gate delay can be 4-6ps in 10nm FinFET & here we're talking just 8ps bit times!

Much kudos to the Serdes engineers who made this happen.

back2future - Saturday, August 22, 2020 - link

seems things are getting towards extremely fast analog to digital conversion, means DDR5 to DDR6 will need higher power envelope on top bandwidth speeds?

JayNor - Saturday, August 22, 2020 - link

The Tofino 2 presentation states it has 260 lanes of 56Gb PAM4. Looks like the serdes are on a separate die than the core in their presentation diagrams.

https://www.anandtech.com/show/16003/hot-chips-202...

JayNor - Saturday, August 22, 2020 - link

This article says Intel was previously using TSMC 58G serdes chiplets with their FPGAs. I see the 112G and 224G transceivers are being built with an Intel 10nm process now. I wonder if it is the same 10nm process as used on the cpu, or if this requires separate chiplets.

https://www.eejournal.com/article/intel-fpga-hits-...

https://www.intel.com/content/www/us/en/products/p...

richmaxw - Sunday, August 23, 2020 - link

This article doesn't say what form factor the transceiver is. Is it SFP or QSFP? 200-gigabit QSFP56 transceivers are already available. But a 200-gigabit SFP transceiver would be big news.

extide - Tuesday, August 25, 2020 - link

It's just the logic -- there is no "connector" -- just pins on the die. You could put whatever connector you wanted, or perhaps it's just being used on a single board for chip to chip communication.

richmaxw - Tuesday, August 25, 2020 - link

I see. I am a networking guy. Hence the confusion. So, would it be fair to say that this is a single lane?