The Intel Comet Lake Core i9-10900K, i7-10700K, i5-10600K CPU Review: Skylake We Go Again

by Dr. Ian Cutress on May 20, 2020 9:00 AM EST

Conclusion: Less Lakes, More Coves Please

One thing that Intel has learned through successive years of reiterating the Skylake microarchitecture on the same process, but with more cores, has been optimization: the ability to squeeze as many drops out of a given manufacturing node and architecture as is physically possible, and still come out with a high-performing product when the main competitor is offering similar performance at much lower power.

Intel has pushed Comet Lake and its 14nm process to new heights, in many cases achieving top results in our benchmarks, at the expense of power. There’s something to be said for having the best gaming CPU on the market, something which Intel seems to have readily achieved here when considering gaming in isolation, though now Intel has to deal with the messaging around the power consumption, much as AMD had to in the Vishera days.

One of the common phrases that companies in this position like to use is that ‘when people use a system, they don’t care about how much power it’s using at any specific given time’. It is born of the same argument as ‘some people will pay for the best, regardless of price or power consumption’. Both of these arguments are ones that we’ve taken on board over the years, and sometimes we agree with them: if all you want is the best, then yes, these other metrics do not matter.

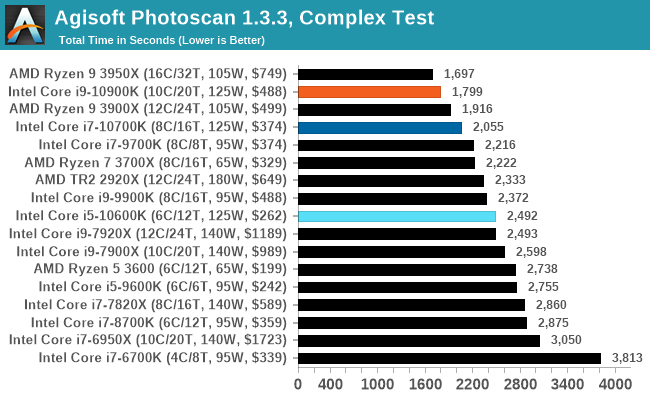

In this review we tested the Core i9-10900K with ten cores, the Core i7-10700K with eight cores, and the Core i5-10600K with six cores. On the face of it, the Core i9-10900K, with its ability to boost all ten cores to 4.9 GHz sustained (in the right motherboard) as well as offering 5.3 GHz turbo, will be a welcome recommendation to some users. It offers some of the best frame rates in our more CPU-limited gaming tests, and it competes at a similar price against an AMD processor that offers two more cores at a lower frequency. This is really going to be a ‘how many things do you do at once’ type of recommendation, with the caveat of power.

The ability of Intel to pull 254 W on a regular 10-core retail processor is impressive. After we take out the system agent and DRAM, this is around 20 W per core. Intel has often quoted its goal of scaling its Core designs from fractions of a watt to dozens of watts. The only downside now of championing something in the 250 W range is going to be that there are competitive offerings that do 24, 32, and 64 cores in this power range. Sure, those processors are more expensive, but they do a lot more work, especially for large multi-core scenarios.
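As a back-of-the-envelope check on that per-core figure, the sketch below divides the 254 W peak across ten cores, first naively and then after subtracting a non-core budget. The 54 W non-core value is an assumption, back-calculated from the ‘around 20 W per core’ figure rather than measured, and the function name is purely illustrative.

```python
def per_core_power(package_w: float, cores: int, non_core_w: float = 0.0) -> float:
    """Average power per core after subtracting non-core power (system agent, DRAM)."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return (package_w - non_core_w) / cores


PEAK_PACKAGE_W = 254.0      # peak power figure quoted in the review
CORES = 10                  # Core i9-10900K core count
ASSUMED_NON_CORE_W = 54.0   # ASSUMPTION: implied by "around 20 W per core", not measured

naive = per_core_power(PEAK_PACKAGE_W, CORES)
adjusted = per_core_power(PEAK_PACKAGE_W, CORES, ASSUMED_NON_CORE_W)

print(f"naive: {naive:.1f} W/core, adjusted: {adjusted:.1f} W/core")
```

Swapping in a different non-core estimate changes the adjusted number directly, which is why the ‘around 20 W’ figure should be read as a rounded estimate rather than a precise measurement.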

If we remember back to AMD’s Vishera days, the company launched a product with eight cores, 5.0 GHz, and a 220 W TDP. At peak power consumption, the AMD FX-9590 was nearer 270 W, and while AMD offered the caveats listed above (‘people don’t really care about the power of the system while in use’), Intel chastised the processor for being super power hungry and not high performance enough to be competitive. Fast forward to 2020 and we have the reverse situation, where Intel is pumping out the high power processor, but this time there’s a lot of performance.

The one issue that Intel won’t escape from is that all this extra power requires extra money to be put into cooling the chip. While the Core i9 processor is around the same price as the Ryzen 9 3900X, the AMD processor comes with a 125 W cooler which will do the job – Intel customers will have to go forth and source expensive cooling in order to keep this cool. Speaking with a colleague, he had issues cooling his 10900K test chip with a Corsair H115i, indicating that users should look to spending $150+ on a cooling setup. That’s going to be a critical balancing element here when it comes to recommendations.

For recommendations, Intel’s Core i9 is currently performing the best in a lot of tests, and that’s hard to ignore. But will the end-user want that extra percent of performance at the cost of spending more on cooling and more on power? Even in the $500 CPU market, that’s a hard one to ask. Then add in the fact that the Core i9 doesn’t have PCIe 4.0 support, and we get to a situation where it’s the best offering if you want Intel and you want the best processor. But AMD has almost the same ST performance, better MT performance in a lot of cases, much better power efficiency, and future PCIe support, so it comes across as the better package overall.

Throughout this piece I’ve mainly focused on the Core i9, with it being the flagship part. The Core i7 and the Core i5 are also in our benchmark results, and their results are a little mixed.

The Core i5 doesn’t actually draw too much power, but it doesn’t handle the high performance aspect in multi-threaded scenarios. At $262 it is more expensive than the $200 Ryzen 5 3600, and the trade-off here is the Ryzen’s better IPC against the Core i5’s frequency. Most of the time the Core i5-10600K wins, as it should do given that it costs roughly $60 more, but again it doesn’t have the PCIe 4.0 support that the AMD chip offers. When it comes to building that $1500 PC, this will be an interesting trade-off to consider.

For the Core i7-10700K, at 8C/16T, we really have a repeat of the Core i9-9900K from the previous generation. It offers a similar sort of performance and power, except with Turbo Boost Max 3.0 giving it a bit more frequency. There’s also the price: at $374 it is certainly a lot more attractive than it was at $488. Anand once said that there are no bad products, only bad prices, and a $114 price drop on the 8C overclockable part is highly welcome. At $374 it fits between the Ryzen 9 3900X ($432) and the Ryzen 7 3800X ($340), so we’re still going to see some trade-offs against the Ryzen 7 in performance vs power vs cost.
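To put the pricing comparisons above in one place, here is a minimal sketch that computes the absolute and percentage price gaps between the parts mentioned. The prices are the ones quoted in this conclusion, with the $488 figure attributed to the Core i9-9900K; the `premium` helper is a hypothetical name used only for illustration.

```python
from typing import Tuple

# Prices in USD as quoted in this conclusion.
PRICES_USD = {
    "Core i9-9900K": 488,
    "Core i7-10700K": 374,
    "Core i5-10600K": 262,
    "Ryzen 9 3900X": 432,
    "Ryzen 7 3800X": 340,
    "Ryzen 5 3600": 200,
}


def premium(part: str, baseline: str) -> Tuple[int, float]:
    """Return (USD difference, percent difference) of `part` relative to `baseline`."""
    diff = PRICES_USD[part] - PRICES_USD[baseline]
    return diff, 100.0 * diff / PRICES_USD[baseline]


comparisons = [
    ("Core i7-10700K", "Core i9-9900K"),   # generational price drop
    ("Core i5-10600K", "Ryzen 5 3600"),    # Core i5 premium over Ryzen 5
    ("Core i7-10700K", "Ryzen 7 3800X"),   # Core i7 vs the part below it
    ("Core i7-10700K", "Ryzen 9 3900X"),   # Core i7 vs the part above it
]

for part, baseline in comparisons:
    diff, pct = premium(part, baseline)
    print(f"{part} vs {baseline}: {diff:+d} USD ({pct:+.1f}%)")
```

A negative value means the first part is cheaper than the baseline, so the generational comparison shows up as a drop while the Core i5 comparison shows up as a premium.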

Final Thoughts

Overall, I think the market is getting a little bit of fatigue from continuous recycling of the Skylake microarchitecture on the desktop. The upside is that a lot of programs are optimized for it, and Intel has optimized the process and the platform so much that we’re getting a lot higher frequencies out of it, at the expense of power. Intel is still missing key elements in its desktop portfolio, such as PCIe 4.0 and DDR4-3200, as well as anything resembling a decent integrated graphics processor, but ultimately one could argue that the product team are at the whims of the manufacturing arm, and 10nm isn’t ready yet for the desktop prime time.

We don’t know when 10nm will be ready for the desktop. Intel is set to showcase 10nm Ice Lake for servers at Hot Chips in August, as well as 10nm Tiger Lake for notebooks. If we’re stuck on 14nm on the desktop for another generation, then we really need a new microarchitecture that scales as well. Intel might be digging itself a hole by optimizing Skylake so much, especially if the next design can’t at least match it, assuming Intel even has enough space on the manufacturing line to build it. Please Intel, bring a Cove our way soon.

In the meantime, we get a power hungry Comet Lake, but at least the best chip in the stack performs well enough to top a lot of our charts for the price point.

These processors should be available from today. As far as we understand, the overclockable parts will be coming to market first, with the rest coming to shelves depending on region and Intel's manufacturing plans.

220 Comments

Darkworld - Wednesday, May 20, 2020

10500k?

Chaitanya - Wednesday, May 20, 2020

Pointless given R5 3000 family of CPUs.

yeeeeman - Wednesday, May 20, 2020

Yeah right. Except it will beat basically all and lineup in games. Otherwise it is pointless.

yeeeeman - Wednesday, May 20, 2020

All AMD lineup*

SKiT_R31 - Wednesday, May 20, 2020

Yeah with a 2080 Ti the flagship 10 series CPU beats AMD in most titles, generally by a single digit margin. Who is pairing a mid-low end CPU with such a GPU? Also if there were to be a 10500K, you probably don't need to look much further than the 9600K in the charts above.

This may have been missed on you, but what CPU reviews like the above show is: unless you are running the most top end flagship GPU and are low resolution high fps gaming, AMD is better at every single price point. Just accept it, and move on.

Drkrieger01 - Wednesday, May 20, 2020

It also means that if you have purchased an Intel 6th gen CPU in i5 or i7, there's not much reason to upgrade unless you need more threads. And it will only be faster if you're using those said threads effectively. I'm still running an i5 6600K, granted it's running at 4.6GHz - there's no reason for me to upgrade until either Intel and/or AMD come up with better architecture and frequency combination (IPC + clock speed).

I'll likely be taking the jump back to AMD for the Ryzen 4000's after a long run since the Sandy Bridge era.

Anyone needing only 4-6 cores should wait until then as well.

Samus - Thursday, May 21, 2020

That's most people, including me. I'm still riding my Haswell 4C/8T because for my applications the only thing more cores will get me is faster unraring of my porn.

Lord of the Bored - Thursday, May 21, 2020

Hey, that's an important task!

Hxx - Wednesday, May 20, 2020

at 1440p intel still leads in gaming. It may not lead by much or may not lead by enough to warranty buying it over Intel but the person buying this chip is rocking a high end gpu and will likely upgrade to a high end gpu and the performance gap will only widen in intel's favor as the gpu becomes less of a bottleneck. So yeah pairing this with a 2060 makes no sense, go AMD. but pairing this with a 2080ti and a soon to be released 3080TI oh yeah this lineup will be a better choice.

DrKlahn - Thursday, May 21, 2020

By that logic the new games released since the Ryzen 3x000 series debut last year should show a larger gap at 1440+ between Intel and AMD. But they don't. And judging by past trends I doubt they will in the future either.

As GPUs advance so does the eye candy in the newer engines, keeping the bottleneck pretty much where it always is at higher resolutions and detail levels, the GPU.