The AMD Radeon RX 5500 XT Review, Feat. Sapphire Pulse: Navi For 1080p

by Ryan Smith on December 12, 2019 9:00 AM EST

The Test

As is usually the case for launches without reference hardware, we’ve had to dial down our Sapphire cards slightly to meet AMD’s reference specifications. In this case, Sapphire’s secondary BIOS offers reference settings, so we’re using that BIOS for our reference-spec testing. At-stock testing of the Sapphire Pulse RX 5500 XT 8GB, by contrast, is done with the primary (performance) BIOS.

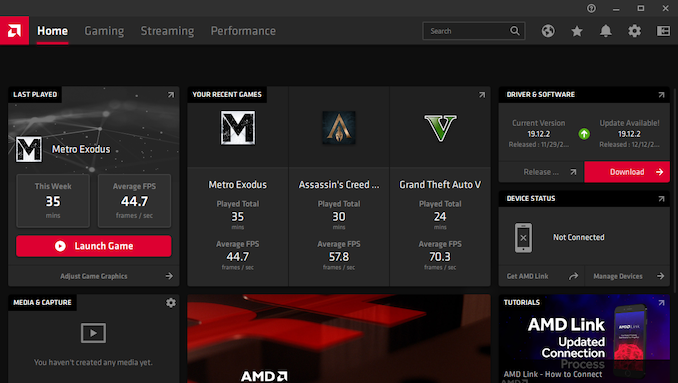

Meanwhile on the driver front, we’re using AMD’s new Radeon Software Adrenalin 2020 Edition 19.12.2 software set, which is the launch driver release for the RX 5500 XT. AMD has introduced a number of control panel features here (not to mention a UI overhaul) that we’ll cover in a separate article. Otherwise for performance testing, these drivers are not substantially different from earlier AMD drivers, though we’ve retested the RX 570 and RX 5700 to ensure those results are fully up to date.

Finally, as the RX 5500 series is focused on 1080p gaming, this is what our benchmark results will focus on. I have also tested the RX 5500 XT 8GB at our 1440p settings – as expected, it’s not very playable there – and while these results haven’t been graphed, they are available in our Bench system.

| Component | Configuration |
| --- | --- |
| CPU: | Intel Core i9-9900K @ 5.0GHz |
| Motherboard: | ASRock Z390 Taichi |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Phison E12 PCIe NVMe SSD (960GB) |
| Memory: | G.Skill Trident Z RGB DDR4-3600 2 x 16GB (17-18-18-38) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon RX 5700, Sapphire Pulse RX 5500 XT 8GB, Sapphire Pulse RX 5500 XT 4GB, AMD Radeon RX 580, AMD Radeon RX 570, AMD Radeon RX 460 4GB, AMD Radeon R9 380, NVIDIA GeForce GTX 1660 Super, NVIDIA GeForce GTX 1660, NVIDIA GeForce GTX 1650 Super, NVIDIA GeForce GTX 1060 3GB |
| Video Drivers: | NVIDIA Release 441.41, NVIDIA Release 441.07, AMD Radeon Software Adrenalin 2020 Edition 19.12.2, AMD Radeon Software Adrenalin 2019 Edition 19.10.2 |
| OS: | Windows 10 Pro (1903) |

97 Comments


YB1064 - Thursday, December 12, 2019

Poor man's NVidia indeed.

flashbacck - Thursday, December 12, 2019

What do you mean by that?

Valantar - Thursday, December 12, 2019

Apparently the same performance at the same price with a better encode/decode block and better idle power usage but slightly worse gaming power usage and buggy OC controls equals "poor man's Nvidia". Who knew?

assyn - Thursday, December 12, 2019

Actually, NVENC is the better encode/decode block because it has wider format support and can decode 8K video, while AMD's is only capable of 4K.

Valantar - Thursday, December 12, 2019

The 1650 and 1650S have a cut-down NVENC block lacking pretty much every single improvement made with the Turing generation. Not all NVENC is equal.

Ryan Smith - Thursday, December 12, 2019

1650S has a full NVENC block. It's based on TU116, not TU117.

jgraham11 - Friday, December 13, 2019

Seems like this will be similar to the GCN cards of the past (RX 5XX series): at the beginning it's OK, then over the years AMD's fine wine kicks in, with incremental improvements that raise overall performance with every driver release. Do realize they are still using the GCN instruction set, but RDNA is a new architecture, one that will be in both the Xbox and PlayStation systems.

WaltC - Saturday, December 21, 2019

I feel as though AMD has hobbled this card. They put GDDR6 VRAM onboard, more or less doubling the bandwidth of GDDR5, but then they took it all back with the 128-bit bus...;) I think they missed an opportunity here--but I'm not privy to the manufacturing particulars for the product--so maybe not. Anyway, it seems like they could have made the 5500XT an 8GB GDDR6, 256-bit bus GPU for $279, then made the 5500 with 4GB and a 128-bit bus for ~$129 or so. The SPs by themselves are cut down enough that even a 256-bit bus GPU like I've dreamed up here is going to be sludge next to a 5700--or, that should certainly be the case, I would think. The card almost seems "overly hobbled" to my way of thinking. I think a 256-bit 5500XT with 8GB of GDDR6 might've been a smash seller for AMD at a ~$279 MSRP, leaving the 5700 still clobbering it simply based on the much higher number of SPs. I just see a lot of potential here that seems overlooked--unless they have plans for a 5600XT, maybe, early in Q1 2020....;)

WaltC - Sunday, December 22, 2019

Will someone please set me straight on whether this is an 8-lane PCIe card? I'm reading this stuff you-know-where but it doesn't make any sense at all to me. It's claimed that accordingly the 5500XT runs much faster on PCIe4 than PCIe3. Eh?

https://www.reddit.com/r/Amd/comments/edo4u8/rx_55...

RX-480/580/590 are all PCIe x16 GPUs with 256-bit buses running GDDR5. Is the article linked here factual? Thanks--to whoever can answer....;) Doesn't sound probable to me--but if it's true then AMD has hog-tied this card six ways to Sunday--trussed up tighter than a Christmas piglet...;)

WaltC - Sunday, December 22, 2019

OK, I saw the one line in the review I missed first time about "I suspect 8 PCIe lanes"--just very strange--I don't understand this product at all! Perhaps the full incarnation of the GPU is going into the PS5/XBox 2, it's just weird. To have to hobble it that much, the GPU must be very robust.
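
The PCIe 3.0 versus 4.0 question raised in the comments above comes down to simple link arithmetic. As a rough sketch only: the function name `link_bandwidth_gb_s` is hypothetical, the per-lane transfer rates and 128b/130b encoding are taken from the PCIe 3.0 and 4.0 specifications rather than from this review, and the results are theoretical one-direction peaks, not measurements of the RX 5500 XT. The eight-lane configuration itself is only suspected in the review.

```python
# Theoretical PCIe link bandwidth, ignoring packet and protocol overhead.
# Per-lane raw transfer rates in GT/s (PCIe 3.0 and 4.0 specifications).
TRANSFER_RATE_GT_S = {"3.0": 8.0, "4.0": 16.0}

# Both generations use 128b/130b line encoding: 128 payload bits per 130 sent.
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_s(generation: str, lanes: int) -> float:
    """Return the peak one-direction bandwidth of a link in GB/s."""
    # GT/s -> usable Gbit/s per lane -> GB/s per lane (8 bits per byte).
    per_lane_gb_s = TRANSFER_RATE_GT_S[generation] * ENCODING_EFFICIENCY / 8
    return per_lane_gb_s * lanes


if __name__ == "__main__":
    for generation, lanes in [("3.0", 8), ("3.0", 16), ("4.0", 8)]:
        bandwidth = link_bandwidth_gb_s(generation, lanes)
        print(f"PCIe {generation} x{lanes}: {bandwidth:.2f} GB/s")
```

Under these assumptions, an x8 card gets roughly half the host bandwidth on a PCIe 3.0 slot that it gets on a PCIe 4.0 slot, and a PCIe 4.0 x8 link matches a PCIe 3.0 x16 link. Whether that gap shows up in games depends on how often the card has to reach across the bus, which this calculation does not model.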