Spotted at Supercomputing 2019: A 256 GB Gen-Z Memory Module

by Dr. Ian Cutress on November 29, 2019 12:00 PM EST

Posted in: Enterprise, Memory, DDR4, Trade Shows, Gen-Z, Supercomputing 19, SC19, ZMM

As a millennial, I find that everything ‘Gen Z’ does in the media often gets lumped into the millennial category. Thankfully there’s another type of Gen-Z in the world: the cache coherent memory-semantic standard. Where standards like CXL are designed to work inside a node, Gen-Z is meant to work between nodes, providing a switched fabric or point-to-point connectivity for memory, storage, accelerators, and even other servers.

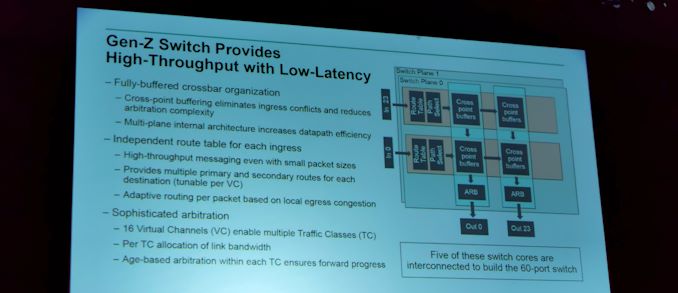

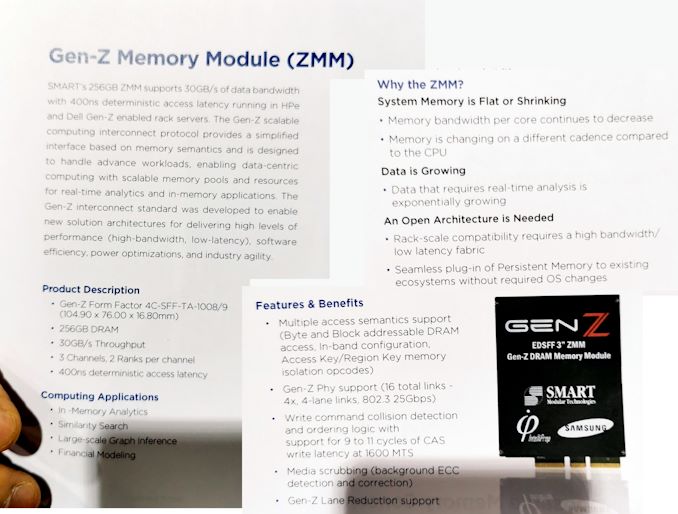

Earlier this year we saw the announcement of a Gen-Z switch, which provides a fabric backbone to which hardware can be connected. The switch handles fabric management, switching, routing, and security, and supports mixed hardware configurations of storage, compute, and accelerators. We found one such add-on at this year’s Supercomputing: the ZMM, or Gen-Z Memory Module.

What we had in front of us was actually a dummy unit for show purposes, but it is a 3-inch wide memory device that adds distributed memory to the network so that different nodes can take advantage of it when needed. Inside is 256 GB of DRAM, providing 30 GB/s of bandwidth through the Gen-Z interface: that’s the equivalent of dual channel DDR4-1866. The total latency is listed as 400 ns, which is an order of magnitude slower than main memory. Ultimately this is slower than traditional memory-controller supported DRAM, but it aims to be faster than network attached storage.
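As a quick sanity check on the dual channel DDR4-1866 comparison, the peak theoretical bandwidth of a DDR4 channel can be estimated as transfers per second multiplied by the 8-byte (64-bit) channel width. The sketch below is only illustrative: the function name `ddr_peak_gb_per_s` is made up for this example, it uses decimal gigabytes, and it ignores real-world efficiency losses.

```python
# Rough peak-bandwidth estimate for DDR memory (illustrative sketch only).
# Assumptions: 64-bit (8-byte) data bus per channel, decimal GB (1e9 bytes),
# and no allowance for refresh or protocol overhead.

def ddr_peak_gb_per_s(mega_transfers_per_s: float,
                      channels: int = 1,
                      bus_bytes: int = 8) -> float:
    """Return theoretical peak bandwidth in GB/s."""
    bytes_per_second = mega_transfers_per_s * 1e6 * bus_bytes * channels
    return bytes_per_second / 1e9

# Dual channel DDR4-1866: 1866 MT/s per channel, two channels.
dual_ddr4_1866 = ddr_peak_gb_per_s(1866, channels=2)
print(f"Dual channel DDR4-1866: {dual_ddr4_1866:.1f} GB/s")  # prints 29.9 GB/s
```

That lands just under the 30 GB/s quoted for the module, which is where the comparison comes from.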

We also saw marketing for a PCIe Gen 5.0 compatible Gen-Z connector.

With these modules, ultimately the goal of Gen-Z is to have a 4U unit in a rack into which customers can install any number of memory modules, storage drives, accelerators, or other compute resources, without worrying about exactly where they are in the system or how the system can access them. The Gen-Z consortium is aiming for ‘rack-scale compatibility’, and wants to be able to make these rack-level adjustments seamless to existing ecosystems without OS changes.

11 Comments


hetzbh - Friday, November 29, 2019

"Where standards like CXL are designed to work inside a node, CXL is meant to work between nodes"

Hmmm?????

Antony Newman - Friday, November 29, 2019

Typo:

CXL: short distance = inside-a-node

Gen-Z: long distance = between nodes.

AJ

Smell This - Friday, November 29, 2019

I suspect in the current vernacular we should call this a seamless *ZiMM* ??

(instead of 'seemless' ...)

Yojimbo - Friday, November 29, 2019

OK, boomer. :P I didn't even know seemless was a word. It seems it means unseemly.

timecop1818 - Saturday, November 30, 2019

yea, zimm, as in zero-insertions-memory-module.

as in how many times this useless shit will be used once the ridiculous iot/ai scam runs out of their stream of non stop bullshit, oh wait who the fuck am i kidding.

Brane2 - Sunday, December 1, 2019

With these kind of latencies, isn't this useless for the most part?

One has to pay hefty price premium to get what - equivalent of shitty low-cost DDR3, hanging off memory controller, done with LEGOs?

shelbystripes - Sunday, December 1, 2019

I think you’re missing the point. This doesn’t hang off the memory controller. It’s storage you can add on top of local memory controller storage, not in lieu of it.

Gen-Z is a switching interconnector fabric. It seems like Cray/HPE’s original long term vision was to one day replace local memory controllers, but that isn’t how these first generations will work (and that may never work). This allows you to connect multiple systems to a Gen-Z switch, to share additional Gen-Z resources on that switch.

So don’t think of this as shitty expensive DDR. Think of it as insanely high performance network attached storage. A bunch of servers in a rack can connect (via short distance optical cabling) to a 4U Gen-Z “hub” in the rack, the front lined with Gen-Z cartridge slots. You can populate them with Gen-Z DRAM for pooled ADDITIONAL storage much faster than even a purely NVMe based NAS. Or other Gen-Z devices such as custom compute accelerators (I’m assuming these will also have larger slots and better cooling options). Or some combination of the two.

Once you’ve maxed out RAM per server, if you still need maximum performance for next-level storage (and even better, pooled among your servers) this looks like a pretty damn fast option.

Brane2 - Sunday, December 1, 2019

Meh. Corner cases. You'd still have to pay premium for RAM (+ GenZ infrastructure), only to use it more like NAND FLASH.

This is kind of like having extra 16GB of L1 cache off board. Insanely expensive per MB and power hungry, but fast. Or it would be, if it weren't killed by the relatively slow link.

I understand Gen-Z and similar efforts, at least in principle.

I'm saying that they will have to find some solution or workaround for these kind of problems or they won't be able to make progress into the heart of HPC etc.

Xajel - Sunday, December 1, 2019

I hope to see a new article from Anandtech explaining the current state and plans for consumer storage and interfaces. They were planning on using U.2 drives to succeed SATA but they never took off. We're also hearing about OCuLink, which is supposed to compete against U.2 and also to be more flexible than U.2.

One of the dead ends with U.2 is the 4x lanes. That's okay for now with PCIe 3.0 & 4.0, and with 5.0 coming also, but PCIe lanes are expensive. I mean regular motherboards have anywhere from 4-6 SATA ports. Using 6 U.2 drives means 24 PCIe lanes. And not all of the drives will use the bandwidth of 4 lanes, especially with PCIe 4.0 & 5.0 coming now.

AFAIK, OCuLink can split the same connector into multiple connectors with fewer PCIe lanes, so a single OCuLink can drive 4 drives at x1 lanes which can give 1GB/s (PCIe 3.0), 2GB/s (PCIe 4.0) and even 4GB/s (PCIe 5.0).

But I've never heard anything about bringing mass NVMe storage to completely replace SATA drives. Or at least the next SATA 4.0 (if any, these should have NVMe support as well).

shelbystripes - Sunday, December 1, 2019

M.2 is the de facto next-gen performance storage standard for consumers. It’s already happened. By now pretty much every new motherboard has at least one M.2 slot, the flexibility of having SATA or NVMe support enabled the transition to consistently add this new physical slot more cheaply, and even many budget motherboards support NVMe by now too. They might do it with a x2 PCIe connection, but they’ll offer it. And that flexibility (to do x2 or x4 connections) also helped speed up adoption.

Consumers don’t need all their drives to be high performance, so having 6 NVMe devices makes no sense. One or two M.2 slots for NVMe SSDs, plus four SATA ports for slower spinning disks, still allow more total storage space than 99% of consumers need. There’s no real move to replace SATA3 in the consumer space because it’s not really needed. It would increase cost much more than it would provide noticeable consumer benefit. Much cheaper, and good enough for consumers, to just use a large enough M.2 SSD as main storage and SATA3 spinning disks for cold storage.