Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics

by Dr. Ian Cutress on September 17, 2019 10:00 AM EST

A Short Detour on Mobile CPUs

For our readers who focus purely on the desktop space, I want to dive a bit into what happens with mobile SoCs and how turbo comes into effect there.

Most Arm-based SoCs use a mechanism called EAS (Energy-Aware Scheduling) to manage both how turbo is implemented and which cores are active within a mobile CPU. A mobile CPU has one other aspect to deal with: not all cores are the same. It has both low power/low performance cores and high power/high performance cores. Ideally the cores should have a crossover point where it makes sense to move a workload onto the big cores and spend more power to get it done faster. A workload in this instance will often start on the smaller low performance cores until it hits a utilization threshold, and then be moved onto a large core, should one be available.
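To make the threshold idea concrete, here is a minimal sketch of that migration decision. It is a toy model, not how EAS is actually implemented: real schedulers work from a per-platform energy model, and the threshold values, the `Task` class, and the `place_task` helper are all assumptions made up for illustration. The lower threshold for moving back to a small core is also an assumption, added so the task does not bounce between clusters.

```python
from dataclasses import dataclass

# Hypothetical thresholds, for illustration only; real platforms tune these.
UP_THRESHOLD = 0.80    # move to a big core above this utilization
DOWN_THRESHOLD = 0.30  # assumed: move back to a little core below this


@dataclass
class Task:
    name: str
    cluster: str = "little"  # workloads start on the low power cores


def place_task(task: Task, utilization: float, big_core_free: bool) -> str:
    """Return the cluster the task should run on next (updates task.cluster)."""
    if task.cluster == "little" and utilization > UP_THRESHOLD and big_core_free:
        # Crossover point reached and a big core is available: spend more power.
        task.cluster = "big"
    elif task.cluster == "big" and utilization < DOWN_THRESHOLD:
        # Demand has dropped well below the crossover: go back to saving power.
        task.cluster = "little"
    return task.cluster
```

A task that sits at 90% utilization stays on the small cores until a big core frees up, which matches the "should one be available" caveat above.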

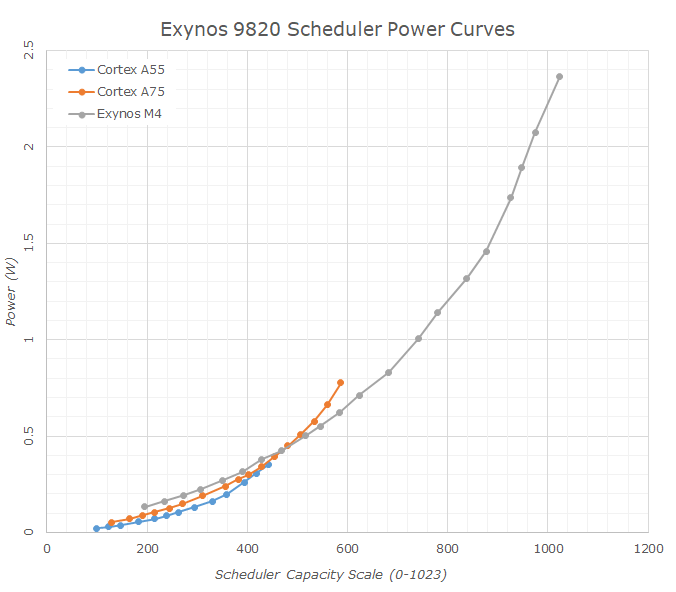

For example, here's Samsung's Exynos 9820, which has three types of cores: A55, A75, and M4. Each core is configured to a different performance/power window, with some overlap.

Peak turbo on these CPUs is defined in the same way as on Intel's desktop processors, but without the turbo tables. Both the small CPUs and the big CPUs have defined idle and maximum frequencies, and each chip conforms to its own voltage/frequency curve, with discrete points along that curve. When the utilization of a big core is high, the system will react and offer it the highest voltage/frequency point up that curve that is possible. This means that the heaviest workloads get the highest frequency.
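As a rough sketch of what "points along a curve" means in practice, the selection can be thought of as a table lookup. Everything in the example below is an assumption for illustration: the curve values are invented (only the 2.96 GHz top point echoes the advertised figure discussed in this article), and the headroom factor and the `pick_operating_point` helper are hypothetical rather than any vendor's actual governor.

```python
# Hypothetical voltage/frequency curve for one big core: (MHz, mV) pairs.
# Real curves are set per chip; these numbers are made up for illustration.
VF_CURVE = [(800, 600), (1400, 700), (2000, 800), (2500, 900), (2960, 1000)]

HEADROOM = 1.25  # assumed margin so the core is not pinned at 100% of a point


def pick_operating_point(utilization, freq_cap_mhz=None):
    """Pick the lowest curve point that covers demand, under an optional cap."""
    max_freq = VF_CURVE[-1][0]
    # Frequency needed to serve the current utilization with some headroom.
    target = min(utilization * HEADROOM, 1.0) * max_freq
    # Thermal or power limits may rule out the upper part of the curve.
    allowed = [p for p in VF_CURVE if freq_cap_mhz is None or p[0] <= freq_cap_mhz]
    if not allowed:
        return VF_CURVE[0]
    for point in allowed:
        if point[0] >= target:
            return point
    # Demand exceeds everything allowed: offer the highest point available.
    return allowed[-1]
```

With no cap, a fully loaded core gets the top point of the curve; with a cap in place, it gets the highest point that is still allowed.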

However, because the devices that these chips go into are small, the power available can be limited by the battery or by thermals. There is no point in the chip staying at maximum frequency only to burn the user's hand. So the system applies an energy-aware algorithm, combined with the thermal probes inside the device, to ensure that the turbo and workload tend towards a peak skin temperature for the device (assuming a consistent, heavy workload). This power is balanced across the CPU, the GPU, and any additional accelerators within the system, and the device manufacturer can configure that balance to respond to the proportion of CPU/GPU/NPU instructions being fed to the chip.
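One simple way to picture this is as a two-step calculation: shrink the total power budget as the skin temperature climbs past its target, then divide what is left between the blocks. The sketch below assumes a plain proportional controller, and every constant and function name in it is hypothetical; actual devices use vendor-specific thermal governors and tuning.

```python
SKIN_TEMP_LIMIT_C = 42.0  # hypothetical target skin temperature
MAX_BUDGET_W = 5.0        # hypothetical peak power for the whole SoC
MIN_BUDGET_W = 1.0        # hypothetical floor so the device stays responsive
GAIN_W_PER_C = 0.5        # hypothetical gain: watts removed per degree over


def total_budget(skin_temp_c):
    """Reduce the power budget as skin temperature rises above the limit."""
    over = max(0.0, skin_temp_c - SKIN_TEMP_LIMIT_C)
    return max(MIN_BUDGET_W, MAX_BUDGET_W - GAIN_W_PER_C * over)


def split_budget(budget_w, weights):
    """Divide the budget across blocks in proportion to configured weights."""
    total = sum(weights.values())
    return {block: budget_w * w / total for block, w in weights.items()}


# Example: a CPU-heavy weighting chosen by an imaginary device manufacturer.
shares = split_budget(total_budget(44.0), {"cpu": 2, "gpu": 1, "npu": 1})
```

The weights stand in for the manufacturer-configured balance: a gaming-focused device might weight the GPU more heavily, while the controller gain decides how aggressively frequency falls once the device gets warm.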

As a result, when we see a mobile processor that advertises ‘2.96 GHz’, it will likely hit that frequency, but the design of the device (and the binning of the chip) will determine how long it can hold it before thermal limits kick in.

144 Comments

Dragonstongue - Tuesday, September 17, 2019

ty so much for the pro article and summing in question

not always do I come here and "want" to continue reading over...I keep to myself.

thankfully this was not such an article

o7

Iger - Thursday, September 19, 2019

+1

This mirrors my thoughts and feelings exactly.

mikato - Monday, September 23, 2019

I completely agree. I had seen some of this with Hardware Unboxed, Gamers Nexus, Der8auer, Reddit. This was a great summary with solid explanation behind it, a more helpful way to learn about the whole issue.

Now for the next issue - Hey, Ian is a doctor now, thinks he's better than all of us. Discuss... :)

(yes I knew he had a doctorate already)

azfacea - Tuesday, September 17, 2019

death to intel LUL

Phynaz - Wednesday, September 18, 2019

Drugs are bad for you, seek treatment.

Smell This - Wednesday, September 18, 2019

It sure is interesting that the **Chipzillah Propaganda Machine** has entered high gear/over-drive over the last several weeks after reports that the "Intel Apollo Lake CPUs May Die Sooner Than Expected" ...

https://www.tomshardware.com/news/intel-apollo-lak...

Funny that, huh?

eastcoast_pete - Tuesday, September 17, 2019

Thanks Ian, helpful article with good explanations!

Question: The "binning by expected lifespan" caught my eye. Could you do another nice backgrounder on how overclocking affects lifespan? I believe many out there believe that there is such a thing as a free lunch. So, how fast does a CPU (or GPU) degrade if it gets pushed (overclocked and overvolted) to the still-usable limit. Maybe Ryan can chime in on the GPU aspect, especially the many "factory overclocked" cards. Thanks!

Ian Cutress - Tuesday, September 17, 2019

I've been speaking to people about this to see if we can get a better understanding about manufacturing as it relates to expected product lifetimes and such. Overclocking would obviously be an extension to that. If something happens and we get some info, I'll write it up.

igavus - Tuesday, September 17, 2019

Aside from overclocking, it'd be interesting to know if the expected lifetime is optimized with warranty times and if what we're seeing is a step forward on the planned obsolescence path.

It's sort of more important now than ever, because with 8 core being the new norm soon - we'll probably see even longer refresh cycles as workloads catch up to saturate the extra performance available. And limiting product lifetime would help curb those longer than profitable refresh cycles.

FunBunny2 - Tuesday, September 17, 2019

"if what we're seeing is a step forward on the planned obsolescence path."

you really, really should get this 57 Plymouth, cause your 56 Dodge has teeny tail fins.