The OPPO Reno 10x Zoom Review: Bezeless Zoom

by Andrei Frumusanu on September 18, 2019 10:00 AM EST

Posted in: Mobile, Smartphones, Oppo, Snapdragon 855, Oppo Reno 10x Zoom

Machine Learning Inference Performance

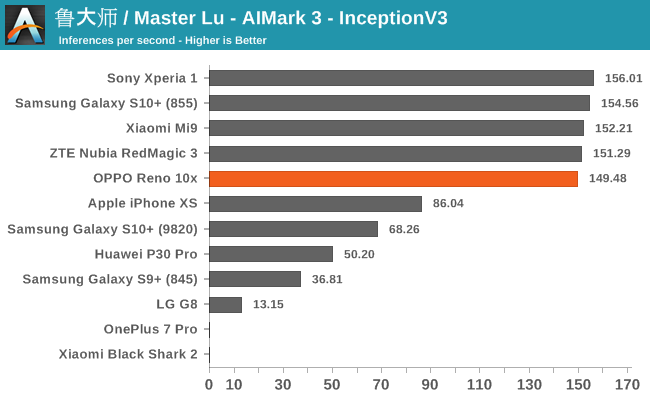

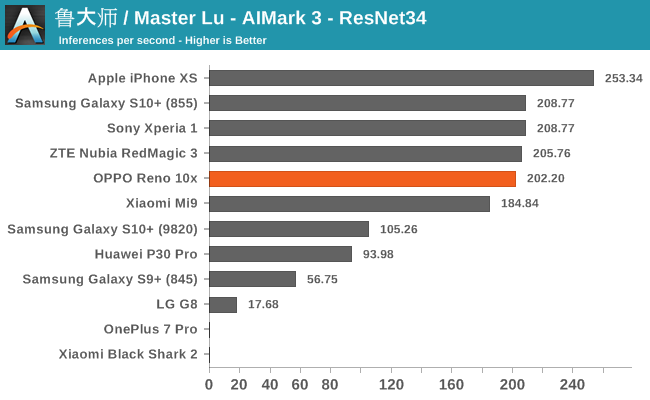

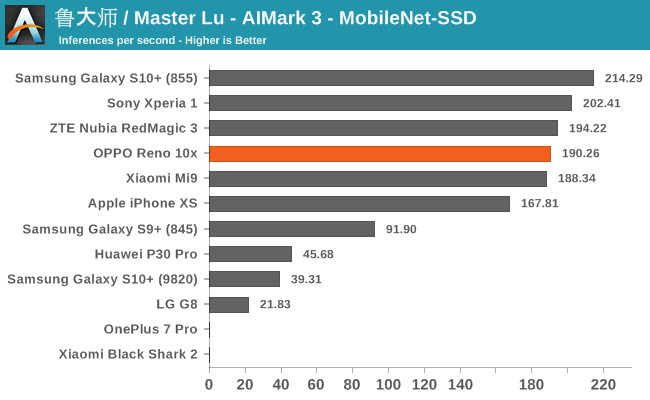

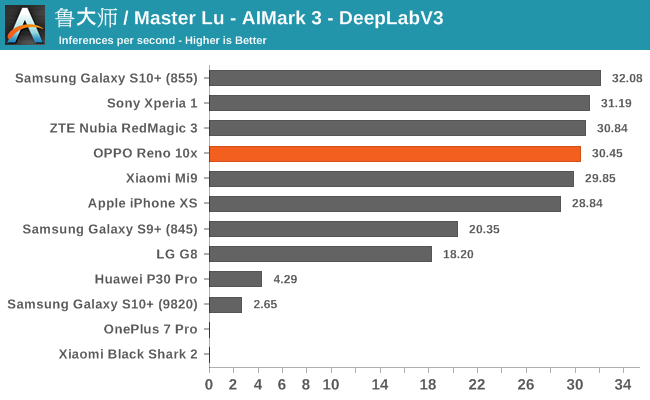

AIMark 3

AIMark makes use of various vendor SDKs to implement the benchmarks. This means that the end results aren’t a proper apples-to-apples comparison; however, it represents an approach that will actually be used by some vendors in their in-house applications, or even by the occasional third-party app.

In AIMark, the Oppo Reno 10x performs adequately among the Snapdragon 855 devices, which ship with the corresponding Qualcomm libraries needed to make the benchmark work. We do note that other S855 phones have a slight edge here, likely due to their more optimised software stacks.

AIBenchmark 3

AIBenchmark takes a different approach to benchmarking. Here the test uses the hardware-agnostic NNAPI to accelerate inferencing, meaning it doesn’t use any proprietary aspects of a given piece of hardware except for the drivers that actually enable the abstraction between software and hardware. This approach is more apples-to-apples, but it also means that we can’t do cross-platform comparisons, such as testing iPhones.

We’re publishing one-shot inference times. Compared to sustained-performance inference times, these figures include more timing overhead from the software stack, covering everything from initialising the test to actually executing the computation.
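To illustrate the distinction between the two kinds of figures, here is a minimal sketch in Python. It is not how AIBenchmark itself measures anything: the `make_model` helper, its cost parameters and the use of `time.sleep` as a stand-in for real work are all assumptions made purely for illustration.

```python
import time


def make_model(init_cost_s=0.05, run_cost_s=0.005):
    """Return a hypothetical model whose first call pays a one-off setup cost."""
    state = {"initialised": False}

    def infer():
        if not state["initialised"]:
            time.sleep(init_cost_s)  # stand-in for loading/compiling the model
            state["initialised"] = True
        time.sleep(run_cost_s)       # stand-in for the actual computation

    return infer


def one_shot_time(make):
    """Time a single cold inference, setup overhead included."""
    infer = make()
    start = time.perf_counter()
    infer()
    return time.perf_counter() - start


def sustained_time(make, runs=10):
    """Average time per inference after an untimed warm-up run."""
    infer = make()
    infer()  # warm-up: pays the setup cost outside the timed region
    start = time.perf_counter()
    for _ in range(runs):
        infer()
    return (time.perf_counter() - start) / runs


if __name__ == "__main__":
    print("one-shot :", one_shot_time(make_model))
    print("sustained:", sustained_time(make_model))
```

In this toy setup the one-shot figure is always the larger of the two, because the setup cost lands inside the timed region instead of being amortised away by a warm-up run.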

AIBenchmark 3 - NNAPI CPU

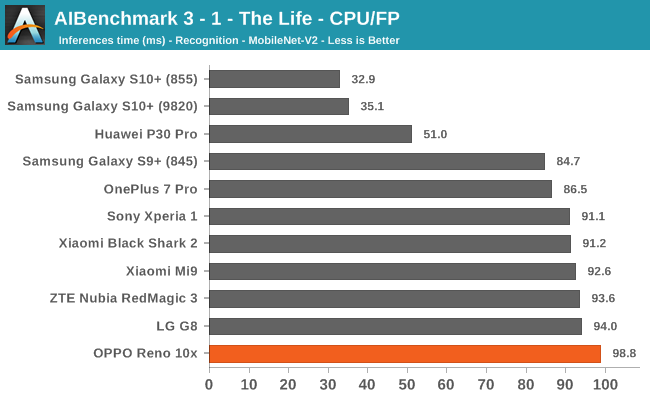

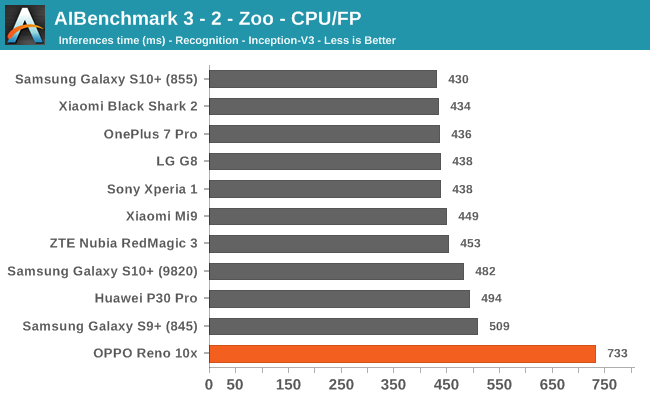

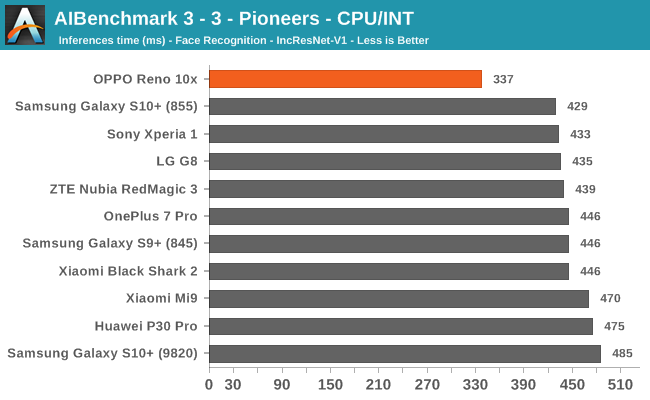

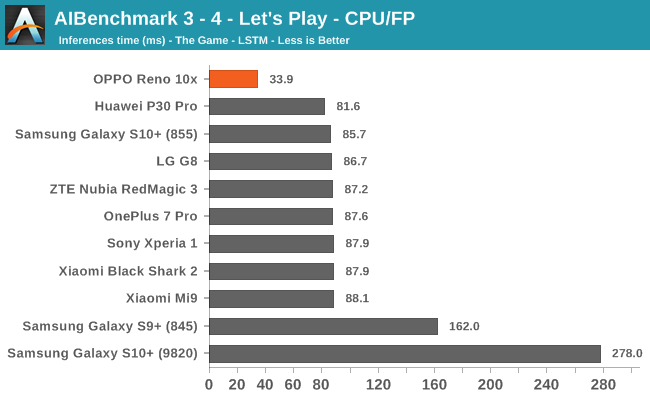

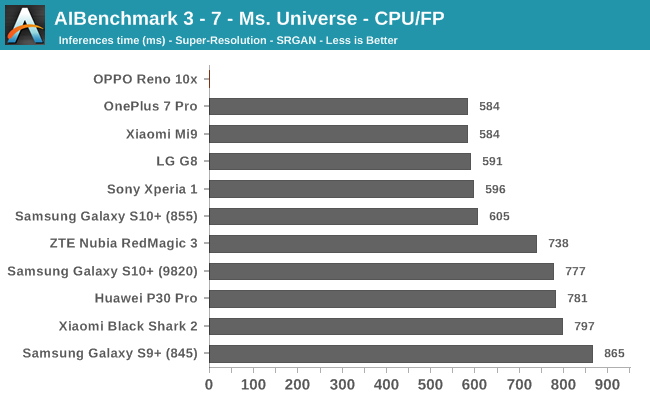

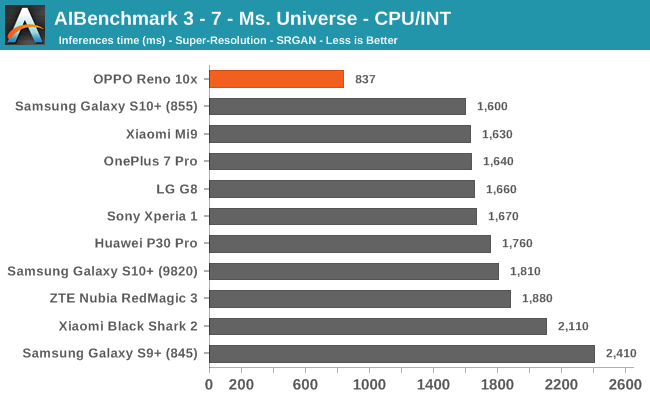

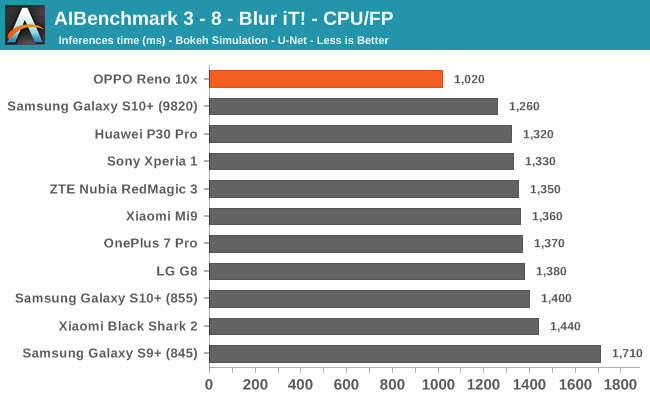

We’re segregating the AIBenchmark scores by execution block, starting off with the regular CPU workloads that simply use TensorFlow libraries and do not attempt to run on specialized hardware blocks.

In the CPU-accelerated workloads, we see that the Oppo Reno 10x absolutely stands out compared to all other Snapdragon devices, having nothing in common with the rest of the pack in terms of the resulting performance figures. The phone ends up with one performance regression and one test that didn’t complete, but otherwise the performance actually looks to be better than what’s available on other Snapdragon 855 phones.

The only explanation that I can offer here is that, rather than using Qualcomm’s NN driver stack, the phone appears to be falling back to the default Android TensorFlow libraries. The interesting thing is that the phone looks to be performing better with these libraries than with the ones that ship with the Qualcomm BSP, something the company should certainly look into.
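For readers unfamiliar with what such a fallback looks like in principle, the following is a generic sketch of the pattern in Python. It does not reflect how NNAPI, Qualcomm's drivers or Oppo's firmware are implemented; every name in it (`UnsupportedByBackend`, `vendor_npu`, `generic_cpu`, `run_with_fallback`) is hypothetical.

```python
class UnsupportedByBackend(Exception):
    """Raised by a hypothetical backend that cannot run the given model."""


def vendor_npu(model):
    # Hypothetical accelerated path: pretend the vendor driver is missing.
    raise UnsupportedByBackend("no driver for " + model)


def generic_cpu(model):
    # Hypothetical generic path that can always run, just without acceleration.
    return "ran " + model + " on generic CPU path"


def run_with_fallback(model, backends):
    """Try each (name, function) pair in order; return the first that works."""
    for name, run in backends:
        try:
            return name, run(model)
        except UnsupportedByBackend:
            continue  # this backend cannot handle the model, try the next one
    raise RuntimeError("no backend could run " + model)


if __name__ == "__main__":
    backends = [("vendor", vendor_npu), ("cpu", generic_cpu)]
    print(run_with_fallback("example_model", backends))
```

If the list contains no backend that can handle the model, the call fails outright instead of returning a slow result.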

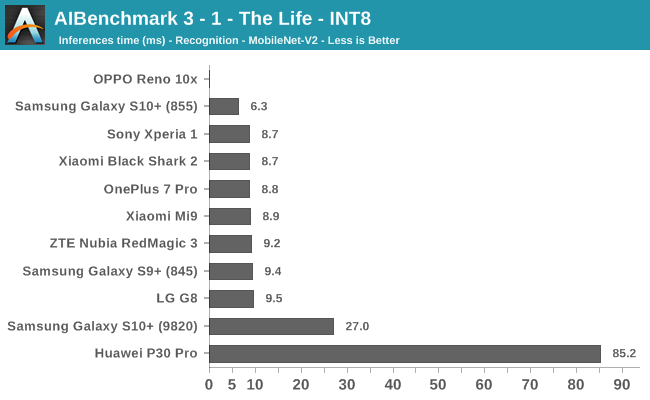

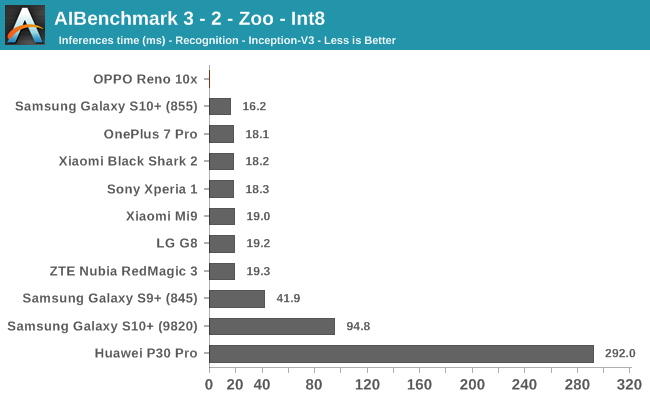

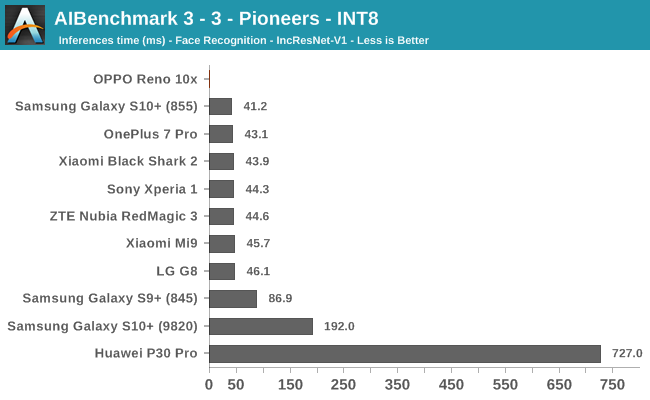

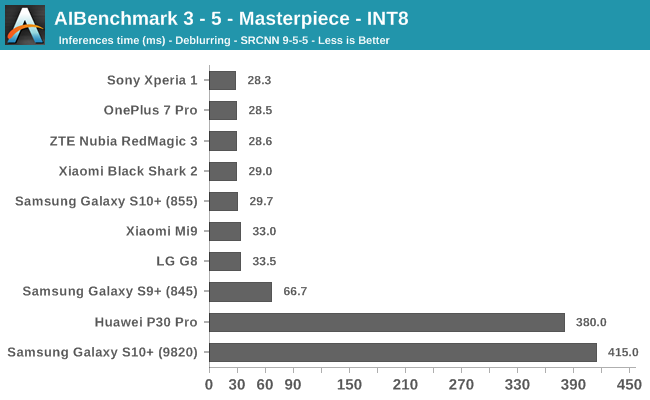

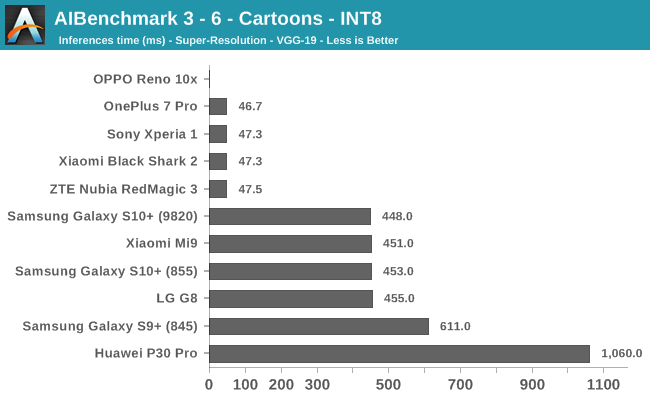

AIBenchmark 3 - NNAPI INT8

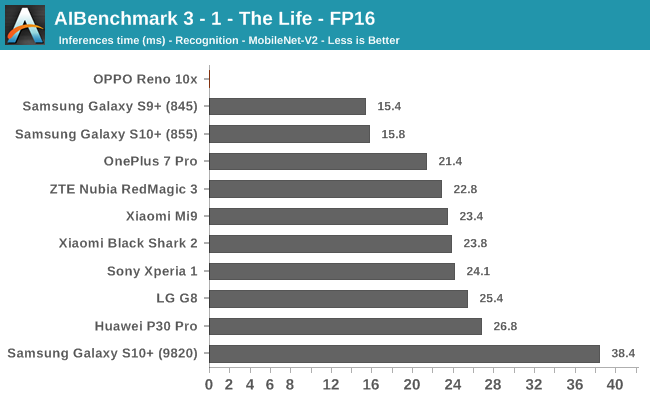

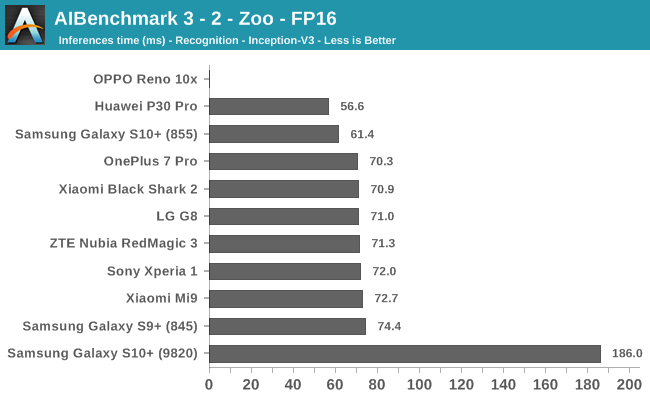

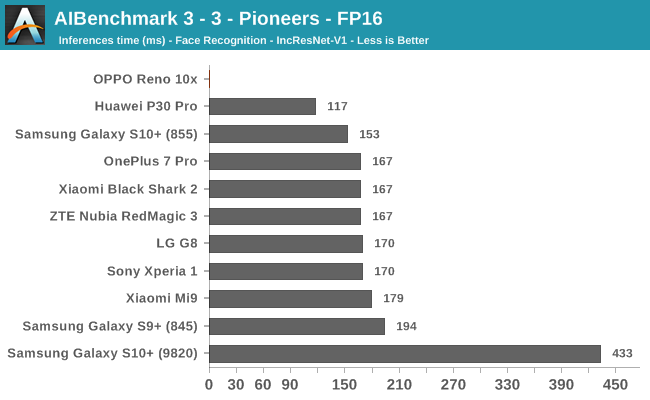

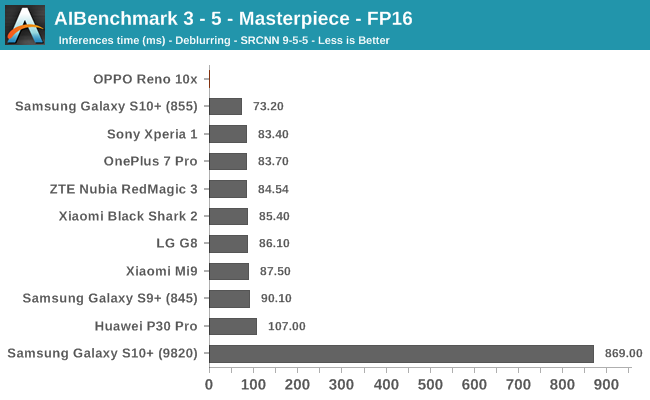

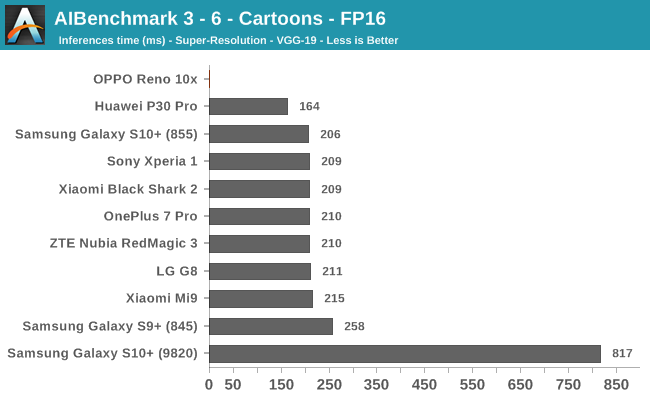

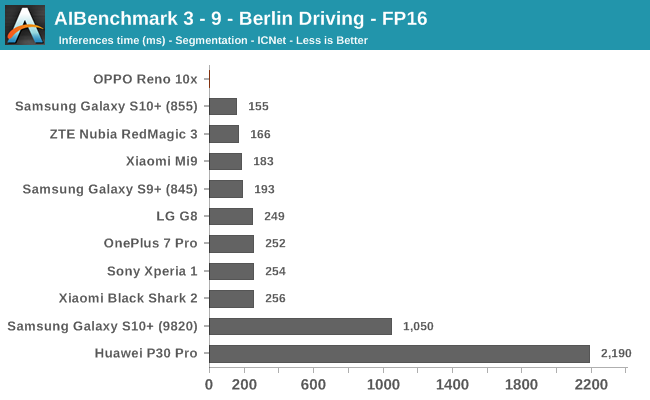

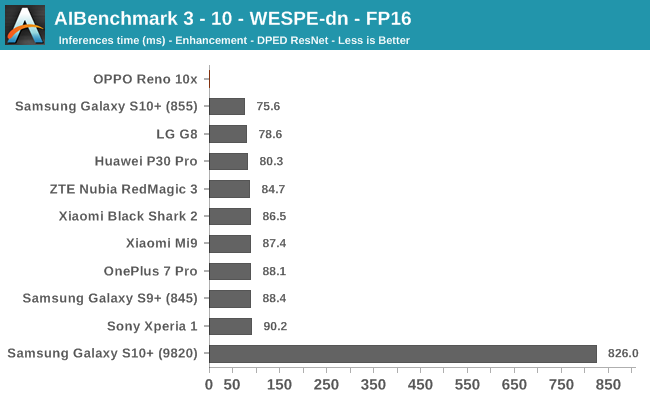

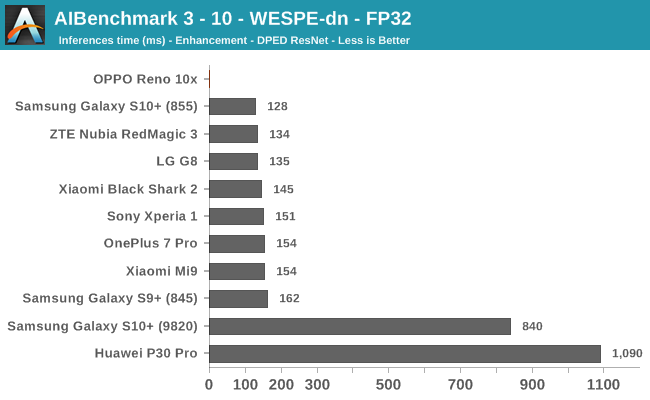

AIBenchmark 3 - NNAPI FP16

AIBenchmark 3 - NNAPI FP32

Unfortunately, for the rest of the AIBenchmark tests, which make use of NNAPI acceleration for INT8, FP16 and FP32 models, the Reno 10x just didn’t have the correct drivers and thus wasn’t able to perform the tests.

If there’s one thing that’s worse than bad performance, it’s not being able to perform a task at all. I’m not sure if the Reno 10x performs any differently on a global firmware version or if this issue is solely a characteristic of the Chinese variant. In any case, I find it quite disappointing for the machine learning ecosystem, as Oppo is a major vendor and the Reno is its flagship device. How are application developers supposed to embrace machine learning when the software situation still has such enormous drawbacks?

40 Comments


Alistair - Wednesday, September 18, 2019 - link

Everytime I get excited by new phones I check the weight and am shocked at how heavy they are. The iPhone XR is very wide and heavy also, all these phones are 190g to 250g. I'll take the LG G8 or Samsung S10 just for the weight savings (150-160g), I'll keep the notch or hole instead.

Andrei Frumusanu - Wednesday, September 18, 2019 - link

Wholeheartedly agree.

close - Saturday, September 21, 2019 - link

Phones get bigger and the weight scale of phone materials tends to follow the famed "premium feel" scale (polycarbonate<aluminum<glass<ceramic<steel) promoted for years. This is larger than an S10+ or a G8 but other than that is there anything in particular that would make it heavier than any other phone with Gorilla Glass front and back and steel frame? Wonder if the mechanical motorized slide-out camera is a major contributor.

flyingpants265 - Wednesday, September 18, 2019 - link

Wow, the thing that matters the absolute least out of every aspect of a phone..

Alistair - Wednesday, September 18, 2019 - link

If you use it a lot and you feel fatigued because it is too heavy or hard to hold, that is the most important aspect of a "mobile" phone.

Alistair - Wednesday, September 18, 2019 - link

Like how people don't care about the weight of their laptop, unless their use case is carrying it around in their backpack all day.

StevoLincolnite - Wednesday, September 18, 2019 - link

It's not the end of the world. 250g isn't significant, I work out... If it's an issue for you, perhaps you should too?

antifocus - Wednesday, September 18, 2019 - link

It's about the perception of the weight, the extra 250g can very well make you uncomfortable during travel.

nils_ - Sunday, September 22, 2019 - link

That's the part where I care about it the least, though I generally don't care as much about the weight or height. If the compromise is between weight/height and performance/cooling, I'd rather have more performance.

Notmyusualid - Sunday, October 6, 2019 - link

Whimp.