NVIDIA To Bring DXR Ray Tracing Support to GeForce 10 & 16 Series In April

by Ian Cutress & Ryan Smith on March 18, 2019 6:40 PM EST

This week, both GDC (the Game Developers Conference) and GTC (the GPU Technology Conference) are happening in California, and NVIDIA is out in force. The company's marquee gaming-related announcement today is that, as many have been expecting would happen, NVIDIA is bringing DirectX 12 DXR raytracing support to the company's GeForce 10 series and GeForce 16 series cards.

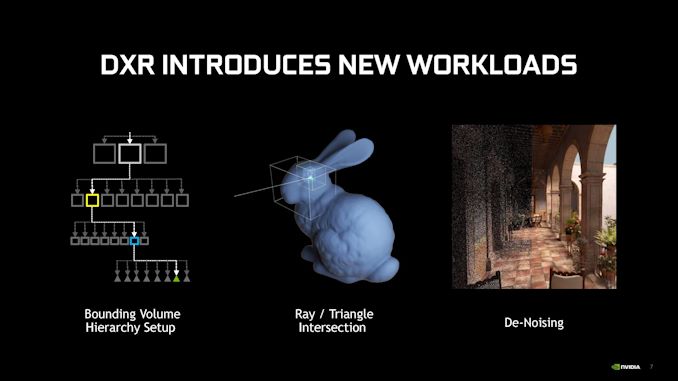

When Microsoft first announced the DirectX Raytracing API just over a year ago, they set out a plan for essentially two tiers of hardware. The forward-looking plan (and long-term goal) was to get manufacturers to implement hardware features to accelerate raytracing. However, in the shorter term, and owing to the fact that the API doesn't say how ray tracing should be implemented, DXR would also allow for software (compute shader) based solutions for GPUs that lack complete hardware acceleration. In fact, Microsoft had been using their own internally-developed fallback layer to allow them to develop the API and test against it internally, but past that they left it up to hardware vendors to decide whether to develop their own mechanisms for supporting DXR on pre-raytracing GPUs.

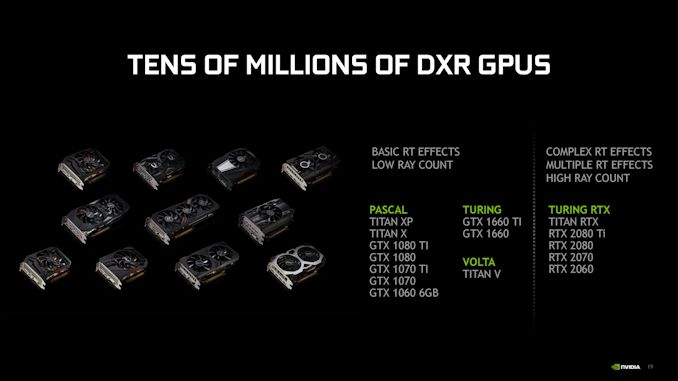

NVIDIA for their part has decided to go ahead with this, announcing that they will support DXR on many of their GeForce 10 (Pascal) and GeForce 16 (Turing GTX) series video cards: specifically, the GeForce GTX 1060 6GB and higher, as well as the new GTX 1660 series video cards.

Now, as you might expect, raytracing performance on these cards is going to be much (much) slower than it is on NVIDIA's RTX series cards, all of which have hardware raytracing support via NVIDIA's RTX backend. NVIDIA's official numbers are that the RTX cards are 2-3x faster than the GTX cards, though this is going to be workload-dependent. Ultimately it's the game and the settings used that will determine just how much of an additional workload raytracing will place on a GTX card.
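To put the quoted 2-3x figure in more familiar terms, here is a minimal sketch that converts an RTX frame rate into the GTX range it would imply. The function name and the 60 fps input are purely illustrative, and the sketch assumes the ratio applies to the whole frame, which is a simplification given that the real gap depends on the workload.

```python
def gtx_fps_range(rtx_fps, slowdown_low=2.0, slowdown_high=3.0):
    """Estimate the GTX frame-rate range implied by an RTX frame rate.

    Assumes the quoted 2-3x ratio applies to the entire frame. That is a
    simplification: the actual gap depends on the game and its settings.
    """
    if rtx_fps <= 0:
        raise ValueError("rtx_fps must be positive")
    # The larger slowdown gives the lower bound of the range.
    return rtx_fps / slowdown_high, rtx_fps / slowdown_low


# Illustrative input, not a measured result.
low, high = gtx_fps_range(60.0)
print(f"{low:.0f}-{high:.0f} fps")  # prints 20-30 fps
```

In other words, a scene that an RTX card holds at a hypothetical 60 fps would land somewhere around 20 to 30 fps on a GTX card if the ratio held across the whole frame.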

The inclusion of DXR support on these cards is functional – that is to say, it isn't there merely for baseline featureset compatibility, à la FP64 support on these same parts – but it's very much in a lower league in terms of performance. And given just how performance-intensive raytracing is on RTX cards, it remains to be seen just how useful the feature will be on cards lacking the RTX hardware. Scaling down image quality will help to stabilize performance, for example, but then at that point will the image quality gains be worth it?

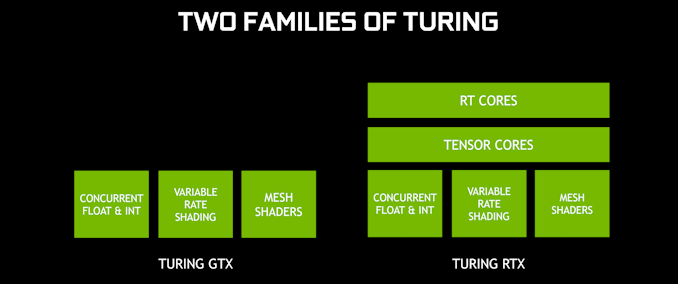

Under the hood, NVIDIA is implementing support for DXR via compute shaders run on the CUDA cores. In this area the recent GeForce GTX 16 series cards, which are based on the Turing architecture sans RTX hardware, have a small leg up. Turing includes separate INT32 cores (rather than tying them to the FP32 cores), so like other compute shader workloads on these cards, it's possible to pick up some performance by simultaneously executing FP32 and INT32 instructions. It won't make up for the lack of RTX hardware, but it at least gives the recent cards an extra push. Otherwise, Pascal cards will be the slowest in this respect, as their compute shader-based path has the highest overhead of all of these solutions.
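The benefit of separate INT32 cores can be illustrated with a toy model. This is only a sketch under stated assumptions: the function name, the instruction counts, and the one-instruction-per-cycle rate are all hypothetical, and real GPUs are bound by many other factors (memory bandwidth, occupancy, scheduling) that this ignores.

```python
def shader_cycles(fp32_ops, int32_ops, ops_per_cycle=1.0, concurrent=False):
    """Cycles needed to issue a mix of FP32 and INT32 instructions.

    Toy model: on a shared pipeline the two instruction types queue behind
    each other, so their costs add up. With separate pipelines they overlap,
    so the longer of the two streams determines the total.
    """
    fp_cycles = fp32_ops / ops_per_cycle
    int_cycles = int32_ops / ops_per_cycle
    if concurrent:
        return max(fp_cycles, int_cycles)
    return fp_cycles + int_cycles


# Illustrative instruction mix, not measured data.
fp32_ops, int32_ops = 100, 40
shared = shader_cycles(fp32_ops, int32_ops, concurrent=False)
overlapped = shader_cycles(fp32_ops, int32_ops, concurrent=True)
print(f"speedup from overlap: {shared / overlapped:.2f}x")  # prints 1.40x
```

Under this model the gain is capped by how much integer work there is to hide behind the floating point work, which is consistent with the point above that concurrent execution helps but cannot stand in for dedicated raytracing hardware.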

This list of cards includes mobile equivalents

One interesting side effect is that because DXR support is handled at the driver level, the addition of DXR support is supposed to be transparent to current DXR-enabled games. That means developers won't need to issue updates to get DXR working on these GTX cards. However, it goes without saying that because of the performance differences, they likely will want to anyhow, if only to provide settings suitable for video cards lacking raytracing hardware.

NVIDIA's own guidance is that GTX cards should expect to run low-quality effects. Users/developers will also want to avoid the most costly effects such as global illumination, and stick to "cheaper" effects like material-specific reflections.
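As a rough illustration of how a game might act on that guidance, the sketch below picks default raytracing settings based on whether the card has raytracing hardware. Everything here is hypothetical: the class, the cost ranking, the setting names, and the "high" default for RTX cards are assumptions for illustration, not anything taken from NVIDIA's or a game's actual code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RayTracedEffect:
    name: str
    relative_cost: int  # hypothetical ranking: higher means more expensive


# Only the two effects named in the guidance; the cost values are assumed.
EFFECTS = [
    RayTracedEffect("material_reflections", 1),
    RayTracedEffect("global_illumination", 2),
]


def default_dxr_settings(has_rt_hardware: bool) -> dict:
    """Choose default raytracing settings for a card.

    Cards without raytracing hardware get the low-quality preset and only
    the cheapest effects; the "high" preset for RTX cards is an assumption.
    """
    max_cost = 2 if has_rt_hardware else 1
    enabled = [e.name for e in EFFECTS if e.relative_cost <= max_cost]
    quality = "high" if has_rt_hardware else "low"
    return {"quality": quality, "effects": enabled}


print(default_dxr_settings(has_rt_hardware=False))
```

A real title would presumably expose these as user-adjustable options rather than hard-coding them, but the default split shown here matches the spirit of the guidance: GTX cards start with cheap reflections only.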

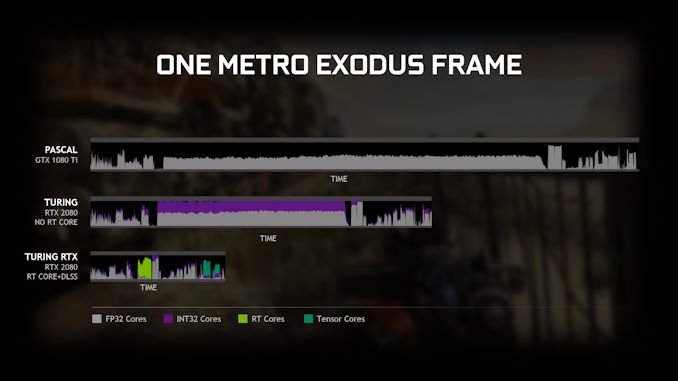

Diving into the numbers a bit more, in one example NVIDIA showed a representative frame of Metro Exodus using DXR for global illumination. The top bar shows a Pascal GPU, with only FP32 compute, having a long render time in the middle for the effects. The middle bar shows an RTX 2080, though it could equally be a GTX 1660 Ti, with FP32 and INT32 compute working together during the RT portion of the workload and speeding the process up. The final bar shows the effect of adding RT cores to the mix, and tensor cores at the end.

Ultimately the release of DXR support for NVIDIA's non-RTX cards shouldn't be taken as too surprising – on top of the fact that the API was initially demoed on pre-RTX Volta hardware, NVIDIA was strongly hinting as early as last year that this would happen. So by and large this is NVIDIA fulfilling earlier goals. Still, it will be interesting to see whether DXR actually sees any use on the company's GTX cards, or if the overall performance is simply too slow to bother. The performance hit in current games certainly favors the latter outcome, but it's going to be developers that make or break it in the long run.

Meanwhile, gamers will get to find out for themselves with NVIDIA's DXR-enabled driver release, which is expected to come out next month.

Source: NVIDIA

38 Comments

BigMamaInHouse - Monday, March 18, 2019 - link

So you can think now what's AMD gonna do with all their compute power on Polaris and Vega :-).

BigMamaInHouse - Monday, March 18, 2019 - link

P.S: Vega Supports 2:1 FP16!

Sttm - Monday, March 18, 2019 - link

Offer 6 fps on the Vega VII instead of 5 fps on the 1080ti.:)

uefi - Monday, March 18, 2019 - link

Unless if DXR allows running FP32 and/or INT32 solely on a second card. It should be more common in games to utilise a second GPU for compute tasks. It's something low level APIs like Vulkan promises, right?

Kevin G - Tuesday, March 19, 2019 - link

Oh, the promise is there from hardware manufacturers but that takes effort from software developers and anything that requires effort there for a minority of users (second GPU) is a non-starter.

The sole exception would be a handful of developers that see fit to leverage both a discrete and Intel integrated graphics together due to the sheer market share of this combination. Even then, I wonder how useful leveraging this would actually be in the real world.

WarlockOfOz - Tuesday, March 19, 2019 - link

So long as the IGP provides more benefit than is lost by coordinating the different processors it would be nice to see it used. I'm guessing it's a fairly hard problem to solve at a general level otherwise something like Ryzen Vega + GPU Vega (which I presume to be the easiest to do technically, the easiest to get both sides to agree to and the easiest to benefit from cross-marketing) would be a thing.

CiccioB - Tuesday, March 19, 2019 - link

The cost of an added capable enough GPU that can support real time raytracing without dedicated units is the same as having those units inside the single GPU.

That simplifies everything, from a programming point of view, to the general support (you are not targeting the few 0.x% of users with 2 big enough GPUs), to power consumption to calculation efficiency in general (dedicated units use less energy for the same work and being inside a single GPU does not require the constant copy of data forth and back between the two GPU).

You may think that this will not be an issue when chiplets will be in use for MCM architectures, but again, implementing the dedicated units near the general purpose ALUs (and filtering cores) just increases efficiency a lot.

shing3232 - Tuesday, March 19, 2019 - link

but it would subject to same bandwidth constrain and power. They would rather to have another dedicated RT chip until they reach 7nm

CiccioB - Monday, March 18, 2019 - link

The same that was and is available with Pascal and Volta, that is not enough raw power to do anything serious with RT.

blppt - Monday, March 18, 2019 - link

In a way, it makes sense from a business point of view---allow the Pascal user to experience the eye candy of enabled RT, at a framerate so bad that they'll be more inclined to dump money on the RTX upgrade.