Comparing Two 1TB NVMe Drives with Same NAND, Same Controller: XPG SX8200 Pro vs HP EX950

by Billy Tallis on February 6, 2019 11:30 AM EST

Whole-Drive Fill: Testing SLC Cache Size

Most modern drives designate part of their flash as an 'SLC cache' to accelerate writes. This portion operates in single-bit-per-cell mode, which allows for faster reads and writes. It isn't used across the whole drive because it stores less data in the same area: TLC holds three bits per cell, so 3x the density. During a sustained write, the SLC cache absorbs data at full speed until it fills up. If the write is bigger than the cache and continues without a break, it spills into normal TLC territory, which is slower. When there is a pause in activity, the drive compacts the data in the SLC cache and moves it to TLC blocks, freeing up cache space for future writes. Some drives adjust the size of this SLC cache dynamically based on the amount of free space left, while others give it a fixed capacity.

We test this function as part of our review, to see how different drives react to a sustained write across the drive without a pause.

Testing Our Drives

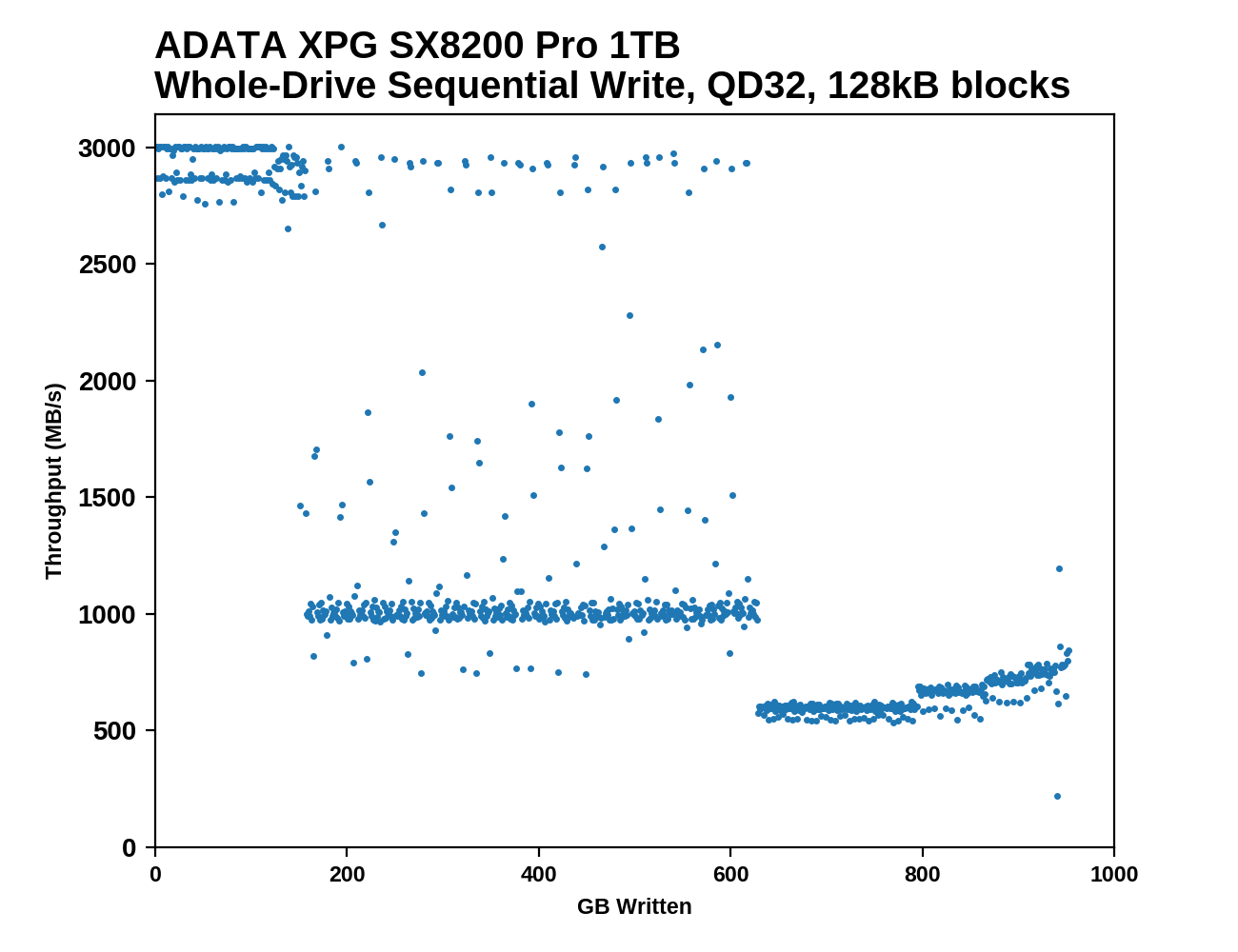

This test starts with a freshly-erased drive and fills it with 128kB sequential writes at queue depth 32, recording the write speed for each 1GB segment. This test is not representative of any ordinary client/consumer usage pattern, but it does allow us to observe transitions in the drive's behavior as it fills up. This can allow us to estimate the size of any SLC write cache, and get a sense for how much performance remains on the rare occasions where real-world usage keeps writing data after filling the cache.
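As a rough illustration of how a cache size can be read off such a trace, the sketch below looks for the first sustained drop in a list of per-gigabyte write speeds. The function name, the `window` and `drop_ratio` parameters, and the synthetic trace are all assumptions made for this example; they are not the tooling used for the charts in this review.

```python
from statistics import median


def estimate_cache_size_gb(speeds_gbps, window=8, drop_ratio=0.6):
    """Return how many 1 GB segments were written before the first sustained drop.

    speeds_gbps[i] is the average write speed (GB/s) of the i-th 1 GB segment.
    Returns None if the trace is too short or no sustained drop is found.
    """
    if len(speeds_gbps) < 2 * window:
        return None
    # Treat the first few segments as the SLC-cache write speed.
    baseline = median(speeds_gbps[:window])
    threshold = baseline * drop_ratio
    for i in range(window, len(speeds_gbps) - window + 1):
        chunk = speeds_gbps[i:i + window]
        # Use the median of a whole window so that one slow segment
        # is not mistaken for the end of the cache.
        if median(chunk) < threshold:
            # The drop starts at the first slow segment inside this window.
            return i + next(k for k, s in enumerate(chunk) if s < threshold)
    return None


# Synthetic trace (hypothetical numbers): 150 segments at 3 GB/s, then 1 GB/s.
trace = [3.0] * 150 + [1.0] * 100
print(estimate_cache_size_gb(trace))
```

Taking the median over a window is a deliberate choice here: real traces contain momentary dips and bursts, and a simple "first segment below X" rule would trigger on those.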

The process of filling up an SM2262EN drive with sequential writes can be divided into three distinct phases. First is writing to the SLC cache at around 3 GB/s. The SM2262EN drives have the largest write caches we've seen in a TLC drive: where the 1TB HP EX920's speed first drops after about 136 GB, the 1TB SX8200 Pro lasts for about 150 GB and the 1TB HP EX950 goes slightly further with its first significant speed drop occurring after 156 GB. At this point, just shy of half of the drive's NAND cells are being treated as SLC.
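The "just shy of half" figure follows from simple arithmetic: a cell in SLC mode holds one bit instead of three, so every gigabyte cached as SLC occupies cells that could hold three gigabytes as TLC. The sketch below works this out for the three cache sizes quoted above. The 1024 GB raw NAND figure is an assumption for a nominal 1TB drive, and the function name is made up for this example.

```python
def nand_fraction_used_as_slc(cache_gb, raw_nand_gb=1024, bits_per_cell=3):
    """Fraction of the drive's cells that are operating in SLC mode.

    cache_gb of data stored one bit per cell occupies as many cells as
    cache_gb * bits_per_cell of data would in TLC mode.
    raw_nand_gb=1024 is an assumed raw capacity for a 1TB drive.
    """
    return cache_gb * bits_per_cell / raw_nand_gb


# Cache sizes are the points of first speed drop quoted in the text.
for name, cache_gb in [("HP EX920", 136), ("XPG SX8200 Pro", 150), ("HP EX950", 156)]:
    print(f"{name}: {nand_fraction_used_as_slc(cache_gb):.0%} of cells in SLC mode")
```

Under these assumptions all three drives land between roughly 40% and 46%, which matches the description of the cache as just under half of the NAND.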

Once that fills up, we see a middle phase where the writes mostly bypass the SLC cache and go straight to TLC, at around 1 GB/s for the 1TB models and 1.5 GB/s for the 2TB. During this middle phase there is some background work to flush the SLC cache which occasionally gets in the way of writing new data, but also means there are frequent momentary bursts back up to SLC write speed.

The final phase occurs when the TLC portion of the drive fills up and the drive has to start shrinking the SLC cache. Each new chunk of data the host sends requires the controller to free up some space by folding data in the SLC cache into TLC blocks, and these competing processes impose a significant performance hit now that the folding cannot be treated as low-priority. However, as the drive approaches 100% full, the SLC cache shrinks and performance creeps back up toward what we saw during the middle phase.

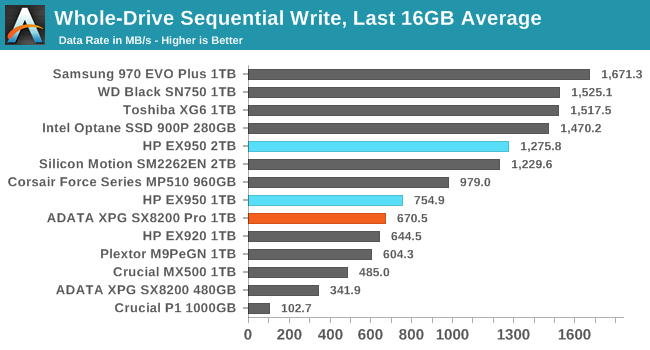

| Average Throughput for last 16 GB | Overall Average Throughput |

The SM2262EN drives improve over their predecessors in post-SLC write speeds, but still lag far behind most other high-end TLC drives. The performance stays above SATA speeds for the entire drive fill process, but Samsung, Toshiba and Western Digital all have TLC drives that can maintain 1.5GB/s. The 2TB EX950 averages about that speed during the middle phase of writing directly to TLC, but its overall average is only about 1.3GB/s, and the 1TB models average under 1GB/s.
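It may help to see why a fast SLC phase does so little for the overall average: the average is total data divided by total time, so the slow phases dominate because the drive spends far longer in them. The sketch below uses hypothetical phase sizes and speeds loosely modeled on the three phases described earlier; only the roughly 3 GB/s and 1 GB/s figures come from the text, and the third-phase numbers are invented for illustration.

```python
def overall_average_gbps(phases):
    """Average throughput over a whole fill.

    phases is a list of (gigabytes_written, speed_gbps) tuples.
    The result is total data over total time, not the mean of the speeds.
    """
    total_gb = sum(gb for gb, _ in phases)
    total_seconds = sum(gb / speed for gb, speed in phases)
    return total_gb / total_seconds


# Hypothetical 1TB fill: SLC cache, direct-to-TLC, then folding under pressure.
phases = [
    (150, 3.0),  # SLC cache phase
    (550, 1.0),  # middle phase, writing straight to TLC
    (300, 0.6),  # final phase (size and speed are assumed values)
]
print(round(overall_average_gbps(phases), 2))
```

With these made-up numbers the drive spends 50 seconds in the cache phase and over 1000 seconds in the other two, so the overall average falls below 1 GB/s even though the fill starts at 3 GB/s.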

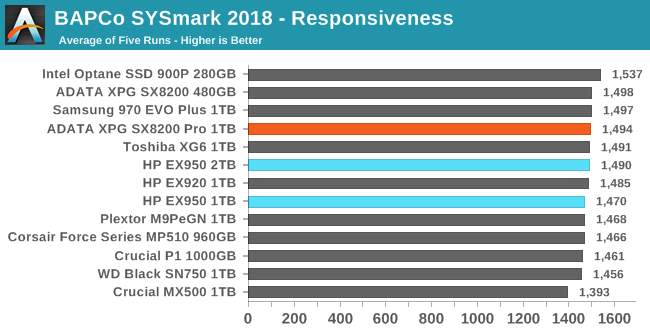

BAPCo SYSmark 2018

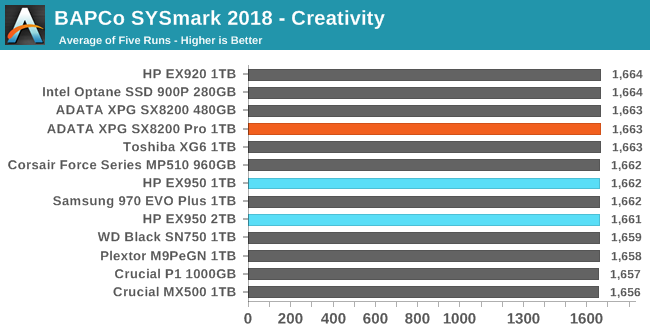

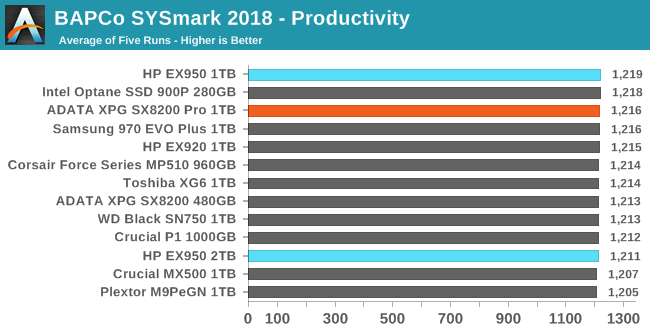

BAPCo's SYSmark 2018 is an application-based benchmark that uses real-world applications to replay usage patterns of business users, with subscores for productivity, creativity and responsiveness. Scores represent overall system performance and are calibrated against a reference system that is defined to score 1000 in each of the scenarios. A score of, say, 2000, would imply that the system under test is twice as fast as the reference system.

SYSmark scores are based on total application response time as seen by the user, including not only storage latency but time spent by the processor. This means there's a limit to how much a storage improvement could possibly increase scores, because the SSD is only in use for a small fraction of the total test duration. This is a significant difference from our ATSB tests where only the storage portion of the workload is replicated and disk idle times are cut short to a maximum of 25ms.
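The ceiling described here is an instance of Amdahl's law: if storage accounts for only a small share of total response time, even an infinitely fast drive can only remove that share. The sketch below is an illustration under two assumptions that do not come from BAPCo: that a score scales inversely with total response time (consistent with "2000 means twice as fast"), and that storage makes up 5% of the run, a made-up figure.

```python
def overall_speedup(storage_fraction, storage_speedup):
    """Amdahl's law: only the storage-bound share of the run gets faster."""
    return 1.0 / ((1.0 - storage_fraction) + storage_fraction / storage_speedup)


def projected_score(baseline_score, storage_fraction, storage_speedup):
    # Assumption: score is inversely proportional to total response time.
    return baseline_score * overall_speedup(storage_fraction, storage_speedup)


# Hypothetical: storage is 5% of total response time on the baseline system.
print(round(projected_score(1000, 0.05, 10)))            # drive 10x faster
print(round(projected_score(1000, 0.05, float("inf"))))  # infinitely fast drive
```

Under these assumptions a tenfold faster drive moves the score from 1000 to about 1047, and no drive could push it past about 1053.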

| AnandTech SYSmark SSD Testbed | |
|---|---|
| CPU | Intel Core i5-7400 |
| Motherboard | ASUS PRIME Z270-A |
| Chipset | Intel Z270 |
| Memory | 2x 8GB Corsair Vengeance DDR4-2400 CL17 |
| Case | In Win C583 |
| Power Supply | Cooler Master G550M |
| OS | Windows 10 64-bit, version 1803 |

Our SSD testing with SYSmark uses a different test system than the rest of our SSD tests. This machine is set up to measure total system power consumption rather than just the drive's power.

Storage improvements make little to no difference in SYSmark performance on our quad-core test system with integrated graphics. Only the responsiveness test shows a spread of scores that's wider than the normal variation between runs, and the gap between a SATA SSD and an Intel Optane SSD is still only 10%, with high-end TLC NVMe drives falling in the middle.

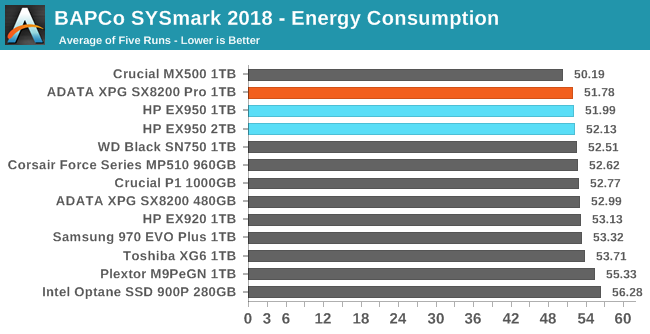

Energy Usage

The SYSmark energy usage scores measure total system power consumption, excluding the display. Our SYSmark test system idles at around 26 W and peaks at over 60 W measured at the wall during the benchmark run. SATA SSDs seldom exceed 5 W and idle at a fraction of a watt, and the SSDs spend most of the test idle. This means the energy usage scores will inevitably be very close. A typical notebook system will tend to be better optimized for power efficiency than this desktop system, so the SSD would account for a much larger portion of the total and the score difference between SSDs would be more noticeable.
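To put rough numbers on that argument, the sketch below compares how much a fixed difference in SSD power shifts total energy use on a desktop versus a notebook. Every wattage in it is a hypothetical average chosen for illustration (the text only gives the desktop's 26 W idle and 60 W peak), and the function name is invented for this example.

```python
def energy_change_from_ssd_swap(system_avg_w, ssd_a_avg_w, ssd_b_avg_w):
    """Relative change in total energy when SSD A is replaced by SSD B.

    system_avg_w is the average system power with SSD A installed.
    Assumes the benchmark takes the same time with either drive.
    """
    return (ssd_b_avg_w - ssd_a_avg_w) / system_avg_w


# Hypothetical averages: SSD B draws 0.5 W more than SSD A over the run.
desktop = energy_change_from_ssd_swap(system_avg_w=30.0, ssd_a_avg_w=0.5, ssd_b_avg_w=1.0)
notebook = energy_change_from_ssd_swap(system_avg_w=8.0, ssd_a_avg_w=0.5, ssd_b_avg_w=1.0)
print(f"desktop: {desktop:.1%}, notebook: {notebook:.1%}")
```

The same half-watt gap is a much larger share of the notebook's total, which is why score differences between SSDs would be more visible on a power-optimized system.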

The energy consumption scores show more meaningful differences between drives than the performance scores, though it's still not enough to matter to desktop use. The NVMe drives are all still clearly more power-hungry than decent SATA drives, but the SM2262EN drives close the gap slightly.

42 Comments

wolfesteinabhi - Wednesday, February 6, 2019 - link

this is a bit of worrying trend where we are getting same products with new/updated firmware...the firmware that was essentially free earlier...and get improved performance...now they have to pay and buy new hardware for it.

ERJ - Wednesday, February 6, 2019 - link

When is the last time you updated the firmware on your hard drive? RAID card / BIOS / Video Card sure, but hard drive?

Now, you could argue that the controller is essentially part of the hard drive in this case but still.

jeremyshaw - Wednesday, February 6, 2019 - link

Hard drive? They aren't that old, right? You do remember HDD firmware updates. As for SSDs, I've recently updated the firmware on my SSDs. Heck, even my monitor has been through a firmware update. Like the SSD updates, the monitor firmware affected performance and compatibility.

jabber - Thursday, February 7, 2019 - link

I used to worry about SSD firmware updates when I was getting in 1 every blue moon and it had novelty value but 200+ SSDs later I now just don't bother. At the end of the day getting an extra 30MBps just isnt the boost it was all those years ago.

ridic987 - Wednesday, February 6, 2019 - link

We are discussing SSD's not hard drive. Literally the thing what he is saying most applies to.

Samus - Thursday, February 7, 2019 - link

I agree, the lack of SSD firmware updates - particularly what WD has pulled with the Black SSD's - is troubling. To artificially limit product improvement through restricting software updates and requiring the user to purchase an entirely new product sets a bad precedent. They could at least do what HP does in the server market and charge for support beyond the warranty. After a server warranty is up (typically 3 years) you have to pay for firmware and BIOS updates for servers. This isn't a terrible policy, at least it wasn't until meltdown\spectre hit and all the sudden it seemed somewhat necessary to update server firmware that were many years old.

Hard drives get firmware updates all the time - just not from the manufacturers. They typically applicable to retail products for reasons. But go ahead and look up a random OEM PC's drivers from Dell or HP and you might see various firmware updates available for the hard disk models those PC's shipped with.

Are they important though? Rarely. Barring any significant bug, hard disks have little to benefit from firmware updates as they are so mechanically limited and the controllers are generally quite mature, having been based on incremental generations of past firmware. This could all change as MAMR and HAMR become more mainstream, the same way the only hard disk firmware I remember being common were the WD Black2 (the SSD+HDD hybrid) and various Seagate SSHD's - for obvious reasons. The technology was new, and there are benefits to updating the SSD firmware as improvements are made through testing and customer feedback.

melgross - Thursday, February 7, 2019 - link

I think of it the other way around. Unless there’s a serious bug in the firmware, firmware upgrades are a gift, that manufacturers don’t have to give.

The fact is that even when they are available, almost no one applies them. That’s true even for most who know they’re available. It’s always dangerous to upgrade firmware on a product so central to needs. If there’s an unfounded bug in the new upgrade, that could be worse than firmware that’s already working just fine, but isn’t quite as fast as you might wish for.

I’ve never seen any major upgrade in performance from a drive firmware upgrade. Ever.

FullmetalTitan - Thursday, February 7, 2019 - link

Those of us around for sandforce controllers remember well the pains of updating SSD firmware to make our drives useable

DigitalFreak - Wednesday, February 6, 2019 - link

Billy - how far are we away from having 4TB M.2 NVMe drives?

Billy Tallis - Wednesday, February 6, 2019 - link

Most SSD vendors could produce 4TB double-sided M.2 drives using off the shelf parts. Putting 4TB on a single-sided module would require either going with a DRAMless controller, stacking DRAM and the controller on the same package, or using 1Tb+ QLC dies instead of TLC.

So currently any 4TB M.2 drive would have at least some significant downside that either compromises performance, restricts compatibility, or drives the price up well beyond twice that of a 2TB M.2 drive. There's simply not enough demand for such drives, and likely won't be anytime soon.