The AMD Radeon RX 590 Review, feat. XFX & PowerColor: Polaris Returns (Again)

by Nate Oh on November 15, 2018 9:00 AM EST

Closing Thoughts

As we bring this to a close, the Radeon RX 590 features few, if any, surprises, indeed outperforming the RX 580 via higher clockspeeds but at the cost of additional power consumption. This is enabled by being fabbed on 12nm and being afforded higher TBPs, but in terms of overall effect, the port to 12nm is less of a die shrink and more of a new stepping; in terms of transistor count and die size Polaris 30 is identical to Polaris 10. Feature-wise, whereas the RX 500 series did introduce a new mid-power memory state, the RX 590 doesn't add anything.

Altogether, AMD is helping push the RX 590 along with a three-game launch bundle and emphasizing the value of FreeSync monitors. One might add that the RX 590 also makes the most out of the game bundle, which now presents itself as a direct value-add for the card. But the card by itself isn't providing more bang for your buck than the RX 580.

By the numbers, then, where does the RX 590 land? Reference-to-reference, the RX 590 is about 12% faster than the RX 580 at 1080p/1440p, and 14-15% faster than the RX 480. Across the aisle, this turns out to be 9% faster than the reference GTX 1060 6GB, though the GeForce card still takes the lead in games like GTA V and Total War: Warhammer II. That matchup itself is more-or-less an indicator of how far Polaris has come - the RX 480 at launch was 11% behind the GTX 1060 6GB, and the RX 580 at launch was 7% behind. Improvements have come over the years with drivers and such, where the RX 480 and RX 580 are much closer, but the third time around, Polaris can finally claim the victory at launch.

That being said, the 9% margin is well within reach of factory-overclocked GTX 1060 6GB cards, especially with a 9Gbps option that the RX 590 doesn't have. Even with the XFX Radeon RX 590 Fatboy the delta only increases to about 11%. In other words, as custom factory-overclocked cards the RX 590 and GTX 1060 6GB are likely to trade blows. And heavily factory-overclocked RX 580s are in a similar situation.

But similar to the RX 580 and 570, the RX 590 achieves this at the cost of even more power consumption. In practical situations like in Battlefield 1, the RX 590 results in the system pulling around 110W more from the wall than with the GTX 1060 6GB. The RX 590 is not a power-efficient card at the intended clocks and TBP, and so won't be suitable for SFF builds or for reducing air conditioning usage.

Once again though, we've observed that VRAM is never enough. It was only a few years ago that 8GB of VRAM was considered excessive, only useful for 4K. But especially with the popularity of HD texture packs, even 6GB of framebuffer could prove limiting at 1080p with graphically demanding games. In that respect, the RX 590's 8GB keeps it covered but also brings additional horsepower over the 8GB R9 390. For memory-hogging games like Shadow of War, Wolfenstein II, or now Far Cry 5 (with HD textures), the 8GB goes a long way. Even at 1080p, GTX 980/970 performance in Wolfenstein II tanks because of the lack of framebuffer. For those looking to upgrade from 2GB or 4GB cards, both the RX 580 and RX 590 should be of interest.

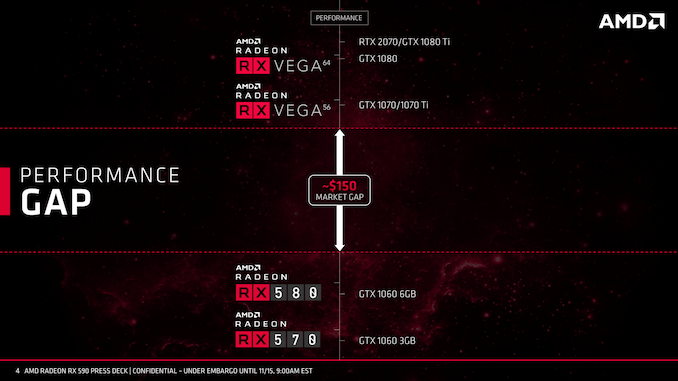

So in terms of a performance gap, the custom RX 590s seem poised to fill in, though it might be in the range of 5 to 10% when comparing to existing heavily factory-overclocked RX 580s. That gap, of course, would likely have been filled by a 'Vega 11' product, which never came to fruition. Beyond a smaller discrete Vega GPU, 12nm Vega seems less likely given that 14nm+ Vega is no longer on the roadmap. And with 7nm Vega announced as Radeon Instinct MI60 and MI50 accelerators, there's no current indication of those GPUs coming into the consumer space. We also know very little about Navi other than being fabbed with TSMC on 7nm and due in 2019.

So of the possible cards in AMD’s deck, we are seeing a single 12nm Polaris SKU slotting in above the existing RX 580. If there are no further refreshes coming, then gamers will naturally ask when Polaris will be replaced as AMD's mainstream offering, as otherwise the RX 500 series will be holding the fort until then. If this is a prelude to further refreshes, then it becomes a question of how much more performance can be squeezed from Polaris.

The bigger picture is that from the consumer point-of-view, RX Vega in August 2017 was the most recent video card launch before today. There are more pressing concerns in the present: NVIDIA's recently-launched Turing-based GeForce RTX 20 series, as well as Intel's renewed ambition for discrete graphics and subsequent graphics talent leaving AMD for Intel. While the RX 590 avoids the optics of having no hardware response whatsoever in the wake of the RTX 20 cards, it is still a critical juncture with respect to DirectX Raytracing (DXR) development. And regardless, Intel has announced 2020 as the date for their modern discrete GPUs.

So as we head into 2019, it will be a crucial year for AMD and RTG. But returning to the Radeon RX 590, it applies pressure to the GTX 1060 6GB and older GTX 900 series cards, while avoiding direct pressure on existing RX 580 inventory by virtue of pricing. And right now, without anything to compete with the GTX 1080 Ti/RTX 2070 range or above, AMD is likely more than happy to take any advantage where they can. For now, though, much depends on the pricing of top-end RX 580s.

136 Comments

El Sama - Thursday, November 15, 2018 - link

To be honest I believe that the GTX 1070/Vega 56 is not that far away in price and should be considered as the minimum investment for a gamer in 2019.

Dragonstongue - Thursday, November 15, 2018 - link

over $600 for a single GPU V56, no thank you..even this 590 is likely to be ~440 or so in CAD, screw that noise.

minimum for a gamer with deep pockets, maybe, but that is like the price of good cpu and motherboard (such as Ryzen 2700)

Cooe - Thursday, November 15, 2018 - link

Lol it's not really the rest of the world's fault the Canadian Dollar absolutely freaking sucks right now. Or AMD's for that matter.

Hrel - Thursday, November 15, 2018 - link

Man, I still have a hard 200 dollar cap on any single component. Kinda insane to imagine doubling that!

I also don't give a shit about 3d, virtual anything or resolutions beyond 1080p. I mean ffs the human eye can't even tell the difference between 4k and 1080, why is ANYONE willing to pay for that?!

In any case, 150 is my budget for my next GPU. Considering how old 1080p is that should be plenty.

igavus - Friday, November 16, 2018 - link

4k and 1080p look pretty different. No offence, but if you can't tell the difference, perhaps it's time to schedule a visit with an optometrist? Nevermind 4K, the rest of the world will look a lot better also if your eyes are okay :)

Great_Scott - Friday, November 16, 2018 - link

My eyes are fine. The sole advantage of 4K is not needing to run AA. That's about it.

Anyone buying a card just so they can push a solid framerate on a 4K monitor is throwing money in the trash. Doubly so if they aren't 4->1 interpolating to play at 1K on a 4K monitor they needed for work (not gaming, since you don't need to game at 4K in the first place).

StevoLincolnite - Friday, November 16, 2018 - link

There is a big difference between 1080P and 4k... But that is entirely depending on how large the display is and how far you sit away from said display.

Otherwise known as "Perceived Pixels Per Inch".

With that in mind... I would opt for a 1440P panel with a higher refresh rate than 4k every day of the week.

wumpus - Saturday, November 17, 2018 - link

Depends on the monitor. I'd agree with you when people claim "the sweet spot of 4k monitors is 28 inches". Maybe the price is good, but my old eyes will never see it. I'm wondering if a 40" 4k TV will make more sense (the dot pitch will be lower than my 1080P, but I'd still likely notice lack of AA).

Gaming (once you step up to the high end GPUs) should be more immersive, but the 2d benefits are probably bigger.

Targon - Saturday, November 17, 2018 - link

There are people who notice the differences, and those who do not. Back in the days of CRT monitors, most people would notice flicker with a 60Hz monitor, but wouldn't notice with 72Hz. I always found that 85Hz produced less eye strain.

There is a huge difference between 1080p and 2160p in terms of quality, but many games are so focused on action that the developers don't bother putting in the effort to provide good quality textures in the first place. It isn't just about not needing AA as much as about a higher pixel density and quality with 4k. For non-gaming, being able to fit twice as much on the screen really helps.

PeachNCream - Friday, November 16, 2018 - link

I reached diminishing returns at 1366x768. The increase to 1080p offered an improvement in image quality mainly by reducing jagged lines, but it wasn't anything to get astonished about. Agreed that the difference between 1080p and 4K is marginal on smaller screens and certainly not worth the added demand on graphics power to push the additional pixels.