The Crucial P1 1TB SSD Review: The Other Consumer QLC SSD

by Billy Tallis on November 8, 2018 9:00 AM EST

Power Management Features

Real-world client storage workloads leave SSDs idle most of the time, so the active power measurements presented earlier in this review only account for a small part of what determines a drive's suitability for battery-powered use. Especially under light use, the power efficiency of an SSD is determined mostly by how well it can save power when idle.
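To see why idle behavior dominates under light use, consider a simple duty-cycle estimate in which average power is the time-weighted mix of active and idle draw. This is an illustrative sketch only. The 3 W active figure and the 1% busy fraction are assumed values chosen for the arithmetic. The two idle levels borrow the P1's advertised PS 3 and PS 4 limits, which are not measurements from this review.

```python
def average_power_w(active_w: float, idle_w: float, active_fraction: float) -> float:
    """Time-weighted average power for a drive busy `active_fraction` of the time."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return active_w * active_fraction + idle_w * (1.0 - active_fraction)


# Assumed, hypothetical light workload: busy 1% of the time at 3 W.
ACTIVE_W = 3.0
ACTIVE_FRACTION = 0.01

shallow = average_power_w(ACTIVE_W, 0.050, ACTIVE_FRACTION)  # idling at 50 mW
deep = average_power_w(ACTIVE_W, 0.004, ACTIVE_FRACTION)     # idling at 4 mW

print(f"shallow idle: {shallow * 1000:.1f} mW average")
print(f"deep idle:    {deep * 1000:.1f} mW average")
```

With these assumed numbers, the average drops from roughly 80 mW to about 34 mW. The active draw is unchanged in both cases, so the difference comes entirely from the idle state.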

For many NVMe SSDs, the closely related matter of thermal management can also be important. M.2 SSDs can concentrate a lot of power in a very small space. They may also be used in locations with high ambient temperatures and poor cooling, such as tucked under a GPU on a desktop motherboard, or in a poorly-ventilated notebook.

| Crucial P1 NVMe Power and Thermal Management Features | | |
|---|---|---|
| Controller | Silicon Motion SM2263 | |
| Firmware | P3CR010 | |
| NVMe Version | Feature | Status |
| 1.0 | Number of operational (active) power states | 3 |
| 1.1 | Number of non-operational (idle) power states | 2 |
| | Autonomous Power State Transition (APST) | Supported |
| 1.2 | Warning Temperature | 70 °C |
| | Critical Temperature | 80 °C |
| 1.3 | Host Controlled Thermal Management | Supported |
| | Non-Operational Power State Permissive Mode | Not Supported |

The Crucial P1 includes a fairly typical feature set for a consumer NVMe SSD, with two idle states that should both be quick to get in and out of. The three different active power states probably make little difference in practice, because even in our synthetic benchmarks the P1 seldom draws more than 3-4 W.
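The warning and critical temperatures in the feature table are thresholds that a host can compare against the drive's reported temperature. NVMe expresses the composite temperature and these thresholds in Kelvin. The helper below is a hypothetical sketch that classifies a raw reading against the P1's 70 °C and 80 °C limits. It assumes the common integer offset of 273 for the conversion, and the sample readings are invented.

```python
# Hypothetical helper: NVMe reports the composite temperature and the
# warning/critical thresholds in Kelvin; 273 is an assumed integer offset.
KELVIN_OFFSET = 273

WARNING_C = 70   # Crucial P1 warning temperature (feature table)
CRITICAL_C = 80  # Crucial P1 critical temperature (feature table)


def kelvin_to_celsius(kelvin: int) -> int:
    return kelvin - KELVIN_OFFSET


def thermal_status(composite_kelvin: int) -> str:
    """Classify a composite temperature reading against the P1's thresholds."""
    celsius = kelvin_to_celsius(composite_kelvin)
    if celsius >= CRITICAL_C:
        return "critical"
    if celsius >= WARNING_C:
        return "warning"
    return "normal"


for reading_k in (318, 345, 355):  # invented sample readings
    print(reading_k, "K ->", kelvin_to_celsius(reading_k), "°C:", thermal_status(reading_k))
```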

| Crucial P1 NVMe Power States | | | | |
|---|---|---|---|---|
| Controller | Silicon Motion SM2263 | | | |
| Firmware | P3CR010 | | | |
| Power State | Maximum Power | Active/Idle | Entry Latency | Exit Latency |
| PS 0 | 9 W | Active | - | - |
| PS 1 | 4.6 W | Active | - | - |
| PS 2 | 3.8 W | Active | - | - |
| PS 3 | 50 mW | Idle | 1 ms | 1 ms |
| PS 4 | 4 mW | Idle | 6 ms | 8 ms |

Note that the above tables reflect only the information provided by the drive to the OS. The power and latency numbers are often very conservative estimates, but they are what the OS uses to determine which idle states to use and how long to wait before dropping to a deeper idle state.
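How an OS turns these advertised figures into idle timeouts is up to the OS. One illustration is a heuristic modeled on the one Linux's NVMe driver has used: wait roughly 50 times a state's combined entry and exit latency before dropping into it, so transitions cost only about 2% of the time. The sketch below applies that rule to the P1's advertised numbers. It packs each result assuming the NVMe APST entry layout (target state in bits 7:3, idle time in milliseconds in bits 31:8). This is a simplified model, not the driver's code. The `PowerState` type, the helper names, and the 100 ms latency tolerance are assumptions.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class PowerState:
    index: int
    max_power_w: float
    non_operational: bool  # True for idle (non-operational) states
    entry_latency_us: int
    exit_latency_us: int


# Crucial P1 power states as advertised to the OS; the "-" latencies of the
# active states are modeled as 0 here.
P1_STATES: List[PowerState] = [
    PowerState(0, 9.0, False, 0, 0),
    PowerState(1, 4.6, False, 0, 0),
    PowerState(2, 3.8, False, 0, 0),
    PowerState(3, 0.050, True, 1_000, 1_000),
    PowerState(4, 0.004, True, 6_000, 8_000),
]

MAX_IDLE_MS = (1 << 24) - 1  # the idle-time field is 24 bits wide


def pack_apst_entry(target_state: int, idle_ms: int) -> int:
    """Pack an APST entry: target state in bits 7:3, idle time (ms) in bits 31:8."""
    return (target_state << 3) | (idle_ms << 8)


def build_apst_table(states: List[PowerState], max_exit_latency_us: int) -> Dict[int, int]:
    """Simplified '50x total latency' APST heuristic (hypothetical helper).

    Walks from the deepest state to the shallowest. Each usable idle state
    becomes the target for every shallower state, entered after an idle
    period of ceil((entry + exit latency in us) / 20) ms, i.e. about 50x
    the transition cost.
    """
    table: Dict[int, int] = {}
    target: Optional[int] = None
    for ps in sorted(states, key=lambda s: s.index, reverse=True):
        if target is not None:
            table[ps.index] = target
        if not ps.non_operational or ps.exit_latency_us > max_exit_latency_us:
            continue
        total_us = ps.entry_latency_us + ps.exit_latency_us
        idle_ms = min((total_us + 19) // 20, MAX_IDLE_MS)
        target = pack_apst_entry(ps.index, idle_ms)
    return table


def describe(entry: int) -> str:
    """Decode an entry produced by pack_apst_entry."""
    return f"-> PS {(entry >> 3) & 0x1F} after {entry >> 8} ms idle"


# Assumed latency tolerance of 100 ms (a hypothetical policy choice).
for state, entry in sorted(build_apst_table(P1_STATES, 100_000).items()):
    print(f"PS {state} {describe(entry)}")
```

With the P1's advertised latencies and a 100 ms tolerance, this sketch sends the active states to PS 3 after 100 ms of idle time. It then moves from PS 3 to PS 4 after a further 700 ms.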

Idle Power Measurement

SATA SSDs are tested with SATA link power management disabled to measure their active idle power draw, and with it enabled for the deeper idle power consumption score and the idle wake-up latency test. Our testbed, like any ordinary desktop system, cannot trigger the deepest DevSleep idle state.

Idle power management for NVMe SSDs is far more complicated than for SATA SSDs. NVMe SSDs can support several different idle power states, and through the Autonomous Power State Transition (APST) feature the operating system can set a drive's policy for when to drop down to a lower power state. There is typically a tradeoff in that lower-power states take longer to enter and wake up from, so the choice of which power states to use may differ between desktops and notebooks.
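The desktop-versus-notebook tradeoff can be sketched as a latency tolerance. For each policy, pick the lowest-power idle state whose advertised exit latency fits within what that system is willing to wait. The sketch below uses the P1's advertised idle states. The two tolerance values (2 ms and 25 ms) and the function name are hypothetical and stand in for whatever an actual OS policy would choose.

```python
from typing import NamedTuple, Optional, Sequence


class IdleState(NamedTuple):
    name: str
    power_mw: float
    exit_latency_ms: float


# Idle states as the Crucial P1 advertises them (PS 3 and PS 4).
P1_IDLE_STATES = (
    IdleState("PS 3", 50.0, 1.0),
    IdleState("PS 4", 4.0, 8.0),
)


def deepest_allowed(states: Sequence[IdleState], max_exit_ms: float) -> Optional[IdleState]:
    """Lowest-power idle state whose advertised exit latency fits the tolerance."""
    allowed = [s for s in states if s.exit_latency_ms <= max_exit_ms]
    return min(allowed, key=lambda s: s.power_mw, default=None)


# Hypothetical policies: a desktop that favors responsiveness and a notebook
# that accepts slower wake-ups in exchange for battery life.
POLICIES = {"desktop": 2.0, "notebook": 25.0}

for profile, tolerance_ms in POLICIES.items():
    choice = deepest_allowed(P1_IDLE_STATES, tolerance_ms)
    print(profile, "->", choice.name if choice is not None else "stay active")
```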

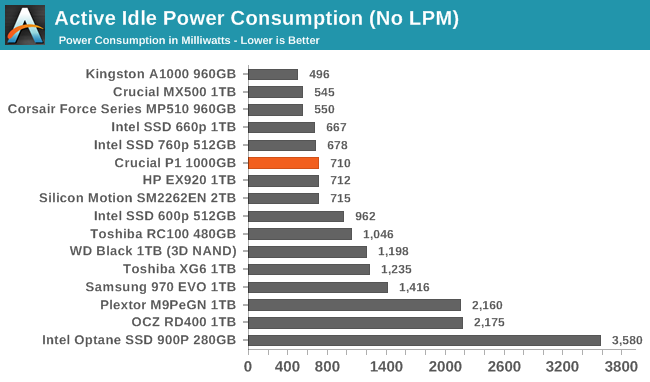

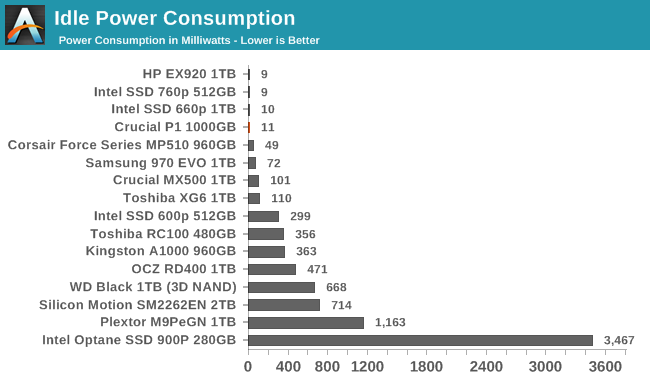

We report two idle power measurements. Active idle is representative of a typical desktop, where none of the advanced PCIe link or NVMe power saving features are enabled and the drive is immediately ready to process new commands. The idle power consumption metric is measured with PCIe Active State Power Management L1.2 state enabled and NVMe APST enabled if supported.

The idle power consumption numbers from the Crucial P1 match the pattern seen with other recent Silicon Motion platforms. The active idle draw is a bit higher for the P1 than the 660p due to the latter having less DRAM, but both do very well when put to sleep.

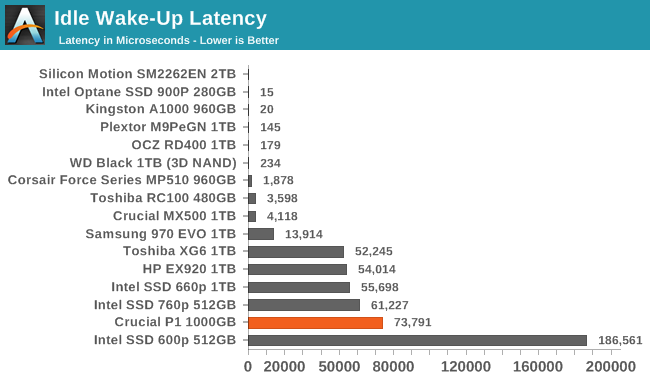

The wake-up latency of over 73 ms for the Crucial P1 is fairly high, and far worse than the worst-case 8 ms exit latency the drive advertises to the operating system. This could lead to responsiveness problems if the OS is misled into choosing an overly aggressive power management strategy.
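The risk of an OS being misled can be made concrete with a small check. A state is a problem when its advertised exit latency fits the OS's tolerance but its real wake-up time does not. This sketch assumes the measured wake-up corresponds to the deepest idle state (PS 4, advertised at 8 ms) and treats the measured "over 73 ms" as a lower bound. The 25 ms tolerance is a hypothetical value.

```python
ADVERTISED_PS4_EXIT_MS = 8.0  # PS 4 exit latency from the P1's power state table
MEASURED_WAKE_MS = 73.0       # "over 73 ms" in this review; treated as a lower bound


def misleads_policy(advertised_ms: float, measured_ms: float, tolerance_ms: float) -> bool:
    """True if a state fits the tolerance on paper but its real wake-up does not."""
    return advertised_ms <= tolerance_ms < measured_ms


ratio = MEASURED_WAKE_MS / ADVERTISED_PS4_EXIT_MS
print(f"measured wake-up is at least {ratio:.1f}x the advertised exit latency")

# Hypothetical 25 ms tolerance: PS 4 is acceptable on paper but not in practice.
print(misleads_policy(ADVERTISED_PS4_EXIT_MS, MEASURED_WAKE_MS, 25.0))
```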

66 Comments


Marlin1975 - Thursday, November 8, 2018

"The company's first attempt at an NVMe drive was ready to hit the market but was canceled when it became clear that it would not have been competitive." Looking at this one, maybe it should follow the same fate. Or the price should be much lower.

StrangerGuy - Thursday, November 8, 2018

The MSRP for the 1TB is a complete non-starter when the excellent EX920 1TB already hit $170, but if the actual price drops to $120 it's definitely appealing, especially for a low write count usage like a Steam install drive.

FullmetalTitan - Thursday, November 8, 2018

The 970 EVO 1TB NVMe was just on sale for $228 on most retail sites in the US. At the same cost/GB and significantly better performance, it isn't even a question what to buy currently.

menting - Thursday, November 8, 2018

Comparing sale price vs retail price is pretty meaningless.

Billy Tallis - Thursday, November 8, 2018

Given how flash memory prices have been dropping, today's sale price is next month's everyday retail price.

DigitalFreak - Friday, November 9, 2018

Just saw the 970 EVO 1TB is $219 at Microcenter. Unless it gets a $50+ price cut immediately, the P1 is DOA.

tokyojerry - Friday, April 19, 2019

Currently $128 at Amazon. As of 4/19/2019 3:14:42 PM

0ldman79 - Monday, November 12, 2018

I just paid $139 for a WD Blue 1TB 3D m.2 a couple of days ago. Haven't even beaten on it thoroughly. In quick testing (video encoding) during the decompress it will sustain 300MBps for a while; not sure if I'm hitting a drive limit, IO limit or CPU limit shortly thereafter. The program starts a few other processes, so I'm thinking CPU.

III-V - Thursday, November 8, 2018

They are supposedly having yield issues. If they resolve those, there is plenty of room for cost to come down.

Oxford Guy - Thursday, November 8, 2018

Look at what happens with DRAM every time. DDR2 comes out and DDR1 becomes more expensive. Rinse, repeat.

QLC may lead to higher TLC prices, if TLC volume goes down and/or gets positioned as a more premium product as manufacturers try to sell us QLC.