The Corsair Force MP510 SSD (960GB) Review: A High-End Contender

by Billy Tallis on October 18, 2018 10:00 AM EST

Sequential Read Performance

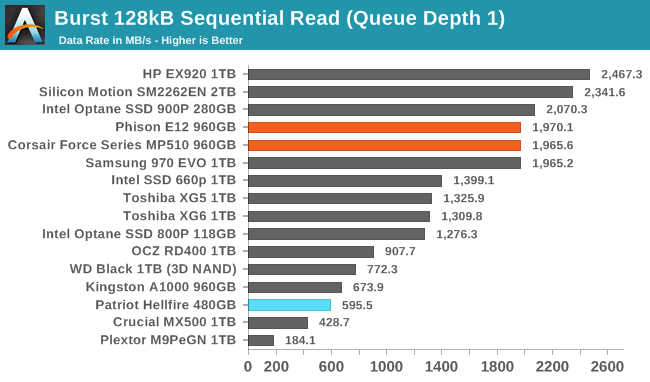

Our first test of sequential read performance uses short bursts of 128MB, issued as 128kB operations with no queuing. The test averages performance across eight bursts for a total of 1GB of data transferred from a drive containing 16GB of data. Between each burst the drive is given enough idle time to keep the overall duty cycle at 20%.
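The burst test's parameters imply a little arithmetic: how many operations make up a burst, how much data a run moves, and how long the drive must idle to hold a 20% duty cycle. The sketch below is a hypothetical illustration, not the actual test harness. It assumes binary units (1MB = 1024kB) and that "duty cycle" means active time divided by active plus idle time. The names `burst_timing` and `idle_time_s` are made up for this example.

```python
# Hypothetical sketch of the burst-test parameters described above.
# Assumes binary units (1MB = 1024kB) and that "duty cycle" means
# active time / (active time + idle time).

BURST_MB = 128   # data per burst
OP_KB = 128      # size of each QD1 operation
BURSTS = 8       # bursts averaged per run


def idle_time_s(active_s: float, duty_cycle: float = 0.20) -> float:
    """Idle time needed after a burst so the overall duty cycle equals duty_cycle."""
    # duty = active / (active + idle)  =>  idle = active * (1 - duty) / duty
    return active_s * (1.0 - duty_cycle) / duty_cycle


def burst_timing(throughput_mb_s: float, duty_cycle: float = 0.20) -> tuple[float, float]:
    """Return (active seconds, idle seconds) for one burst at the given speed."""
    active = BURST_MB / throughput_mb_s
    return active, idle_time_s(active, duty_cycle)


ops_per_burst = BURST_MB * 1024 // OP_KB   # 1024 operations per burst
total_mb = BURST_MB * BURSTS               # 1024MB, i.e. 1GB per run

if __name__ == "__main__":
    active, idle = burst_timing(2000)      # a drive bursting at ~2GB/s
    print(ops_per_burst, total_mb)         # 1024 1024
    print(f"{active:.3f} s active, {idle:.3f} s idle")  # 0.064 s active, 0.256 s idle
```

At a 20% duty cycle the idle period is always four times the active period, so a faster drive also gets a shorter rest between bursts.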

The Patriot Hellfire, in blue, is highlighted as an example of a last-generation Phison E7 drive. Although we didn't test the MP500 at the time, it was based on the same controller and memory.

With a sequential read burst speed just shy of 2GB/s, the Corsair Force MP510 isn't the absolute fastest TLC drive on the market, but there isn't much that can beat it.

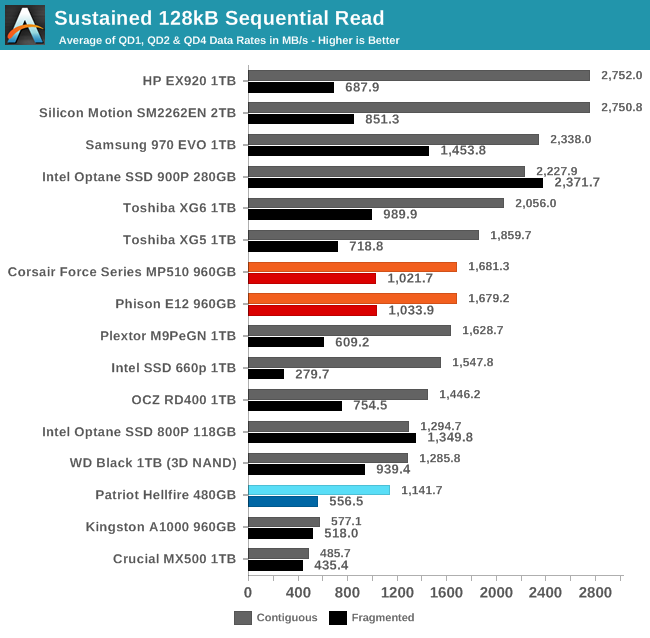

Our test of sustained sequential reads uses queue depths from 1 to 32, with the performance and power scores computed as the average of QD1, QD2 and QD4. Each queue depth is tested for up to one minute or 32GB transferred, from a drive containing 64GB of data. This test is run twice: once with the drive prepared by sequentially writing the test data, and again after the random write test has mixed things up, causing fragmentation inside the SSD that isn't visible to the OS. These two scores represent the two extremes of how the drive would perform under real-world usage, where wear leveling and modifications to some existing data will create some internal fragmentation that degrades performance, but usually not to the extent shown here.
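Because the headline score only averages the three lowest queue depths, a drive that only shines at QD8 and above gets no credit for that in the score. The following is a minimal sketch of that scoring rule. `summary_score` is a hypothetical name, and the throughput figures are invented for illustration, not measurements of any drive.

```python
from statistics import mean

# Hypothetical sketch: the headline score for the sustained sequential tests
# is the average of the QD1, QD2 and QD4 results; higher queue depths are
# measured and plotted but do not count toward the score.
SCORED_QUEUE_DEPTHS = (1, 2, 4)


def summary_score(results_by_qd: dict[int, float]) -> float:
    """Average a per-queue-depth metric (MB/s or W) over QD1, QD2 and QD4."""
    return mean(results_by_qd[qd] for qd in SCORED_QUEUE_DEPTHS)


# Made-up throughput figures in MB/s, for illustration only.
example = {1: 1000, 2: 1200, 4: 1400, 8: 2000, 16: 2500, 32: 3000}
print(summary_score(example))  # 1200
```

The same averaging applies to the power score, so the QD8 to QD32 results influence neither headline number.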

On the longer sequential read test, the MP510's standing falls and it is one of the slower drives in the current high-end NVMe segment. However, it does handle reading fragmented data better than most of its competitors.

Charts: Power Efficiency in MB/s/W and Average Power in W

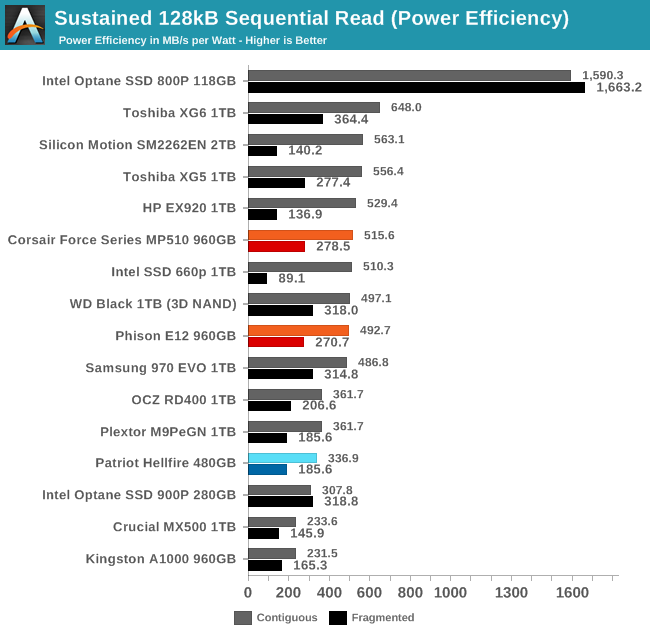

The MP510's power efficiency on the sequential read test is similar to that of most of its high-end competition, but it isn't setting any records.
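The efficiency metric is simply throughput divided by average power, which is why the efficiency and power charts can rank drives differently. A sketch with two imaginary drives (the numbers are invented, not results from this review) shows a drive drawing more power while still coming out ahead on MB/s/W.

```python
# Hypothetical sketch: MB/s/W is throughput divided by average power, so a
# drive can draw more power than a rival and still be more efficient if its
# throughput rises by a larger proportion.

def efficiency_mb_s_per_w(throughput_mb_s: float, avg_power_w: float) -> float:
    """Power efficiency in MB/s per watt."""
    if avg_power_w <= 0:
        raise ValueError("average power must be positive")
    return throughput_mb_s / avg_power_w


# Made-up numbers for two imaginary drives, not measured results.
drive_a = efficiency_mb_s_per_w(2000, 5.0)   # 400.0
drive_b = efficiency_mb_s_per_w(1500, 4.0)   # 375.0
print(drive_a > drive_b)  # True
```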

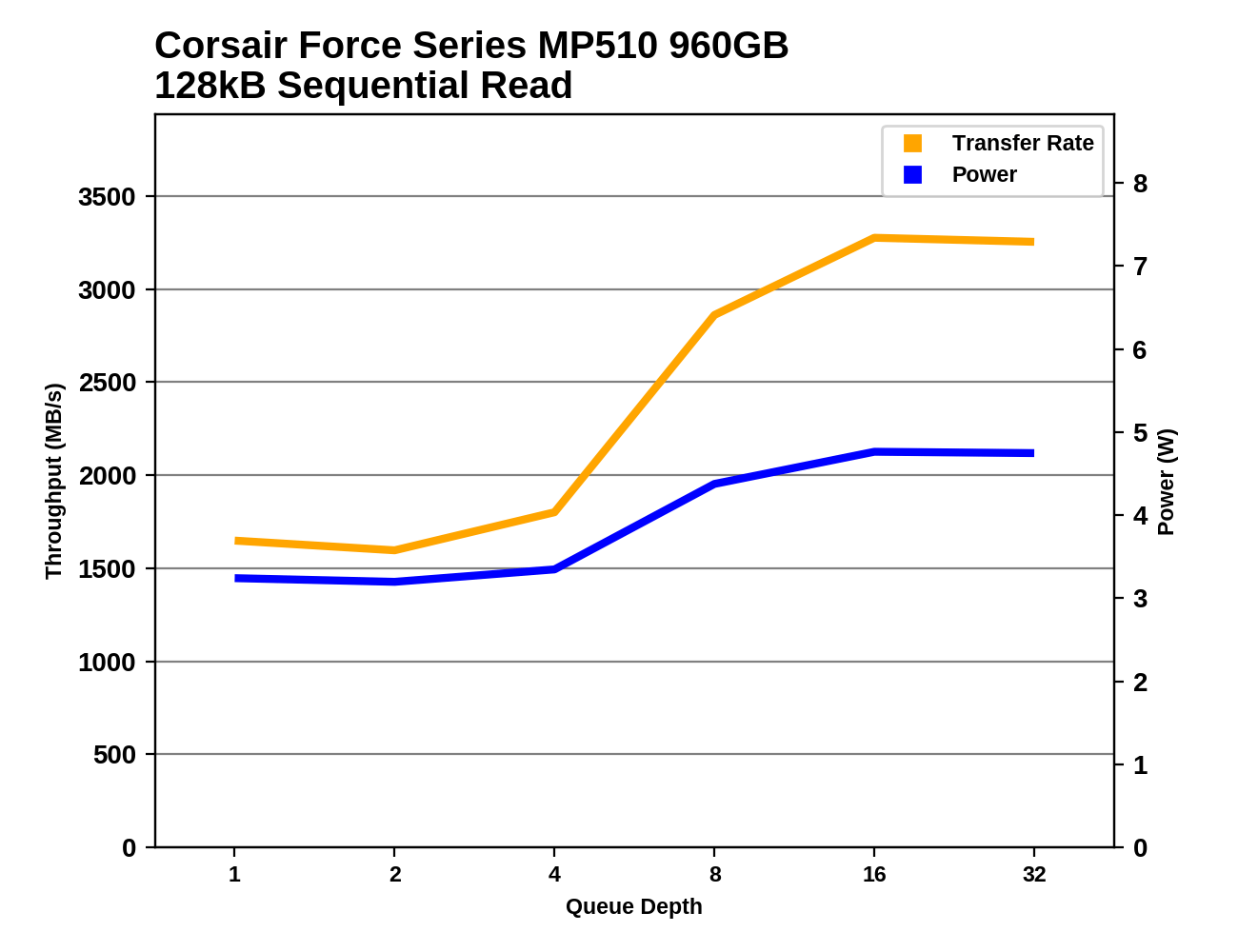

The Corsair Force MP510 hits quite high sequential read speeds when operating at a high enough queue depth, but it doesn't scale well at lower queue depths, with QD4 performance only slightly higher than QD1.
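One way to read poor low-queue-depth scaling is through Little's law: outstanding requests equal throughput times latency. If throughput barely improves from QD1 to QD4, each command must be taking almost four times as long to complete. The sketch below applies that relationship to invented numbers. The 128kB transfer size is an assumption carried over from the burst test, and `implied_latency_us` is a hypothetical helper.

```python
# Hypothetical sketch using Little's law: outstanding requests = throughput x latency.
# Rearranged, the average per-command latency implied by a measurement is
# queue_depth x transfer_size / throughput. If throughput barely rises from QD1
# to QD4, the implied latency of each command must rise almost 4x.

def implied_latency_us(throughput_mb_s: float, queue_depth: int, transfer_kb: float = 128) -> float:
    """Average per-command latency in microseconds implied by Little's law."""
    transfer_mb = transfer_kb / 1024
    return queue_depth * transfer_mb / throughput_mb_s * 1e6


# Made-up figures: QD4 only 10% faster than QD1.
qd1 = implied_latency_us(1000, 1)   # ~125 us
qd4 = implied_latency_us(1100, 4)   # ~455 us
print(round(qd1), round(qd4))
```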

Sequential Write Performance

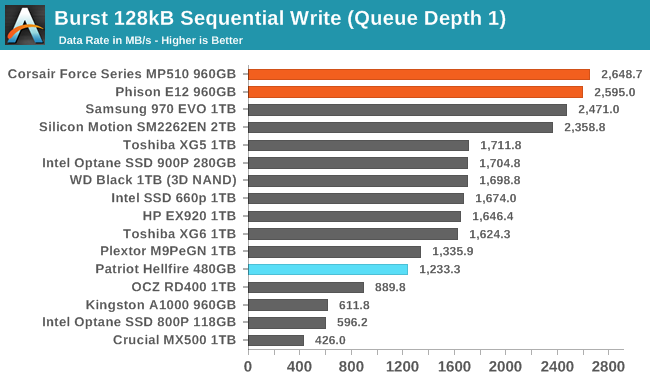

Our test of sequential write burst performance is structured identically to the sequential read burst performance test save for the direction of the data transfer. Each burst writes 128MB as 128kB operations issued at QD1, for a total of 1GB of data written to a drive containing 16GB of data.

The Corsair Force MP510 handles bursts of sequential writes just as well as it does random writes, and its SLC cache sets another record. Only a handful of drives can manage more than 2GB/s for QD1 writes, and the MP510 exceeds 2.6GB/s on this test.

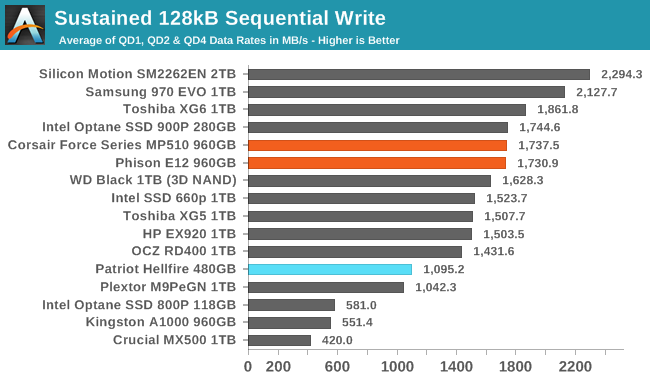

Our test of sustained sequential writes is structured identically to our sustained sequential read test, save for the direction of the data transfers. Queue depths range from 1 to 32 and each queue depth is tested for up to one minute or 32GB, followed by up to one minute of idle time for the drive to cool off and perform garbage collection. The test is confined to a 64GB span of the drive.
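The two per-phase limits interact with drive speed. A fast drive hits the 32GB cap before the minute is up, while a slow one is cut off by the time limit. The sketch below models that schedule. It assumes binary units and assumes the queue depths between 1 and 32 are powers of two; the text only gives the range. All names are hypothetical.

```python
# Hypothetical sketch of the sustained-write schedule: each queue depth runs
# until one minute has elapsed or 32GB has been written, whichever comes first,
# followed by up to one minute of idle time. The exact list of queue depths
# between 1 and 32 is an assumption (powers of two).

QUEUE_DEPTHS = (1, 2, 4, 8, 16, 32)
MAX_PHASE_S = 60.0
MAX_PHASE_MB = 32 * 1024   # 32GB, assuming binary units
MAX_IDLE_S = 60.0


def phase_duration_s(throughput_mb_s: float) -> float:
    """How long one queue-depth phase lasts at a steady throughput."""
    return min(MAX_PHASE_S, MAX_PHASE_MB / throughput_mb_s)


def worst_case_test_length_s() -> float:
    """Upper bound: every phase hits the time limit and uses the full idle period."""
    return len(QUEUE_DEPTHS) * (MAX_PHASE_S + MAX_IDLE_S)


print(phase_duration_s(2048))       # 16.0: the 32GB cap ends the phase first
print(phase_duration_s(500))        # 60.0: the one-minute cap ends the phase first
print(worst_case_test_length_s())   # 720.0
```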

On the longer sequential write test that adds in some higher queue depths, the MP510 falls out of first place but stays in a fairly high performance tier.

Charts: Power Efficiency in MB/s/W and Average Power in W

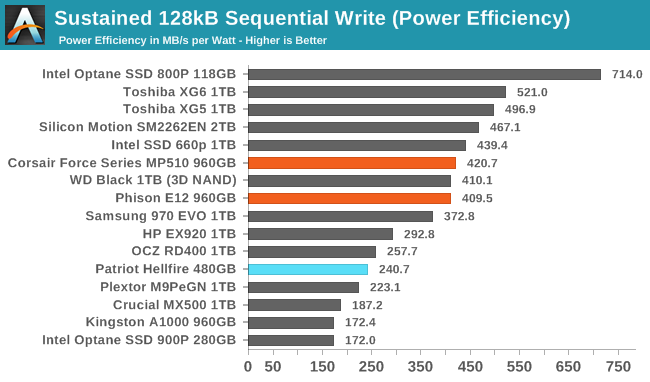

The power efficiency of the MP510 during sequential writes is well below what the BiCS TLC NAND can manage when paired with Toshiba's controller, but still above average when considering the broader field of competition.

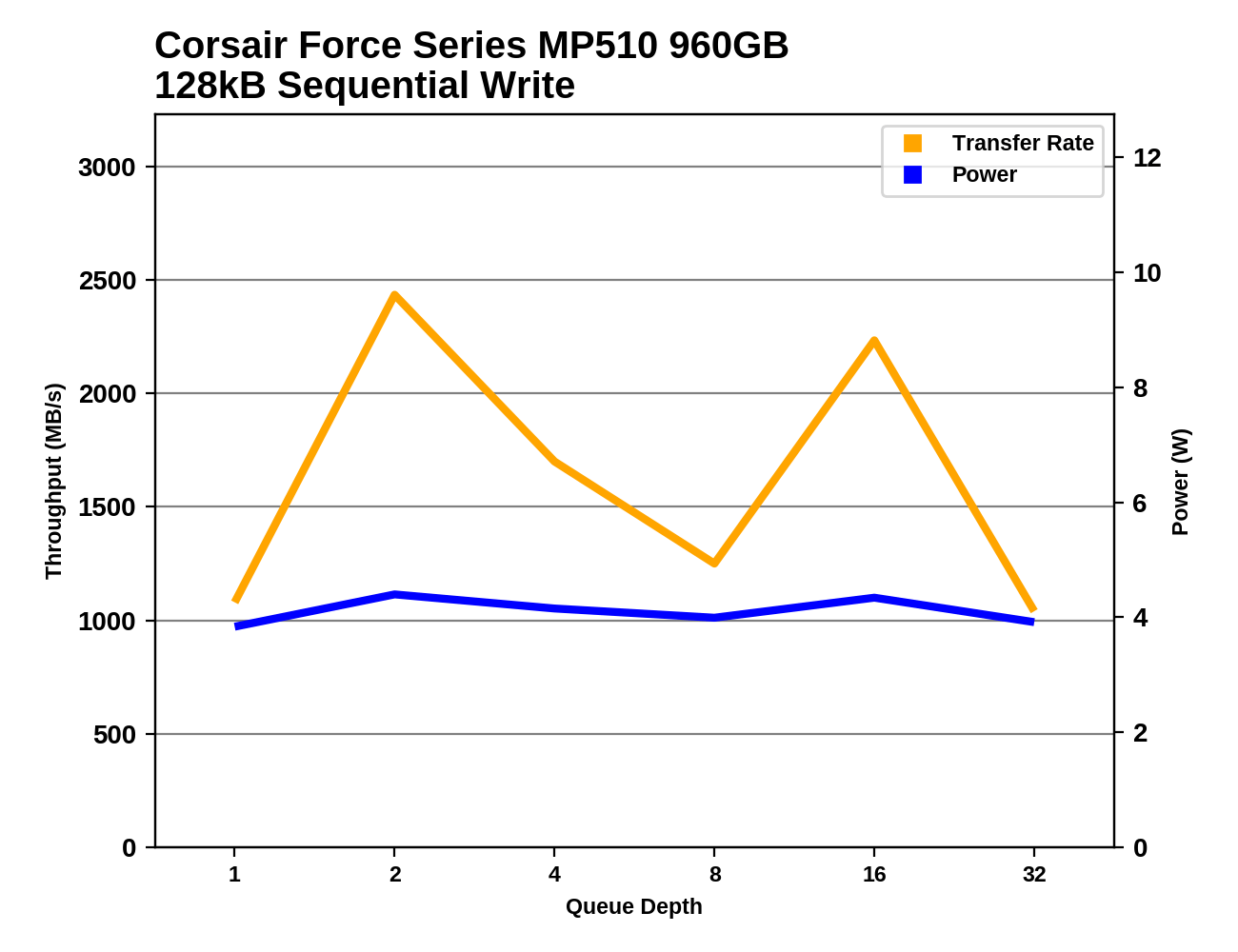

The Corsair Force MP510 shows unsteady performance during the sequential write test, indicating that its very fast SLC write cache does fill up, which has a significant but temporary impact on performance. At its worst, the MP510 still averages more than 1GB/s of writes, so filling up the SLC cache doesn't ruin performance.
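Why a full cache hurts without ruining the average can be seen from a simplified two-speed model. Data up to the cache size goes in at the cached speed, and the rest goes in at the slower post-cache speed. The cache size and both speeds below are invented for illustration. The review does not give the MP510's SLC cache size, and the model ignores cache flushing during the idle periods.

```python
# Hypothetical, simplified model of one long sequential write that overflows
# an SLC cache: data up to the cache size is written at the cached speed and
# the remainder at the post-cache speed. It ignores cache flushing during idle
# time, and the cache size and speeds used below are invented, not MP510 specs.

def average_write_mb_s(total_gb: float, cache_gb: float,
                       cached_mb_s: float, post_cache_mb_s: float) -> float:
    """Average throughput over the whole write, in MB/s."""
    cached_gb = min(total_gb, cache_gb)
    overflow_gb = total_gb - cached_gb
    seconds = cached_gb * 1024 / cached_mb_s + overflow_gb * 1024 / post_cache_mb_s
    return total_gb * 1024 / seconds


# Invented example: a 32GB write into a 16GB cache, 2600MB/s cached, 1000MB/s after.
avg = average_write_mb_s(32, 16, 2600, 1000)
print(round(avg))  # lands between the two speeds, well above the post-cache rate
```

Because the average is weighted by time rather than by data, the slow portion pulls the result down more than its share of the data would suggest.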

42 Comments

imaheadcase - Thursday, October 18, 2018

Wow, I had no idea how cheap SSDs have become. You know, they're getting to price points where home servers could soon easily use SSDs instead of mechanical drives.

bill.rookard - Thursday, October 18, 2018

If a 4TB drive becomes somewhat more affordable, then yes, they can. I guess it depends on how big of a server array you have. Personally, I have about 30TB in a 2U server using 4x4TB ZFS + 4x3TB ZFS for 20TB effective. Even a bargain-basement setup of a similar size using the cheapest Micron 1100 2TB SSDs you could find would need 11 of them at $280 each, or just a stitch over $3,000.00. Meanwhile, the drives I used were factory-refurbished enterprise drives, and all 8 of them cost around $500.00.
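The cost comparison above works out as follows. The "+1 parity drive" rule is our assumption, chosen because it reproduces the commenter's count of 11 SSDs for about 20TB usable. The helper names are hypothetical, and the prices are the ones quoted in the comment.

```python
import math

# Hypothetical sketch of the arithmetic above. The "+1 parity drive" assumption
# is ours: it reproduces the commenter's count of 11 x 2TB SSDs for ~20TB usable.

def drives_needed(usable_tb: float, drive_tb: float, parity_drives: int = 1) -> int:
    """Drives for one parity-protected array with the given usable capacity."""
    return math.ceil(usable_tb / drive_tb) + parity_drives


def dollars_per_raw_tb(total_cost: float, raw_tb: float) -> float:
    return total_cost / raw_tb


ssd_count = drives_needed(20, 2)           # 11 drives
ssd_cost = ssd_count * 280                 # $3,080
hdd_raw_tb = 4 * 4 + 4 * 3                 # 28TB raw across the 8 hard drives

print(ssd_count, ssd_cost)                                     # 11 3080
print(round(dollars_per_raw_tb(ssd_cost, ssd_count * 2), 2))   # 140.0
print(round(dollars_per_raw_tb(500, hdd_raw_tb), 2))           # 17.86
```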

nathanddrews - Thursday, October 18, 2018

I'm definitely waiting for larger SSDs to come down in price. I think if we ever get to $100/TB, I'll start to swap out more drives. 2TB for $199 would be great.

I only recently started to experiment with "hybrid" storage on my home server. I've got about 40TB of rust with about 800GB of SSDs (older SSDs that didn't have a home anymore), using software to manage which folders/files are stored/backed up on which drives. UHD Blu-ray and other disc backups go on the slow hard drives (still fast enough to saturate 1GbE), and documents, photos, etc. go on the SSD array. My server doesn't have anything faster than SATA 6Gb/s, but the SSDs are still much quicker for smaller files and random access.

Lolimaster - Thursday, October 18, 2018

I would upgrade to a cheap 2.5-5Gbit NIC.

nathanddrews - Thursday, October 18, 2018

I've already got a couple of 10GbE NICs, just waiting on an affordable switch...

leexgx - Thursday, October 18, 2018

Use a PC :) Here's a YouTube video of a person doing it. You do need to make sure you have the right mobo so it can handle 10Gb speeds between the PCI-E 10Gb cards, or you'll be getting low speeds between cards (still far cheaper than an actual 10Gb switch): https://www.youtube.com/watch?v=p39mFz7ORco

Valantar - Friday, October 19, 2018

You're recommending running a PC 24/7 as a switch to provide >GbE speeds from a NAS? Really?

nathanddrews - Friday, October 19, 2018

LOL that's a good joke! I mean, it's creative, but there's no way I'm doing that. I can wait a little longer to get a proper switch(es).

rrinker - Thursday, October 18, 2018

I'm at the point of contemplating a new server for home, and hybrid was the way I was going to go, since 16TB or so of all-SSD storage is still just too expensive. But 1-2TB of SSD as a fast cache for a bunch of 4TB spinny drives would be relatively inexpensive and offer most of the benefits. And an SSD for the OS drive, of course.

DominionSeraph - Monday, October 22, 2018

Yup, I picked up 24TB for $240. SSDs really can't compete.