The Toshiba XG6 1TB SSD Review: Our First 96-Layer 3D NAND SSD

by Billy Tallis on September 6, 2018 8:15 AM EST

AnandTech Storage Bench - The Destroyer

The Destroyer is an extremely long test replicating the access patterns of very IO-intensive desktop usage. A detailed breakdown can be found in this article. Like real-world usage, the drives do get the occasional break that allows for some background garbage collection and flushing caches, but those idle times are limited to 25ms so that it doesn't take all week to run the test. These AnandTech Storage Bench (ATSB) tests do not involve running the actual applications that generated the workloads, so the scores are relatively insensitive to changes in CPU performance and RAM from our new testbed, but the jump to a newer version of Windows and the newer storage drivers can have an impact.

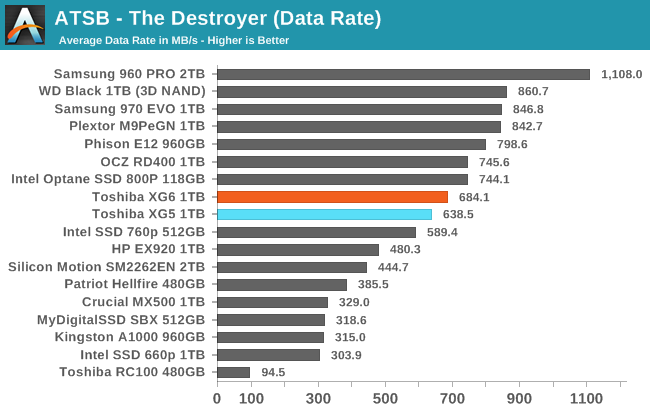

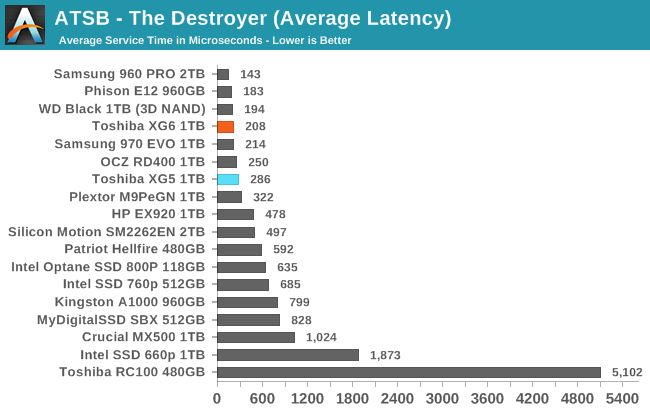

We quantify performance on this test by reporting the drive's average data throughput, the average latency of the I/O operations, and the total energy used by the drive over the course of the test.
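As a rough illustration of how these three figures relate to the raw measurements, here is a minimal Python sketch. It is not the actual ATSB tooling: the `IoRecord` type, the `summarize` function, and the idea of a fixed-interval power log are all assumptions made for this example. How elapsed time is counted (for instance, whether idle periods are excluded) is not specified here, so it is left as the `elapsed_s` parameter.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class IoRecord:
    """One completed I/O from a hypothetical trace replay log."""
    bytes_transferred: int
    latency_s: float  # time from issue to completion, in seconds


def summarize(
    records: Sequence[IoRecord],
    elapsed_s: float,
    power_samples_w: Sequence[float],
    sample_interval_s: float,
) -> Tuple[float, float, float]:
    """Return (average MB/s, average latency in microseconds, energy in joules).

    elapsed_s is whatever time span the throughput is averaged over;
    which periods count toward it is an assumption left to the caller.
    """
    if not records:
        raise ValueError("no I/O records to summarize")

    total_bytes = sum(r.bytes_transferred for r in records)
    avg_throughput_mb_s = total_bytes / elapsed_s / 1e6

    avg_latency_us = sum(r.latency_s for r in records) / len(records) * 1e6

    # Energy is power integrated over time; with evenly spaced samples
    # this reduces to the sum of the samples times the sampling interval.
    energy_j = sum(power_samples_w) * sample_interval_s

    return avg_throughput_mb_s, avg_latency_us, energy_j
```

Energy is reported as a total rather than as average power, so a drive that finishes the same work sooner can come out ahead even if it draws more power while busy.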

The Toshiba XG6 is slightly faster than the XG5 on The Destroyer. It still trails the fastest retail SSDs, but at twice the speed of a mainstream SATA drive, it's well into high-end territory.

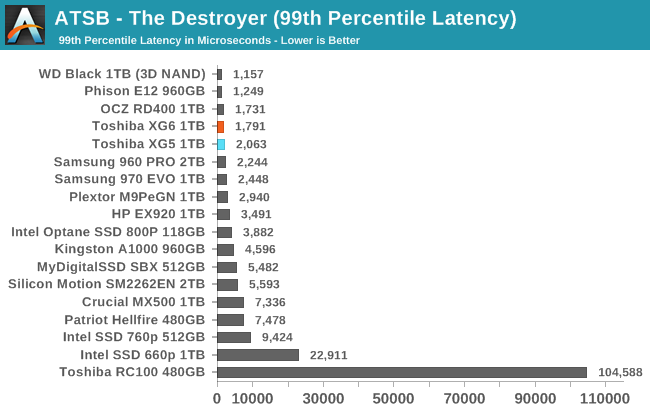

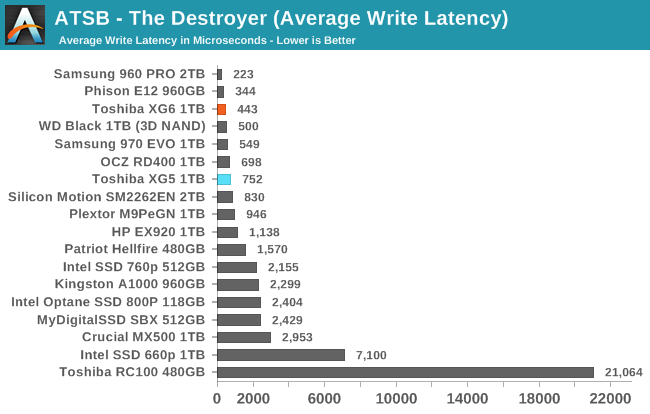

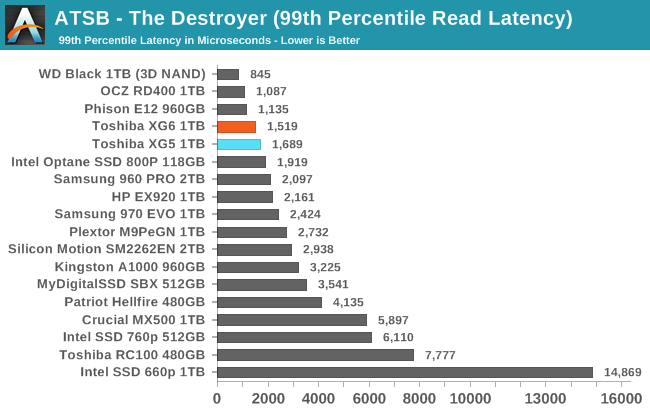

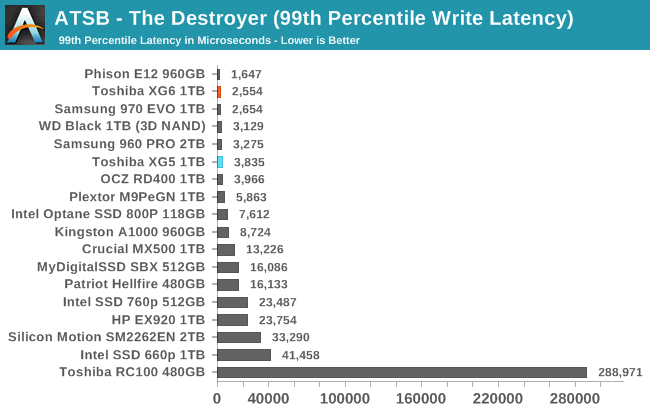

Average and 99th percentile latency have both improved for the XG6, bringing it even closer to the top of the charts and leaving only a small handful of drives that score better.

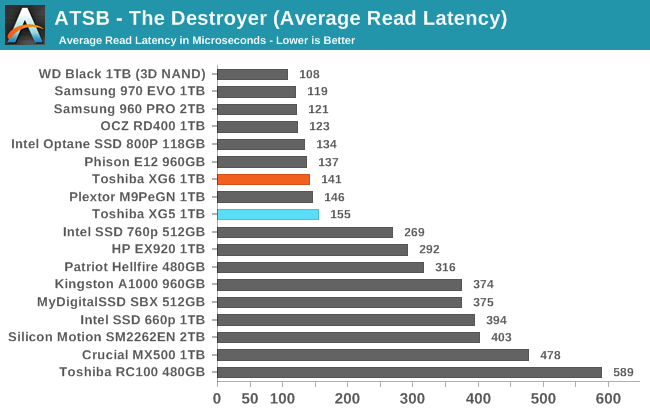

The average read latency for the XG6 is only slightly better than the XG5, which was the slowest drive in the high-end tier. For average write latency, the XG6 represents a much more substantial improvement that puts it ahead of almost every other TLC-based drive.

The XG5 already had very good QoS with 99th percentile read and write latencies that were quite low. The XG6 improves on both counts, with writes particularly improving.
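To make the difference between average and 99th percentile latency concrete, here is a small Python sketch using the nearest-rank percentile definition. The function name and the sample numbers are invented for illustration, and the percentile method used to produce the charts in this review may differ.

```python
import math
from typing import Sequence


def percentile_nearest_rank(latencies_us: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not latencies_us:
        raise ValueError("no latency samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(latencies_us)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Made-up example: 97 fast operations and 3 slow ones (microseconds).
samples = [50.0] * 97 + [5000.0] * 3
mean_us = sum(samples) / len(samples)
p99_us = percentile_nearest_rank(samples, 99)
```

With these invented samples, the mean stays fairly low because the slow operations are rare, while the 99th percentile lands on one of the slow operations. That is why the percentile is the more useful number for spotting occasional stalls.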

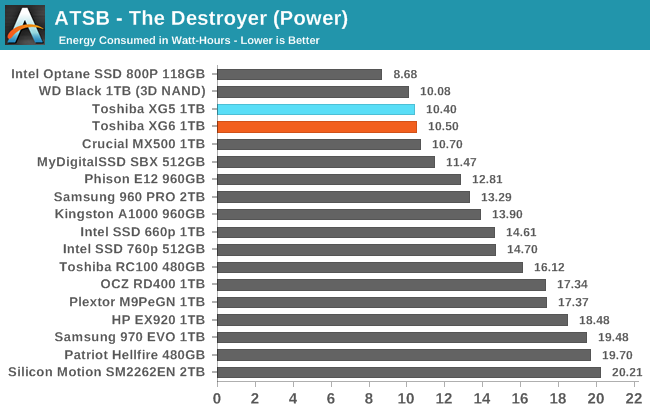

The total energy usage of the XG6 over the course of The Destroyer is very slightly higher than what the XG5 required, but this tiny efficiency sacrifice is easily justified by the performance increases. Toshiba's XG series remains one of the few options for a high-performance NVMe SSD with power efficiency that is comparable to mainstream SATA drives.

31 Comments

Spoelie - Thursday, September 6, 2018 - link

2 short questions:

- what happened to the plextor M9Pe, performance is hugely different from the review back in march.

- i know this is already the case for a year or so, but what happened to the perf consistency graphs, where can i deduce the same information from?

hyno111 - Thursday, September 6, 2018 - link

M9Pe had firmware updates, not sure if it's applied or related though.

DanNeely - Thursday, September 6, 2018 - link

I don't recall the details, but something went wrong with generating the performance consistency data, and they were pulled pending finding a fix due to concerns they were no longer valid. IF you have the patience to dig through the archive, IIRC the situation was explained in the first review without them.

Billy Tallis - Thursday, September 6, 2018 - link

I think both of those are a result of me switching to a new version of the test suite at the same time that I applied the Spectre/Meltdown patches and re-tested everything. The Windows and Linux installations were updated, and a few tweaks were made to the synthetic test configuration (such as separating the sequential read results according to whether the test data was written sequentially or randomly). I also applied all the drive firmware updates I could find in the April-May timeframe.

The steady-state random write test as it existed a few years ago is gone for good, because it really doesn't say anything relevant about drives that use SLC caching, which is now basically every consumer SSD (except Optane and Samsung MLC drives). I also wasn't too happy with the standard deviation-based consistency metric, because I don't think a drive should be penalized for occasionally being much faster than normal, only much slower than normal.

To judge performance consistency, I prefer to look at the 99th percentile latencies for the ATSB real-world workload traces. Those tend to clearly identify which drives are subject to stuttering performance under load, without exaggerating things as much as an hour-long steady-state torture test.

I may eventually introduce some more QoS measures for the synthetic tests, but at the moment most of them aren't set up to produce meaningful latency statistics. (Testing at a fixed queue depth leads to the coordinated omission problem, potentially drastically understating the severity of things like garbage collection pauses.) At some point I'll also start graphing the performance as a drive is filled, but with the intention of observing things like SLC cache sizes, not for the sake of seeing how the drive behaves when you keep torturing it after it's full.

I will be testing a few consumer SSDs for one of my upcoming enterprise SSD reviews, and that will include steady-state full drive performance for every test.

svan1971 - Thursday, September 6, 2018 - link

I wish current reviews would use current hardware, the 970 Pro replaced the 960 Pro months ago.

Billy Tallis - Thursday, September 6, 2018 - link

I've had trouble getting a sample of that one; Samsung's consumer SSD sampling has been very erratic this year. But the 970 Pro is definitely a different class of product from a mainstream TLC-based drive like the XG6. I would only include 970 Pro results here for the same reason that I include Optane results. They're both products for people who don't really care about price at all. There's no sensible reason to be considering a 970 Pro and an XG6-like retail drive as both potential choices for the same purchasing decision.

mapesdhs - Thursday, September 6, 2018 - link

Please never stop including older models, the comparisons are always useful. Kinda wish the 950 Pro was in there too.

Spunjji - Friday, September 7, 2018 - link

I second this. I know that I am (and feel most other savvy consumers would be) more likely to compare an older high-end product to a newer mid-range product, partly to see if it's worth buying the older gear at a discount and partly to see when there is no performance trade-off in dropping a cost tier.

jajig - Friday, September 7, 2018 - link

I third it. I want to know if an upgrade is worth while.

dave_the_nerd - Sunday, September 9, 2018 - link

Very much this. And not all of us upgrade our gear every year or two.