ATI Radeon X800 Pro and XT Platinum Edition: R420 Arrives

by Derek Wilson on May 4, 2004 10:28 AM EST. Posted in GPUs

The R420 Vertex Pipeline

The point of the vertex pipeline in any GPU is to take geometry data, manipulate it if needed (with either fixed function processes, or a vertex shader program), and project all of the 3D data in a scene to 2 dimensions for display. It is also possible to eliminate unnecessary data from the rendering pipeline to cut out useless work (via view volume clipping and backface culling). After the vertex engine is done processing the geometry, all the 2D projected data is sent to the pixel engine for further processing (like texturing and fragment shading).
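As a rough illustration of the work described above, the following Python sketch projects camera-space vertices to 2D and culls back-facing triangles. It is not how R420 (or any GPU) implements these steps; the function names, the assumption that the camera looks down +z with all points at z > 0, and the counter-clockwise front-face convention are all hypothetical choices made for the example. View volume clipping is omitted.

```python
def project(vertex, focal_length=1.0):
    """Perspective-project a camera-space point (x, y, z) to 2D.

    Assumes the camera looks down +z and that z > 0 (hypothetical convention).
    """
    x, y, z = vertex
    return (focal_length * x / z, focal_length * y / z)


def signed_area_2d(a, b, c):
    """Twice the signed area of a 2D triangle; positive means counter-clockwise."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1])


def is_front_facing(triangle):
    """Treat counter-clockwise projected triangles as front-facing (an assumption)."""
    a, b, c = (project(v) for v in triangle)
    return signed_area_2d(a, b, c) > 0


def cull_backfaces(triangles):
    """Drop triangles that face away from the camera before any pixel work is done."""
    return [t for t in triangles if is_front_facing(t)]
```

A triangle whose vertices are listed in the opposite winding order fails the test and never reaches the pixel stage, which is the "useless work" that culling avoids.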

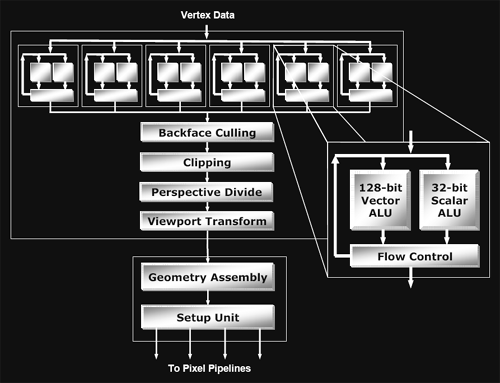

The vertex engine of R420 includes six vertex pipelines (R3xx has four). This gives R420 a 50% increase in peak vertex shader throughput per clock cycle.

Looking inside an individual vertex pipeline, not much has changed from R3xx. The vertex pipeline is laid out exactly the same, including a 128-bit vector math unit and a 32-bit scalar math unit. The major upgrade R420 has had from R3xx is that it is now able to compute a SINCOS instruction in one clock cycle. Before now, if a developer requested the sine or cosine of a number in a vertex shader program, R3xx would actually compute a Taylor series approximation of the answer (which takes longer to complete). The adoption of a single cycle SINCOS instruction by ATI is a very smart move, as trigonometric computations are useful in implementing functionality and effects attractive to developers. As an example, developers could manipulate the vertices of a surface with SINCOS in order to add ripples and waves (such as those seen in bodies of water). Sine and cosine computations are also useful in more basic geometric manipulation. Overall, R420 has a welcome addition in single cycle SINCOS computation.
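To make the cost difference concrete, here is a small Python sketch of a Taylor series sine and of a ripple effect built on sine. This is only an illustration: the number of terms, the range reduction, and the `ripple_height` parameters are assumptions for the example, not details of how R3xx or R420 evaluate SINCOS.

```python
import math


def taylor_sin(x, terms=5):
    """Approximate sin(x) with the first `terms` terms of its Taylor series about 0.

    Each loop iteration is an extra multiply-add step, which is why a series
    expansion takes longer than a single dedicated instruction.
    """
    # Reduce the argument to [-pi, pi] so the truncated series stays accurate.
    x = math.remainder(x, 2 * math.pi)
    result = 0.0
    term = x
    for n in range(terms):
        result += term
        # Next term: multiply by -x^2 / ((2n + 2)(2n + 3)).
        term *= -x * x / ((2 * n + 2) * (2 * n + 3))
    return result


def ripple_height(x, z, time, amplitude=0.1, wavelength=2.0, speed=1.0):
    """Vertical offset for a vertex at (x, z): circular ripples spreading from the origin.

    All parameters are hypothetical tuning values for the sketch.
    """
    distance = math.hypot(x, z)
    phase = 2 * math.pi * (distance / wavelength - speed * time)
    return amplitude * math.sin(phase)
```

A vertex shader producing water ripples would evaluate something like `ripple_height` once per vertex per frame, so the cost of each sine evaluation is multiplied across the whole mesh.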

So how does ATI's new vertex pipeline layout compare to NV40? On a major hardware "black box" level, ATI lacks the vertex texture unit featured in NV40 that's required for shader model 3.0's vertex texturing support. Vertex texturing allows developers to easily implement any effect which would benefit from allowing texture data to manipulate geometry (such as displacement mapping). The other major difference between R420 and NV40 is feature set support. As has been widely talked about, NV40 supports Shader Model 3.0 and all the bells and whistles that come along with it. R420's feature set support can be described as an extended version of Shader Model 2.0, offering a few more features above and beyond the R3xx line (including more support of longer shader programs, and more registers).
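The idea behind vertex texturing can be sketched in a few lines of Python: look up a height value in a texture while processing a vertex, then push the vertex along its normal. The nearest-neighbor lookup, the list-of-lists height map, and the function names are assumptions made for this example and do not reflect NV40's actual hardware or the Shader Model 3.0 API.

```python
def sample_nearest(height_map, u, v):
    """Nearest-neighbor lookup into a 2D height map with u, v in [0, 1]."""
    rows = len(height_map)
    cols = len(height_map[0])
    col = min(int(u * cols), cols - 1)
    row = min(int(v * rows), rows - 1)
    return height_map[row][col]


def displace(position, normal, uv, height_map, scale=1.0):
    """Move a vertex along its normal by the height stored in the texture."""
    height = sample_nearest(height_map, uv[0], uv[1])
    return tuple(p + n * height * scale for p, n in zip(position, normal))
```

Without a texture unit in the vertex pipeline, the height lookup in `displace` has no direct equivalent in the vertex stage, and the displacement would have to be prepared some other way before the geometry is submitted.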

What all this boils down to is that we are only seeing something that looks like a slight massaging of the hardware from R300 to R420. We would probably see many more changes if we were able to peer deeper under the hood. From a functionality standpoint, it is sometimes hard to see where performance comes from, but (as we will see even more from the pixel pipeline) as graphics hardware evolves into multiple tiny CPUs all laid out in parallel, performance will be affected by factors traditionally only spoken of in CPU analysis and reviews. The total number of internal pipeline stages (rather than our high level functionality driven pipeline), cache latencies, the size of the internal register file, number of instructions in flight, number of cycles an instruction takes to complete, and branch prediction will all come heavily into play in the future. In fact, this review marks the true beginning of where we will be seeing these factors (rather than general functionality and "computing power") determine the performance of a generation of graphics products. But, more on this later.

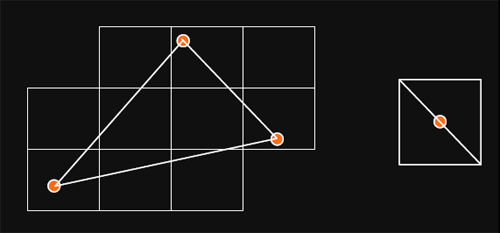

After leaving the vertex engine portion of R420, data moves into the setup engine. This section of the hardware takes the 2D projected data from the vertex engine, generates triangles and point sprites (particles), and partitions the output for use in the pixel engine. The triangle output is divided up into tiles, each of which is sent to a block of four pixel pipelines (called a quad pipeline by ATI). These tiles are simply square blocks of projected pixel data, and have nothing to do with "tile based rendering" (front to back rendering of small portions of the screen at a time) as was seen in PowerVR's Kyro series of GPUs.
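The partitioning step can be pictured with the Python sketch below. Everything specific in it is an assumption for illustration: the 16-pixel tile size, the count of four quad pipelines, the checkerboard-style assignment rule, and the use of a bounding box (which conservatively includes tiles the triangle may not actually cover) are not taken from ATI's documentation.

```python
def tiles_for_triangle(triangle, tile_size=16):
    """List the (column, row) tiles overlapped by a 2D triangle's bounding box.

    Assumes non-negative screen coordinates; tile_size is a hypothetical value.
    """
    xs = [p[0] for p in triangle]
    ys = [p[1] for p in triangle]
    first_col, last_col = int(min(xs)) // tile_size, int(max(xs)) // tile_size
    first_row, last_row = int(min(ys)) // tile_size, int(max(ys)) // tile_size
    return [
        (col, row)
        for row in range(first_row, last_row + 1)
        for col in range(first_col, last_col + 1)
    ]


def assign_to_quads(tiles, quad_count=4):
    """Spread tiles across quad pipelines with a simple interleaved pattern."""
    assignment = {quad: [] for quad in range(quad_count)}
    for col, row in tiles:
        assignment[(col + row) % quad_count].append((col, row))
    return assignment
```

Interleaving neighboring tiles across different quads is one plausible way to keep all pixel pipelines busy when a large triangle covers many tiles; the real distribution policy is not described in the text above.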

Now we're ready to see what happens on the per-pixel level.

95 Comments

raks1024 - Monday, January 24, 2005 - link

free ati x800: http://www.pctech4free.com/default.aspx?ref=46670

Ritalinkid - Monday, June 28, 2004 - link

After reading almost all of the video card reviews posted on anandtech, I start to get the feeling that anandtech has a grudge against nvidia. The reviews seem to put nvidia down no matter what area they excel in. With leading openGL support, ps3.0 support, and the 6850 shadowing the x800 in directX, it seems like nvidia should not be counted out as the "best card."

I would love to see a review that tested all the features that both cards offered, especially if it showed the games that would benefit the most from each card's features (if they are available). Maybe then I could decide which is better, or which could benefit me more.

BlackShrike - Saturday, May 8, 2004 - link

Hey if anyone is gonna be buying one of these new cards, would anyone want to sell their 9700 pro or 9800 pro/XT for like 100-150 bucks? If you do contact me at POT989@hotmail.com. Thanks.

DonB - Saturday, May 8, 2004 - link

No TV tuner on this card either? Will there be an "All-In-Wonder" version soon that will include it?

xin - Friday, May 7, 2004 - link

(my bad, I didn't notice that I was on the first page of the posts, and replied to a message there heh)

Well, since everyone else is throwing their preferences out there... I guess I will too. My last 3 cards have been ATI cards (9700Pro & 9500Pro, and an 8500 "Pro"), and I have not been let down. Right at this moment I lean towards the x800XT.

However, I am not concerned about power since I am running a TruePower550, and I will be interested in seeing what happens with all of this between now and the next 4-6 weeks when these cards actually come to market... and I will make my decision then on which card to buy.

xin - Friday, May 7, 2004 - link

Besides that, even if it were true (which it isn't), there is a world of difference between having *some* level of support, and requiring it. (*some* meaning the initial application of PS3.0 technology to games, that will likely be as sloppy as your first time in the back of a car with your first girlfriend).

Game makers will not require PS3.0 support for a long long long time... because it would alienate the vast majority of the people out there, or at least for the time being any person who doesn't have a NV40 card.

Some games may implement it and look slightly better, or look the same and only run faster.... but I would put money down that by the time PS3.0 usage in games comes anywhere close to mainstream, both mfg's will have their new, latest and greatest cards out, probably 2 generations or more past these cards.

xin - Friday, May 7, 2004 - link

first of all... "alot of the upcoming topgames will support PS3.0!" ??? They will? Which ones exactly?

Z80 - Friday, May 7, 2004 - link

Good review. Pretty much tells me that I can select either Nvidia or ATI with confidence that I'm getting a lot of "bang for my buck". However, my buck bang for video cards rarely exceeds $150 so I'm waiting for the new low to mid range cards before making a purchase.

xin - Friday, May 7, 2004 - link

I love how a handful of stores out there feel the need to rip people off by charging $500+ for the x800PRO cards, since the XT isn't available yet.

Anyway, something interesting I noticed today:

http://www.compusa.com/products/product_info.asp?p...

http://www.compusa.com/products/product_info.asp?p...

Notice the "expected ship date"... at least they have their pricing right.

a2y - Friday, May 7, 2004 - link

Trog, I also agree, the thing is.. it's true I do not have complete knowledge of deep details of video cards.. u see my current video card is now 1 year old (Geforce4 mx440) which is terrible for gaming (50fps and less) and some games actually do not support it (like deusEX 2). I wanted a card that would be future proof, every consumer would go thinking this way. I do not spend everything I earned, but to me and some others $400-$500 is O.K. if it means it's going to last a bit longer.

I especially worry about the technology used more than the other specs of the cards, more technologies mean future games are going to support it. I DO NOT know what I've just said actually means, but I felt it during the past few years and have been affected by it right now (like the deus ex 2 problem!) it just doesn't support it, and my card performs TERRIBLY in all games

now my system is relatively slow for hardcore gaming:

P4 2.4GHz - 512MB RDRAM PC800 - 533MHz FSB - 512KB L2 Cache - 128MB Geforce4 mx440 card.

I wanted a big jump in performance especially in gaming so that's why I wanted the best card currently available.