The AMD Threadripper 2990WX 32-Core and 2950X 16-Core Review

by Dr. Ian Cutress on August 13, 2018 9:00 AM EST

Thermal Comparisons and XFR2: Remember to Remove the CPU Cooler Plastic!

Every machine build has some targets: performance, power, noise, thermal performance, or cost. It is hard to hit all of them, so going after two or three is usually a sensible goal. Well, it turns out that there is one simple error that can make you lose on ALL FIVE COUNTS. Welcome to my world when I first tested the 32-core AMD Ryzen Threadripper 2990WX and forgot to remove the plastic from my CPU liquid cooler.

Don’t Build Systems After Long Flights

Almost all brand new CPU coolers, whether air coolers, liquid coolers, or water blocks, come pre-packaged with padding, foam, screws, fans, and instructions. Depending on the manufacturer and the packaging type, the bottom of the CPU cooler will have been prepared in one of two ways:

- Pre-applied thermal paste

- A small self-adhesive plastic strip to protect the polishing during shipping

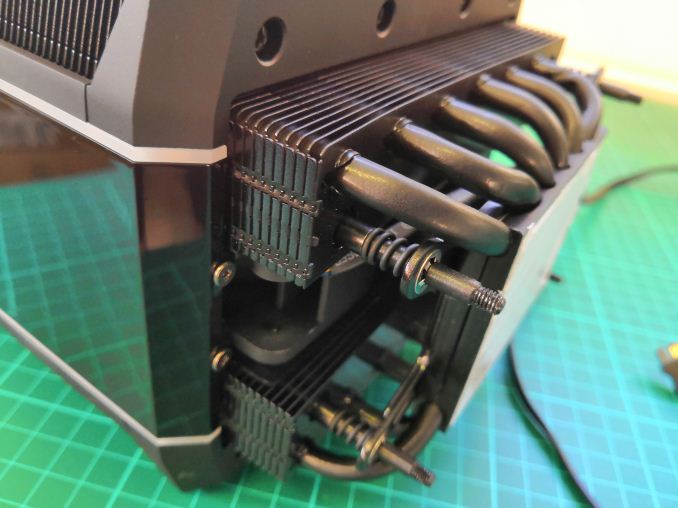

In our review kit, the Wraith Ripper, a massive air cooler made by Cooler Master but promoted by AMD as the ‘base’ air cooler for new Threadripper 2 parts, came with pre-applied thermal paste. It covered the whole base, and it was thick. It made a mess when I tried to take photos.

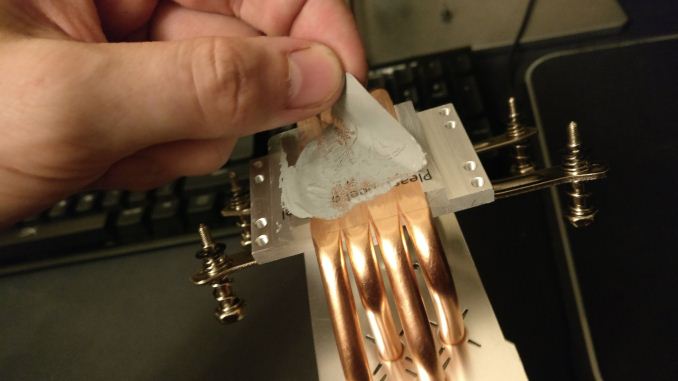

Also in our review kit was the Enermax Liqtech TR4 closed loop liquid cooler, with a small tube of thermal paste included. The bottom of the CPU block for the liquid cooler was covered in a self-adhesive plastic strip to protect the base in the packaging.

Example from TechTeamGB's Twitter

So, confession time. Our review kit landed the day before I was travelling from the UK to San Francisco to cover Flash Memory Summit and Intel’s Datacenter Summit. In my suitcases I packed an X399 motherboard (the ASUS ROG Zenith), three X399 chips (2990WX, 2950X, 1950X), an X299 motherboard (ASRock X299 OC Formula), several Skylake-X chips, a Corsair AX860i power supply, an RX 460 graphics card, a mouse, a keyboard, and cables: basically two systems, relying on the monitor in the hotel room for testing. After an 11-hour direct flight, two hours at passport control, and a one-hour Uber to my hotel, I set up the system with the 2990WX.

I didn’t take off the plastic on the Enermax cooler. Well, I didn’t realize it at the time. I even put thermal paste on the processor, and it still didn’t register when I tightened the screws.

I set the system up at the maximum supported memory frequency, installed Windows, installed the security updates, installed the benchmarks, and set it to run overnight while I slept, still without realizing the plastic was attached. By mid-morning the benchmark suite had finished. I did some of the extra testing, such as base frequency latency measurements, and then went to swap the processor for the 2950X. That was when I performed a facepalm.

At that point, with thermal paste all over the processor and the plastic, I realized I done goofed. I took the plastic off, re-pasted the processor, and set the system up again, this time with a better thermal profile. But rather than throw the first set of results away, I kept them.

Thermal Performance Matters

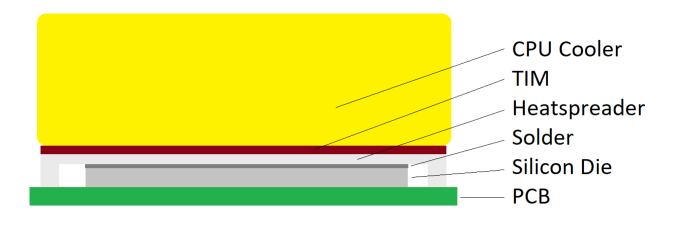

The goal of any system is to keep the processor within a sufficient thermal window to maintain operation: most processors are rated to work properly from normal temperatures up to 105C, at which point they shut down to avoid permanent thermal damage. When a processor shuttles electrons around and does things, it consumes power. That power is lost as heat, which dissipates from the silicon in two main directions: into the socket and into the heatspreader.

For AMD’s Threadripper processors, the thermal interface material between the silicon dies and the heatspreader is an indium-tin solder, a direct metal-to-metal bond for direct heat transfer. Modern Intel processors use a silicone thermal grease instead, which does not transfer heat as well but has one benefit: it lasts longer through thermal cycling. As materials heat up, they expand, and when two materials with different thermal expansion coefficients are bonded together, enough heat cycles can crack the bond and make it ineffective. Thermal grease essentially eliminates that issue, and it also happens to be cheaper. So it’s a trade-off between price/longevity and performance.
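The cycling argument can be put into numbers with the usual first-order thermal-mismatch estimate: if two bonded layers with linear expansion coefficients $\alpha_1$ and $\alpha_2$ warm up by $\Delta T$, the bond has to absorb roughly their difference times the temperature swing on every cycle. The coefficient difference and temperature swing below are illustrative assumptions, not properties of any specific Threadripper or Intel part:

```latex
\varepsilon_{\text{mismatch}} \approx (\alpha_1 - \alpha_2)\,\Delta T,
\qquad \text{e.g.} \quad
(15\times10^{-6}\,\mathrm{K^{-1}})\times(60\,\mathrm{K}) = 9\times10^{-4} \approx 0.09\%
```

That is small for a single cycle, but a rigid solder joint sees it on every heat-up and cool-down, whereas a grease layer is not bonded and simply accommodates the movement.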

Above the heatspreader is the CPU cooler, and between the two is another thermal interface, which the user gets to choose. The cheapest options involve nasty silicone thermal grease that costs cents per gallon, whereas performance enthusiasts might look towards a silver-based thermal paste or another compound with good heat transfer characteristics; a paste's ability to spread under pressure is usually a good quality. Extreme users can opt for liquid metal, similar to the solder connection, which binds the CPU to the CPU cooler pretty much permanently.

So what happens if you suddenly put some microns of thermally inefficient plastic between the heatspreader and the CPU cooler?

First of all, conductive heat transfer through the plastic is terrible. This means that the thermal energy stays in the paste and heatspreader for longer, causing heat soak in the processor and raising temperatures. This is essentially the same effect as when a cooler is overwhelmed by a large processor: heat soak is real and can be a problem. It typically leads to a runaway temperature rise, until the temperature gradient is large enough for the heat flowing out to equal the heat being generated. If that balance point is too high, the processor gets too hot, and typically a thermal emergency power state kicks in, reducing voltage and frequency to super low levels. Performance goes down the drain.
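The heat-soak argument can be illustrated with a simple steady-state model in which each layer between the die and the air acts as a thermal resistance in series, so the die settles at roughly the ambient temperature plus power times the total resistance. This is a minimal sketch under stated assumptions: the 250 W figure matches the 2990WX's rated TDP, but every resistance value, including the one standing in for the plastic film, is a hypothetical round number rather than a measurement from this test.

```python
# Steady-state sketch: each layer between die and air is modelled as a thermal
# resistance (kelvin per watt) in series, so T_die = T_ambient + P * sum(R).
# Every resistance here is a hypothetical round value, not a measurement.

LIMIT_C = 105.0  # shutdown temperature quoted in the article


def die_temperature(power_w: float, ambient_c: float, path: dict[str, float]) -> float:
    """Steady-state die temperature for a series thermal path."""
    return ambient_c + power_w * sum(path.values())


# Hypothetical path with the cooler mounted correctly.
clean_path = {
    "solder_die_to_ihs": 0.03,
    "thermal_paste": 0.05,
    "cooler_to_air": 0.12,
}

# Same path plus a hypothetical extra resistance for the protective plastic film.
plastic_path = {**clean_path, "plastic_film": 0.20}

for label, path in (("clean", clean_path), ("plastic", plastic_path)):
    t = die_temperature(power_w=250.0, ambient_c=22.0, path=path)
    verdict = "above limit, must throttle" if t > LIMIT_C else "within limit"
    print(f"{label:8s} {t:6.1f} C  ({verdict})")
```

With these made-up numbers the clean path settles around 72 C, while the plastic path would need about 122 C to shed the same 250 W, which is past the limit. In a real system the chip would cut frequency and voltage before getting there, and that reduction is where the lost performance comes from.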

What does the user see in the system? Imagine a processor hitting 600 MHz while rendering, rather than a nice 3125 MHz at stock (see previous page). Base temperatures are higher, load temperatures are higher, case temperatures are higher. Might as well dry some wet clothes in there while you are at it. A little thermal energy never hurt a processor, but a lot can destroy an experience.

AMD’s XFR2

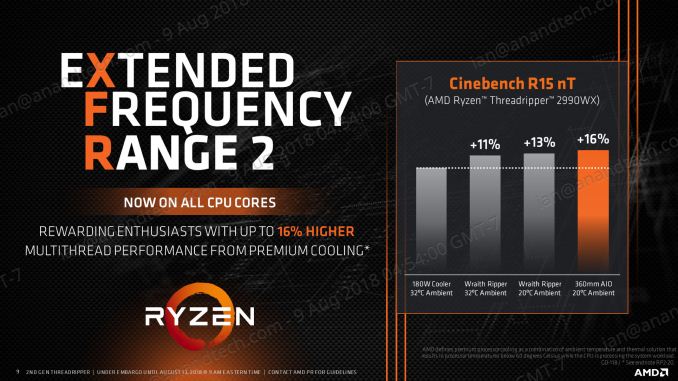

Ultimately this issue hurts AMD more than you might think. The way AMD implements its turbo modes is not a look-up table where the number of loaded cores dictates the turbo frequency; it relies on the power, current, and thermal limits of a given chip. Wherever there is headroom, the AMD platform is designed to add frequency and voltage. The thermal aspect of this is what AMD calls XFR2, or eXtended Frequency Range 2.
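To show why a limit-driven turbo reacts to cooling in a way a fixed look-up table would not, here is a hypothetical sketch. It is not AMD's firmware, whose details are not public; the voltage/frequency curve, power model, limit values, and the names `Limits` and `pick_frequency` are all invented for illustration. It simply picks the highest frequency on a grid whose estimated power, current, and temperature all stay within limits.

```python
# Hypothetical sketch of limit-driven boost; NOT AMD's actual firmware logic.
# Rather than a table mapping "cores loaded" to a fixed turbo frequency, it
# picks the highest frequency whose estimated power, current and temperature
# all stay within limits. All coefficients are made-up illustration values.
from dataclasses import dataclass


@dataclass
class Limits:
    power_w: float    # package power limit
    current_a: float  # current limit
    temp_c: float     # thermal limit


def voltage(freq_mhz: float) -> float:
    """Toy V/f curve: voltage rises linearly with frequency."""
    return 0.75 + 0.00015 * freq_mhz


def package_power(freq_mhz: float, active_cores: int) -> float:
    """Toy model: fixed 20 W static power plus dynamic power ~ V^2 * f per core."""
    per_core_w = 2.0e-3 * voltage(freq_mhz) ** 2 * freq_mhz
    return 20.0 + active_cores * per_core_w


def pick_frequency(active_cores: int, ambient_c: float, r_th_k_per_w: float,
                   limits: Limits, f_min: int = 2000, f_max: int = 4200,
                   step: int = 25) -> int:
    """Return the highest frequency (MHz) on a `step`-MHz grid meeting every limit."""
    for f in range(f_max, f_min - 1, -step):
        p = package_power(f, active_cores)
        amps = p / voltage(f)
        temp = ambient_c + p * r_th_k_per_w  # steady-state series-resistance estimate
        if p <= limits.power_w and amps <= limits.current_a and temp <= limits.temp_c:
            return f
    return f_min  # nothing fits: fall back to the floor frequency


limits = Limits(power_w=250.0, current_a=250.0, temp_c=95.0)
print(pick_frequency(32, 22.0, 0.2, limits))  # all cores loaded, good cooler contact
print(pick_frequency(32, 22.0, 0.4, limits))  # all cores loaded, plastic left on
print(pick_frequency(1, 22.0, 0.2, limits))   # a single core loaded
```

With these toy numbers the three calls print 2675, 2175, and 4200: under identical limits, the extra thermal resistance makes temperature the binding constraint and costs about 500 MHz, while a lightly threaded load still reaches the top of the range. Changing `ambient_c` moves the thermally limited result in the same direction as AMD's ambient-temperature claim, though the magnitudes here mean nothing.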

At AMD’s Tech Day for Threadripper 2, we were presented with graphs showing the effects of using better coolers on performance: around 10% better benchmark results due to having higher thermal headroom. Stick the system in an environment with a lower ambient temperature as well, and AMD quoted a 16% performance gain over a ‘stock’ system.

However, the reverse works too. That bit of plastic effectively lowered the thermal ceiling, from idle to load, which should result in a drop in performance.

Plastic Performance

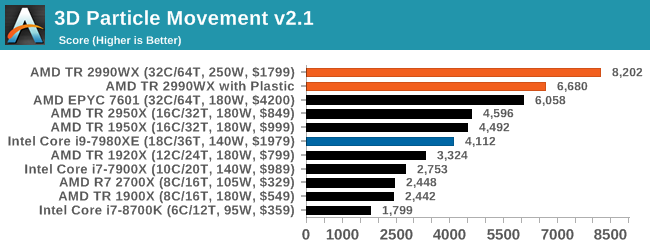

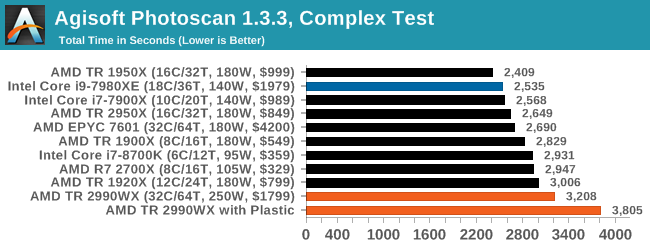

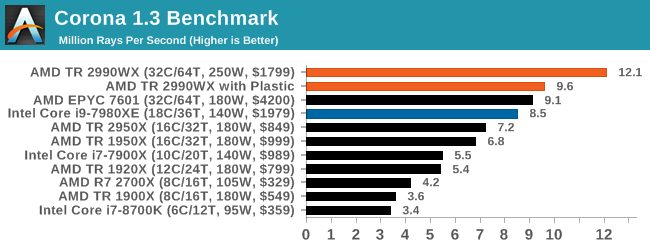

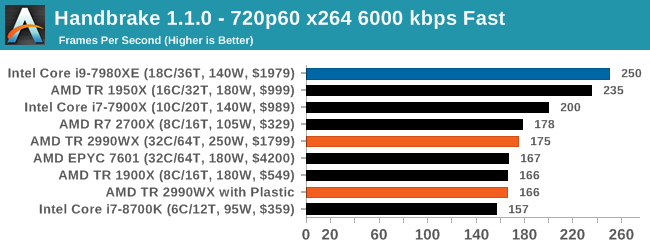

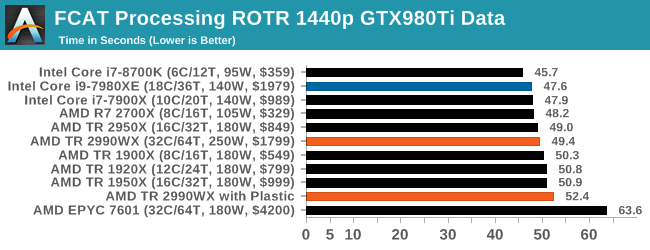

So despite being in a nice air-conditioned hotel room, that additional plastic did a number on most of our benchmarks. Here is the damage:

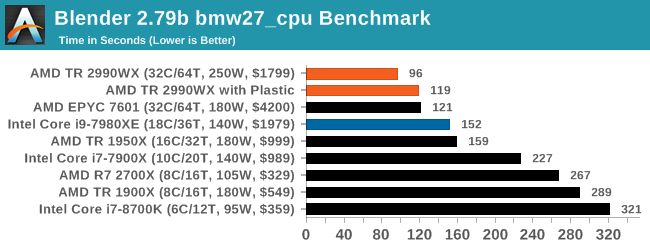

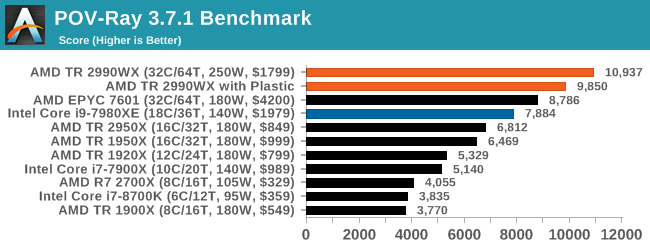

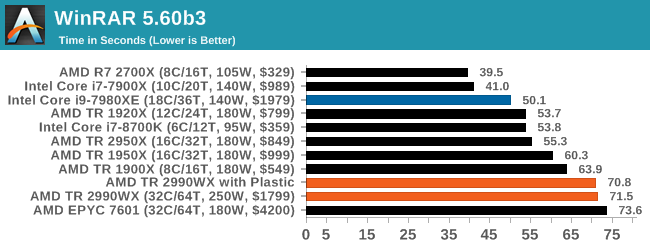

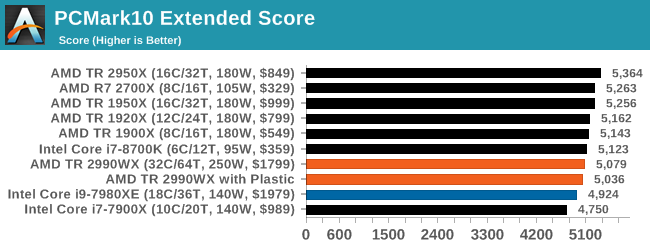

For all of our multi-threaded tests, where the CPU is hammered hard, there is a significant decrease in performance, as expected: Blender saw a 20% decrease in throughput, POV-Ray was 10% lower, and 3DPM was 19% lower. PCMark was only slightly lower, as it has a lot of single-threaded tests, and annoyingly some benchmarks swung the other way, such as WinRAR, which is more DRAM-bound. Other benchmarks not listed include our compile test, where the plasticated system was 1% slower, and Dolphin, where there was a one-second difference.
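When comparing results like these, it helps to remember that some benchmarks report a completion time (lower is better) while others report a score or rate (higher is better), so "X% lower throughput" and "X% longer runtime" are not the same number. A minimal helper, with made-up example values rather than the measured results above, and a hypothetical function name:

```python
def throughput_change_pct(baseline: float, test: float, higher_is_better: bool = True) -> float:
    """Percentage change in throughput of `test` relative to `baseline`.

    For time-based results (lower is better), throughput is 1 / time, so the
    ratio is inverted before converting to a percentage.
    """
    if baseline <= 0 or test <= 0:
        raise ValueError("results must be positive")
    ratio = test / baseline if higher_is_better else baseline / test
    return (ratio - 1.0) * 100.0


# Hypothetical render times in seconds: a 25% longer run is a 20% throughput loss.
print(round(throughput_change_pct(100.0, 125.0, higher_is_better=False), 1))  # -20.0
# Hypothetical score-based result: 900 vs 1000 points is a 10% drop.
print(round(throughput_change_pct(1000.0, 900.0), 1))  # -10.0
```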

What Have I Learned?

Don’t be a fool. Building a test bed with new components when super tired may lead to extra re-tests.

171 Comments


ibnmadhi - Monday, August 13, 2018

It's over, Intel is finished.

milkod2001 - Monday, August 13, 2018

Unfortunately not even close. Intel dominated for the last decade or so. Now that AMD is back in the game, many will consider AMD, but most will still get Intel instead. The damage was done. It took AMD forever to recover from being useless, and it will take at least 5 years until it gets some serious market share. Better late than never though...

tipoo - Monday, August 13, 2018

It's not imminent, but Intel sure seems set for a gradual decline. It's hard to eke out IPC wins these days, so it'll be hard to shake AMD off per-core; Intel no longer has a massive process lead to lean on for core count while keeping its margins; and ARM is also chipping away at the bottom.

Intel will probably be a vampire that lives another hundred years, but it'll go from the 900lb gorilla to one on a decent diet.

ACE76 - Monday, August 13, 2018

AMD retail sales are equal to Intel now... and they are starting to make a noticeable dent in the server market as well... it won't take 5 years for them to be on top... if Ryzen 2 delivers a 25% increase in performance, they will topple Intel in 2019/2020.

HStewart - Monday, August 13, 2018

"AMD retail sales are equal to Intel now"

Desktop maybe, but that is a minimal market.

monglerbongler - Monday, August 13, 2018

Pretty much this.

No one really cares about the workstation/prosumer/gaming PC market. It's almost certainly the smallest measurable segment of the industry.

As far as these companies' business models are concerned:

Data center/server/cluster > OEM consumer (dell, hp, microsoft, apple, asus, toshiba, etc.) > random categories like industrial or compact PCs used in hospitals and places like that > Workstation/prosumer/gaming

AMD's entire strategy is to desperately push as hard as they can into the bulwark of Intel's cloud/server/data center dominance.

Though, to be completely honest, for that segment they really only offer pure core count and PCIe as benefits. Sure, they have lots of memory channels, but server/data center and cluster are already moving toward the future of storage/memory fusion (e.g. Optane), so that entire traditional design may start to change radically soon.

All-important performance per unit of area inside a box, and performance per watt? Not the greatest.

That is exceptionally important for small companies that buy cooling from the power grid (air conditioning). If you are a big company in Washington and buy your cooling via river water, you might have to invest in upgrades to your cooling system.

Beyond all that the Epyc chips are so freaking massive that they can literally restrict the ability to design 2 slot server configuration motherboards that also have to house additional compute hardware (eg GPGPU or FPGA boards). I laugh at the prospect of a 4 slot epyc motherboard. The thing will be the size of a goddamn desk. Literally a "desktop" sized motherboard.

If you can't figure it out, it's obvious:

Everything except for the last category involves massive years-spanning contracts for massive orders of hundreds of thousands or millions of individual components.

You can't bet hundreds of millions or billions in R&D, plus the years-spanning billion dollar contracts with Global Foundries (AMD) or the tooling required to upgrade and maintain equipment (Intel) on the vagaries of consumers, small businesses that make workstations to order, that small fraction of people who buy workstations from OEMs, etc.

Even if you go to a place like Pixar studios or a game developer, most of the actual physical computers inside are regular, bone standard, consumer-level hardware PCs, not workstation level equipment. There certainly ARE workstations, but they are a minority of the capital equipment inside such places.

Ultimately that is why, despite all the press, despite sending out expensive test samples to Anandtech, despite flashy powerpoint presentations given by arbitrary VPs of engineering or CEOs, all of the workstation/Prosumer/gaming stuff is just low-binned server equipment.

Because those are really the only 2 categories of products they make:

pure consumer, pure workstation. Everything else is just partially enabled/disabled variations on those 2 flavors.

Icehawk - Monday, August 13, 2018

I was looking at some new boxes for work, and our main vendors offer little if anything from AMD, either for server roles or desktop. Even if they did, it's an uphill battle to push a "2nd tier" vendor (AMD is not, but is perceived that way by some) to management.

PixyMisa - Tuesday, August 14, 2018

There aren't any 4-socket EPYC servers because the interconnect only allows for two sockets. The fact that it might be difficult to build such servers is irrelevant because it's impossible.

leexgx - Thursday, August 16, 2018

Are more than 2 sockets needed when you have so many cores to play with?

Relic74 - Wednesday, August 29, 2018

Actually there are, kind of. Supermicro, for example, has created a 4-node server for Epyc. Basically it's 4 computers in one server case, but the performance is equal to, if not better than, that of a hardware 4-socket server. Cool stuff, you should check it out. In fact, I think this is the way of the future, and multi-socket systems are on their way out, as this solution provides more control over each CPU and over what the individual cores are doing. It also provides better power management, as you can shut down individual nodes or put them in standby, whereas a server with 4 sockets/CPUs is basically always on.

There is a really great white paper on the subject that came out of AMD, where they stated that they looked into creating a 4-socket CPU and motherboard capable of handling all of the PCI lanes needed; however, it didn't make any sense for them to do so, as there weren't any performance gains over the node solution.

In fact, I believe we will see a resurrection of blade systems using AMD CPUs, especially now with all of the improvements that have been made in multi-node cluster computing over the last few years.