NVIDIA GeForce 6800 Ultra: The Next Step Forward

by Derek Wilson on April 14, 2004 8:42 AM EST. Posted in GPUs.

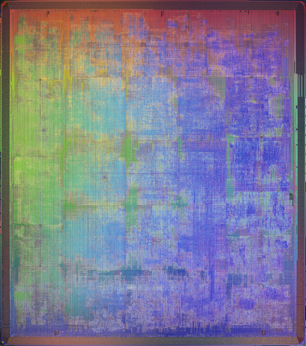

NV40 Under the Microscope

The NV40 chip itself is massive. Weighing in at a hefty 222 million transistors, NVIDIA's newest GPU has more than three times as many transistors as Intel's Northwood P4, and about 33% more than the Pentium 4 EE. This die is dropped onto a 40mm x 40mm flip-chip BGA package.

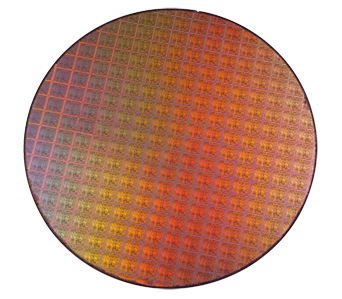

NVIDIA doesn't publish die size information, but we have been able to extrapolate a little from the data we have on their process and from a very useful wafer shot.

As we can see, somewhere around 16 chips fit horizontally on the wafer, while they can squeeze in about 18 chips vertically. We know that NVIDIA uses a 130nm IBM process on 300mm wafers. We also know that the P4 EE is in the neighborhood of 250mm^2 in size. Doing the math indicates that the NV40 GPU is somewhere between 270mm^2 and 305mm^2. It is difficult to get a closer estimate because we don't know how much space is between each chip on the wafer (which also makes it hard to estimate waste per wafer).
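The arithmetic behind that estimate can be sketched roughly as follows. This is only an illustration under stated assumptions: it treats the 16 x 18 chip counts as spanning the full 300mm wafer diameter, and the `spacing_mm` gap between chips is a hypothetical parameter, since the real spacing is not known. The function names are ours, not NVIDIA's or IBM's.

```python
# Rough die-area estimate from a wafer shot.
# Figures taken from the article: 300mm wafer, ~16 chips across, ~18 chips down.
WAFER_DIAMETER_MM = 300.0
DIES_ACROSS = 16
DIES_DOWN = 18


def max_die_area_mm2(wafer_diameter_mm, dies_across, dies_down):
    """Upper bound: assumes the chips tile the full diameter with no gaps."""
    pitch_x = wafer_diameter_mm / dies_across
    pitch_y = wafer_diameter_mm / dies_down
    return pitch_x * pitch_y


def die_area_with_spacing_mm2(wafer_diameter_mm, dies_across, dies_down, spacing_mm):
    """Same estimate after subtracting a hypothetical gap between neighbouring chips."""
    pitch_x = wafer_diameter_mm / dies_across
    pitch_y = wafer_diameter_mm / dies_down
    return (pitch_x - spacing_mm) * (pitch_y - spacing_mm)


upper_bound = max_die_area_mm2(WAFER_DIAMETER_MM, DIES_ACROSS, DIES_DOWN)
print(f"upper bound with no spacing: {upper_bound:.1f} mm^2")

# The spacing values below are illustrative guesses, not measured data.
for spacing_mm in (0.25, 0.5, 1.0):
    area = die_area_with_spacing_mm2(WAFER_DIAMETER_MM, DIES_ACROSS, DIES_DOWN, spacing_mm)
    print(f"assumed spacing {spacing_mm} mm: {area:.1f} mm^2")
```

With no spacing at all, the counts give a ceiling of about 312.5mm^2. Any gap between chips pulls the figure down from there, which is why the estimate is a range below that ceiling and not a single number.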

Since we don't have information on yields either, it's hard to say how well NVIDIA will be making out on this GPU. Increasing the transistor count and die size will lower yields, yet cards based on NV40 will launch at the same retail price that NV38 cards did.

Of course, even if they don't end up making as much money as they want off of this card, throwing down the gauntlet and pushing everything as hard as they can will be worth it. After the GeForce FX series of cards failed to measure up to the hype (and the competition), NVIDIA has needed something to reestablish their position as performance leader in the industry. This industry can be brutal, and falling short twice is well nigh a death sentence.

But all those transistors on such a big die must draw a lot of power, right? Just how much juice do we need to feed this beast ...

77 Comments


Pete - Monday, April 19, 2004 - link

Shinei,

I did not know that. </Johnny Carson>

Derek,

I think it'd be very helpful if you listed the game version (you know, what patches have been applied) and map tested, for easier reference. I don't even think you mentioned the driver version used on each card, quite important given the constant updates and fixes.

Something to think about ahead of the X800 deadline. :)

zakath - Friday, April 16, 2004 - link

I've seen a lot of comments on the cost of these next-gen cards. This shouldn't surprise anyone...it has always been this way. The market for these new parts is small to begin with. The best thing the next gen does for the vast majority of us non-fanbois-who-have-to-have-the-bleeding-edge-part is that it brings *today's* cutting edge parts into the realm of affordability.

Serp86 - Friday, April 16, 2004 - link

Bah! My almost 2 year old 9700pro is good enough for me now. i think i'll wait for nv50/r500....

Also, a better investment for me is to get a new monitor since the 17" one i have only supports 1280x1024 and i never turn it that high since the 60hz refresh rate makes me go crazy

Wwhat - Friday, April 16, 2004 - link

that was to brickster, neglected to mention that

Wwhat - Friday, April 16, 2004 - link

Yes you are alone

ChronoReverse - Thursday, April 15, 2004 - link

Ahem, this card has been tested by some people with a high-quality 350W power supply and it was just fine.

Considering that anyone who could afford a 6800U would have a good power supply (Thermaltake, Antec or Enermax), it really doesn't matter.

The 6800NU uses only one molex.

deathwalker - Thursday, April 15, 2004 - link

Oh my god...$400 and u can't even put it in 75% of the systems on people's desks today without buying a new power supply at a cost of nearly another $100 for a quality PS...i think this just about has to push all the fanatics out there over the limit...no way in hell you're going to notice the performance improvement in a multiplayer game over a network..when does this madness stop.

Justsomeguy21 - Monday, November 29, 2021 - link

LOL, this was too funny to read. Complaining about a bleeding edge graphics card costing $400 is utterly ridiculous in the year 2021 (almost 2022). You can barely get a midrange card for that price and that's assuming you're paying MSRP and not scalper prices. 2004 was a great year for PC gaming, granted today's smartphones can run circles around a Geforce 6800 Ultra but for the time PC hardware was being pushed to the limits and games like Doom 3, Far Cry and Half Life 2 felt so nextgen that console games wouldn't catch up for a few years.

deathwalker - Thursday, April 15, 2004 - link

Shinei - Thursday, April 15, 2004 - link

Pete, MP2 DOES use DX9 effects, mirrors are disabled unless you have a PS2.0-capable card. I'm not sure why, since AvP1 (a DX7 game) had mirrors, but it does nonetheless. I should know, since my Ti4200 (DX8.1 compatible) doesn't render mirrors as reflective even though I checked the box in the options menu to enable them...

Besides, it does have some nice graphics that can bog a card down at higher resolutions/AA settings. I'd love to see what the game looks like at 2048x1536 with 4xAA and maxed AF with a triple buffer... Or even a more comfortable 1600x1200 with same graphical settings. :D