The NVIDIA GeForce GTX 1070 Ti Founders Edition Review: GP104 Comes in Threes

by Nate Oh on November 2, 2017 9:00 AM EST

Compute

Shifting gears, let’s take a look at compute performance on the GTX 1070 Ti.

As the GTX 1070 Ti is another GP104 SKU, and a fairly straightforward one at that, there shouldn't be any surprises here. Relative to the GTX 1070, all of NVIDIA's improvements are on the shader side (more enabled SMs) rather than in memory bandwidth, which favors compute workloads, so we should see a decent bump in performance here. However, that won't change the fact that the GTX 1070 Ti will still come in below the GTX 1080, which has more SMs and a higher average clockspeed (never mind the benefits of more memory bandwidth).
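To put rough numbers on the SM and clockspeed gap, peak single precision throughput for a Pascal GPU is commonly estimated as 2 FLOPs (one fused multiply-add) per CUDA core per clock, with 128 CUDA cores per GP104 SM. The sketch below applies that rule of thumb. The SM counts and rated boost clocks are assumed from NVIDIA's published reference specifications rather than taken from this review, and real cards usually boost above their rated clocks, so treat the output as an on-paper comparison only.

```python
# Rough peak FP32 estimate: 2 FLOPs (one FMA) per CUDA core per clock.
# Assumption: each GP104 Pascal SM contains 128 CUDA cores.
CORES_PER_SM = 128

# Assumed reference specs: (enabled SMs, rated boost clock in MHz).
CARDS = {
    "GTX 1070": (15, 1683),
    "GTX 1070 Ti": (19, 1683),
    "GTX 1080": (20, 1733),
}

def peak_fp32_tflops(sms: int, boost_mhz: float) -> float:
    """Return theoretical single precision throughput in TFLOPS."""
    cores = sms * CORES_PER_SM
    return 2 * cores * boost_mhz * 1e6 / 1e12

for name, (sms, mhz) in CARDS.items():
    print(f"{name}: {sms * CORES_PER_SM} cores, {peak_fp32_tflops(sms, mhz):.2f} TFLOPS")
```

By this estimate the GTX 1070 Ti lands at roughly 92% of the GTX 1080's peak and about 27% above the GTX 1070's, which lines up with the expectation that it slots in just below the GTX 1080.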

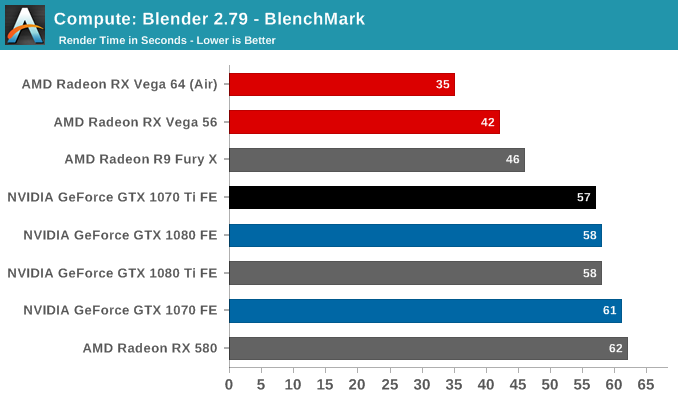

Starting us off for our look at compute is Blender, the popular open-source 3D modeling and rendering package. To examine Blender performance, we're running BlenchMark, a script and workload set that measures how long it takes to render a scene. BlenchMark uses Blender's internal Cycles render engine, which is GPU accelerated on both NVIDIA (CUDA) and AMD (OpenCL) GPUs.

As you might expect, the GTX 1070 Ti shoots ahead of the GTX 1070 thanks to this new SKU's additional enabled SMs. In fact, it technically outpaces the GTX 1080 by a single second, which, although eye-popping, is within our margin of error. What it can't do, however, is overcome AMD's lead here, with the NVIDIA cards trailing the Vega family by quite a bit.
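Whether a one-second gap matters depends on how long the render takes. The review doesn't state its margin of error, and the actual render times appear only in the chart, so the times and the 1% margin below are hypothetical. This is a minimal sketch of a relative-difference check, not our actual test methodology.

```python
def within_margin(time_a_s: float, time_b_s: float, margin_pct: float) -> bool:
    """Return True if two render times differ by at most margin_pct percent.

    The difference is measured relative to the slower (larger) of the two times.
    """
    if time_a_s <= 0 or time_b_s <= 0:
        raise ValueError("render times must be positive")
    slower = max(time_a_s, time_b_s)
    return abs(time_a_s - time_b_s) / slower * 100 <= margin_pct

# Hypothetical example: a one-second gap on a roughly five-minute render,
# judged against an assumed 1% margin of error.
print(within_margin(300.0, 301.0, margin_pct=1.0))
```

Measuring against the slower run keeps the check symmetric, so it gives the same answer regardless of which card is passed first.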

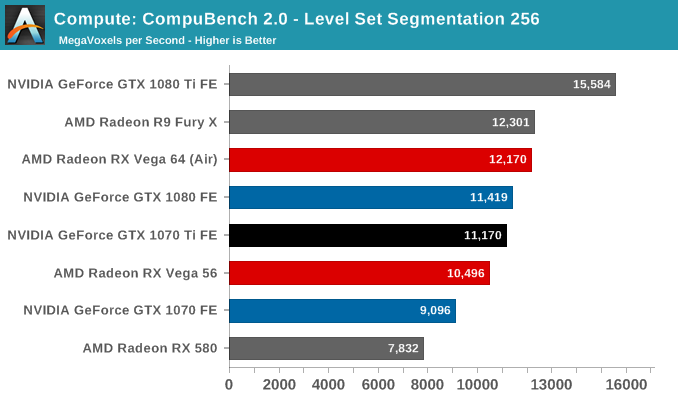

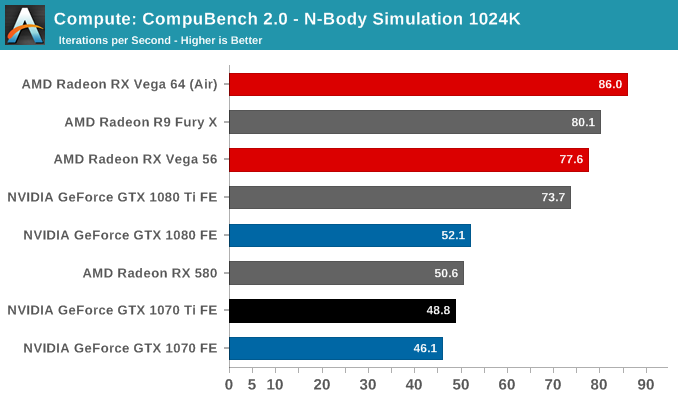

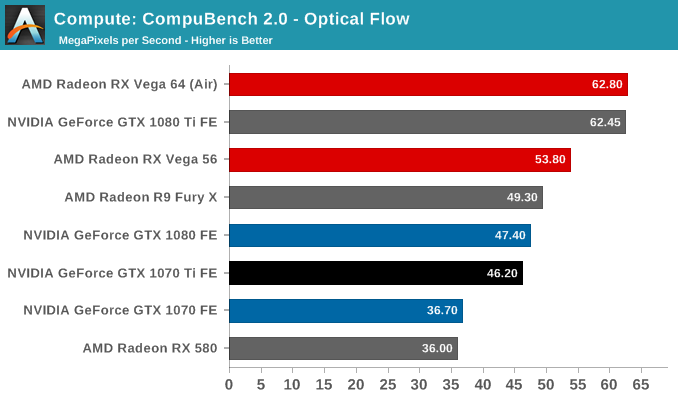

For our second set of compute benchmarks we have CompuBench 2.0, the latest iteration of Kishonti's GPU compute benchmark suite. CompuBench offers a wide array of different practical compute workloads, and we’ve decided to focus on level set segmentation, optical flow modeling, and N-Body physics simulations.

In all three sub-tests, the GTX 1070 Ti makes modest gains over the GTX 1070. Overall, performance is now quite close to the GTX 1080, which makes sense given the relatively small gap in on-paper compute performance between the two cards. It also means that, at least in these benchmarks, the GTX 1070 Ti's lower memory bandwidth isn't hurting it much. Looking at the broader picture, however, all of the NVIDIA GP104 cards trail AMD's Vega family outside of the more evenly matched level set segmentation sub-test.
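The memory bandwidth gap can be estimated the same way compute is: peak bandwidth is the per-pin data rate multiplied by the bus width, divided by 8 to convert bits to bytes. The memory configurations below (8 Gbps GDDR5 for the GTX 1070 Ti and 10 Gbps GDDR5X for the GTX 1080, both on a 256-bit bus) are assumed from NVIDIA's reference specifications and are not stated in this review.

```python
def peak_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s: per-pin rate x bus width / 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8

# Assumed reference memory configurations: (per-pin data rate in Gbps, bus width in bits).
MEMORY = {
    "GTX 1070 Ti": (8.0, 256),   # GDDR5
    "GTX 1080": (10.0, 256),     # GDDR5X
}

bw_ti = peak_bandwidth_gbs(*MEMORY["GTX 1070 Ti"])
bw_1080 = peak_bandwidth_gbs(*MEMORY["GTX 1080"])
print(f"GTX 1070 Ti: {bw_ti:.0f} GB/s, GTX 1080: {bw_1080:.0f} GB/s")
print(f"GTX 1070 Ti has {bw_ti / bw_1080:.0%} of the GTX 1080's bandwidth")
```

On these assumed figures the GTX 1070 Ti has 20% less peak bandwidth than the GTX 1080, so results this close to the GTX 1080 are a hint that these particular sub-tests aren't leaning heavily on memory bandwidth.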

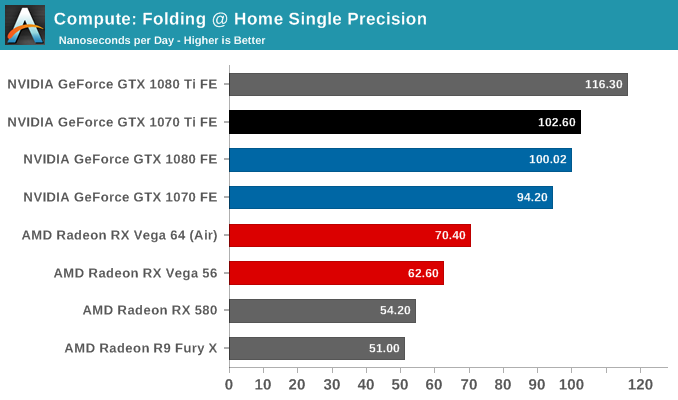

Moving on, our third compute benchmark is the next-generation release of FAHBench, the official Folding@home benchmark. Folding@home is the popular Stanford-backed research and distributed computing initiative that distributes work to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance.
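To illustrate how low double precision throughput is on a consumer card, the sketch below scales a peak FP32 figure by an FP64:FP32 ratio. It assumes GP104's commonly cited 1:32 double precision rate and an on-paper FP32 peak of about 8.19 TFLOPS for the GTX 1070 Ti; both numbers are assumptions for illustration, not measurements from this review.

```python
# Assumed GP104 double precision rate: 1/32 of the single precision rate.
FP64_TO_FP32_RATIO = 1 / 32

def peak_fp64_gflops(peak_fp32_tflops: float, ratio: float = FP64_TO_FP32_RATIO) -> float:
    """Scale a peak FP32 figure (TFLOPS) to a peak FP64 figure in GFLOPS."""
    return peak_fp32_tflops * ratio * 1000

# Example using an assumed ~8.19 TFLOPS FP32 peak for the GTX 1070 Ti.
print(f"{peak_fp64_gflops(8.19):.0f} GFLOPS FP64")
```

A few hundred GFLOPS of FP64 against several TFLOPS of FP32 is why FAHBench's single precision results are the ones worth comparing on cards like these.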

The GTX 1080 and GTX 1070 were already fairly close on this benchmark, so there's not a lot of room for the GTX 1070 Ti to stand out. Interestingly, this is another case where the GTX 1070 Ti slightly exceeds the GTX 1080, though again within the margin of error, which further affirms just how close the new card's compute performance is to the GTX 1080's.

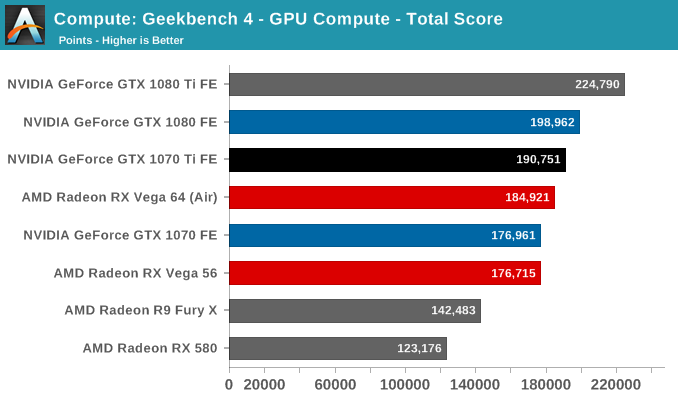

Our final compute benchmark is Geekbench 4's GPU compute suite. A multi-faceted test suite, Geekbench 4 runs seven different GPU sub-tests, ranging from face detection to FFTs, and then combines their scores using the geometric mean. As a result, Geekbench 4 isn't testing any one workload; its score is an average across many different basic workloads.
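To show what a geometric-mean composite does, here is a generic sketch. It is not Geekbench's actual scoring code (any per-test weighting or normalization it applies isn't described here), and the seven sub-test scores are made up for illustration.

```python
import math
from typing import Sequence

def geometric_mean(scores: Sequence[float]) -> float:
    """Geometric mean of positive sub-test scores, computed in log space to avoid overflow."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be a non-empty sequence of positive numbers")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))

# Hypothetical scores for seven sub-tests (not real Geekbench results).
baseline = [1200.0, 800.0, 1500.0, 950.0, 1100.0, 700.0, 1300.0]
# Doubling any single sub-test scales the composite by 2 ** (1/7), about 10.4%.
improved = [baseline[0] * 2] + baseline[1:]

print(f"baseline composite: {geometric_mean(baseline):.1f}")
print(f"composite ratio after doubling one sub-test: "
      f"{geometric_mean(improved) / geometric_mean(baseline):.3f}")
```

The design choice matters: with a geometric mean, a 2x gain in any one of the seven sub-tests moves the composite by the same factor regardless of that sub-test's absolute scale, so no single workload can dominate the overall score.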

As with our other benchmarks, the GTX 1070 Ti more or less bridges the gap between the GTX 1070 and GTX 1080, falling just a few percent short of the GTX 1080. This is a test where NVIDIA was already doing better than average, and now, with its increased SM count, the GTX 1070 Ti has enough compute performance to surpass AMD's RX Vega 64, something the regular GTX 1070 could not do.
