Memory Scaling on Ryzen 7 with Team Group's Night Hawk RGB

by Ian Cutress & Gavin Bonshor on September 27, 2017 11:05 AM EST

Gaming Performance

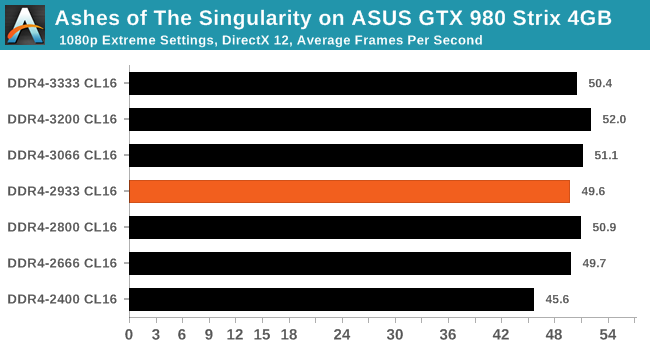

Ashes of the Singularity (DX12)

Seen as the holy child of DX12, Ashes of the Singularity (AoTS, or just Ashes) has been the first title to actively go and explore as many of the DX12 features as it possibly can. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

Performance in Ashes varied across our different memory settings. The DDR4-2400 result is clearly the lowest, at around 45-46 FPS, while everything else rounds to 50 FPS or above. Depending on the configuration, avoiding the slowest memory could be worth an 8-10% difference in frame rates.
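As a rough check on that percentage, the short sketch below works through the arithmetic using only the rounded figures quoted above (45-46 FPS for DDR4-2400 and 50 FPS for the faster kits). These are approximations read from the text rather than exact chart values, and the function names are ours for illustration. The 8-10% range matches the gap measured against the faster result; measured as a gain over the DDR4-2400 result, the same gap is slightly larger.

```python
def deficit_pct(slow_fps, fast_fps):
    """Gap between two results, as a percentage of the faster result."""
    return 100.0 * (fast_fps - slow_fps) / fast_fps


def gain_pct(slow_fps, fast_fps):
    """Gap between two results, as a percentage of the slower result."""
    return 100.0 * (fast_fps - slow_fps) / slow_fps


# Assumed, rounded values taken from the paragraph above, not exact chart data.
FAST_FPS = 50.0
for slow_fps in (45.0, 46.0):
    print(
        f"{slow_fps:.0f} -> {FAST_FPS:.0f} FPS: "
        f"{deficit_pct(slow_fps, FAST_FPS):.1f}% of the faster result, "
        f"{gain_pct(slow_fps, FAST_FPS):.1f}% over the slower result"
    )
```

Either baseline is defensible, as long as it is stated alongside the number.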

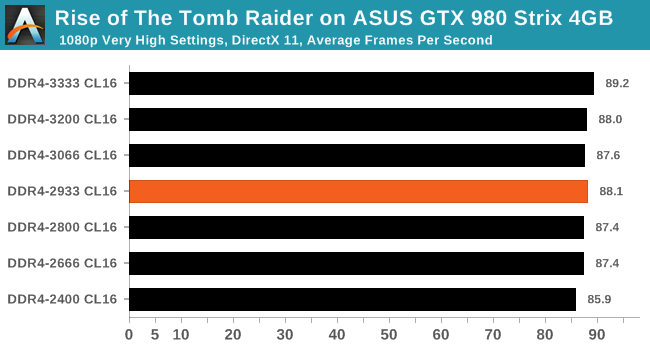

Rise Of The Tomb Raider (DX12)

One of the newest games in the gaming benchmark suite is Rise of the Tomb Raider (RoTR), developed by Crystal Dynamics, and the sequel to the popular Tomb Raider which was loved for its automated benchmark mode. But don’t let that fool you: the benchmark mode in RoTR is very much different this time around.

Visually, the previous Tomb Raider pushed realism to the limits with features such as TressFX, and the new RoTR goes one stage further when it comes to graphics fidelity. This leads to an interesting set of requirements in hardware: some sections of the game are typically GPU limited, whereas others with a lot of long-range physics can be CPU limited, depending on how the driver can translate the DirectX 12 workload.

We encountered insignificant performance differences in RoTR on the GTX 980. The 3.3 FPS increase in average frame rates from top to bottom does not exactly justify the price difference between DDR4-2400 and DDR4-3333 when using a GTX 980 - not in this particular game at least.

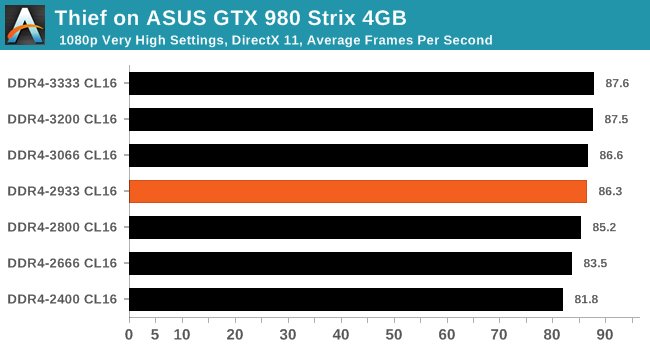

Thief

Thief has been a long-standing title in the hearts of PC gamers since the introduction of the very first iteration back in 1998 (Thief: The Dark Project). Thief is the latest reboot in the long-standing series and renowned publisher Square Enix took over the task from where Eidos Interactive left off back in 2004. The game itself uses the UE3 engine and is known for optimised and improved destructible environments, large crowd simulation and soft body dynamics.

For Thief, there are some small gains to be had from moving from DDR4-2400 to DDR4-2933, around 5% or so, but after this the performance levels out.

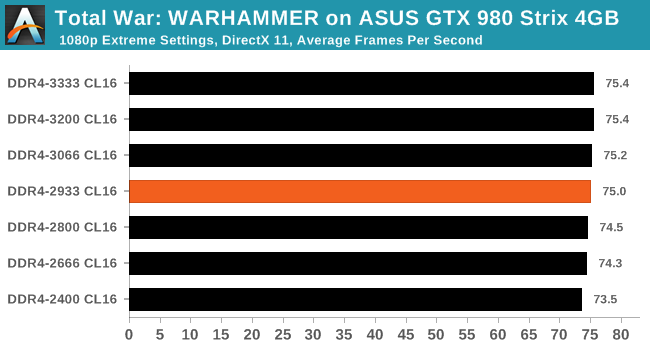

Total War: WARHAMMER

Not only is the Total War franchise one of the most popular real time tactical strategy titles of all time, but Sega has delved into multiple worlds such as the Roman Empire, the Napoleonic era, and even Attila the Hun. More recently the franchise has tackled the popular WARHAMMER series. The developers, Creative Assembly, have integrated DX12 into their latest RTS battle title, which aims to take advantage of the benefits that DX12 can provide. The game itself can come across as very CPU intensive, and is capable of pushing any top end system to its limits.

Even though Total War: WARHAMMER is a very CPU performance focused benchmark, memory had barely any effect on the results.

65 Comments

Drumsticks - Wednesday, September 27, 2017 - link

Interesting findings. I've seen Ryzen hailed on other simple forums like Reddit as having great scaling. There's definitely some at play, but not as much as I'd have thought. How does this compare to Intel? Are there any plans to do an Intel version of this article?

ScottSoapbox - Thursday, September 28, 2017 - link

2nd! I'd like to see how much quad channel helps the (low end) X299 vs the dual channel Z370. With overlapping CPUs in that space it could be really interesting.

blzd - Thursday, September 28, 2017 - link

Yes please compare to Intel memory gains, would be very interested to see if it sees less/more boost from higher speed memory. Great article BTW.

jospoortvliet - Saturday, September 30, 2017 - link

While I wouldn't mind another test there have been plenty over the last year as the authors also pointed out in the opening of the article and the results were simple - it makes barely any difference, far less even than for Ryzen.

.vodka - Wednesday, September 27, 2017 - link

Default subtimings in Ryzen are horribly loose, and there's lots of performance left on the table apart from IF scaling through memory frequency and more bandwidth. You've got B-die here, you could try these, thanks to The Stilt: http://www.overclock.net/t/1624603/rog-crosshair-v...

This has also been explored by AMD in one of their community updates, at least in games:

https://community.amd.com/community/gaming/blog/20...

Ian Cutress - Wednesday, September 27, 2017 - link

The sub-timings are determined by the memory kit at hand, and how aggressive the DRAM module manufacturer wants to make their ICs. So when you say 'default subtimings on Ryzen are horribly loose', that doesn't make sense: it's determined by the DRAM here. Sure there are adjustments that could be made to the kit. We'll be tackling sub-timings in a later piece, as I wanted Gavin's first analysis piece for us to be a reasonable task but not totally off the deep end (as our Haswell scaling piece showed, 26 different DRAM/CL combinations can take upwards of a month of testing). I'll be working with Gavin next week, when I'm back in the office from an industry event the other side of the world and I'm not chasing my own deadlines, to pull percentile data from his numbers and bring parity with some of our other testing.

xTRICKYxx - Wednesday, September 27, 2017 - link

.vodka is right. Please investigate!

looncraz - Wednesday, September 27, 2017 - link

AMD sets its own subtimings as memory kits were designed for Intel's IMC and the subtimings are set accordingly. The default subtimings are VERY loose... sometimes so loose as to even be unstable.

.vodka - Wednesday, September 27, 2017 - link

Sadly, that's the situation right now. We'll see if the upcoming AGESA 1.0.0.7 does anything to get things running better at default settings. This article as is, isn't showing the entire picture.

notashill - Wednesday, September 27, 2017 - link

There's a new AGESA 1.0.0.6b but AMD has said very little about what changed in it.