Silicon Motion Roadmap: Lots Of NVMe SSD Controllers

by Billy Tallis on August 25, 2017 8:00 AM EST

At Flash Memory Summit (FMS) this month, Silicon Motion demonstrated several of its upcoming NVMe SSD controllers, and its engineers presented some of the technologies the company has developed for them. Between the exhibit and the technical presentations, a total of six upcoming SSD controllers were mentioned.

Currently, most SSDs using Silicon Motion controllers feature either the SM2258 SATA controller or the SM2260 NVMe controller. The SM2258XT is a DRAMless variant of the SM2258. Silicon Motion's new SM2259 SATA controller recently debuted in the Intel SSD 545s, but it hasn't been spotted in any other consumer products yet, and new SM2258 products are still being announced. Silicon Motion hasn't shared much information on the SM2259, and it doesn't even appear on their website yet, but thanks to the presentations at FMS we now know that one of its key improvements over the SM2258 is Silicon Motion's second-generation LDPC encoder. Like the SM2260 NVMe controller, the SM2259 uses a 2KB codeword size instead of the 1KB codeword size used by the SM2256 and SM2258 SATA controllers. As a result of the larger codeword size and other changes to the LDPC system, the SM2259 can offer much higher error correction throughput and tolerate a higher error rate than its predecessors. The improved performance comes at the cost of significantly more die area on the controller and higher power draw, but our test results from the Intel SSD 545s indicate these tradeoffs were worthwhile.
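Why would a bigger codeword tolerate more errors? A rough intuition comes from a deliberately simplified model, sketched below in Python. It treats the decoder as if it could fix any pattern of up to t bit errors in an n-bit codeword and nothing more, which is how BCH-style codes behave, not how LDPC decoding actually works. It also assumes independent random bit errors at a hypothetical raw bit error rate, takes the two codeword sizes to be 1 KiB and 2 KiB, and assumes both codes can correct the same fraction (1%) of their bits. None of these numbers come from Silicon Motion.

```python
import math


def codeword_failure_prob(n_bits: int, t: int, rber: float) -> float:
    """Probability that an n_bits codeword holds more than t raw bit errors.

    Simplified bounded-distance model: errors are independent with rate `rber`,
    and the decoder is assumed to succeed if and only if there are <= t errors.
    """
    log_p = math.log(rber)
    log_q = math.log1p(-rber)
    log_n_fact = math.lgamma(n_bits + 1)
    total = 0.0
    for k in range(t + 1, n_bits + 1):
        # log of C(n, k) * p^k * (1 - p)^(n - k); log space avoids overflow
        log_term = (log_n_fact - math.lgamma(k + 1) - math.lgamma(n_bits - k + 1)
                    + k * log_p + (n_bits - k) * log_q)
        total += math.exp(log_term)  # tiny terms simply underflow to 0.0
    return total


RBER = 0.005                 # hypothetical raw bit error rate
CORRECTABLE_FRACTION = 0.01  # hypothetical: both codes fix up to 1% of their bits

for size_bytes in (1024, 2048):
    n = size_bytes * 8
    t = int(n * CORRECTABLE_FRACTION)
    print(f"{size_bytes} B codeword, t={t}: P(fail) ~ {codeword_failure_prob(n, t, RBER):.1e}")
```

With these made-up parameters the 2 KiB codeword fails several orders of magnitude less often than the 1 KiB one, because the error count in a longer codeword clusters more tightly around its average.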

Silicon Motion's upcoming generation of NVMe SSD controllers will have four members, covering a broader range of the market than the current SM2260. The low-end NVMe SSD market will be served by the SM2263 and its DRAMless counterpart, the SM2263XT. These controllers still use up to four lanes of PCIe 3.0, but have only four channels on the flash interface side, the same as Silicon Motion's SATA controllers. The DRAMless SM2263XT will also be used for BGA SSDs that stack the NAND flash on top of the controller in a single package, and it can be used with both the 16mm by 20mm PCIe x4 BGA SSD package standard and the 11.5mm by 13mm PCIe x2 package standard. As with most DRAMless NVMe controllers, the SM2263XT supports the NVMe Host Memory Buffer (HMB) feature, which lets it use a small portion of the host system's DRAM to avoid most of the performance penalties that DRAMless SATA SSDs suffer from.
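To put "a small portion of the host system's DRAM" in perspective, the Python sketch below uses a common rule of thumb for flash translation layers rather than anything Silicon Motion has published: a flat logical-to-physical mapping table with one 4-byte entry per 4 KiB logical page. Under that assumption the full table needs about 1 MiB per GiB of capacity, so a host memory buffer can only cache the entries for part of the drive. The 512 GiB drive and 32 MiB buffer are hypothetical examples.

```python
# Hypothetical rule-of-thumb FTL parameters (not Silicon Motion's documented design)
MAP_ENTRY_BYTES = 4        # one 32-bit physical address per logical page
MAPPING_UNIT_BYTES = 4096  # 4 KiB logical page

MiB = 1024 ** 2
GiB = 1024 ** 3


def full_table_bytes(capacity_bytes: int) -> int:
    """Size of a flat logical-to-physical table covering the whole drive."""
    return capacity_bytes // MAPPING_UNIT_BYTES * MAP_ENTRY_BYTES


def hmb_coverage_bytes(hmb_bytes: int) -> int:
    """Span of logical capacity whose mapping entries fit in a buffer of this size."""
    return hmb_bytes // MAP_ENTRY_BYTES * MAPPING_UNIT_BYTES


print(full_table_bytes(512 * GiB) // MiB, "MiB for a full table on a 512 GiB drive")
print(hmb_coverage_bytes(32 * MiB) // GiB, "GiB of the drive covered by a 32 MiB HMB")
```

In this model a DRAM-equipped drive can hold the whole 512 MiB table on board, while a DRAMless drive with a 32 MiB HMB can keep only the mappings for 32 GiB of the drive close at hand and must fetch the rest from flash, which hurts mainly on random access spread across the whole drive.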

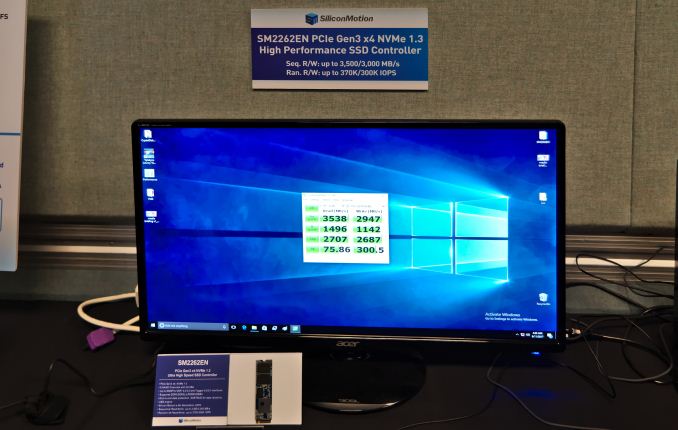

The most direct successor to the SM2260 will be the SM2262. This is another 8-channel controller intended for high performance client and consumer SSDs. The SM2262 uses the same package and pinout as the SM2260 but will offer much higher performance. Reliability and write endurance should also be improved due to the inclusion of the same LDPC upgrades present in the SM2259 SATA SSD controller. Lastly for the upcoming generation, the SM2262EN will be a higher-performance counterpart to the plain SM2262. The -EN version is intended to help Silicon Motion break into the enterprise SSD market, but given its performance specifications it will probably also be used in several enthusiast-oriented consumer SSDs. To help ensure the SM2262EN is suitable for the enterprise market, Silicon Motion has added end-to-end data path protection including ECC on all the internal SRAM buffers. The other three controllers in this generation also get this benefit due to the shared architecture across the product family.
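Silicon Motion didn't detail how its end-to-end data path protection works. As a purely illustrative sketch, the Python below tags each 4 KiB block with a CRC-16 guard when it enters a hypothetical pipeline and re-checks it at a later stage, similar in spirit to the guard tag of T10 Protection Information (polynomial 0x8BB7). The SRAM ECC mentioned above is a separate mechanism that this sketch does not model, and the `protect`/`check` stages are hypothetical names.

```python
def crc16_t10dif(data: bytes) -> int:
    """MSB-first CRC-16 with polynomial 0x8BB7 (the T10 PI guard polynomial), init 0."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x8BB7) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def protect(block: bytes) -> tuple[bytes, int]:
    """Attach a guard tag where data enters the controller (hypothetical stage)."""
    return block, crc16_t10dif(block)


def check(block: bytes, guard: int) -> bool:
    """Re-verify the guard later, e.g. after the data has sat in an internal buffer."""
    return crc16_t10dif(block) == guard


block, guard = protect(bytes(range(256)) * 16)  # one 4 KiB block
assert check(block, guard)

corrupted = bytearray(block)
corrupted[1000] ^= 0x04  # simulate a single flipped bit inside a buffer
print(check(bytes(corrupted), guard))  # False: a CRC catches every single-bit error
```

The point of "end-to-end" is that the guard travels with the data, so corruption introduced at any intermediate stage is caught at the next check rather than silently written to flash.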

Future NVMe controllers beyond the -62/63 generation will include the SM2264 and SM2270. These haven't been officially announced, but a few details have been released. At Computex, our friends at Tom's Hardware spotted a roadmap showing the SM2264 as Silicon Motion's first controller supporting PCIe 4.0. With four PCIe lanes and an eight-channel flash interface, the SM2264 would be the successor to the SM2262 (and possibly also the SM2262EN). Both the SM2264 and SM2270 will feature Silicon Motion's third-generation LDPC encoder, now supporting 4KB codewords as part of an error correction system designed to meet the needs of QLC NAND. When paired with QLC NAND, the SM2264 and SM2270 will allow Silicon Motion to compete in the new product segment of capacity-optimized PCIe SSDs. Most of these will be enterprise SSDs, but if the more optimistic projections for the write endurance of 64+ layer 3D QLC NAND are to be believed, QLC may also have a place in the consumer SSD market.
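The move to PCIe 4.0 matters because of link bandwidth. The Python sketch below computes raw one-direction bandwidth from the per-lane signaling rate (8 GT/s for PCIe 3.0 and 16 GT/s for PCIe 4.0, both using 128b/130b encoding). These figures are ceilings before packet and protocol overhead, so real-world SSD throughput tops out noticeably lower.

```python
# Per-lane signaling rates in GT/s; PCIe 3.0 and 4.0 both use 128b/130b encoding.
GT_PER_S = {"3.0": 8.0, "4.0": 16.0}
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_per_s(gen: str, lanes: int) -> float:
    """Raw one-direction link bandwidth in GB/s, before packet/protocol overhead."""
    return GT_PER_S[gen] * ENCODING_EFFICIENCY * lanes / 8  # 8 bits per byte


for gen, lanes in (("3.0", 2), ("3.0", 4), ("4.0", 4)):
    print(f"PCIe {gen} x{lanes}: {link_bandwidth_gb_per_s(gen, lanes):.2f} GB/s")
```

A PCIe 3.0 x4 link works out to about 3.94 GB/s, so sequential reads around 3.5GB/s are already close to the limit of the current generation, while PCIe 4.0 x4 doubles the ceiling to about 7.88 GB/s.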

Silicon Motion NVMe SSD Controller Comparison

| Controller | SM2260 | SM2263XT | SM2263 | SM2262 | SM2262EN |
| --- | --- | --- | --- | --- | --- |
| Host Interface | PCIe 3.0 x4 | PCIe 3.0 x4 | PCIe 3.0 x4 | PCIe 3.0 x4 | PCIe 3.0 x4 |
| NAND Flash Channels | 8 | 4 | 4 | 8 | 8 |
| DRAM Support | Yes | No | Yes | Yes | Yes |
| Sequential Read | 2400 MB/s | 2400 MB/s | 2400 MB/s | 3200 MB/s | 3500 MB/s |
| Sequential Write | 1000 MB/s | 1700 MB/s | 1700 MB/s | 1900 MB/s | 3000 MB/s |
| Random Read IOPS | 120k | 280k | 300k | 370k | 370k |
| Random Write IOPS | 140k | 250k | 250k | 300k | 300k |
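The table gives random performance in IOPS but sequential performance in MB/s. Converting between the two requires a transfer size, which the table doesn't state; the Python sketch below assumes 4 KiB random transfers, the size most SSD spec sheets use, and decimal megabytes (10^6 bytes).

```python
BLOCK_BYTES = 4096  # assumed 4 KiB transfer size for the random-I/O figures


def iops_to_mb_per_s(iops: float, block_bytes: int = BLOCK_BYTES) -> float:
    """Throughput in decimal MB/s for a given IOPS rate and transfer size."""
    return iops * block_bytes / 1e6


def mb_per_s_to_iops(mb_per_s: float, block_bytes: int = BLOCK_BYTES) -> float:
    """Inverse conversion: IOPS implied by a decimal MB/s figure."""
    return mb_per_s * 1e6 / block_bytes


specs = {"SM2260": (120_000, 140_000), "SM2262": (370_000, 300_000)}
for name, (read_iops, write_iops) in specs.items():
    print(f"{name}: {iops_to_mb_per_s(read_iops):.0f} MB/s random read, "
          f"{iops_to_mb_per_s(write_iops):.0f} MB/s random write")
```

Under that assumption the SM2262's 370k random read IOPS corresponds to roughly 1.5 GB/s, about half of its rated sequential read speed.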

Silicon Motion didn't have much to say at FMS about the timeline for these controllers. However, the fact that there were live demos of both the SM2263 and SM2262EN speaks volumes. The hardware for the upcoming generation is ready, and the firmware is not entirely polished but is good enough to deliver record-setting performance when paired with Intel's 64-layer 3D NAND. With current speeds of around 3.5GB/s for sequential reads and 3GB/s for sequential writes (at QD32, as measured by CrystalDiskMark), and random read and write speeds of about 75MB/s and 300MB/s respectively at QD1, the SM2262EN has a serious chance of challenging even Samsung's NVMe SSDs in just a few months' time. The limiting factor will most likely be the availability of 64-layer 3D NAND.
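Low queue depth results are easier to interpret as latency. With exactly one request outstanding, Little's law reduces to latency = 1 / IOPS, so the QD1 figures above imply an average time per request. The Python sketch below assumes 4 KiB transfers and decimal megabytes, which is how CrystalDiskMark reports its 4K results; the resulting latency covers the whole host-to-drive round trip, not just the controller.

```python
BLOCK_BYTES = 4096  # assumed 4 KiB random transfers (CrystalDiskMark's 4K tests)


def qd1_latency_us(mb_per_s: float, block_bytes: int = BLOCK_BYTES) -> float:
    """Average per-request latency in microseconds implied by a QD1 throughput.

    Little's law: outstanding requests = IOPS * latency. At QD1 that count is 1,
    so latency = 1 / IOPS.
    """
    iops = mb_per_s * 1e6 / block_bytes
    return 1e6 / iops


for label, mbps in (("random read", 75.0), ("random write", 300.0)):
    print(f"QD1 {label}: ~{qd1_latency_us(mbps):.1f} us per 4 KiB request")
```

By this estimate the SM2262EN demo averaged roughly 55 µs per QD1 random read and about 14 µs per random write; writes can complete faster because they are acknowledged once buffered, before the data reaches the flash.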

The SM2264 was previously revealed to be planned for the end of 2018, which means it could start showing up in products in the spring of 2019. The PCIe 4.0 hardware ecosystem will still be in its infancy at that time, so SSD vendors will probably not be in a hurry to deploy SM2264 except for use with QLC NAND. Since the SM2270 controller was not shown on any of Silicon Motion's roadmaps and was only mentioned in the context of the third-generation LDPC encoder, we don't have any indication of which market segments it will target or when it will be available.

Source: Silicon Motion

6 Comments

ddriver - Friday, August 25, 2017

Cool, but what controllers really must be focusing on is random performance. We already have more sequential performance than needed. More really wouldn't make a difference.

MrSpadge - Friday, August 25, 2017

Sure, but does your comment have anything to do with the article? Random read throughput more than tripled going from SM2260 to SM2262, whereas random write more than doubled. Both gains surpass the sequential gains.

I would say random performance at low QDs and latency still have room & need for improvement.

ddriver - Friday, August 25, 2017

"Random read throughput more than tripled going from SM2260 to SM2262, whereas random write more than doubled."

Those are "up to" numbers; actual sustained performance is massively disappointing.

Take that CrystalDiskMark image and the performance claims above it. It claims 370k IOPS for random reads, but it only scores a mere 75 MB/s. 370k IOPS * 4 KB is more than 1.4 GB/s, and actual performance is a staggering 18.6 TIMES lower. Random writes will most likely be even worse if the workload extends beyond the 1 GB used in testing, as the drive would run out of cache.

If you disregard the "up to" claims and focus on actual real-world performance, you will realize that random read improvements are minuscule. So yeah, contrary to your claims, which are based on taking PR numbers for granted, sequential performance has seen significantly more improvement than random performance.

MrSpadge - Friday, August 25, 2017

"Up to" obviously means at high QD, which results in 1496 MB/s = 374k IOPS for reads and 1142 MB/s = 286k IOPS for writes. That's pretty close to the claimed values for pre-production firmware, I'd say.

You may argue that performance at low QD is more important for desktop use, and I have already agreed to that. However, if you want to talk about improvements (as in your first post), you'll have to compare similar quantities: either high QD for new & old hardware (as I did) or low QD for new & old hardware (I don't have the numbers for that).

Taurothar - Saturday, August 26, 2017

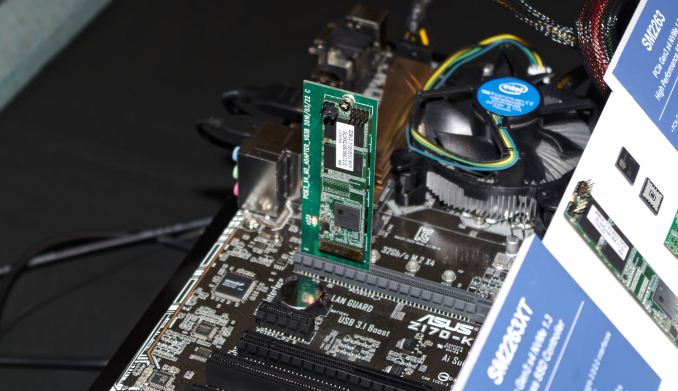

I'm confused by the first picture. Is that an NVMe SSD stuck into a standard PCI-E x16 slot? Is that something that could potentially work or is it just a shitty picture?

Billy Tallis - Saturday, August 26, 2017

There's an adapter between the M.2 connector used by the SSD and the PCIe add-in card connector. But all it's really doing is making up for the different pitch between contacts on the two card types. Beyond that, PCIe lanes are just PCIe lanes, and everything supports auto-negotiation of link speed and lane count.