The Clevo P870DM2 / Mythlogic Phobos 8716 Laptop Review: DTR With GTX 1080

by Brett Howse on October 27, 2016 2:00 PM EST

Display

Not too many years ago, large-screen gaming laptops were still shipping with TN displays. Luckily that’s no longer the case, and the Clevo P870DM2 / Mythlogic Phobos 8716 is available with a choice of displays. The base model is a 1920x1080 IPS panel with a 120 Hz refresh rate. That’s great to see in a gaming laptop, and looking at the gaming results in the last section, this laptop can easily hit 120+ frames per second to keep up with that refresh rate. Mythlogic also offers a 3840x2160 (UHD) AHVA panel with the more common 60 Hz refresh rate, which also includes G-SYNC. For those that want to meet somewhere in the middle, there is now a 2560x1440 AHVA 120 Hz panel option with G-SYNC as well.

The review unit has the lowest resolution panel, which is going to deliver the highest frame rate at native resolution. The GTX 1080 in this review unit could likely handle at least the 2560x1440 panel though, and even the UHD panel would still give reasonable performance, especially with G-SYNC.
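To put the three panel options in perspective, here is a minimal Python sketch that compares how many pixels the GPU has to render per frame and how much time it has per frame at each refresh rate. The helper names `pixel_count` and `frame_budget_ms` are made up for illustration, and actual frame rates will not scale exactly with pixel count.

```python
# Panel options named in the review: (width, height, refresh rate in Hz).
PANELS = {
    "1920x1080 IPS": (1920, 1080, 120),
    "2560x1440 AHVA": (2560, 1440, 120),
    "3840x2160 AHVA": (3840, 2160, 60),
}


def pixel_count(width: int, height: int) -> int:
    """Number of pixels rendered per frame at native resolution."""
    return width * height


def frame_budget_ms(refresh_hz: float) -> float:
    """Time available per frame, in milliseconds, to match the refresh rate."""
    return 1000.0 / refresh_hz


base_pixels = pixel_count(1920, 1080)
for name, (width, height, hz) in PANELS.items():
    pixels = pixel_count(width, height)
    print(
        f"{name}: {pixels:,} pixels "
        f"({pixels / base_pixels:.2f}x the 1080p panel), "
        f"{frame_budget_ms(hz):.2f} ms per frame at {hz} Hz"
    )
```

The UHD panel has exactly four times the pixels of the 1080p panel, which is why the lowest resolution option is the easiest one to drive at a full 120 Hz.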

To measure a display’s characteristics, we use an X-Rite i1Display Pro Colorimeter for brightness and contrast readings, and an X-Rite i1Pro2 Spectrophotometer for accuracy readings. SpectraCal CalMAN 5 Business with a custom workflow is the software used. Mythlogic shipped the device with an included ICC profile, but I found it actually was more accurate without the ICC profile, so the measurements below will be with it disabled.

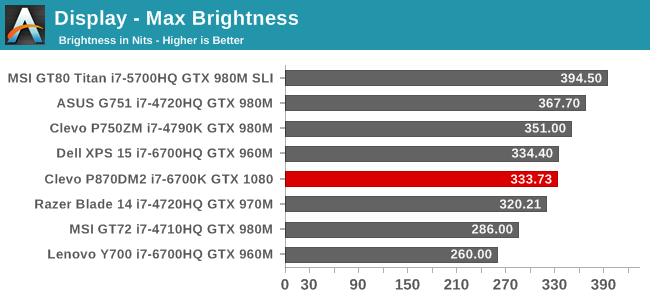

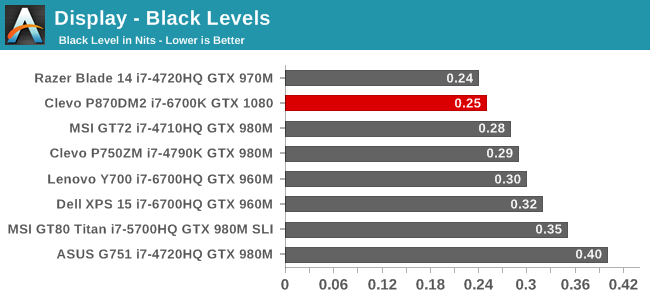

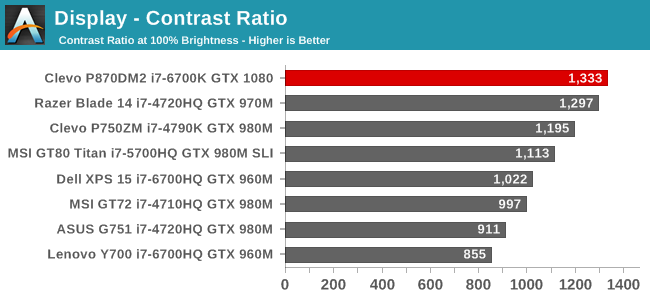

Brightness and Contrast

333 nits is a decent brightness for a laptop like this, which is unlikely to get used outdoors very much. What is more impressive though is the excellent black levels, which give a great contrast ratio of over 1300:1. For those that like to use their computer in the dark, the display goes down to 16 nits, which isn’t the lowest measured, but should be adequate.
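Contrast ratio is simply peak white luminance divided by black luminance, so the figures above imply a ceiling on the black level. A rough Python sketch follows; the function names are illustrative only, and the black level is derived from the quoted numbers rather than taken from a measured value.

```python
def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Static contrast ratio: white luminance divided by black luminance."""
    if black_nits <= 0:
        raise ValueError("black level must be positive")
    return white_nits / black_nits


def implied_black_level(white_nits: float, ratio: float) -> float:
    """Black level (in nits) that would produce the given contrast ratio."""
    if ratio <= 0:
        raise ValueError("contrast ratio must be positive")
    return white_nits / ratio


peak_white = 333.0      # peak brightness quoted in the review, in nits
min_contrast = 1300.0   # the review quotes "over 1300:1"

black = implied_black_level(peak_white, min_contrast)
print(f"Black level is at most about {black:.3f} nits")  # about 0.256 nits
```

Because the measured ratio is over 1300:1, the actual black level would be somewhat below this figure.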

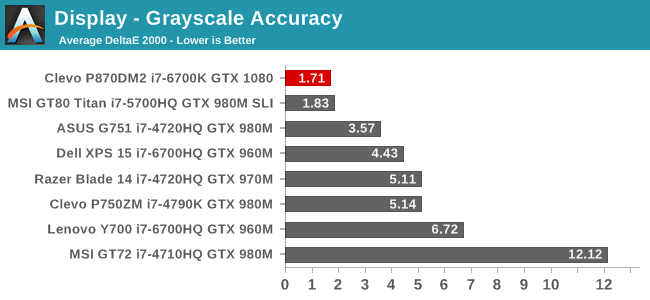

Grayscale

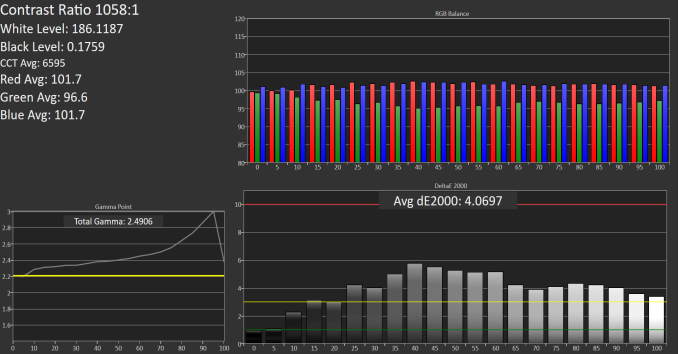

SpectraCal CalMAN 5 - Grayscale with included ICC Profile

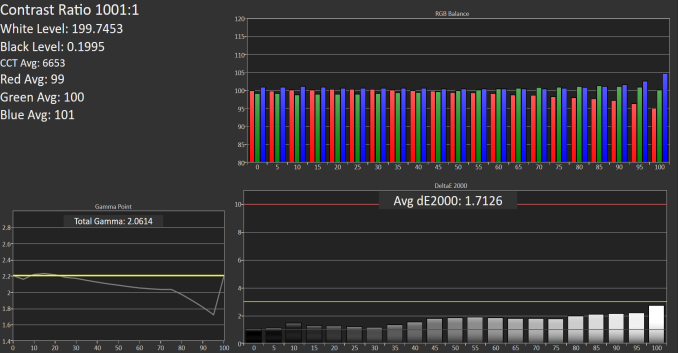

SpectraCal CalMAN 5 - Grayscale with no ICC

The top image is the laptop with the included ICC profile enabled. It’s pretty clear that the profile doesn’t help: it gives the grayscale much higher errors, and the gamma is way too high. With the ICC disabled, the grayscale is fantastic at an average dE2000 of just 1.7. Gamma still has some issues, but overall it’s much better. I don’t provide ICC profiles of laptops I’ve reviewed because there’s no guarantee an ICC made on panel A will help panel B, and in this case, it makes it much worse. The white point is very good too, which is clear when you see how closely the red, green, and blue track at the different white levels. This is one of the best grayscales on any laptop tested.

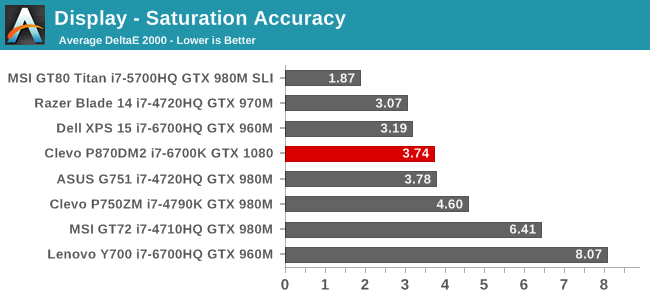

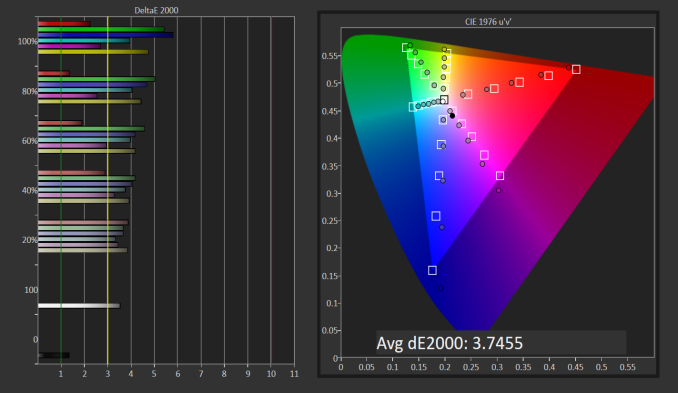

Saturation

Unfortunately, the saturation results weren’t quite as good as the grayscale, but with an average dE2000 of 3.75, it is pretty good in a notebook of this caliber. No single color is responsible for pulling the score up; all of the colors have errors over three at some point in the sweeps. Still, it’s a decent result for this type of laptop.

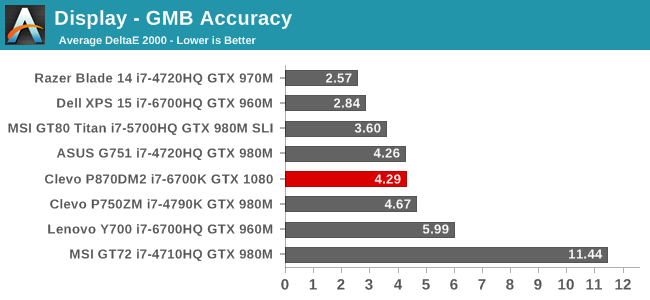

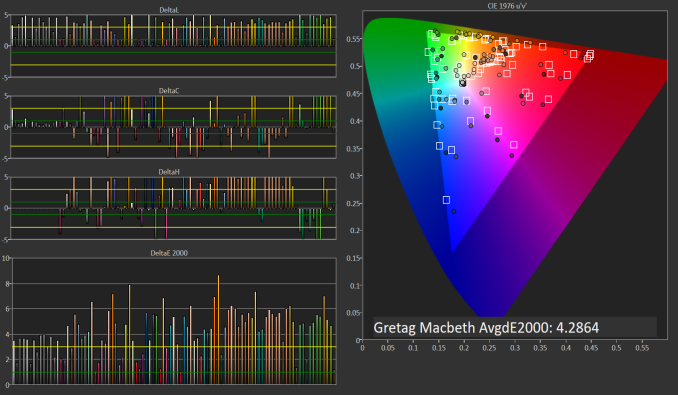

Gretag MacBeth

The GMB score is the worst of the bunch, but it’s still not too bad. 4.3 is definitely above the error levels under 3.0 that would be best, but the overall average is decent. The worst scores are the skin tones, and one individual color even has an error level over 8. Although better is always better, Mythlogic isn’t selling this notebook for color sensitive work, and if you are going to be doing that it would be best to get an external monitor. This is a pretty good result for a gaming notebook.

Overall, the 1920x1080 120 Hz display offers great out of the box white levels, with decent color accuracy. It could be better, but it’s far from the very blue displays seen a few years ago, and the higher refresh rate is going to be a benefit for gaming where movement can be very quick. The gaming I did on this notebook felt very smooth, which was also likely helped by the massive GPU available.

61 Comments

BrokenCrayons - Thursday, October 27, 2016

Minor details...the MYTH Control Center shows an image of a different laptop. It struck me right away because of the pre-Pentium MMX Compaq Presario-esque style hinge design.

As for Pascal, the performance is nice, but I continue to be disappointed by the cooling and power requirements. The number of heat pipes affixed to the GPU, the fact that it's still reaching thermal limits with such cooling, and the absurd PSU requirements for SLI make it pretty obvious the whole desktop-class GPU in a laptop isn't a consumer-friendly move on NV's part. Sure it cuts back on engineering, manufacturing, and part inventory costs and results in a leaner organization, but it's hardly nice to people who want a little more than iGPU performance, but aren't interested in running up to the other extreme end of the spectrum. It's interesting to see NV approach the cost-cutting measure of eliminating mobile GPU variants and turning it into a selling point. Kudos to them for keeping the wool up on that aspect at least.

The Killer NIC is something I think is a poor decision. An Intel adapter would probably have been a better choice for the end user since the benefits of having one have yet to be proven AND the downsides of poor software support and no driver flexibility outweigh the dubious claims from Killer's manufacturer.

ImSpartacus - Thursday, October 27, 2016

Nvidia just named their mobile GPUs differently.

Fundamentally, very little has changed.

A couple generations ago, we had a 780mx that was based on an underclocked gk104. Nvidia could've branded it as the "laptop" 770 because it was effectively an underclocked 770, just like the laptop 1080 is an underclocked 1080.

But the laptop variants are surely binned separately and they are generally implemented on the mxm form factor. So there aren't any logistical improvements just from naming their laptop GPUs differently.

The_Assimilator - Thursday, October 27, 2016

"The number of heat pipes affixed to the GPU, the fact that it's still reaching thermal limits with such cooling, and the absurd PSU requirements for SLI make it pretty obvious the whole desktop-class GPU in a laptop isn't a consumer-friendly move on NV's part."

nVIDIA is doing crazy things with perf/watt and all you can do is complain that it's not good enough? The fact that they can shoehorn not just one, but TWO of the highest-end consumer desktop GPUs you can buy into a bloody LAPTOP, is massively impressive and literally unthinkable until now. (I'd love to see AMD try to pull that off.) Volta is only going to be better.

And it's not like you can't go for a lower-end discrete GPU if you want to consume less power, the article mentioned GTX 1070 and I'm sure the GTX 1060 and 1050 will eventually find their way into laptops. But this isn't just an ordinary laptop, it's 5.5kg of desktop replacement, and if you're in the market for one of these I very much doubt that you're looking at anything except the highest of the high-end.

BrokenCrayons - Thursday, October 27, 2016

Please calm down. I realize I'm not in the target market for this particular computer or the GPU it uses. I'm also not displaying disappointment in order to cleverly hide some sort of fangirl obsession for AMD's graphics processors either. What I'm pointing out are two things:

1.) The GPU is forced to back off from its highest speeds due to thermal limitations despite the ample (almost excessive) cooling solution.

2.) While performance per watt is great, NV elected to put all the gains realized from moving to a newer, more efficient process into higher performance (in some ways increasing TDP between Maxwell/Kepler/etc. and Pascal in the same price brackets such as the 750 Ti @ 60W vs the 1050 Ti @ 75W) and my personal preference is that they would have backed off a bit from such an aggressive performance approach to slightly reduce power consumption in the same price/performance categories even if it cost in framerates.

It's a different perspective than a lot of computer enthusiasts might take, but I much prefer gaining less performance while reaping the benefits of reduced heat and power requirements. I realize that my thoughts on the matter aren't shared so I have no delusion of pressing them on others since I'm fully aware I don't represent the majority of people.

I guess in a lot of ways, the polarization of computer graphics into basically two distinct categories that consist of "iGPU - can't" and "dGPU - can" along with the associated power and heat issues that's brought to light has really spoiled the fun I used to find in it as a hobby. The middle ground has eroded away somewhat in recent years (or so it seems from my observations of industry trends) and when combined with excessive data mining across the board, I more or less want to just crawl in a hole and play board games after dumping my gaming computer off at the local thrift store's donation box. Too bad I'm screen addicted and can't escape just yet, but I'm working on it. :3

bji - Thursday, October 27, 2016

"Please calm down" is an insulting way to begin your response. Just saying.

BrokenCrayons - Thursday, October 27, 2016

I acknowledge your reply as an expression of your opinion. ;)

The_Assimilator - Friday, October 28, 2016

Yeah, but my response wasn't exactly calm and measured either, so it's all fair.

BrokenCrayons - Friday, October 28, 2016

"...so it's all fair."

It's also important to point out that I was a bit inflammatory in my opening post. It wasn't directed at anyone in particular, but was/is more an expression of frustration with what I think is the industry's unintentional marginalization of the lower- and mid-tiers of home computer performance. Still, being generally grumpy about something in a comments box is unavoidably going to draw a little ire from other people so, in essence, I started it and it's my fault to begin with.

bvoigt - Thursday, October 27, 2016

"my personal preference is that they would have backed off a bit from such an aggressive performance approach to slightly reduce power consumption in the same price/performance categories even if it cost in framerates."

They did one better, they now give you same performance with reduced power consumption, and at a lower price (980 Ti -> 1070). Or if you prefer the combination of improved performance and slightly reduced power consumption, you can find that also, again at a reduced price (980 Ti -> 1080 or 980 -> 1070).

Your only complaint seems to be that the price and category labelling (xx80) followed the power consumption. Which is true, but getting hung up on that is stupid because all the power&performance migration paths you wanted do exist, just with a different model number than you'd prefer.

BrokenCrayons - Thursday, October 27, 2016

You know, I never thought about it like that. Good point! Here's to hoping there's a nice performance boost realized from a hypothetical GT 1030 GPU lurking in the product stack someplace. Though I can't see them giving us a 128-bit GDDR5 memory bus and sticking to the ~25W TDP of the GT 730. We'll probably end up stuck with a 64-bit memory interface with this generation.