The Samsung 960 Pro (2TB) SSD Review

by Billy Tallis on October 18, 2016 10:00 AM EST

Performance Consistency

Our performance consistency test explores the extent to which a drive can reliably sustain performance during a long-duration random write test. Specifications for consumer drives typically list peak performance numbers only attainable in ideal conditions. Performance in a worst-case scenario can be drastically different: over the course of a long test, drives can run out of spare area, have to start performing garbage collection, and sometimes even reach power or thermal limits.

In addition to an overall decline in performance, a long test can show patterns in how performance varies on shorter timescales. Some drives will exhibit very little variance in performance from second to second, while others will show massive drops in performance during each garbage collection cycle but otherwise maintain good performance, and others show constantly wide variance. If a drive periodically slows to hard drive levels of performance, it may feel slow to use even if its overall average performance is very high.

To maximally stress the drive's controller and force it to perform garbage collection and wear leveling, this test conducts 4kB random writes with a queue depth of 32. The drive is filled before the start of the test, and the test duration is one hour. Any spare area will be exhausted early in the test and by the end of the hour even the largest drives with the most overprovisioning will have reached a steady state. We use the last 400 seconds of the test to score the drive both on steady-state average writes per second and on its performance divided by the standard deviation.
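The two scores described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the actual scoring script used for the review: it assumes the test log has already been reduced to one IOPS sample per second, and it uses the population standard deviation, which the article does not specify. The function name `steady_state_scores` and its `window` parameter are hypothetical.

```python
import statistics


def steady_state_scores(iops_per_second, window=400):
    """Return (mean IOPS, consistency score) for the last `window` samples.

    Assumption: `iops_per_second` holds one sample per second of the test.
    Assumption: the consistency score is mean / population standard deviation.
    """
    tail = iops_per_second[-window:]
    if len(tail) < 2:
        raise ValueError("need at least two samples to measure variation")
    mean = statistics.fmean(tail)
    stdev = statistics.pstdev(tail)
    # A perfectly flat trace has zero deviation; report that as infinity.
    consistency = mean / stdev if stdev > 0 else float("inf")
    return mean, consistency


# Synthetic one-hour trace: a fast initial phase, then a steady state
# that alternates between two IOPS levels every second.
samples = [100_000] * 3200 + [20_000, 22_000] * 200
mean_iops, consistency = steady_state_scores(samples)
```

A higher consistency score means the drive's steady-state throughput varies less relative to its average, so a drive with modest average IOPS but a very flat trace can outscore a faster but erratic one.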

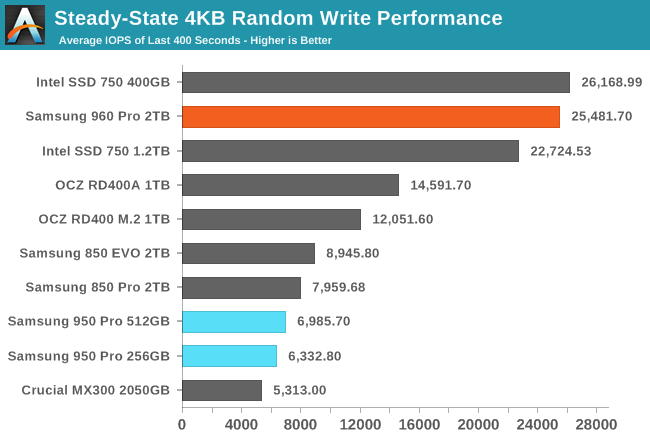

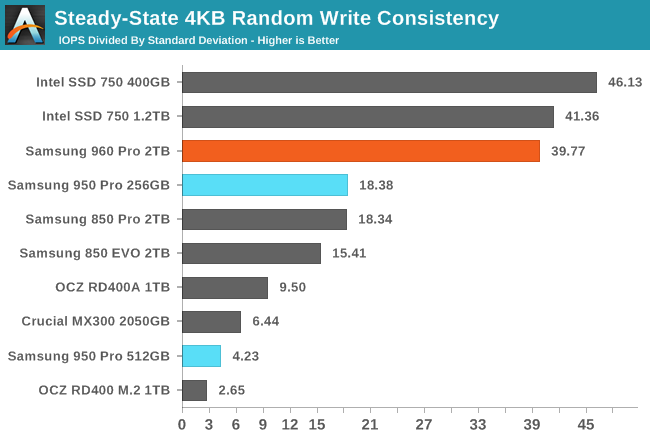

The enterprise SSD heritage of the Intel SSD 750 continues to shine through as it holds on to the lead for steady-state random write performance, but Samsung has mostly caught up with the 960 Pro. This is a huge change from the 950 Pro, which had steady-state performance that was no better than typical SATA SSDs. A few consumer SSDs have offered great steady-state random write performance—most notably OCZ's drives based on the Indilinx Barefoot 3 controller—but the 960 Pro is the first one to reach the level of the Intel SSD 750.

In addition to mostly closing the performance gap, the 960 Pro has a great consistency score that is almost as good as the Intel SSD 750's score. While OCZ's Vector 180 offered remarkably high average performance in its steady state, it was far less consistent than either the Samsung 960 Pro or the Intel SSD 750: the standard deviation of its steady-state performance was more than ten times greater.

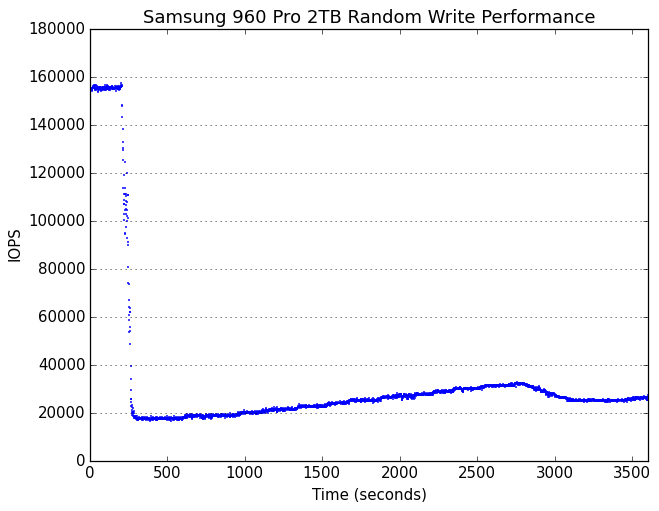

After the initial period of very high performance, the 960 Pro enters a steady state with very good short-term consistency but gradual long-term variation in performance. This is more similar in character to the behavior of the Intel SSD 750 than to Samsung's earlier SSDs, though it's interesting to note that the 960 Pro is more than twice as fast during the initial phase before transitioning to steady state.

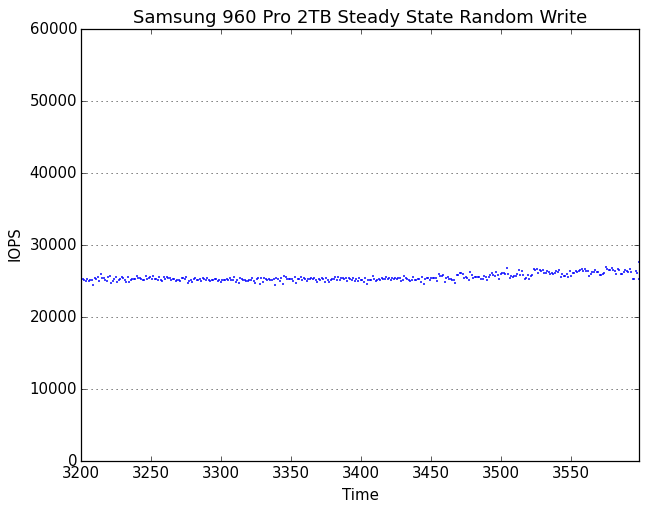

Focusing on the last 400 seconds of the test shows the 960 Pro's steady state to be essentially flawless, rounding out a full page of what can be considered to be perfect scores for a consumer drive. The performance would even make the 960 Pro a pretty good enterprise SSD, and this is usually not the case for drives with consumer-oriented firmware.

72 Comments

JoeyJoJo123 - Tuesday, October 18, 2016 - link

Not too surprised that Samsung, once again, achieves another performance crown for another halo SSD product.

Eden-K121D - Tuesday, October 18, 2016 - link

Bring on the competition

ibudic1 - Tuesday, October 18, 2016 - link

Intel 750 is better. The only thing that you can tell is random write 4K QD1-4. Also it's really bad when you don't have the consistency when you need it. There's nothing worse than a hanging application, it's about consistency not outright speed. Which reminds me...

When evaluating graphics cards a MINIMUM frame rate is WAY more important than average or maximum.

Just like in racing the slowest speed in the corner is what separates great cars from average.

Hopefully Anandtech can recognize this in future reviews

Flying Aardvark - Wednesday, October 19, 2016 - link

Exactly. Intel 750 is still the king for someone who seriously needs storage performance. 4K randoms and zero throttling.

I'd stick with the Evo or 600P, 3D TLC stuff unless I really needed the performance then I'd go all the way up to the real professional stuff with the 750. I need a 1TB M.2 NVME SSD myself and eager to see street prices on the 960 EVO 1TB and Intel 600P 1TB.

iwod - Wednesday, October 19, 2016 - link

Exactly, when majority ( 90%+ ) of consumer usage is going to be based on QD1. Giving me QD32 numbers is like a Mpixel or Mhz race. I used to think we reached the limit of Random read write performance. Turns out we haven't actually improved Random Read Write QD1 much, hence it is likely still the bottleneck.

And yes we need consistency in QD1 Random Speed test as well.

dsumanik - Wednesday, October 19, 2016 - link

Nice to see there are still some folks out there who aren't duped by marketing, random write and full capacity consistency are the only 2 things I look at. When moving large video files around sequential speeds can help, but difference between 500 and 1000 MB/s isn't much, you start the copy then go do something else. In many cases random write is the bottleneck for the times you are waiting on the computer to "do something", and dictates if the computer feels "snappy". Likewise, performance loss when a drive is getting full also makes you 'notice' things are slowing down.

Samsung if you are reading this, go balls out random write performance on the next generation, tyvm.

Samus - Wednesday, October 19, 2016 - link

You can't put an Intel 750 in a laptop though, and it also caps at 1.2TB. But your point is correct, it is a performance monster.

edward1987 - Friday, October 28, 2016 - link

Intel SSD 750 SSDPEDMW400G4X1 PCI-Express-v3-x4 - HHHL AND Samsung SSD 960 PRO MZ-V6P512BW M.2 2280 NVMe

IOPS 230-430K VS 330K

Read speed (Max) 2200 VS 3500

Much better in comparison http://www.span.com/compare/SSDPEDMW400G4X1-vs-MZ-...

shodanshok - Tuesday, October 18, 2016 - link

Let me do a BIG WARNING against disabling write-buffer flushing. Any drive without special provisions for power loss (eg: supercapacitor), can really lose much data in the event of an unexpected power loss. In the worst scenario, entire filesystem loss can happen.

What do the two Windows settings do? In short:

1) "enable write cache on the device" enables the controller's private DRAM writeback cache and it is *required* for good performance on SSD drives. The reason is exactly the one cited in the article: for good performance, flash memory requires batched writes. For example, with DRAM cache disabled I recorded write speed of 5 MB/s on an otherwise fast Crucial M550 256 GB. With DRAM cache enabled, the very same disk almost saturated the SATA link (> 400 MB/s).

However, a writeback cache implies some data loss risk. For that reason the IDE/SATA standard has some special commands to force a full cache flush when the OS needs to be sure about data persistence. This brings us to the second option...

2) "turn off write-cache buffer flushing on the device": this option should absolutely NOT be enabled on consumer, non-power-protected disks. With this option enabled, Windows will *not* force a full cache flush even on critical tasks (eg: update of NTFS metadata). This can have catastrophic consequences if power is lost at the wrong moment. I am not speaking about "simple", limited data loss, but entire filesystem corruption. The key reason for such catastrophic behavior is that cache-flush commands are not only used to store critical data, but also to properly order its writeout. In other words, with cache flushing disabled, key filesystem metadata can be written out of order. If power is lost during an incomplete, badly-reordered metadata write, all sorts of problems can happen.

This option exists for one, and only one, case: when your system has a power-loss-protected array/drives, you trust your battery/capacitor AND your RAID card/drive behaves poorly when flushing is enabled. However, basically all modern RAID controllers automatically ignore cache flushes when the battery/capacitor is healthy, negating the need to disable cache flushes software-side.

In short, if such a device (960 Pro) really needs disabled cache flushing to shine, this is a serious product/firmware flaw which needs to be corrected as soon as possible.

Br3ach - Tuesday, October 18, 2016 - link

Is power loss a problem for M.2 drives though? E.g. my PSU's (Corsair AX1200i) capacitors keep the MB alive for probably 1 minute following power loss - plenty of time for the drive to flush any caches, no?