NVIDIA GeForce FX 5800 Ultra: It's Here, but is it Good?

by Anand Lal Shimpi on January 27, 2003 3:50 AM EST - Posted in GPUs

Anti-Aliasing Quality

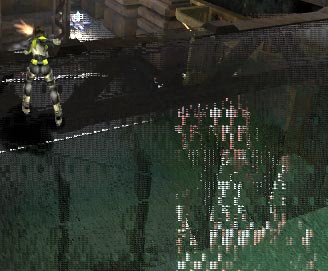

Now that we've investigated the GeForce FX's Anisotropic Filtering modes, the next step is to see how well its Anti-Aliasing engine works. We followed the same procedure as in our AF investigation, and in doing so we uncovered a bug in the 42.63 drivers NVIDIA sent us for testing: this build produces visual artifacts when NVIDIA's 4XS Anti-Aliasing is enabled, so we had to exclude that setting from our tests.

An example of the visual artifacts present with 4XS AA enabled on the GeForce FX

NVIDIA plans to fix this bug in a later revision of the driver, but given that it was the weekend, they could not fix it in time for this review.

Below you'll find the scene we used from the same Inferno Flyby in Unreal Tournament 2003 for our AA quality tests:

The white box is the area we zoomed in on (400%) to investigate the actual quality of the AA algorithm each of the cards employed. Once again, we'll start with the newcomer - NVIDIA's GeForce FX.

To view the different screenshots, simply hold your mouse over the appropriate link and the corresponding screenshot will appear. By default, the AA Disabled screenshot is shown if you don't mouse over any of the other links:

[ AA Disabled | 2X | QC | 4X | 6XS | 8XS ]

The first thing you should notice is that neither of the 2-sample AA algorithms (2X or Quincunx) seems to be doing much actual anti-aliasing. We brought this to NVIDIA's attention but heard nothing in return, so we can only conclude that there are still unresolved issues with the current GeForce FX drivers. There is a performance drop (as you'll soon see) with 2X/QC AA enabled, and there is a slight difference in image quality, but to say that the 2X mode is doing a good job is a stretch.

Luckily, the 4X and higher settings seem to be working just fine. Remember that all of the 'S' modes (e.g. 6XS, 8XS) are available only under Direct3D, not OpenGL. This isn't too big of a problem, considering that even the GeForce FX doesn't have the power to run most of today's games at high resolutions and reasonable frame rates with anything above 4X enabled.

Just so you're familiar with what we're comparing against, let's take a look at the Radeon 9700 Pro:

[ AA Disabled | 2X | 4X | 6X ]

With the Radeon 9700 Pro, ATI got rid of the Performance/Quality AA settings and went to a single algorithm, which makes our job much simpler. Looking at ATI's 2X AA mode, you can already tell that it's doing more than NVIDIA's 2X setting, but to make things easier let's look at the two compared directly.
