NVIDIA Introduces GeForce FX (NV30)

by Anand Lal Shimpi on November 18, 2002 10:13 AM EST. Posted in GPUs

The Chip behind the Name

As you should all know by now, the GeForce FX is a 0.13-micron GPU, but what you may not be aware of is that this chip is composed of no fewer than 125 million transistors. The majority of the increase in transistor count comes from the fact that the GeForce FX GPU is fully floating point from start to finish, a requirement for DirectX 9 compliance.

Note that the 0.15-micron R300 is just over 110 million transistors and Intel's Xeon MP weighs in at 108 million transistors. Although the Xeon MP comes close in transistor count, almost two-thirds of its 108 million transistors are used for cache. The same isn't true for the Radeon 9700 and GeForce FX, where the vast majority of the transistors implement the 3D pipeline rather than cache; these chips are getting quite complex, to say the least.

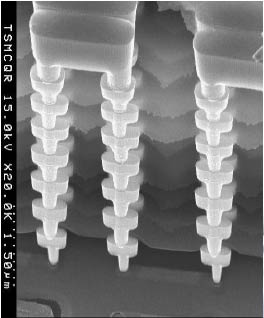

The benefits of going with a 0.13-micron process for GeForce FX are clear: the transistors switch faster, use less power and produce less heat than the previous generation's 0.15-micron transistors. The smaller 0.13-micron transistors also let you pack more of them into a smaller area, which in turn increases the need for better packaging technology. In this case, NVIDIA went with the logical choice of FCBGA (Flip Chip Ball Grid Array) packaging, much like ATI did with the R300; again, this is necessary because of the chip's incredible pin count and extremely densely packed transistors.

NVIDIA has not decided on final clock speeds for the GeForce FX, but at least one version of the GPU will run close to the target frequency of 500MHz. With 8 pixel pipelines at that clock, the GeForce FX already starts out with more fill rate than the Radeon 9700 Pro, which is only clocked at 325MHz.
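
To put rough numbers on that comparison, here is a back-of-the-envelope sketch of theoretical peak pixel fill rate (pipelines times core clock). It assumes the 500MHz target clock holds for the GeForce FX and that the Radeon 9700 Pro also has 8 pixel pipelines; the function name is ours, and real-world throughput will depend on memory bandwidth and other factors.

```python
def peak_fill_rate_mpixels(pixel_pipelines: int, core_clock_mhz: float) -> float:
    """Theoretical peak fill rate in Mpixels/s: one pixel per pipeline per clock."""
    return pixel_pipelines * core_clock_mhz


# Assumption: the GeForce FX ships at its 500MHz target clock.
geforce_fx = peak_fill_rate_mpixels(8, 500)
# Assumption: the Radeon 9700 Pro has 8 pixel pipelines at 325MHz.
radeon_9700_pro = peak_fill_rate_mpixels(8, 325)
fill_rate_ratio = geforce_fx / radeon_9700_pro

print(f"GeForce FX:      {geforce_fx:.0f} Mpixels/s")
print(f"Radeon 9700 Pro: {radeon_9700_pro:.0f} Mpixels/s")
print(f"Ratio:           {fill_rate_ratio:.2f}x")
```

On paper that works out to roughly a 54% fill rate advantage, provided the 500MHz target is actually met.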

Unlike both ATI and Matrox, who have moved to 256-bit memory interfaces, the GeForce FX sticks with a 128-bit memory interface. The 128-bit interface is composed of four independent, load-balanced 32-bit memory controllers. If you remember the architecture of the GeForce4, you'll notice that the memory controller setup hasn't changed at all. Where NVIDIA manages to remain competitive is by using higher speed "DDR2" memory. We put "DDR2" in quotes because there is no official DDR2 spec for graphics memory; the only difference between this memory and conventional DDR is that its electrical and signaling characteristics are borrowed from the JEDEC DDR2 specification. This memory does not transfer data 4 times per clock; it simply improves on the way data gets in and out of the chip, allowing for much higher clock rates. This should sound familiar, as it is very similar to what ATI did with GDDR3.

NVIDIA is shooting for around a 500MHz clock speed (effectively 1GHz) for the "DDR2" memory on the GeForce FX. NVIDIA partnered with Samsung to provide memory for the GeForce FX built to NVIDIA's specification.
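
As a sanity check on what those figures imply, the sketch below computes raw peak memory bandwidth from bus width and effective data rate. It assumes the ~500MHz memory clock target is met and that the four 32-bit controllers together behave as one 128-bit bus; the function and variable names are illustrative only.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, memory_clock_mhz: float,
                        transfers_per_clock: int = 2) -> float:
    """Raw peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = memory_clock_mhz * 1e6 * transfers_per_clock
    return bytes_per_transfer * transfers_per_second / 1e9


# Four independent 32-bit controllers add up to a 128-bit interface.
bus_width = 4 * 32
# Assumption: memory runs at the ~500MHz target, double data rate (1GHz effective).
geforce_fx_bw = peak_bandwidth_gb_s(bus_width, 500)

print(f"GeForce FX raw peak bandwidth: {geforce_fx_bw:.1f} GB/s")
```

The same function can be pointed at any other card by swapping in its bus width and memory clock.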

The rest of the GeForce FX specs are as follows:

- 0.13-micron GPU

- 125 million transistors

- 8 pixel rendering pipelines, 1 texture unit per pipeline, can do 16 textures per pass

- 1 massively parallel vertex rendering engine

- 4 x 32-bit "DDR2" memory controllers running at ~500MHz DDR

- Up to 48GB/s of memory bandwidth using compression

- AGP 8X support

- Full DX9 Pixel & Vertex Shader support
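
The 48GB/s figure in the list is an effective number, not a raw one. Here is a quick sketch of the arithmetic, assuming the raw peak is a 128-bit bus at 1GHz effective and that the quoted figure is simply raw bandwidth scaled by a best-case compression ratio (our simplification, not something NVIDIA has detailed here):

```python
# Assumption: 128-bit bus (16 bytes per transfer) at the 1GHz effective target rate.
RAW_BANDWIDTH_GB_S = (128 / 8) * 1.0
# "Up to" figure quoted in the spec list.
QUOTED_EFFECTIVE_GB_S = 48.0

implied_ratio = QUOTED_EFFECTIVE_GB_S / RAW_BANDWIDTH_GB_S

print(f"Raw peak bandwidth: {RAW_BANDWIDTH_GB_S:.0f} GB/s")
print(f"Implied best-case compression ratio: {implied_ratio:.0f}:1")
```

In other words, the headline number only holds if compression delivers about three times the raw throughput, so treat it as an upper bound.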

We've explained the 3D pipeline in previous articles so we'll point you back at those for a background understanding of what's going on as we talk about the GeForce FX's pipeline:
