The LG G5 Review

by Matt Humrick on May 26, 2016 8:00 AM EST

Posted in: Smartphones, Snapdragon, Qualcomm, LG, Mobile, Snapdragon 820, LG G5

System Performance

The year 2015 was disappointing for SoC aficionados and difficult for OEMs, who struggled to field devices that delivered better performance and battery life than their previous products. A confluence of factors led to this calamity, including a leaky process node and poor design decisions by several companies. With few options to choose from, LG opted for Qualcomm’s second-tier Snapdragon 808 for the G4. Despite having two fewer CPU cores, the 808 matched or often beat the 810 in sustained workloads because it suffered less thermal throttling.

Fortunately, 2016 is proving to be a renaissance for smartphone SoCs, fueled by Samsung’s 14nm and TSMC’s 16nm FinFET process nodes. Intense competition has given rise to not one but two new CPU microarchitectures: Qualcomm’s Kryo and Samsung’s Mongoose. Of course we continue to see big.LITTLE SoCs based on ARM’s Cortex-A72 and -A53 CPU cores and Apple still has its Twister CPU. It’s a great year for smartphone buyers and processor geeks!

For the G5, LG uses Qualcomm’s Snapdragon 820 SoC, which includes four of its new 64-bit Kryo CPU cores and an upgraded Adreno 530 GPU. While we will be comparing its performance results to several of the latest smartphones using a mixture of SoCs both new and old, we’re not going to discuss the reasons behind the performance deltas we see in any depth. Andrei is currently working on a separate SoC-centric deep-dive article that will discuss and compare the new CPU microarchitectures in much more detail.

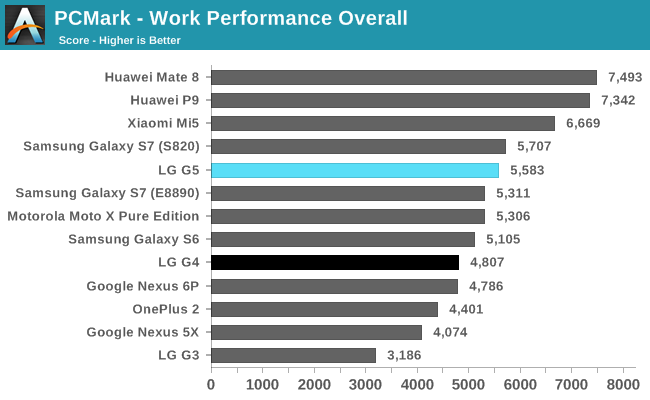

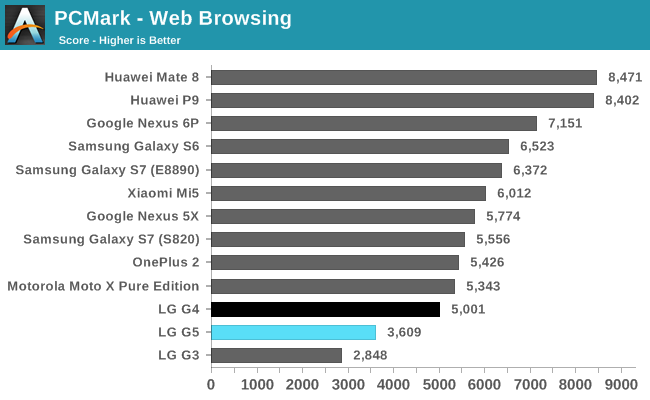

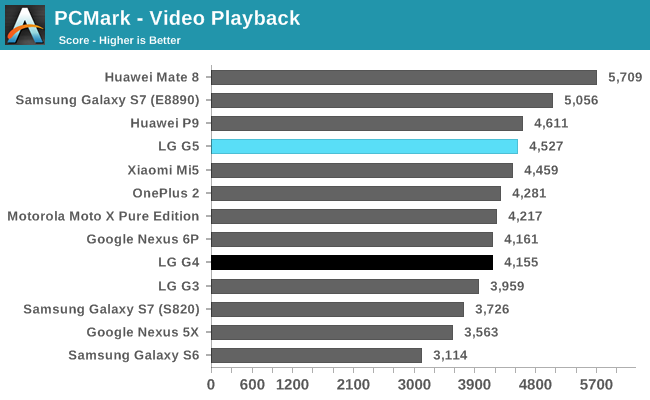

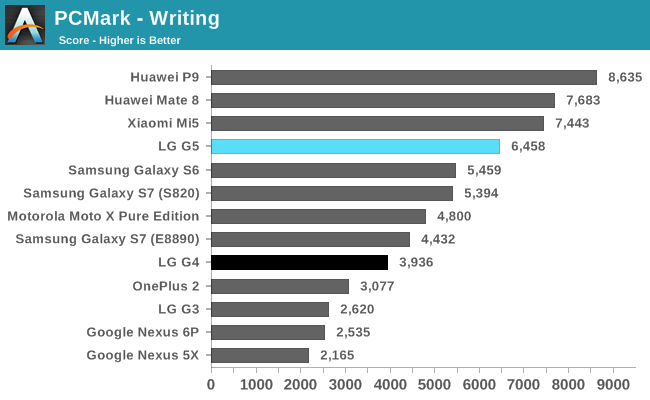

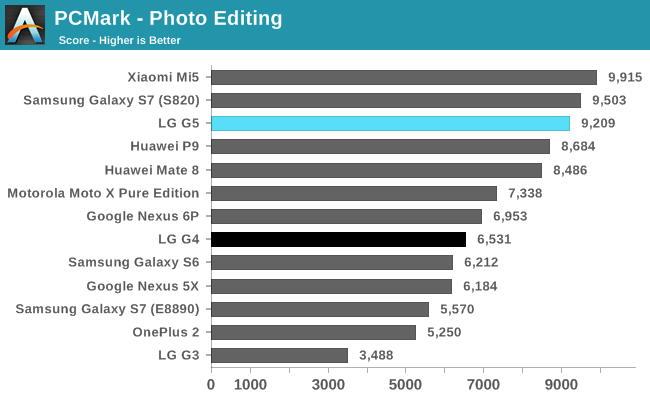

PCMark is currently our best test for evaluating overall system performance. Its real-world workloads exercise the CPU governor in much the same way commonly used apps do, so its results are generally a good indicator of the performance you can expect to see.

The G5 does well overall, offering a modest improvement over the G4. Like the other Snapdragon 820 devices, the G5 delivers good performance in the Video Playback and Writing tests, although not as good as the Kirin 95x-based Huawei Mate 8 and P9. The Photo Editing test, which uses both the CPU and GPU to apply a series of photo effects and also performs some file operations, is where the G5 shows the largest increase (41%) relative to the G4. It’s also the only PCMark test where Snapdragon 820 holds a performance advantage over the Kirin 95x.

The Web Browsing test shows that two different devices using the same SoC do not necessarily perform the same. The Galaxy S7 outperforms the G5 by 54% even though they both use the Snapdragon 820. Monitoring CPU activity and memory bus frequency makes it clear that Samsung and LG use different strategies for balancing performance, battery life, and thermals.

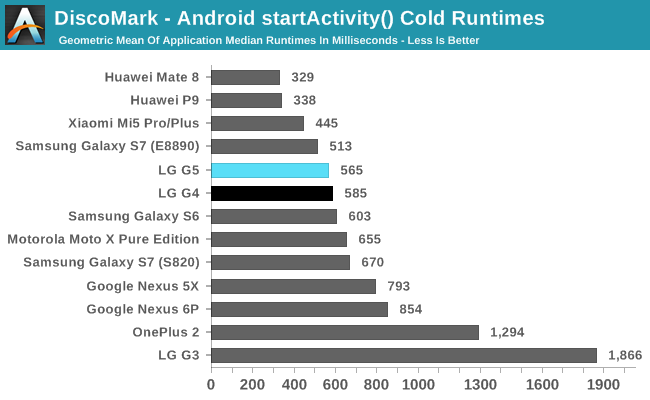

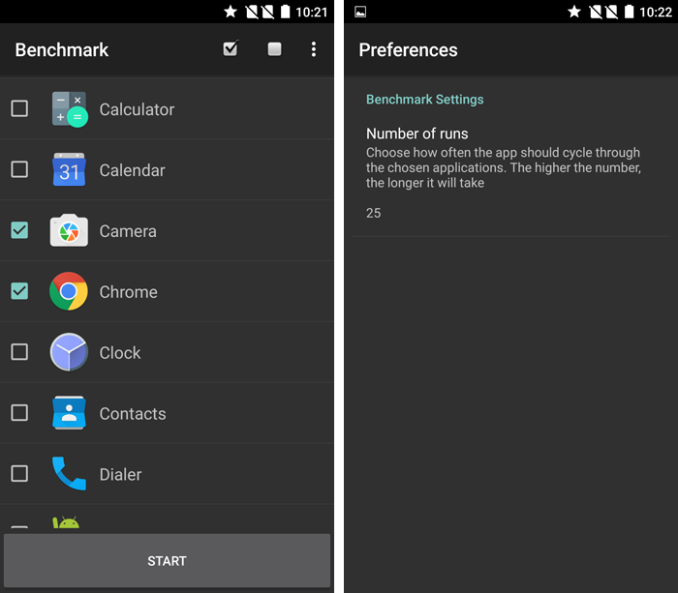

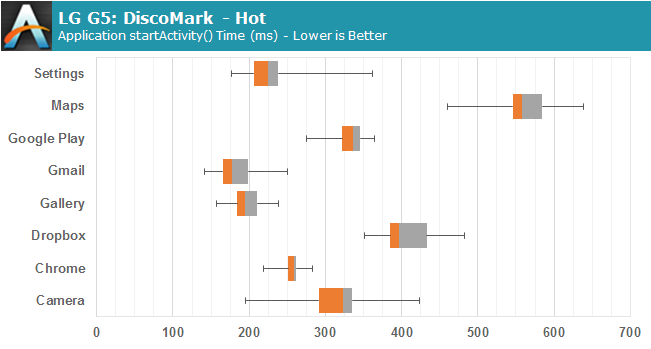

As part of our new 2016 benchmark suite, we're introducing DiscoMark. DiscoMark is an application developed by the Distributed Computing Group at ETH Zürich, and it is an incredibly useful tool for objectively measuring an everyday experience with today's smartphones: application launch times. To date, measuring and capturing this metric required either imprecise high-speed video analysis of an application's runtimes or laborious manual system tracing of a device. The folks over at ETH Zürich found a solution by using Android's accessibility services to automate and measure an application's startActivity() method. In Android's activity life cycle, the default startActivity() is the first method called in the sequence that subsequently builds and renders the UI elements of the app. For the vast majority of applications out there, this is a valid and accurate measurement of the time it takes to see the first UI elements.

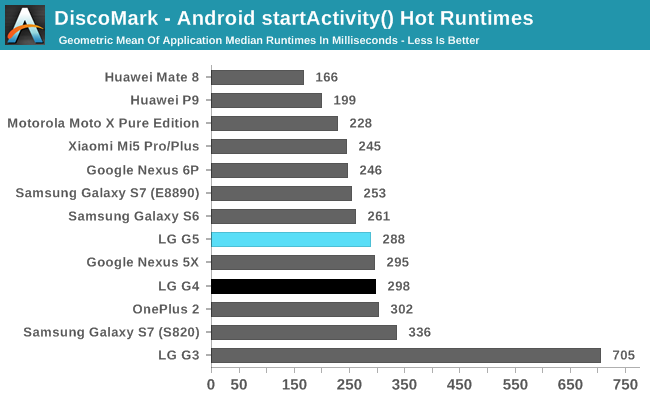

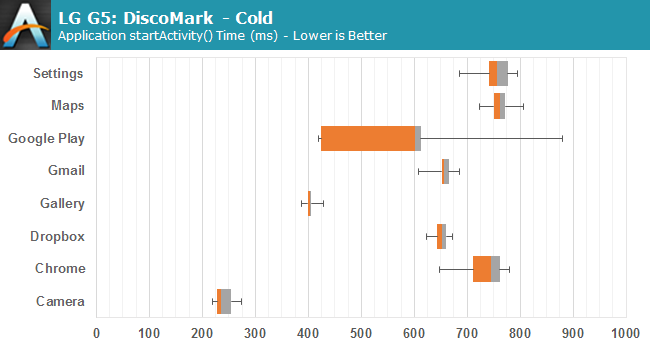

For our benchmark suite we decided to use the new tool in two ways: cold startup measurements and hot startup measurements. In the cold measurements we run our set of 8 applications once through the benchmark cycle in sequence, after which we clear the device's memory by evicting all apps. We repeat this 10 times to build a satisfactory sample pool. The cold test is run after the hot test, so measurements here represent values that are possibly affected by kernel filesystem caching and stable device memory management behavior. The cold runtimes are affected by both a device's SoC performance and its NAND performance, making this an accurate representation of the time required to launch an app not already in memory. The hot runtimes are a sequence of 25 runs with the first 5 discarded to help compensate for cold filesystem caches, making this a relatively accurate representation of real-world switching between applications that are already loaded in memory.
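To make the hot-run procedure concrete, here is a minimal Python sketch of the trimming step described above. It assumes the launch times for one app have already been collected into a plain list in run order; the function name and constants are hypothetical and are not part of DiscoMark.

```python
from statistics import median

HOT_RUNS = 25     # total hot launches per app, as described in the text
HOT_WARMUP = 5    # leading runs discarded to offset cold filesystem caches


def trim_hot_runs(launch_times_ms, warmup=HOT_WARMUP):
    """Drop the first `warmup` runs and return the remaining samples.

    `launch_times_ms` is assumed to be in the order the runs were taken.
    """
    if len(launch_times_ms) <= warmup:
        raise ValueError("need more runs than the warm-up count")
    return list(launch_times_ms[warmup:])


# Illustrative, made-up timings: the first few launches are slower.
runs = [310, 290, 260, 240, 230] + [200] * 20
kept = trim_hot_runs(runs)
hot_median = median(kept)  # per-app value that feeds the final score
```

With 25 runs and 5 discarded, 20 samples remain per app for the hot test; the cold test keeps all 10 of its samples.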

To present the data, we use the geometric mean of each application's median measured runtime across our samples. The applications chosen include both third-party applications and some of the most used OEM applications. This mix ensures that we represent both raw speed in apples-to-apples comparisons between devices and performance of some cornerstone applications, including Settings, Gallery, and Camera launch times. For advanced readers who are curious about the breakdown between third-party and OEM applications, we chose to display the data in whisker charts, which represent the statistical breakdown and behavior of the runtimes measured. Here we show the minimum, maximum, first and third quartile boundaries (Q1, Q3), and the median values of the runtime distributions.
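The aggregation described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the actual tooling: the function names and the dictionary layout are hypothetical, and the quartile method (the "inclusive" method of `statistics.quantiles`) is an assumption, since the text does not say which definition the charts use.

```python
from statistics import geometric_mean, median, quantiles


def device_score(runtimes_by_app):
    """Geometric mean of each app's median launch time (lower is faster).

    `runtimes_by_app` maps an app name to its list of measured runtimes.
    """
    medians = [median(samples) for samples in runtimes_by_app.values()]
    return geometric_mean(medians)


def five_number_summary(samples):
    """Values a whisker chart shows: min, Q1, median, Q3, max."""
    q1, q2, q3 = quantiles(samples, n=4, method="inclusive")
    return {"min": min(samples), "q1": q1, "median": q2,
            "q3": q3, "max": max(samples)}


# Made-up example data in milliseconds, for illustration only.
example = {
    "Settings": [100, 100, 100],
    "Camera": [400, 400, 400],
}
score = device_score(example)            # geometric mean of 100 and 400
summary = five_number_summary([1, 2, 3, 4, 5])
```

The geometric mean keeps one slow-launching app from dominating the score the way an arithmetic mean would, which suits a mix of small and large apps.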

The G5 launches apps quickly, similar to the Galaxy S6 and S7 devices. At least for the smaller apps we tested, the G5’s UFS 2.0 NAND does not provide any noticeable performance increase over the G4’s eMMC 5.0 storage; however, they both show more than a 3x improvement over the older G3, which is very noticeable in normal use. The G5 is also at least 29% faster than the Nexus 5X and Nexus 6P. This does not sound like a lot, but it’s enough to make the 6P feel slow after using the G5 for a while. What’s surprising is just how quickly Huawei’s Mate 8 and P9 open apps: about 40% faster than the G5.

There’s a tighter grouping when it comes to switching between open apps, which is to be expected. Huawei’s Mate 8 and P9 still lead the group, averaging at least 45% faster than the G5. Once again the G5 does not offer much improvement over the G4, but it does manage to outperform the Snapdragon 820 version of the Galaxy S7.

Both of our user experience tests, PCMark and DiscoMark, confirm our subjective observations: The G5 simply feels fast, especially when coming from a phone more than one generation old. The UI is fluid, apps launch quickly, and browser scrolling is pretty smooth. It’s generally comparable to the Galaxy S7, although Samsung’s phone performs a little better with web browsing in Chrome and even better with its native browser.

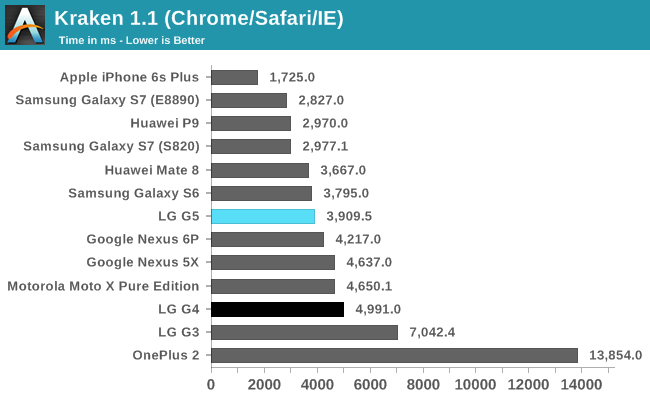

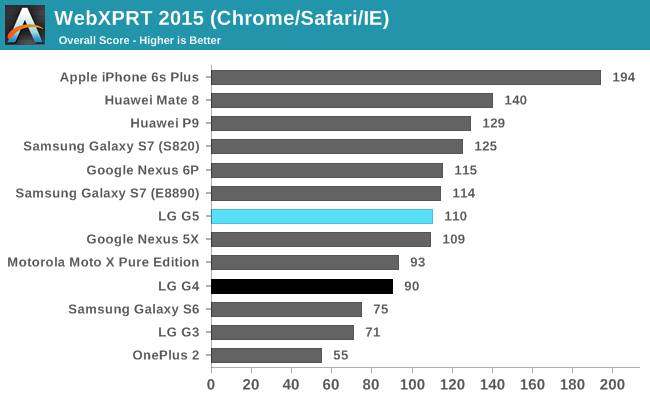

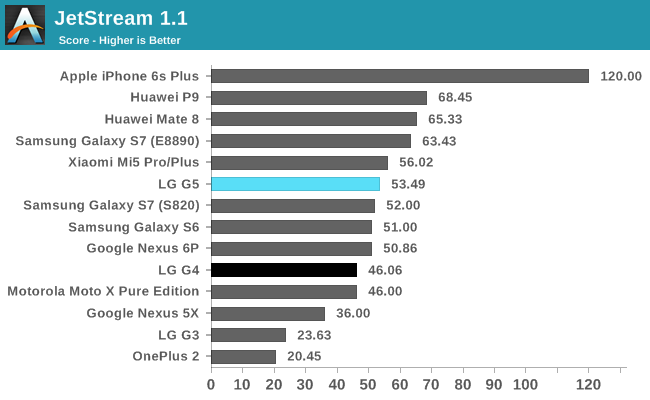

Google’s Chrome is the only browser that comes installed on the G5, so that’s what we used for our browser testing. The Mate 8 and P9 are at or near the top of the chart in each test, outperforming the G5 by 17% or more in WebXPRT 2015 and JetStream. There’s a similar gap of around 20% between the G5 and G4. The G5 is faster than both Nexus phones in most cases, but falls a little behind the Galaxy S7.

The LG G5 is not the fastest flagship phone, but it’s certainly competitive. Anyone upgrading from a phone more than a year old will notice how much faster the G5 feels. Even the Nexus 6P feels slow by comparison. The only negative thing I can say about system performance is the G5’s slightly slower web browsing experience, but that’s just nitpicking.

92 Comments

osxandwindows - Thursday, May 26, 2016 - link

finally!.

zeeBomb - Thursday, May 26, 2016 - link

Damn... This review surprised me! Still won't get it, but nice to have it finally done

Alexey291 - Thursday, May 26, 2016 - link

As usual their reviews are so late that the devices of the current generation have been bought already by those who was planning to buy them. Unless it's an iPhone ofc. That shit gets a review in a week

tuxRoller - Thursday, May 26, 2016 - link

First, that's probably an exaggeration. Second, there are only a couple of iphone launches every year, at most. That's a much easier load to track compared to the swarm of android devices that dribble out over the year (with a plurality released around March, tbh).

marcolorenzo - Sunday, May 29, 2016 - link

So what? Why does it matter how many Android devices there are compared to iPhones? The OS has nothing to do with reviewing a phone, that kind of segregation makes absolutely no logical sense. Of course, during this period when several manufacturers decide to release their phones at the same time, the workload of reviewers would suddenly spike but it still doesn't excuse the delays they have during other times of the year. Let's just face facts. IPhones, like sex, sells. Of course they would double down and getting a review of the latest iPhones out the door, they get more viewers that way.

tuxRoller - Sunday, May 29, 2016 - link

It matters because the person I responded to mentioned how iPhone reviews come out relatively quickly. I responded by explaining my understanding of the situation, which is that phones are, in a practical sense, categorized by their OS. Specifically with regards to apple, they are the only ones who produce ios phones, and only make, at most, a couple of releases a year. What's more, those particular phones are the most purchased of any particular phone so interest is highest in them. For Android, the sales distribution is far more diffuse, and Samsung, for one, has at least a couple major releases every year.

When you have a finite amount of man power you have to distribute it in a way that provides the most benefits.

As for their delays during other times, there were, iirc, mitigating circumstances(reviewers were sick, schoolwork, etc). If you have actual knowledge that indicates otherwise I'd be interested.

anoxy - Monday, May 30, 2016 - link

Wait, are you really that dense? You answered your own question but you're still throwing a hissyfit? More phone releases = more workload = increased delays in using (adequately) and reviewing every phone.

There are usually two iPhones, and they likely receive review units well in advance and thus have plenty of time to use them. And yes, there is a greater incentive to review an insanely popular device like the iPhone. Why does that upset you?

Ranger1065 - Friday, May 27, 2016 - link

Nicely written article, but even, "better late than never" barely applies. Anandtech takes another GIANT step, towards obsolescence...

Alex J. - Friday, May 27, 2016 - link

I agree, it's pretty disappointing how more and more "late" all of their reviews are becoming... At this rate, the HTC10 review would probably come at the end of the summer. And yea, "better late than never" phrase is also losing its relevancy - most of the people whom I personally know and who were planning to "upgrade" their Android phones this year have already done so, meaning the "late" reviews like this have lost all relevance to them. But whatever - if Purch Media wants to run Anandtech into the ground - it's their choice.

DiamondsWithaZ - Monday, September 19, 2016 - link

It did haha. The HTC 10 review literally just got posted... The phone has been out for almost 6 months.