AMD Reveals Polaris GPU Architecture: 4th Gen GCN to Arrive In Mid-2016

by Ryan Smith on January 4, 2016 9:00 AM EST

For much of the last month we have been discussing bits and pieces of AMD’s GPU plans for 2016. As part of the Radeon Technologies Group’s formation last year, the group’s leader and chief architect, Raja Koduri, has set about making his mark on AMD’s graphics technology. Along with consolidating all graphics matters under the RTG, Raja and the rest of the RTG have also set about changing how they interact with the public, with developers, and with their customers.

One of those changes – and the impetus for these recent articles – has been that the RTG wants to be more forthcoming about future product developments. Traditionally AMD always held their cards close to their chest about architectures, keeping them secret until the first products based on a new architecture launch (and even then sometimes not talking about matters in detail). With the RTG this is changing, and similar to competitors Intel and NVIDIA, the RTG wants to prepare developers and partners for new architectures sooner. As a result the RTG has been giving us a limited, high-level overview of their GPU plans for 2016.

Back in December we started things off talking about RTG’s plans for display technologies – DisplayPort, HDMI, Freesync, and HDR – and how the company would be laying the necessary groundwork in future architectures to support their goals for higher resolution displays, more ubiquitous Freesync-over-HDMI, and the wider color spaces and higher contrast of HDR. The second of RTG’s presentations that we covered was focused on their software development plans, including Linux driver improvements and the consolidation of all of RTG’s various GPU libraries and SDKs under the GPUOpen banner, which will see these resources released on GitHub as open source projects.

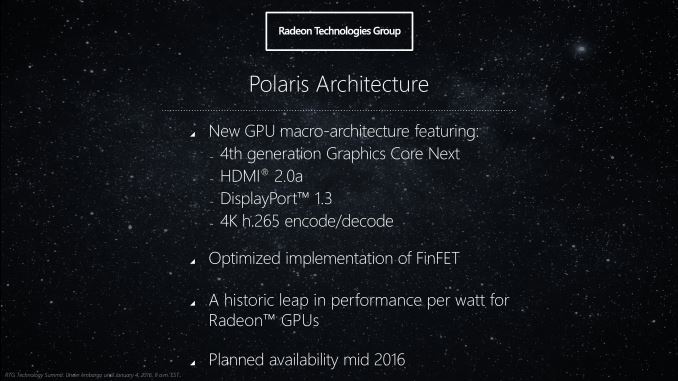

Last but not least among RTG’s presentations is without a doubt the most eagerly anticipated subject: the hardware. As RTG (and AMD before them) has commented on in the past couple of years, a new architecture is being developed for future RTG GPUs. Dubbed Polaris (the North Star), RTG’s new architecture will be at the heart of their 2016 GPUs, and is designed for what can now be called the current-generation FinFET processes. Polaris incorporates a number of new technologies, including a 4th generation Graphics Core Next design for the heart of the GPU, and of course the new display technologies that RTG revealed last month. Finally, the first Polaris GPUs should be available in mid-2016, or roughly 6 months from now.

First Polaris GPU Is Up and Running

But before we dive into Polaris and RTG’s goals for the new architecture, let’s talk about the first Polaris GPUs. With the first products expected to launch in the middle of this year, it’s no surprise that RTG has their first GPUs back from the fab and up and running. To that end – and this is something I am sure many of you are eager to hear about – RTG showed off the first Polaris GPU in action as part of their presentation, however briefly.

As a quick preface here, while RTG demonstrated a Polaris-based card in action, we the press were not allowed to see the physical card or take pictures of the demonstration. Similarly, while Raja Koduri held up an unsoldered version of the GPU used in the demonstration, again we were not allowed to take any pictures. So while we can talk about what we saw, at this time that’s all we can do. I don’t think it’s unfair to say that RTG has had issues with leaks in the past, and while they wanted to confirm to the press that the GPU and the demonstration were real, they don’t want the public (or the competition) seeing the GPU before they’re ready to show it off. That said, I do know that RTG will be at CES 2016 and plans to recap Polaris as part of AMD’s overall presence, so we may yet see the GPU at CES after the embargo on this information has expired.

In any case, the GPU RTG showed off was a small one. And while Raja’s hand is hardly a scientifically accurate basis for size comparisons, if I had to guess I would wager it’s a bit smaller than RTG’s 28nm Cape Verde GPU or NVIDIA’s GK107 GPU, which is to say that it’s likely smaller than 120mm². This is clearly meant to be RTG’s low-end GPU, and given the evolving state of FinFET yields, I wouldn’t be surprised if this was the very first GPU design they got back from GlobalFoundries, as its size makes it comparable to current high-end FinFET-based SoCs. In that case it could very well also be the first GPU we see in mid-2016, though that’s just supposition on my part.

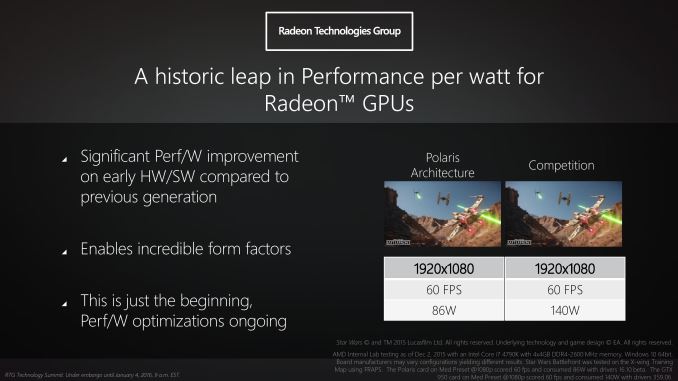

For their brief demonstration, RTG set up a pair of otherwise identical Core i7 systems running Star Wars Battlefront. The first system contained an early engineering sample Polaris card, while the other system had a GeForce GTX 950 installed (specific model unknown). Both systems were running at 1080p Medium settings – about right for a GTX 950 on the X-Wing map RTG used – and generally hitting the 60fps V-sync limit.

The purpose of this demonstration for RTG was threefold: to show that a Polaris GPU was up and running, to show that the small Polaris GPU in question could offer performance comparable to the GTX 950, and finally to show off the energy efficiency advantage of the small Polaris GPU over current 28nm GPUs. To that end RTG also plugged each system into a power meter to measure the total system power at the wall. In the live press demonstration we saw the Polaris system average 88.1W while the GTX 950 system averaged 150W. Meanwhile, in RTG’s own official lab tests (the figures used in the slide above), they measured 86W and 140W respectively.
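To put the two sets of wall-power numbers side by side, here is a small Python sketch that computes the absolute gap and the ratio for both the live demo and RTG's lab figures. It uses only the numbers quoted above; the dictionary keys and the `compare` helper are purely illustrative names, not anything RTG published.

```python
# Whole-system wall-power figures quoted in the article, in watts.
MEASUREMENTS = {
    "live press demo": {"polaris": 88.1, "gtx950": 150.0},
    "RTG lab test": {"polaris": 86.0, "gtx950": 140.0},
}


def compare(polaris_w: float, gtx950_w: float) -> tuple[float, float]:
    """Return (watts saved by the Polaris system, Polaris power as a fraction of the GTX 950 system)."""
    return gtx950_w - polaris_w, polaris_w / gtx950_w


for name, m in MEASUREMENTS.items():
    delta, ratio = compare(m["polaris"], m["gtx950"])
    # Percent formatting rounds the ratio to whole percent.
    print(f"{name}: {delta:.1f} W lower, {ratio:.0%} of the GTX 950 system")
```

Both measurements land in the same place: the Polaris system drew roughly 60% of the GTX 950 system's wall power, with the lab figures giving the 54W gap discussed below.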

Keeping in mind that this is wall power – PSU efficiency and the power consumption of other components are in play as well – the message RTG is trying to send is clear: Polaris should be a very power-efficient GPU family thanks to the combination of architecture and FinFET manufacturing. That RTG is measuring a 54W difference at the wall (140W versus 86W in their lab tests) is definitely a bit surprising, as the GTX 950 averages under 100W to begin with, so even after accounting for PSU efficiency this implies that the Polaris video card consumes about half as much power as the GTX 950. But as this is clearly a carefully arranged demo with a framerate cap and a chip still in early development, I wouldn’t read too much into it at this time.
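The "about half" estimate can be reproduced with a back-of-the-envelope calculation. The Python sketch below assumes that both systems run at the same PSU efficiency and that every other component draws the same power, so the entire wall-power gap is attributed to the graphics cards. The PSU efficiencies (85% and 90%) and the 90W GTX 950 card power are hypothetical values chosen for illustration; the article only says the GTX 950 averages "under 100W".

```python
def estimate_card_power(wall_delta_w: float, psu_efficiency: float,
                        reference_card_w: float) -> float:
    """Estimate the Polaris card's DC power draw.

    Assumes equal PSU efficiency for both systems and identical power for all
    other components, so the whole wall-power gap comes from the graphics cards.
    """
    if not 0.0 < psu_efficiency <= 1.0:
        raise ValueError("psu_efficiency must be in (0, 1]")
    # Convert the gap measured at the wall into DC watts actually delivered.
    dc_delta_w = wall_delta_w * psu_efficiency
    return reference_card_w - dc_delta_w


WALL_DELTA_W = 140.0 - 86.0  # RTG lab figures from the article
ASSUMED_GTX950_W = 90.0      # hypothetical: article only says "under 100W"

for eff in (0.85, 0.90):     # hypothetical PSU efficiencies
    polaris_w = estimate_card_power(WALL_DELTA_W, eff, ASSUMED_GTX950_W)
    print(f"PSU {eff:.0%}: Polaris card ~ {polaris_w:.1f} W, "
          f"{polaris_w / ASSUMED_GTX950_W:.0%} of the GTX 950")
```

Under these assumptions the Polaris card works out to roughly 41 to 44W, a bit under half of the assumed GTX 950 figure. Real PSU efficiency varies with load, so treat this as a rough bound rather than a measurement.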

153 Comments


Dribble - Monday, January 4, 2016

Clearly they do it to make AMD look good - there's plenty of nvidia chips that can hit fps and use less power, particularly mobile ones. Capping fps is a bigger flaw - obviously the AMD chip can only manage 60 hence the cap, but the Nvidia one can manage more. They could have been more blatant and used an OC 980Ti - that would also give you 60 fps if capped at 60 fps and use even more power!

TheinsanegamerN - Tuesday, January 5, 2016

Got any proof that the amd chip can't do more than 60?

fequalma - Thursday, January 14, 2016

You got proof that it can?

beck2050 - Thursday, January 7, 2016

AMD, over promise and under deliver

iwod - Monday, January 4, 2016

No mention of GDDR5X and HBM2? HEVC decode and encode. Do AMD now pay 50 million every year to HEVC Advance?

tipoo - Monday, January 4, 2016

I'm going to cautiously turn my optimism dial up to 3. Raja has some good stuff between his ears (and we're going to also attribute any good news to Based Scott, right?), and the 28nm GPU stalemate sucked for everyone. It's certain that the die shrink will be a big boon for consumers, less certain is if AMD can grab back any market share as Nvidia won't be sitting with their thumbs up their butts either.

fequalma - Thursday, January 14, 2016

C-suite executives can't save floundering companies from extinction. AMD is going extinct because of an erosion of brand value, and inferior technology. Nobody gives a crap if your R9 380 is "competitive". It consumes 150W more than the competition. Inability to improve performance per watt is a fatal design flaw, and it will ultimately limit the availability of AMD's inferior design.

DanNeely - Monday, January 4, 2016

Is starwars battlefront still a game with a very lopsided performance differential in AMDs favor? When I looked at what was needed for 1080p/max for a friend right after launch it was a $200 AMD card vs a $300 nVidia one. I haven't paid attention to it since then, has nVidia made any major driver performance updates to narrow the gap?

cknobman - Monday, January 4, 2016

Star Wars Battlefront is a game that supports the GeForce Experience and is listed on the Nvidia website as an optimized game.

Friendly0Fire - Monday, January 4, 2016

All that means is that Nvidia has performance profiles for their cards.