AMD Reveals Polaris GPU Architecture: 4th Gen GCN to Arrive In Mid-2016

by Ryan Smith on January 4, 2016 9:00 AM EST

Polaris: What’s In a Name?

One area where AMD/RTG struggled quite a bit with their existing Graphics Core Next GPUs has been in giving the press and the public consistent and meaningful architecture names. Product naming has always involved a certain degree of strife, as a single product can have multiple names: an architecture name, a retail name, a development name, etc. Add to that the fact that not all of these names are meant for public use, along with a certain need to avoid calling attention to architectural differences in what consumers are supposed to perceive as a homogeneous product line, and it can quickly become a confusing mess.

Thankfully for Polaris, RTG is revising their naming policies in order to present a clearer technical message about the architecture. Beginning with Polaris, RTG will be using Polaris as something of an umbrella architecture name – what RTG calls a macro-architecture – meant to encompass several aspects of the GPU. The end result is that the Polaris architecture name isn’t all that far removed from what would traditionally be the development family codenames (e.g. Evergreen, Southern Islands, etc.), but with any luck we should see more consistent messaging from RTG, and we can avoid needing to create unofficial version numbers to try to communicate the architecture.

To that end the Polaris architecture will encompass a few things: the fourth generation Graphics Core Next core architecture, RTG's updated display and video encode/decode blocks, and the next generation of RTG's memory and power controllers. Each of these blocks is considered a separate IP block by RTG, and as a result they can and do mix and match various versions of these blocks across different GPUs, such as the GCN 1.2 based Fiji containing an HEVC decoder but not the GCN 1.2 based Tonga. This mix-and-match approach is part of the reason why AMD has always been slightly uneasy about our unofficial naming. What remains to be seen, then, is how (if at all) RTG goes about communicating any changes should they update any of these blocks on future parts, and whether, say, a smaller update like a new video decoder would warrant a new architecture name.

As for the Polaris name itself, RTG tells us that Polaris was chosen as a nod to photons, and ultimately energy efficiency. It’s not clear right now what the individual GPUs will be named (if they get names at all), but given the number of stars in the universe, RTG certainly isn’t at risk of running out of codenames in the near future.

The Polaris Architecture: At A High Level

As I mentioned earlier in this article, today’s reveal from RTG is not meant to be a deep dive into Polaris, fourth generation GCN, or any other aspect of RTG’s architecture. Rather, today is meant to offer a very high level overview of RTG’s architectural and development plans – similar to what we traditionally get from Intel and NVIDIA – with more information to come at a later date. So it’s fair to say that today’s reveal won’t answer many of the major questions regarding the architecture, but it gives us the briefest of hints of what we’ll be talking about in depth here later this year.

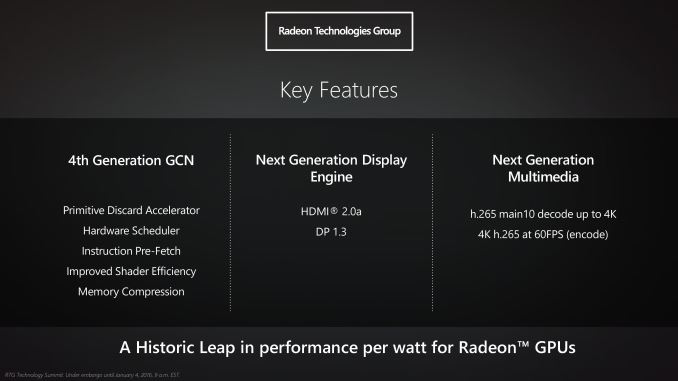

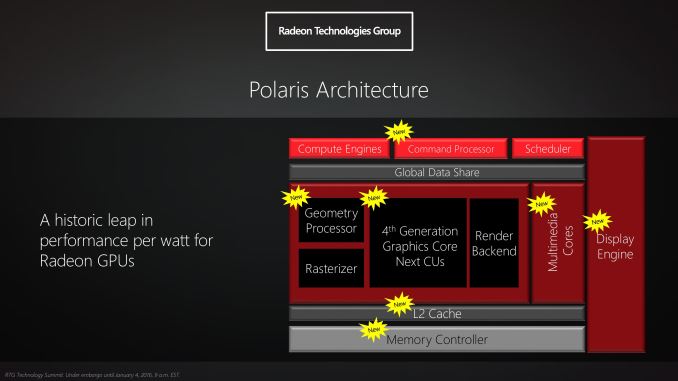

The Polaris architecture encompasses several different RTG technologies, and with its focus on energy efficiency, RTG claims that this will be the largest jump in performance per watt in the history of Radeon GPUs. At its heart is RTG’s fourth generation Graphics Core Next architecture, which despite the generational naming is easily the biggest change to RTG’s shader core architecture since the launch of the first generation of GCN GPUs in 2012. Officially RTG has not assigned a short-form name to this architecture at this time, but as reading the 8-syllable “fourth generation GCN” name will get silly rather quickly, for now I’m going to call it GCN 4.

For GCN 4, RTG will be making a number of changes in order to improve the efficiency of the architecture from both a throughput perspective and a power perspective. Of the few details revealed to us, one of the things we have been reassured of is that GCN 4 is still very much Graphics Core Next. I expect that means retaining the current 16-wide vector SIMD as the base element of the architecture, in which case it should be fair to say that while GCN 4 is the biggest change to GCN since its introduction, RTG is further building on top of GCN rather than throwing large parts out.

RTG’s few comments, though obviously made at a very high level, offer a good deal of insight into where we should expect GCN 4 to go from a throughput perspective. Overall RTG packs quite a bit of hardware into their GCN GPUs, with Fiji reaching 4096 FP32 stream processors; however, putting all of those SPs to good use has been difficult for RTG. This is something we’ve seen first-hand over the last year, as the Radeon Fury ended up being surprisingly close to the Fury X in performance despite the difference in SP counts. Similarly, the recent launch of DirectX 12 and the first uses of asynchronous shading have shown that RTG’s architectures at times benefit significantly from the technology, which indicates that they haven't always been able to fill their SPs with work under normal circumstances.
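To put rough numbers on the SP-count point, here is a minimal sketch that computes theoretical peak FP32 throughput as stream processors × 2 FLOPs per clock (one fused multiply-add) × engine clock. The clocks and the Fury's 3584 SP count are the retail Radeon R9 Fury X and R9 Fury specifications (1050 MHz and 1000 MHz), not figures from RTG's Polaris briefing, and the helper name is purely illustrative. On paper the Fury X has about 20% more shader throughput, a gap that, as noted above, did not show up proportionally in real-world performance.

```python
# Theoretical peak FP32 throughput: each stream processor can retire one
# fused multiply-add per clock, which is conventionally counted as 2 FLOPs.
FLOPS_PER_SP_PER_CLOCK = 2


def peak_fp32_tflops(stream_processors: int, clock_mhz: float) -> float:
    """Return theoretical peak FP32 throughput in TFLOPS (hypothetical helper)."""
    flops_per_second = stream_processors * FLOPS_PER_SP_PER_CLOCK * clock_mhz * 1e6
    return flops_per_second / 1e12


# Retail specs, assumed here for illustration; not from RTG's Polaris briefing.
fury_x = peak_fp32_tflops(4096, 1050)  # Radeon R9 Fury X (full Fiji)
fury = peak_fp32_tflops(3584, 1000)    # Radeon R9 Fury (cut-down Fiji)

print(f"Fury X: {fury_x:.2f} TFLOPS")
print(f"Fury:   {fury:.2f} TFLOPS")
print(f"Paper advantage: {fury_x / fury - 1:.0%}")
```

Peak figures like these assume every SP is busy every clock, which is precisely the assumption the paragraph above says GCN has struggled to meet.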

As a result, many of the disclosed GCN 4 key features point to improving GCN's throughput. Improved shader efficiency is somewhat self-explanatory in that regard. At the same time RTG is also disclosing that we will be seeing some kind of hardware scheduler in GCN 4 along with instruction pre-fetch capabilities, which again should help them improve throughput in ways yet to be determined. Meanwhile, in an improvement for the GPU front-end, GCN 4 will be adding a primitive discard accelerator; we don’t have any further details than this, but it should help the architecture discard unseen geometry more aggressively. Finally, GCN 4 will also include a newer generation of RTG’s memory compression technology, coming just one generation after it was most recently (and significantly) improved for the third generation GCN architecture (GCN 1.2).

What isn’t mentioned in RTG’s key features for GCN 4 but is clearly a major aspect of the core architecture’s design is energy efficiency. While RTG will be drawing a significant amount of their energy efficiency gains from the switch to FinFET (more on this in a bit), the architecture will also play a large role here. RTG was upfront in telling us that on average the node shrink will probably account for more of Polaris's gains than architecture improvements, though this will also be very workload dependent. For its part, the architecture team has been spending quite a bit of time on the matter, analyzing and simulating different workloads and architecture options to find ways to improve the architecture. Truthfully I’m not really sure how much of this will ever be disclosed by RTG – energy efficiency is very much the secret sauce of the GPU industry these days – but hopefully we’ll get to find out more about what kind of energy optimizations GCN 4 includes closer to the launch of the first GPUs.

Meanwhile along with the improvements coming courtesy of GCN 4, Polaris also encompasses RTG’s updated display and multimedia capabilities. As we covered the display tech last month I won’t dwell on that too much here, but suffice it to say Polaris includes a new display controller that will support the latest DisplayPort and HDMI standards.

Finally, along with today’s announcement RTG is also disclosing a bit of what we can expect for Polaris’s multimedia controllers. On the decode side RTG has confirmed that Polaris will include an even more capable video decoder than Fiji; along with existing format support for 8-bit HEVC Main profile content, Polaris’s decoder will support 10-bit HEVC Main10 profile content. This goes hand-in-hand with the earlier visual technologies announcement, as 10-bit encoding will be necessary to prevent banding and other artifacts with the wider color spaces being used for HDR. Meanwhile RTG’s VCE video encode block has also been updated, and HEVC encoding at up to 4K@60fps will now be supported, a major jump over the previous-generation encoder block, which only supported H.264. There is no word on whether that will include Main10 support as well, however.
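Why 10-bit matters for HDR comes down to simple arithmetic: each extra bit doubles the number of code values per color channel. Stretching only 256 levels across a wider color space and brightness range leaves larger steps between adjacent values, and those steps show up as banding. The sketch below counts levels per channel and also computes the pixel rate implied by 4K@60fps encoding, assuming "4K" here means 3840x2160 UHD. Both helpers are hypothetical illustrations, not anything RTG has described.

```python
def code_values(bits_per_channel: int) -> int:
    """Distinct levels a single color channel can represent (hypothetical helper)."""
    return 2 ** bits_per_channel


def pixel_rate(width: int, height: int, fps: int) -> int:
    """Pixels per second an encoder must process for a given video mode."""
    return width * height * fps


eight_bit = code_values(8)   # HEVC Main: 8 bits per channel
ten_bit = code_values(10)    # HEVC Main10: 10 bits per channel
print(eight_bit, ten_bit, ten_bit // eight_bit)  # 256 1024 4

# Assumes "4K" means 3840x2160 (UHD) rather than 4096x2160 (DCI 4K).
print(pixel_rate(3840, 2160, 60))  # 497664000
```

So Main10 offers four times as many levels per channel as Main, while a 4K60 encode has to chew through roughly half a billion pixels every second.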

Polaris Hardware: GDDR5 & HBM

Although not a part of RTG’s presentation, along with the architecture itself we also learned a few items of note about RTG’s hardware plans that I wanted to mention here.

First and foremost, Polaris will encompass both GDDR5 and High Bandwidth Memory (HBM) products. Where the line will be drawn has not been disclosed, but keeping in mind that HBM is still a newer technology, it’s reasonable to expect that we’ll only see HBM on higher-end parts. Meanwhile the rest of the Polaris lineup will continue to use GDDR5, something that is not surprising given the lesser bandwidth needs of lower-end parts and the greater cost sensitivity.
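A rough way to see the HBM/GDDR5 split is the peak-bandwidth formula: bus width in bits times per-pin data rate, divided by 8 to get bytes. The sketch below applies it to Fiji's shipping HBM configuration (a 4096-bit interface at 1 Gbps per pin) and to a 256-bit, 7 Gbps GDDR5 configuration that is purely a hypothetical example of a narrower, cheaper bus, not a disclosed Polaris spec.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps_per_pin: float) -> float:
    """Peak memory bandwidth in GB/s: pins x per-pin rate, converted bits -> bytes."""
    return bus_width_bits * data_rate_gbps_per_pin / 8


# Fiji's HBM: four 1024-bit stacks at 1 Gbps per pin.
fiji_hbm = peak_bandwidth_gbs(4096, 1.0)
# Hypothetical mainstream GDDR5 card: 256-bit bus at 7 Gbps per pin.
gddr5_256 = peak_bandwidth_gbs(256, 7.0)

print(fiji_hbm, gddr5_256)  # 512.0 224.0
```

A lower-end part that cannot use more than a couple hundred GB/s gains little from HBM's extra width, which fits the cost-sensitivity argument above.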

Meanwhile RTG has also disclosed that the first Polaris parts are GDDR5 based. Going hand-in-hand with what I mentioned earlier about RTG’s Polaris demonstration, it seems likely that this means we’ll see the lower-end Polaris parts first, with high-end parts to follow.

As for the specific laptop and desktop markets, RTG tells us that desktop and mobile Polaris parts will launch close together. I wouldn’t be surprised if mobile is RTG’s primary focus since it’s already the majority of PC sales, but even so desktop users shouldn’t be far behind.

153 Comments

smartthanyou - Tuesday, January 5, 2016

So AMD announces their next GPU architecture that will eventually be delayed and then will disappoint when it is finally released. Neat!

EdInk - Tuesday, January 5, 2016

I'm surprised that RTG are comparing their upcoming tech with a GTX 950... how bizarre... why not compare it to another AMD product, as we all know how power hungry their cards have been. The game has also been tested at 1080p, capped @ 60FPS on medium settings... C'MON!!!

mapsthegreat - Wednesday, January 6, 2016

Good Job! AMD! It will be a real gamechanger! Congrats in advance! :D

vladx - Wednesday, January 6, 2016

Since the press called the previous versions GCN 1.0, 1.1, and 1.2, calling the new version GCN 2.0 sounds a lot more natural than GCN 4.

zodiacfml - Wednesday, January 6, 2016

It is not surprising that they employ TSMC for the latest node, as it is their primary producer for their GPUs. GloFo will continue to produce CPUs/APUs, which could mean they might be producing them soon.

melgross - Thursday, January 7, 2016

I haven't been able to read all the comments, so I don't know if this has been brought up already. We read here that FinFET will be used for generations to come. But the industry has acknowledged that FinFET doesn't look like it will work at 7nm. I know that's a ways off yet, but it's a problem nevertheless, as the two technologies touted as its replacement at that node haven't been proven to work either.

So what we're talking about right now is optimization of 14nm, which hasn't yet been done, and then, sometime in 2017, for Intel at least, the beginnings of 10nm. After that, we just don't know yet.

vladx - Thursday, January 7, 2016

It won't be market viable below 5nm anyway, so both Intel and IBM are probably devoting most of their R&D to nanotube research.

mdriftmeyer - Friday, January 8, 2016

Moving to a carbon-based solution like nanotubes and other exotic materials will be the future. Silicon is a dead end.

none12345 - Friday, January 8, 2016

Except it's really more like the difference between 300 watts full system and 250 watts full system. At 8 hours a day, that would be 146 kWh per year. Where I live that's about $20.

And where I live it's actually less than that, because it's a cold climate, where most of the year I would need to run the heater for that difference anyway. My system currently draws just about 300 watts when running maxed (that covers the monitor, network router, etc., everything plugged into my UPS as well), and that's not enough heat for that room in the winter. In the summer it would mean AC, if the house had AC... it does not (not common where I live, because you would only really use it for a month or two).

So, yeah, at least for me I don't care at all if one card draws 50 more watts than the other. I'll buy whatever has the best performance/$ at the time.
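The commenter's arithmetic checks out: a 50 W difference for 8 hours a day over a year is 50 × 8 × 365 = 146,000 Wh, or 146 kWh. A minimal sketch of that calculation follows; the function names are hypothetical, and the electricity price is an assumption back-solved from the quoted ~$20 (about $0.137/kWh), not a figure the commenter gave.

```python
def annual_energy_kwh(extra_watts: float, hours_per_day: float, days: int = 365) -> float:
    """Extra energy drawn per year, in kilowatt-hours (hypothetical helper)."""
    return extra_watts * hours_per_day * days / 1000


def annual_cost(kwh: float, price_per_kwh: float) -> float:
    """Cost of that energy at a flat electricity price."""
    return kwh * price_per_kwh


kwh = annual_energy_kwh(300 - 250, 8)  # the commenter's 50 W gap, 8 h/day
print(kwh)  # 146.0

# Assumed flat rate of ~$0.137/kWh, back-solved from the commenter's "$20".
print(round(annual_cost(kwh, 0.137), 2))
```

Swapping in a local electricity price (or fewer gaming hours per day) is all it takes to redo the estimate for a different situation.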

fequalma - Thursday, January 14, 2016

Take everything in an AMD slide deck with a HUGE grain of salt. There is no way AMD will deliver a GPU that will consume 86W @ 1080p. AMD doesn't have that kind of technology.

No way.