Netgear Nighthawk X8 R8500 AC5300 Router Brings Link Aggregation Mainstream

by Ganesh T S on December 31, 2015 8:00 AM EST

Posted in: Networking, NetGear, Broadcom, 802.11ac, router

Link Aggregation in Action

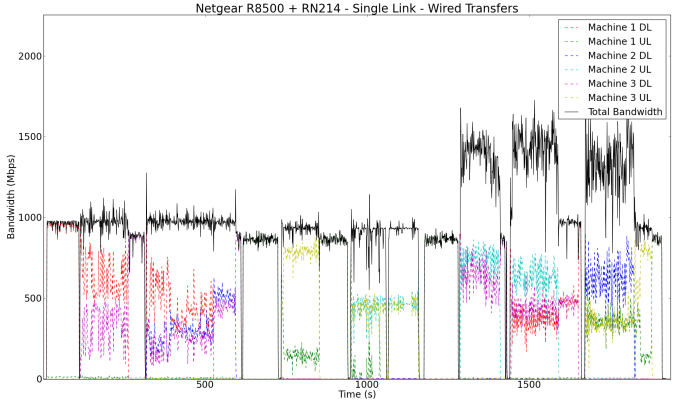

To get an idea of how link aggregation really helps, we first set up the NAS with just a single active network link. The first set of tests downloads the Blu-ray folder from the NAS to the PC connected to port 3, followed by simultaneous downloads of two different copies of the content from the NAS to the PCs connected to ports 3 and 4. The same concept is extended to three simultaneous downloads via ports 3, 4 and 5. A similar set of tests is run to evaluate the uplink part (i.e., data moves from the PCs to the NAS). The final set of tests involves simultaneous upload and download activities from the different PCs in the setup.

The upload and download speeds of the wired NICs on the PCs were monitored and graphed. This gives an idea of the maximum possible throughput from the NAS's viewpoint and also enables us to check if link aggregation works as intended.
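For readers who want to reproduce this kind of monitoring, a rate can be derived from two readings of a NIC's cumulative byte counter. The sketch below covers only that conversion. The counter values are hypothetical, and how the counters are read (which varies by OS) is left out.

```python
def throughput_mbps(bytes_start: int, bytes_end: int, seconds: float) -> float:
    """Average rate in megabits per second between two byte-counter readings."""
    if seconds <= 0:
        raise ValueError("sampling interval must be positive")
    # Bytes -> bits, then divide by the interval and scale to megabits.
    return (bytes_end - bytes_start) * 8 / seconds / 1e6


# Hypothetical readings taken one second apart: 100,000,000 bytes received.
print(throughput_mbps(0, 100_000_000, 1.0))
```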

The above graph shows that the download and upload links are limited to under 1 Gbps (taking into account the transfer inefficiencies introduced by various small files in the folder). However, the full duplex 1 Gbps nature of the NAS network link enables greater than 1 Gbps throughput when handling simultaneous uploads and downloads.

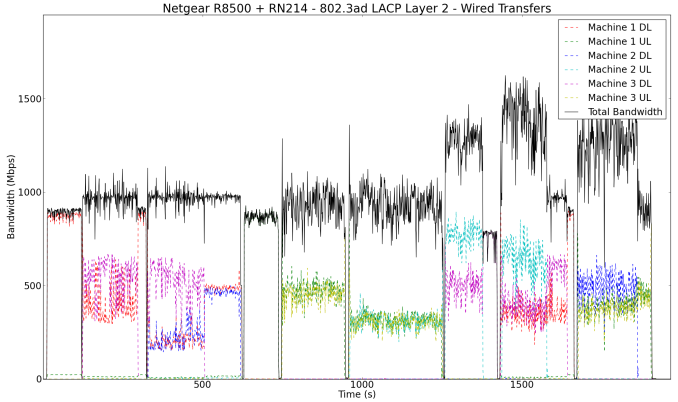

In our second wired experiment, we teamed the ports on the NAS with the default options (other than explicitly changing the teaming type to 802.3ad LACP). This left the hash type at Layer 2. Running our transfer experiments showed that there was no improvement over the single link results from the previous test.

In our test setup, Layer 2 as the transmit hash policy turned out to be ineffective. Readers interested in understanding more about the transmit hash policies, which determine the distribution of traffic across the different physical ports in a team, should peruse the Linux kernel bonding documentation (search for the description of the parameter 'xmit_hash_policy').
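As a rough illustration of why the policy matters, the sketch below follows the simplified formulas that older versions of the kernel bonding documentation give for 'xmit_hash_policy': layer2 hashes only the MAC addresses, while layer2+3 also mixes in the low 16 bits of the IP addresses. The MAC and IP addresses are made up for illustration, the function names are ours, and the real driver differs in detail across kernel versions. It shows how two clients can land on the same link under one policy and on different links under the other; it is not a trace of the testbed.

```python
import ipaddress


def mac_to_int(mac: str) -> int:
    return int(mac.replace(":", ""), 16)


def ip_to_int(ip: str) -> int:
    return int(ipaddress.IPv4Address(ip))


def layer2_link(src_mac, dst_mac, link_count):
    """Simplified layer2: (source MAC XOR destination MAC) mod link count."""
    return (mac_to_int(src_mac) ^ mac_to_int(dst_mac)) % link_count


def layer2_3_link(src_mac, dst_mac, src_ip, dst_ip, link_count):
    """Simplified layer2+3: also XOR in the low 16 bits of (src IP XOR dst IP)."""
    ip_bits = (ip_to_int(src_ip) ^ ip_to_int(dst_ip)) & 0xFFFF
    mac_bits = mac_to_int(src_mac) ^ mac_to_int(dst_mac)
    return (ip_bits ^ mac_bits) % link_count


# Hypothetical (MAC, IP) pairs, not the addresses used in the review.
NAS = ("00:11:22:33:44:10", "192.168.1.50")
PC1 = ("aa:bb:cc:00:00:02", "192.168.1.11")
PC2 = ("aa:bb:cc:00:00:04", "192.168.1.12")

for name, (mac, ip) in (("PC1", PC1), ("PC2", PC2)):
    print(name,
          "layer2 ->", layer2_link(NAS[0], mac, 2),
          "layer2+3 ->", layer2_3_link(NAS[0], mac, NAS[1], ip, 2))
```

With these particular addresses, both clients hash to the same link under layer2 and to different links under layer2+3. Other address combinations can behave differently, which is why the outcome depends on the specific setup.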

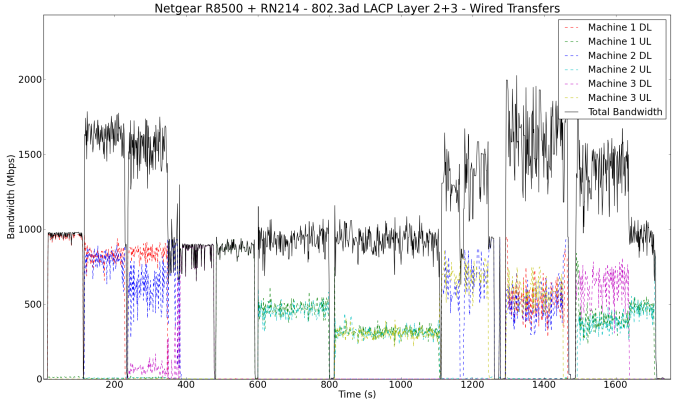

After altering the hash policy to Layer 2 + 3 in the ReadyNAS UI, the effectiveness of link aggregation became evident.

In the pure download case with two PCs, we can see each of the PCs getting around 800 Mbps (implying that the NAS was sending out data on both the physical NICs in the team). An interesting aspect to note in the pure download case with three PCs is that Machine 1 (connected to port 3) manages the same 800 Mbps as in the previous case, but the download rates on Machines 2 and 3 (connected to ports 4 and 5) add up to a similar amount. This shows that network ports 4 and 5 are bottlenecked by a 1 Gbps connection to the switch chip handling the link aggregation ports. This is also the reason why Netgear suggests using port 3 as one of the ports for handling the data transfer to/from the link aggregated ports. Simultaneous uploads and downloads also show some improvement, but the pure upload case is not any different from the single link case. This could be attributed to the limitations of the NAS itself. Note that we are using real-world data streams transferred using the OS file copy tools (and not artificial benchmarking programs) in these experiments.
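The bottleneck reasoning can be written down as a toy model. In the sketch below, each internal path to the link aggregation ports carries roughly the 800 Mbps of payload seen above, and clients sharing a path split it evenly. The even split is an assumption made for illustration (the graphs only show that the two rates add up to a similar amount), and the function and group names are hypothetical.

```python
def expected_rates(groups: dict, path_cap_mbps: float) -> dict:
    """Split each internal path's capacity evenly among the clients behind it."""
    rates = {}
    for clients in groups.values():
        for client in clients:
            rates[client] = path_cap_mbps / len(clients)
    return rates


# Port 3 has its own path; ports 4 and 5 share one (as inferred in the text).
groups = {
    "port 3": ["Machine 1"],
    "ports 4 and 5": ["Machine 2", "Machine 3"],
}
rates = expected_rates(groups, path_cap_mbps=800)
print(rates)
print("aggregate:", sum(rates.values()), "Mbps")
```

Under these assumptions, the aggregate stays at about 1600 Mbps whether two or three PCs are downloading, which matches the pattern described above.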

66 Comments

c0y0te - Thursday, December 31, 2015

Wireless speeds will never be as fast as wired speeds. It adds a whole encode/encrypt/transmit/receive/decrypt/decode process to every block of data sent. Besides, 5 GHz is a joke for wall penetration. Nice of the FCC to sell the 3.5 GHz band to Sprint so they could bury it, wasn't it?

phoenix_rizzen - Wednesday, January 13, 2016

5 GHz not going through more than 2 walls reliably is a feature! Makes it much easier to implement wireless in schools where you need to worry more about capacity than coverage/range.

With 2.4 GHz, you need to futz around with power levels and channel overlap and whatnot to support dense AP layouts. Sticking an AP into every other classroom works, but requires a lot of time to make it work well.

With 5 GHz, you just stick an AP into every other classroom, and you're done. There's very little overlap between APs (even between floors), even at 100% transmit power. And you get more, wider channels to play with to boot.

pixelstuff - Thursday, December 31, 2015

So why hasn't everyone tried to move 10Gb connections more mainstream? Is it really that hard to build stable hardware for it or is everyone just trying to milk the top as long as possible?

The cheapest I have found is a QNAP TS-563 with an add-on card ($800 before HDDs), and a Netgear ProSAFE S3300-28X ($500). Seems like assembly line technology should be able to make anything cheaper after 4-5 years of recouping the R&D expenses.

Reflex - Friday, January 1, 2016

The problem is that 10Gbps links are very power hungry. For the vast majority of even prosumer use cases 1Gbit will be more than fast enough since 99% of a user's traffic is to/from the internet and few people have faster than gigabit home connections. So why spend the power on a single component of the PC (NIC) when almost nobody will use it at even a gigabit, much less 10Gbit?

Conficio - Friday, January 1, 2016

My takeaway is that even the wired speeds are marred by limitations. No thank you. I don't want to have to read the manual to find out which port actually delivers what is advertised. At least color code and label the ports.

Furthermore, I wish any network gear would include a bufferbloat test.

TheRealAnalogkid - Saturday, January 2, 2016

I bought one of these to replace a hodge-podge of router/ap/ap and it replaced all of them and has great coverage. Speeds are a lot faster than the Netgear N900 it replaced and it has coverage not only of the house (3800sqft, 1.25 story) but the entire acre lot. I couldn't find one device at this price to do that and am happy with it. Not to mention the Genie software, which is still one of the easiest to use. I bought the CM600 Cable Modem and switched it in and out with my Motorola Surfboard SB6141 and it was not even close. I was on the phone with the Cox tech when I was switching back and forth because he was interested in possibly getting one for the 24x8 channel bonding. I had stuff downloading all over the house (2 laptops, an Ipad, Netflix on 2 TVs, and an Iphone downstairs and a Sony 4k server, Netflix, my PC, a surface 3 and my Lumina upstairs) and the CM600 was much faster. Anecdotal, but did it 4 times with the same downloads in a row and results were similar each time. It feels good to have the network DONE. For now, yeah.

Ratman6161 - Sunday, January 3, 2016

You hit the nail on the head. It actually is better than previous generations. The issue occurs when marketing departments get hold of things. The real world advantages are difficult to explain to the average consumer, i.e. the people NetGear products are generally aimed at. So rather than try to explain it, it's easier to just slap a bigger number on it because consumers tend to buy into the ploy that a bigger number is better and "faster". The bigger number is kind of/sort of semi-useful in saying that "our new router is better than our old router" but pretty useless as far as comparing routers of different brands or even slightly different specced routers within a brand.

On the other hand, most consumers won't know the difference anyway. If you are using your router primarily for connecting to the Internet and your connection is (as mine is) 60 Mb, then it won't matter how many Gb of throughput the router has. And it's only with the cable company's relatively recent upgrade from 30 Mb to 60 Mb that the connection was faster than could be delivered by 802.11G. Sure, all of you smart enough to be having this discussion in the first place will know the difference. But 99% of the people who buy NetGear equipment aimed at the home will not know the difference.

kmmatney - Tuesday, January 5, 2016

I did something similar to what you did, but 2 years ago with the R7000 Nighthawk. There were some issues in the beginning, but everything was fixed after a few firmware updates, and I've been very happy with the speed and coverage. I am using a SB6141 modem, which I have no complaints about - my last SamKnows report shows I'm averaging 166Mbps speed - but if I need a new modem, the CM600 looks awesome.

So I've never hit any of the advertised Wifi speeds, but the bottom line is that I have 25+ devices in the house, and these routers handle it much better than my old Netgear WNDR3400, in terms of coverage and speeds when stressed.

toyotabedzrock - Saturday, January 2, 2016

Typical, companies like to use fast parts but not actually connect them to an interface that can allow them to be used at the high speed.

phuzi0n - Saturday, January 2, 2016

The advertised/displayed numbers are OSI layer 1 link rates which should not be confused with throughput on any of the higher layers. WiFi has tremendous overhead on the physical layer compared to Ethernet, primarily for error correction since broadcasting into the open air is extremely noisy but also for other reasons. For 802.11a/b/g the best you could expect your layer 2 throughput to be was around 40% of the layer 1 link rate, for 802.11n/ac it's more like 50-60%.

Now in some of the cases you were getting extremely low performance and in other cases slightly low, but the review shows a major lack of understanding and it further spreads common confusion. It is important to make the distinction between the advertised layer 1 link rates and throughput achieved at higher layers so that people understand ALL WiFi IS ADVERTISED THIS WAY and to only expect ~1/2 of what is advertised.