The Intel Skylake i7-6700K Overclocking Performance Mini-Test to 4.8 GHz

by Ian Cutress on August 28, 2015 2:30 PM EST

Frequency Scaling

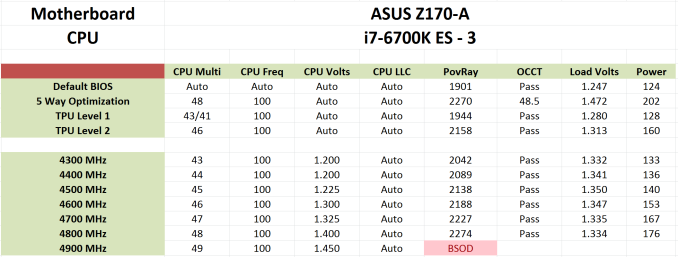

Below is an example of our results from overclock testing, in the table format that we publish with both processor and motherboard reviews. Our tests involve setting a multiplier and a voltage, running some stress tests, and then either raising the multiplier if successful or increasing the voltage at the point of failure/a blue screen. This methodology has worked well as a quick and dirty method to determine frequency, though it lacks the subtlety that seasoned overclockers might turn to in order to improve performance.
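The loop described above can be sketched roughly as follows. This is only an illustration of the raise-the-multiplier-or-raise-the-voltage idea, not the actual test harness: the `is_stable` callback, the starting values, the step size and the limits are all hypothetical placeholders, and the voltages are not recommendations.

```python
from typing import Callable, List, Tuple


def overclock_search(
    is_stable: Callable[[int, float], bool],  # hypothetical stress-test hook
    start_multiplier: int = 42,    # assumed starting point (4.2 GHz at 100 MHz BCLK)
    start_voltage: float = 1.20,   # assumed starting voltage, illustrative only
    voltage_step: float = 0.025,   # assumed voltage increment
    max_voltage: float = 1.40,     # assumed safety ceiling
    max_multiplier: int = 50,      # assumed upper bound on the search
) -> List[Tuple[int, float]]:
    """Return the (multiplier, voltage) pairs that passed the stress test."""
    passed: List[Tuple[int, float]] = []
    multiplier = start_multiplier
    # Track voltage in integer millivolts so repeated steps do not drift.
    voltage_mv = round(start_voltage * 1000)
    step_mv = round(voltage_step * 1000)
    max_mv = round(max_voltage * 1000)

    while multiplier <= max_multiplier and voltage_mv <= max_mv:
        voltage = voltage_mv / 1000
        if is_stable(multiplier, voltage):
            # Success: record the setting and try the next multiplier.
            passed.append((multiplier, voltage))
            multiplier += 1
        else:
            # Failure or blue screen: add voltage and retry the same multiplier.
            voltage_mv += step_mv
    return passed
```

In practice `is_stable` would wrap whatever stress tests are being run; the search simply stops once either the voltage ceiling or the multiplier limit is reached.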

This was done on our ASUS Z170-A sample while it was being tested for review. When we applied ASUS's automatic overclock software tool, Auto-OC, it finalized an overclock at 4.8 GHz. This was higher than what we had seen with the same processor previously (even with the same cooler), so in true fashion I was skeptical, as ASUS Auto-OC has been rather hopeful in the past. But it sailed through our standard stability tests easily, without throttling back the overclock once, meaning that it was not overheating by any means. As a result, I applied our short-form CPU tests in a recently developed automated script as an extra measure of stability.

These tests run in order of time taken, so last up was HandBrake converting a low quality film followed by a high quality 4K60 film. In low quality mode, all was golden. At 4K60, the system blue screened. I triple-checked with the same settings to confirm it wasn’t going through, and three blue screens make a strike out. But therein is a funny thing: while this configuration was stable with our regular mixed-AVX test, the large-frame HandBrake conversion made it fall over.

So as part of this testing, from 4.2 GHz to 4.8 GHz, I ran our short-form CPU tests over and above the regular stability tests. These form the basis of the results in this mini-test. Lo and behold, it failed at 4.6 GHz as well in similar fashion – AVX in OCCT OK, but HandBrake large frame not so much. I looped back with ASUS about this, and they confirmed they had seen similar behavior specifically with HandBrake as well.

Users and CPU manufacturers tend to view stability in one of two ways. The basic way is as a pure binary yes/no. If the CPU ever fails in any circumstance, it is a no. When you buy a processor from Intel or AMD, that rated frequency is in the yes column (if it is cooled appropriately). This is why some processors seem to overclock like crazy from a low base frequency – because at that frequency, they are confirmed as working 100%. A number of users, particularly those who enjoy strangling a poor processor with Prime95 FFT torture tests for weeks on end, also take on this view. A pure binary yes/no is also hard for us to test in a time limited review cycle.

The other way of approaching stability is the sliding scale. At some point, the system is ‘stable enough’ for all intents and purposes. This is the situation we have here with Skylake: if you never go within 10 feet of HandBrake but enjoy gaming with a side of YouTube and/or streaming, or perhaps need to convert a few dozen images into a 3D model, then the system is stable.
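The difference between the two views can be shown with a small sketch. The pass/fail values mirror what was described above for the 4.8 GHz setting (mixed-AVX OK, low quality HandBrake OK, 4K60 HandBrake blue screen); the function names and the test labels are made up for illustration.

```python
from typing import Dict, Iterable


def binary_stable(results: Dict[str, bool]) -> bool:
    """Binary view: a single failure in any test means 'not stable'."""
    return all(results.values())


def stable_enough(results: Dict[str, bool], workloads: Iterable[str]) -> bool:
    """Sliding-scale view: only the workloads the user runs have to pass.

    A workload that was never tested counts as a failure here, which is an
    assumption made to keep the sketch conservative.
    """
    return all(results.get(name, False) for name in workloads)


# Outcomes at 4.8 GHz as described in the text (labels are illustrative).
results_4800 = {
    "OCCT mixed-AVX": True,
    "HandBrake low quality": True,
    "HandBrake 4K60": False,
}

print(binary_stable(results_4800))                      # False
print(stable_enough(results_4800, ["OCCT mixed-AVX"]))  # True
print(stable_enough(results_4800, ["HandBrake 4K60"]))  # False
```

The same set of results gives a "no" under the binary view but a "yes" for a user whose workloads never touch the failing test.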

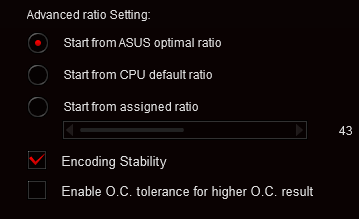

To that end, ASUS is implementing a new feature in its automatic overclocking tool. Along with the list of stress test and OC options, an additional checkbox for HandBrake style data paths has been added. This will mean that a system needs more voltage to cope, or will top out somewhere else. But the sliding scale has spoken.

Incidentally, at IDF I spoke to Tom Vaughn, VP of MultiCoreWare (who develops the open source x265 HEVC video encoder and accompanying GUI). We discussed video transcoding, and I brought up this issue on Skylake. He stated that the issue was well known by MultiCoreWare for overclocked systems. Despite the prevalence of AVX testing software, x265 encoding with the right algorithms will push parts of the CPU beyond all others, and with large frames it can require large amounts of memory to be pushed around the caches at the same time, offering further permutations of stability. We also spoke about expanding our x265 tests, covering best case/worst case scenarios from a variety of file formats and sources, in an effort to pinpoint where stability can be a factor as well as overall performance. These might be integrated into future overclocking tests, so stay tuned.

103 Comments


Impulses - Saturday, August 29, 2015 - link

Why would it make a difference? The BCLK is now decoupled from anything that would matter... It's just another tool like the ratio, one that could let you eke out an extra 50MHz or whatever if you really care to take it to the edge.

Khato - Friday, August 28, 2015 - link

Two inquiries regarding future Skylake testing:

1. While the initial review was intriguing in terms of actually exploring the DDR3L vs DDR4 2133 performance difference, higher DDR4 frequency testing is still absent. Will there be a memory scaling article at some point?

2. What's the point of even including the discrete gaming benchmarks? Is there a plan to revamp this category of testing to provide meaningful data - inclusion of minimum frame rates, exploring different settings, using different games?

ImSpartacus - Saturday, August 29, 2015 - link

Yeah, it would be nice if we could get some proper frame time benchmarking.

varg14 - Saturday, September 5, 2015 - link

I too would love to see High end DDR3L compared to DDR4 on skylake and if the tight timings of DDR3l are beneficial in what areas if at all.

ImSpartacus - Friday, August 28, 2015 - link

I feel ridiculously shallow for asking this but could we see fewer tables that look straight out of excel going forward?

Anandtech has a glassy table & graph design language. While it might be a bunch of excel templates, it still lets me suspend my disbelief a little bit more.

I can't justify my request with any tangible argument other than something "feels" off. I apologize as I understand how frustrating such feedback can be. I trust Anandtech to always be improving & setting the standard on all fronts.

ImSpartacus - Friday, August 28, 2015 - link

classy*

garbagedisposal - Saturday, August 29, 2015 - link

They've used the same format a number of times before and it's pretty damn clear and easy to understand. Prettifying the excel tables on a mini article is a waste of time.

ImSpartacus - Saturday, August 29, 2015 - link

You're right, this isn't an isolated issue. I didn't comment on it first time or the second time.

And it's hard to tell someone who exhaustively tested numerous scenarios that they oughta spend even more time to ensure that they follow style guides and that the extra time spent won't even affect the utilitarian value of the results.

V900 - Friday, August 28, 2015 - link

Nice overview.

But isn't overclocking in reality not really relevant anymore? A remnant of days gone by?

Dont get me wrong, I was an eager overclocker myself back in the day. But back then, you could make a 2-300$ part perform like a CPU that cost twice as much, if not more.

Today, processors have gotten so fast, that even the cheap 200$ CPUs are "fast enough" for most tasks.

And when you do overclock a 4ghz CPU by 600mhz or more, is the 5-10% speed increase really worth it? Most people would have been better off taking the hundreds of dollars they invested in coolers, OC friendly motherboards, etc and put them towards a better CPU instead.

Impulses - Friday, August 28, 2015 - link

There's a lot of people that just do it for fun, same way people mess with their cars for often negligible gains... Not all spend a lot on it either, I'd buy the same $130-170 mobo whether I was OC'ing or not, and I'd run the same $65 cooler for the sake of noise levels (vs something like a $30 212).