The Intel Skylake i7-6700K Overclocking Performance Mini-Test to 4.8 GHz

by Ian Cutress on August 28, 2015 2:30 PM EST

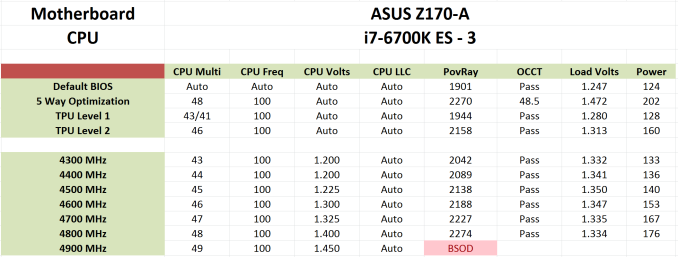

At the time of our Skylake review of both the i7-6700K and the i5-6600K, the infancy of the platform and other constraints meant we were unable to probe how performance scales as the processors are overclocked. Our overclock testing showed that 4.6 GHz was a reasonable marker for our processors; however, fast forward two weeks and that seems to have changed as updates are released. With a new motherboard and the same liquid cooler, the same processor that reached 4.6 GHz gave 4.8 GHz with relative ease. In this mini-test, we ran our short-form CPU workload as well as integrated and discrete graphics tests at several frequencies to see where the real gains are.

In the Skylake review we stated that 4.6 GHz still represents a good target for overclockers to aim for, with 4.8 GHz being indicative of a better sample. Both ASUS and MSI gave similar expectations in the press guides that accompanied our samples, although as with any launch there is some prospect of improvement as understanding of the platform evolves over time.

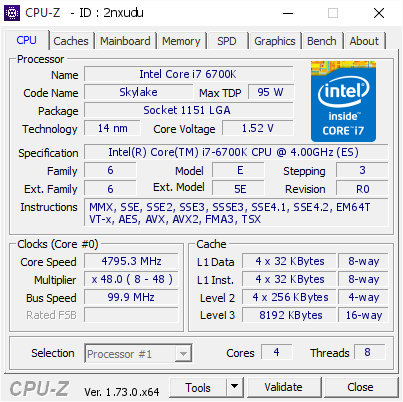

In this mini-test (performed initially in haste pre-IDF, then with extra testing after analysing the IGP data), I called on a pair of motherboards - ASUS's Z170-A and ASRock's Z170 Extreme7+ - to provide a four-point scale in our benchmarks. Starting with the 4.2 GHz frequency of the i7-6700K processor, we tested this alongside every 200 MHz jump up to 4.8 GHz in our shortened CPU testing suite as well as in iGPU and GTX 980 gaming. Enough of the babble – time for fewer words and more results!
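As a rough guide to what the four-point scale means in clock terms, the sketch below lists each test frequency with its multiplier and its percentage gain over the 4.2 GHz starting point. It assumes a multiplier-only overclock on a 100 MHz base clock, which is not stated in the text; the names `BCLK_MHZ` and `test_points` are illustrative only.

```python
# Assumption: each frequency is set by multiplier alone on a 100 MHz base
# clock (BCLK). The article does not say how the overclocks were applied.
BCLK_MHZ = 100
BASE_MHZ = 4200   # starting point: the i7-6700K's 4.2 GHz frequency
STEP_MHZ = 200    # the article tests every 200 MHz jump
TOP_MHZ = 4800    # highest frequency tested


def test_points(base=BASE_MHZ, top=TOP_MHZ, step=STEP_MHZ):
    """Return (frequency_mhz, multiplier, percent_gain_over_base) per test point."""
    points = []
    for freq in range(base, top + 1, step):
        multiplier = freq // BCLK_MHZ
        gain = (freq - base) / base * 100
        points.append((freq, multiplier, round(gain, 1)))
    return points


if __name__ == "__main__":
    for freq, mult, gain in test_points():
        print(f"{freq / 1000:.1f} GHz  x{mult}  +{gain:.1f}% clock")
```

The top step works out to roughly a 14% clock increase over 4.2 GHz, which is a useful ceiling to keep in mind: with memory left at JEDEC settings, a benchmark would not normally be expected to scale by more than the clock gain itself.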

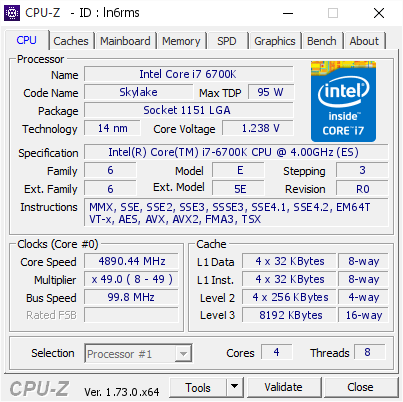

We actually got the CPU to 4.9 GHz, as shown on the right, but it was pretty unstable for even basic tasks.

(Voltage is read incorrectly on the right.)

OK, a few more words before results – all of these numbers can be found in our overclocking database Bench alongside the stock results and can be compared to other processors.

Test Setup

| Component | Details |
|---|---|
| Processor | Intel Core i7-6700K (ES, Retail Stepping), 91W, $350; 4 Cores, 8 Threads, 4.0 GHz (4.2 GHz Turbo) |
| Motherboards | ASUS Z170-A; ASRock Z170 Extreme7+ |
| Cooling | Cooler Master Nepton 140XL |
| Power Supply | OCZ 1250W Gold ZX Series; Corsair AX1200i Platinum PSU |
| Memory | Corsair DDR4-2133 C15 2x8 GB 1.2V or G.Skill Ripjaws 4 DDR4-2133 C15 2x8 GB 1.2V |
| Memory Settings | JEDEC @ 2133 |
| Video Cards | ASUS GTX 980 Strix 4GB; ASUS R7 240 2GB |
| Hard Drive | Crucial MX200 1TB |
| Optical Drive | LG GH22NS50 |
| Case | Open Test Bed |
| Operating System | Windows 7 64-bit SP1 |

The dynamics of CPU Turbo modes, both Intel and AMD, can cause concern in environments with a variably threaded workload. There is also the added issue of consistency between motherboards, depending on how the motherboard manufacturer wants to add in their own boosting technologies over the ones that Intel would prefer they used. In order to remain consistent, we implement an OS-level unique high performance mode on all the CPUs we test, which should override any motherboard manufacturer performance mode.

Many thanks to...

We must thank the following companies for kindly providing hardware for our test bed:

Thank you to AMD for providing us with the R9 290X 4GB GPUs.

Thank you to ASUS for providing us with GTX 980 Strix GPUs and the R7 240 DDR3 GPU.

Thank you to ASRock and ASUS for providing us with some IO testing kit.

Thank you to Cooler Master for providing us with Nepton 140XL CLCs.

Thank you to Corsair for providing us with an AX1200i PSU.

Thank you to Crucial for providing us with MX200 SSDs.

Thank you to G.Skill and Corsair for providing us with memory.

Thank you to MSI for providing us with the GTX 770 Lightning GPUs.

Thank you to OCZ for providing us with PSUs.

Thank you to Rosewill for providing us with PSUs and RK-9100 keyboards.

103 Comments


bill.rookard - Friday, August 28, 2015

I wonder if not having the FIVR on-die has to do with the difference between the Haswell voltage limits and the Skylake limits?

Communism - Friday, August 28, 2015

Highly doubtful, as Ivy Bridge has relatively the same voltage limits.

Morawka - Saturday, August 29, 2015

yea thats a crazy high voltage.. that was even high for 65nm i7 920's

kuttan - Sunday, August 30, 2015

i7 920 is 45nm not 65nm

Cellar Door - Friday, August 28, 2015

Ian, so it seems like the memory controller - even though capable of driving DDR4 to some insane frequencies seems to error out with large data sets? It would interesting to see this behavior with Skylake and DDR3L.

Also it would be interesting to see in the i56600k, lacking the hyperthreading would run into same issues.

Communism - Friday, August 28, 2015

So your sample definitively wasn't stable above 4.5ghz after all then....... Haswell/Broadwell/Skylake dud confirmed. Waiting for Skylake-E where the "reverse hyperthreading" will be best leveraged with the 6/8 core variant with proper quad channel memory bandwidth.

V900 - Friday, August 28, 2015

Nope, it was stable above 4.5 Ghz... And no dud confirmed in Broadwell/Skylake.

There is just one specific scenario (4K/60 encoding) where the combination of the software and the design of the processor makes overclocking unfeasible.

Not really a failure on Intels part, since it's not realistic to expect them to design a mass-market CPU according to the whims of the 0.5% of their customers who overclock.

Gigaplex - Saturday, August 29, 2015

If you can find a single software load that reliably works at stock settings, but fails at OC, then the OC by definition is not 100% stable. You might not care and are happy to risk using a system configured like that, but I sure as hell wouldn't.

Oxford Guy - Saturday, August 29, 2015

Exactly. Not stable is not stable.

HollyDOL - Sunday, August 30, 2015

I have to agree... While we are not talking about server stable with ECC and things, either you are rock stable on desktop use or not stable at all. Already failing on one of test scenarios is not good at all. I wouldn't be happy if there were some hidden issues occuring during compilations, or after few hours of rendering a scene... or, let's be honest, in the middle of gaming session with my online guild. As such I am running my 2500k half GHz lower than stability testing shown as errorless. Maybe it's excessively much, but I like to be on a safe side with my OC, especially since the machine is used for wide variety of purposes.