The Intel Skylake i7-6700K Overclocking Performance Mini-Test to 4.8 GHz

by Ian Cutress on August 28, 2015 2:30 PM EST

Frequency Scaling

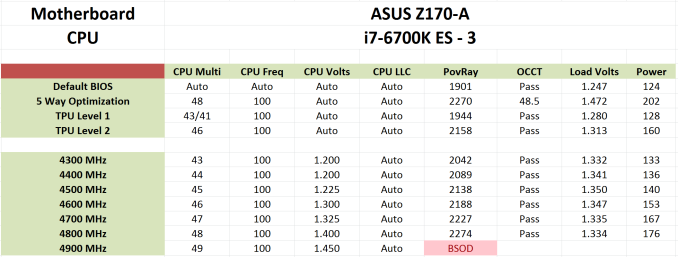

Below is an example of the overclocking results table that we publish in both our processor and motherboard reviews. Our tests involve setting a multiplier and a voltage, running some stress tests, and then either raising the multiplier if successful or increasing the voltage at the point of failure (a blue screen). This methodology has worked well as a quick and dirty way to determine the maximum frequency, though it lacks the subtlety that seasoned overclockers might turn to in order to improve performance.
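The search loop described above can be sketched as follows. This is a minimal illustration, not our actual test harness: `Setting`, `find_max_overclock`, the 1.40 V and 50x safety caps, the 0.025 V step and the toy chip model are all hypothetical, and a real `passes()` would mean running stress tests and watching for a blue screen.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Setting:
    multiplier: int  # CPU ratio; core clock = multiplier x 100 MHz BCLK
    vcore: float     # core voltage in volts


def find_max_overclock(
    passes: Callable[[Setting], bool],
    start: Setting,
    max_multiplier: int = 50,   # hypothetical safety cap on the ratio
    max_vcore: float = 1.40,    # hypothetical safety cap on voltage
    v_step: float = 0.025,      # hypothetical voltage increment
) -> Optional[Setting]:
    """Raise the multiplier while the stress tests pass; on a failure
    (blue screen), add voltage at the same multiplier. Stop at either cap."""
    current = start
    best: Optional[Setting] = None
    while current.multiplier <= max_multiplier and current.vcore <= max_vcore + 1e-9:
        if passes(current):
            best = current  # highest passing setting found so far
            current = Setting(current.multiplier + 1, current.vcore)
        else:
            current = Setting(current.multiplier, round(current.vcore + v_step, 3))
    return best


# Hypothetical chip: needs 1.20 V at 42x plus 0.05 V for each extra multiplier step.
def toy_chip_passes(s: Setting) -> bool:
    return s.vcore >= 1.20 + 0.05 * (s.multiplier - 42) - 1e-9


result = find_max_overclock(toy_chip_passes, Setting(multiplier=42, vcore=1.20))
print(result)
```

With the toy chip, the loop stops at 46x (4.6 GHz) and 1.40 V, because 47x would need more voltage than the cap allows. The weakness the rest of this page describes sits entirely inside `passes()`: if the stress tests it runs never exercise a HandBrake-style workload, the "best" setting can still crash in real use.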

This was done on our ASUS Z170-A sample while it was being tested for review. When we applied ASUS's automatic overclocking tool, Auto-OC, it settled on an overclock of 4.8 GHz. This was higher than we had seen with the same processor previously (even with the same cooler), so true to form I was skeptical, as ASUS Auto-OC has been rather optimistic in the past. But it sailed through our standard stability tests without throttling once, meaning that it was not overheating by any means. As a result, I ran our short-form CPU tests, using a recently developed automated script, as an extra measure of stability.

These tests run in order of time taken, so last up was HandBrake converting a low quality film followed by a high quality 4K60 film. In low quality mode, all was golden. At 4K60, the system blue screened. I ran it twice more with the same settings to confirm it would not complete, and three blue screens makes a strike out. But therein lies a curious thing: while this configuration was stable in our regular mixed-AVX test, the large-frame HandBrake conversion made it fall over.

So as part of this testing, from 4.2 GHz to 4.8 GHz, I ran our short-form CPU tests on top of the regular stability tests. These form the basis of the results in this mini-test. Lo and behold, it failed at 4.6 GHz as well in similar fashion: AVX in OCCT was OK, but the large-frame HandBrake conversion was not. I followed up with ASUS about this, and they confirmed they had seen similar behavior specifically with HandBrake as well.

Users and CPU manufacturers tend to view stability in one of two ways. The basic way is as a pure binary yes/no: if the CPU ever fails in any circumstance, it is a no. When you buy a processor from Intel or AMD, its rated frequency is in the yes column (if it is cooled appropriately). This is why some processors seem to overclock like crazy from a low base frequency: at that frequency, they are confirmed as working 100%. A number of users, particularly those who enjoy strangling a poor processor with Prime95 FFT torture tests for weeks on end, also take this view. A pure binary yes/no is also hard for us to test in a time-limited review cycle.

The other way of approaching stability is as a sliding scale. At some point, the system is ‘stable enough’ for all intents and purposes. This is the situation we have here with Skylake: if you never go within 10 feet of HandBrake but enjoy gaming with a side of YouTube and/or streaming, or perhaps need to convert a few dozen images into a 3D model, then the system is stable.

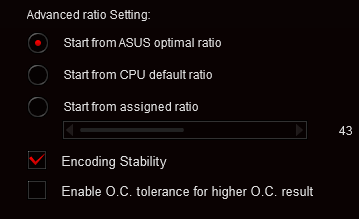

To that end, ASUS is implementing a new feature in its automatic overclocking tool. Alongside the list of stress test and OC options, an additional checkbox for HandBrake-style data paths has been added. Enabling it will mean that a system needs more voltage to cope, or will top out at a lower frequency. But the sliding scale has spoken.
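The difference between the binary and sliding-scale views, and why adding a HandBrake-style test lowers the top frequency, can be made concrete with a small sketch. The results table below is entirely hypothetical (it echoes the pattern described above, AVX stress passing while a 4K60 encode crashes, but it is not measured data), and the function names are illustrative; this is not how ASUS implements its checkbox.

```python
from typing import Dict, Iterable, Optional

# Hypothetical results: frequency (GHz) -> workload -> completed without a crash?
results: Dict[float, Dict[str, bool]] = {
    4.4: {"avx_stress": True, "gaming": True, "handbrake_4k60": True},
    4.6: {"avx_stress": True, "gaming": True, "handbrake_4k60": False},
    4.8: {"avx_stress": True, "gaming": True, "handbrake_4k60": False},
}


def binary_stable(at_freq: Dict[str, bool]) -> bool:
    """Binary view: stable only if every workload ever tested passes."""
    return all(at_freq.values())


def stable_enough(at_freq: Dict[str, bool], my_workloads: Iterable[str]) -> bool:
    """Sliding-scale view: stable if the workloads this user actually runs pass."""
    return all(at_freq[w] for w in my_workloads)


def max_stable_frequency(table: Dict[float, Dict[str, bool]],
                         workloads: Iterable[str]) -> Optional[float]:
    """Highest frequency at which every workload in the chosen set passes."""
    chosen = list(workloads)  # materialise so it can be reused for each frequency
    ok = [f for f, at_freq in table.items() if stable_enough(at_freq, chosen)]
    return max(ok) if ok else None


print(binary_stable(results[4.8]))
print(stable_enough(results[4.8], ["gaming"]))
print(max_stable_frequency(results, ["avx_stress", "gaming"]))
print(max_stable_frequency(results, ["avx_stress", "gaming", "handbrake_4k60"]))
```

With this made-up table, 4.8 GHz fails the binary test but is "stable enough" for a gamer, and the top frequency drops from 4.8 GHz to 4.4 GHz once the HandBrake-style workload joins the required set, which is the trade-off an extra checkbox in a tool like Auto-OC implies.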

Incidentally, at IDF I spoke to Tom Vaughn, VP of MultiCoreWare (which develops the open source x265 HEVC video encoder and an accompanying GUI). We discussed video transcoding, and I brought up this issue on Skylake. He stated that the issue was well known at MultiCoreWare for overclocked systems. Despite the prevalence of AVX testing software, x265 encoding with the right algorithms will push parts of the CPU harder than any other software, and with large frames it can require large amounts of data to be pushed through the caches at the same time, adding further permutations to stability. We also spoke about expanding our x265 tests to cover best case/worst case scenarios from a variety of file formats and sources, in an effort to pinpoint where stability can be a factor as well as overall performance. These might be integrated into future overclocking tests, so stay tuned.
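To put "large frames" in perspective, a quick back-of-the-envelope calculation compares one uncompressed 4K frame with the i7-6700K's 8 MB shared L3 cache. This assumes a UHD 3840x2160 frame in 8-bit YUV 4:2:0 (1.5 bytes per pixel), a common encoder input format; 10-bit or 4:4:4 sources would be larger still, and an HEVC encoder also keeps reference frames around, so the real working set is a multiple of this.

```python
# Back-of-the-envelope: one raw 4K frame vs. the i7-6700K's shared L3 cache.
# Assumptions: UHD 3840x2160, 8-bit YUV 4:2:0 (1.5 bytes per pixel).
WIDTH, HEIGHT = 3840, 2160
BYTES_PER_PIXEL = 1.5        # full-res luma plane + two quarter-res chroma planes
L3_BYTES = 8 * 1024 * 1024   # i7-6700K: 8 MB shared L3 cache


def frame_bytes(width: int, height: int, bytes_per_pixel: float) -> int:
    """Size of one uncompressed frame in bytes."""
    return int(width * height * bytes_per_pixel)


one_frame = frame_bytes(WIDTH, HEIGHT, BYTES_PER_PIXEL)
print(f"one 4K frame: {one_frame:,} bytes = {one_frame / 2**20:.1f} MiB")
print(f"L3 cache:     {L3_BYTES:,} bytes = {L3_BYTES / 2**20:.1f} MiB")
print(f"frame / L3:   {one_frame / L3_BYTES:.2f}x")
```

A single frame comes to about 11.9 MiB, roughly 1.5x the entire L3, so a large-frame encode constantly streams data between the cores, caches and memory controller in a way that a small-footprint AVX stress loop may not.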

103 Comments


MikeMurphy - Saturday, January 30, 2016

Tragically, few stress testing programs cycle power states during testing, which is important. Most just place the CPU under continuous load.

0razor1 - Friday, August 28, 2015

Didn't mean memcheck - it's the error check in regular OCCT: GPU.

Xenonite - Saturday, August 29, 2015

Different parts of the CPU are fabricated to withstand different voltages. The DRAM controller is only optimised for the lowest possible power/performance, so the silicon is designed with low leakage in mind. As another example, the integrated PLL runs at 1.8V.

Electron migration is indeed the main failure mechanism in most modern processors; however, the metal interconnect fabric has not been shrinking by the same factors as the CMOS logic has. That means these 14nm processors can take more voltage than you would expect from a simple linear geometric relationship.

What exactly the maximum safe limits are will probably never be known to those outside of Intel, but just as with Haswell, I've been running at a 24/7 1.4V core voltage, which I don't believe will significantly shorten the life of the CPU (especially if you have the cooling capacity to up the voltage to around 1.55V as the CPU degrades over the following decade).

In any case, NOT running the CPU at at least 4.6GHz would mean that it wasn't a worthwhile upgrade from my 5960X, so the safety question is pretty much moot in my case.

Oxford Guy - Saturday, August 29, 2015

Unless that worthwhile upgrade burns up, in which case it's a non-worthwhile downgrade.

0razor1 - Sunday, August 30, 2015

Hey @Xenonite, sorry to quote you directly: 'Electron migration is indeed the main failure mechanism in most modern processors, however, the metal interconnect fabric has not been shrinking by the same factors as the CMOS logic has'

-> I'd say spot on. But I thought that's what Intel's 14nm was all about: they had the metal shrunk down to 14nm as well, as opposed to Samsung's pseudo-14nm (20nm metal interconnect).

just as with Haswell, I've been running at a 24/7 1.4V core voltage

-> Intel has specified 1.3VCore as being the max safe voltage. I'd pay heed :)

NOT running the CPU at at least 4.6GHz would mean that it wasn't a worthwhile upgrade from my 5960X, so the safety question is pretty much moot in my case.

-> You're right. But then, not everyone can afford upgrades. I've just come from a Phenom 2 @ 4GHz (1.41 VCore) to a 4670K @ 4.6GHz (1.29/1.9 VCore/VCCIN). What I did do was, once the Ph2 was out of warranty, lap it and OC it as far as it would go. Tried my hand at an FX-6300 for a week and was disappointed, to say the least.

Long story short and back to my point, P95.

If it doesn't P95, it corrupts. /motto

varg14 - Friday, September 4, 2015

I agree. If you have good cooling, like my Cooler Master Nepton 140XL on my 4.6-5.1GHz 2600K, which uses motherboard VRMs, and always keep temps under 70°C, I see no reason to expect any chip degradation or failures. I really only heard of chip degradation and failures when they started putting the VRMs on the CPU die itself with Haswell, adding more heat to the CPU. Now with Skylake using motherboard VRMs again, everything should be peachy keen.

StevoLincolnite - Saturday, August 29, 2015

Electromigration. I was running those volts at 40nm...

0razor1 - Sunday, August 30, 2015

Lol, I topped out @ 1.41 Vcore on a 45nm Phenom 2 (with max core temps of 60°C @ 4GHz). Before that, my 24x7 setting for three years was 1.38 Vcore for 3.8GHz.

JKflipflop98 - Sunday, September 6, 2015

"If it's OK, then can someone illustrate why one should not go over, say, 1.6V on the DRAM in 22nm? Why stick to 1.35V for 14nm? Might as well use standard previous generation voltages and call it a day?"

Because the DRAM's line voltage goes straight into the integrated memory controller within the CPU. While the chunky circuits in your RAM modules can handle 1.6V, the tiny logic transistors on the CPU can only handle 1.35V before vaporizing.

Zoeff - Friday, August 28, 2015

Yeah, that's what I thought as well, but apparently the voltage in the silicon is lower than the input voltage, which is what you can control as the user. At least, this is what I read on overclock.net. Right now CPU-Z reports ~1.379V (flickering +/- 0.01V), and that's with EIST, C-States and SVID Support disabled. Different monitoring software sometimes reports different voltages too, so I find it hard to check what my CPU is actually doing.