The Intel 6th Gen Skylake Review: Core i7-6700K and i5-6600K Tested

by Ian Cutress on August 5, 2015 8:00 AM EST

Comparing IPC on Skylake: Discrete Gaming

For this set of tests, we kept things simple – a low end single R7 240 DDR3, an ex-high end GTX 770 Lightning and a top line GTX 980 on our standard CPU game set under normal conditions. The IGP is not used here on the basis that each generation uses a substantially different integrated graphics arrangement.

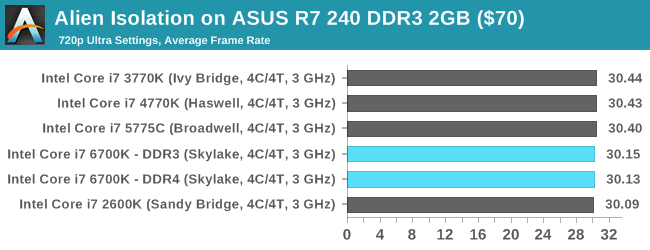

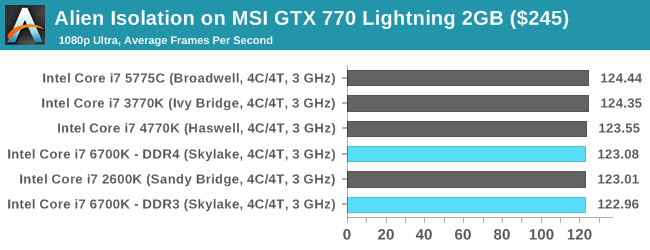

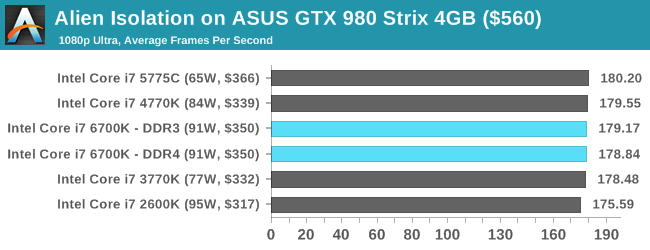

Alien: Isolation

If first person survival mixed with horror is your sort of thing, then Alien: Isolation, based on the Alien franchise, should be an interesting title. Developed by The Creative Assembly and released in October 2014, Alien: Isolation has won numerous awards from Game Of The Year to several top 10s/25s and Best Horror titles, racking up over a million sales by February 2015. Alien: Isolation uses a custom built engine which includes dynamic sound effects and should be fully multi-core enabled.

For low end graphics, we test at 720p with Ultra settings, whereas for mid and high range graphics we bump this up to 1080p, taking the average frame rate as our marker with a scripted version of the built-in benchmark.

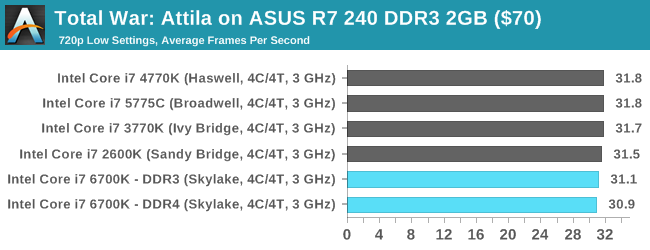

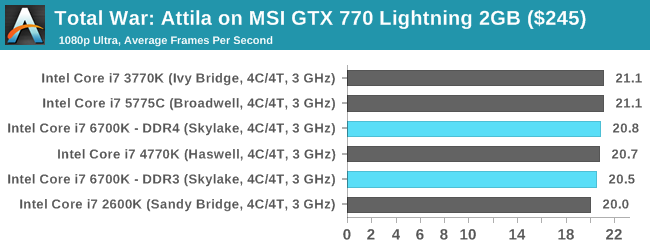

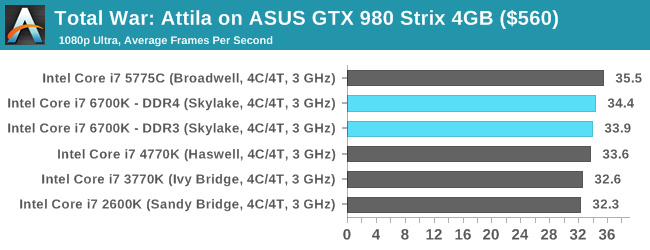

Total War: Attila

The Total War franchise moves on to Attila, another development from The Creative Assembly. It is a stand-alone strategy title set in 395 AD, where the main story line lets the gamer take control of the leader of the Huns in order to conquer parts of the world. Graphically the game can render hundreds or thousands of units on screen at once, all with their individual actions, and can put some of the big cards to task.

For low end graphics, we test at 720p with performance settings, recording the average frame rate. With mid and high range graphics, we test at 1080p with the quality setting. In both circumstances, unlimited video memory is enabled and the in-game scripted benchmark is used.

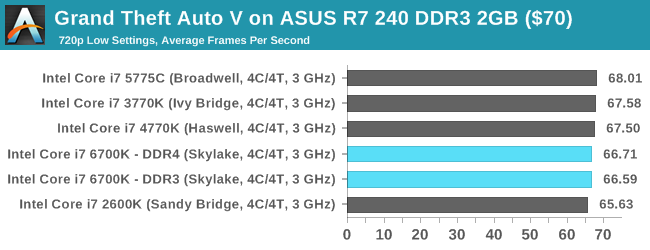

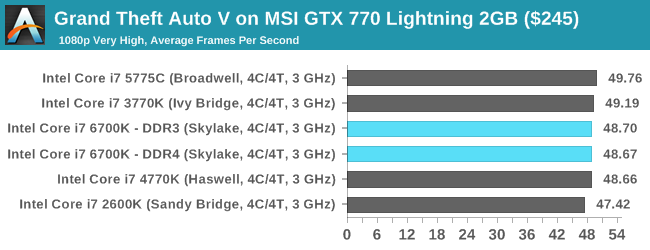

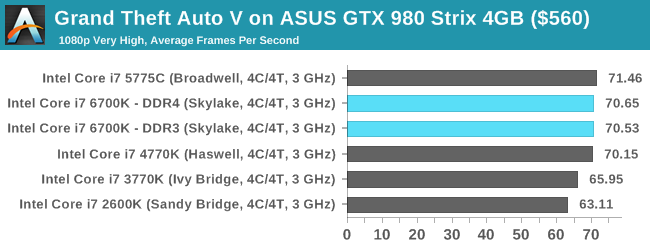

Grand Theft Auto V

The highly anticipated iteration of the Grand Theft Auto franchise finally hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and extends the boundaries by pushing even the hardest systems to the limit using Rockstar’s Advanced Game Engine. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, when cranked up to maximum it creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark, relying only on the final part which combines a flight scene along with an in-city drive-by followed by a tanker explosion. For low end systems we test at 720p on the lowest settings, whereas mid and high end graphics play at 1080p with very high settings across the board. We record both the average frame rate and the percentage of frames under 60 FPS (16.6ms).
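As a rough illustration of the two metrics recorded here, the sketch below computes an average frame rate and the percentage of frames slower than 60 FPS from a list of per-frame render times. The function name `frame_metrics`, the millisecond input format and the sample values are assumptions made for illustration; this is not the tooling used for these tests.

```python
def frame_metrics(frame_times_ms, target_fps=60.0):
    """Return (average FPS, percent of frames slower than target_fps).

    frame_times_ms: per-frame render times in milliseconds (assumed format).
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # A frame is "under 60 FPS" when it takes longer than 1000/60 ms (~16.6 ms).
    budget_ms = 1000.0 / target_fps

    # Average FPS is total frames divided by total elapsed time in seconds.
    total_seconds = sum(frame_times_ms) / 1000.0
    avg_fps = len(frame_times_ms) / total_seconds

    slow_frames = sum(1 for t in frame_times_ms if t > budget_ms)
    pct_under = 100.0 * slow_frames / len(frame_times_ms)
    return avg_fps, pct_under


# Hypothetical frame times, not measured data.
sample = [10.0, 20.0, 10.0, 40.0]
avg_fps, pct_under = frame_metrics(sample)
```

Dividing the frame count by the total elapsed time, rather than averaging each frame's instantaneous rate, keeps a handful of very fast frames from inflating the average.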

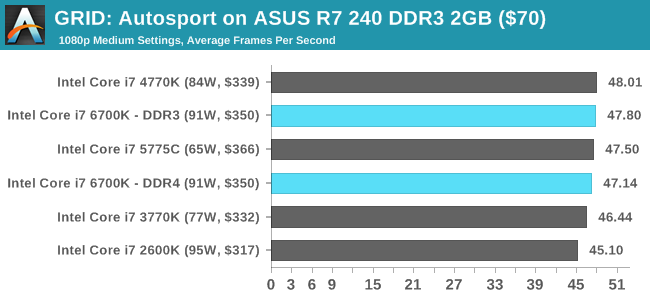

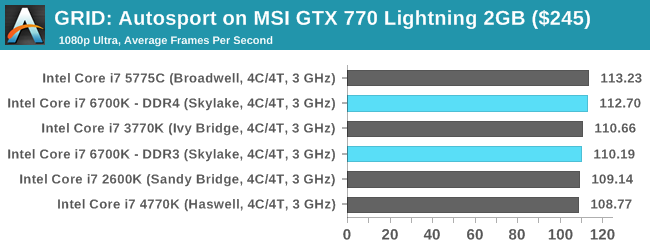

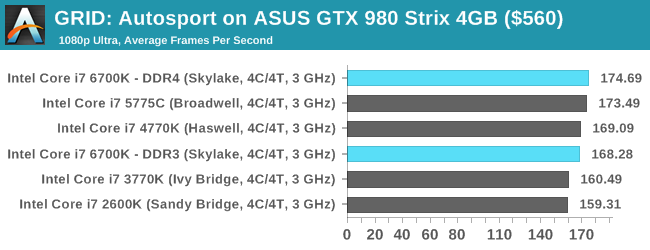

GRID: Autosport

No graphics tests are complete without some input from Codemasters and the EGO engine, which means for this round of testing we point towards GRID: Autosport, the next iteration in the GRID and racing genre. As with our previous racing testing, each update to the engine aims to add in effects, reflections, detail and realism, with Codemasters making ‘authenticity’ a main focal point for this version.

GRID’s benchmark mode is very flexible, and as a result we created a test race using a shortened version of the Red Bull Ring with twelve cars doing two laps. The car in focus starts last and is quite fast, but usually finishes second or third. For low end graphics we test at 1080p medium settings, whereas mid and high end graphics get the full 1080p maximum. Both the average and minimum frame rates are recorded.

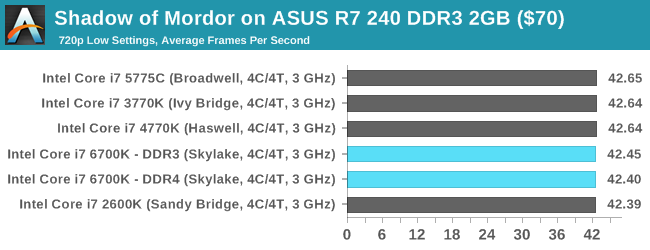

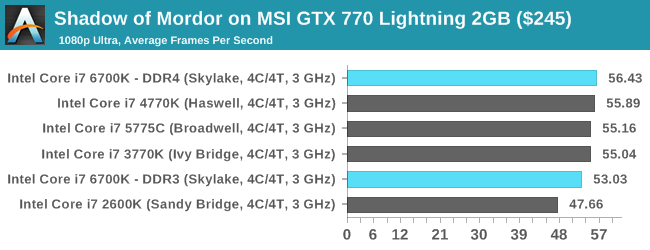

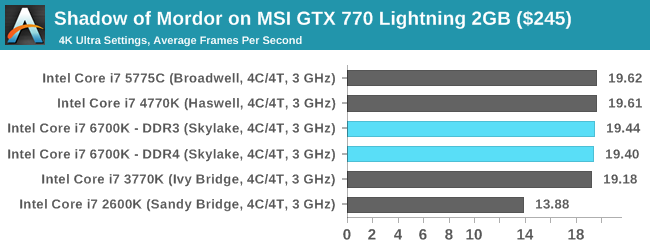

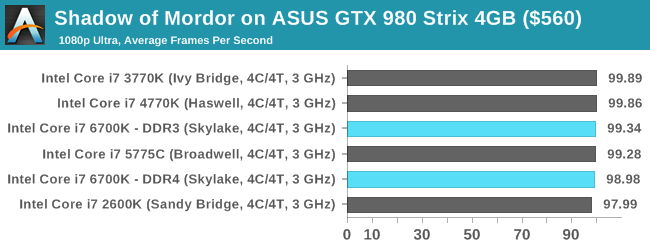

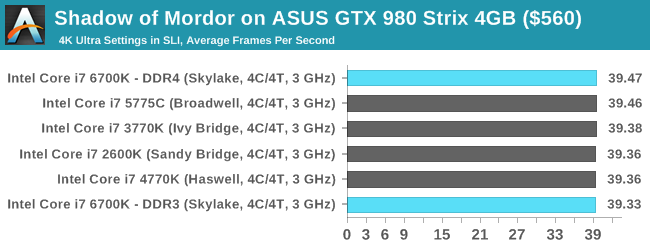

Middle-Earth: Shadow of Mordor

The final title in our testing is another battle of system performance with the open world action-adventure title, Shadow of Mordor. Produced by Monolith using the LithTech Jupiter EX engine and numerous detail add-ons, SoM goes for detail and complexity to a large extent, despite having to be cut down from the original plans. The main story itself was written by the same writer as Red Dead Redemption, and it received Zero Punctuation’s Game of The Year in 2014.

For testing purposes, SoM gives a dynamic screen resolution setting, allowing us to render at high resolutions that are then scaled down to the monitor. As a result, we get several tests using the in-game benchmark. For low end graphics we examine at 720p with low settings, whereas mid and high end graphics get 1080p Ultra. The top graphics test is redone at 3840x2160, again with Ultra settings, and we test two cards at 4K where possible.

Conclusions on Gaming

There’s no easy way to write this.

Discrete graphics card performance decreases on Skylake over Haswell.

This doesn’t particularly make much sense at first glance. Here we have a processor with a higher IPC than Haswell but it performs worse in both DDR3 and DDR4 modes. The amount by which it performs worse is actually relatively minor, usually -3% with the odd benchmark (GRID on R7 240) going as low as -5%. Why does this happen at all?

So we passed our results on to Intel, as well as a few respected colleagues in the industry, all of whom were quite surprised. During a benchmark, the CPU performs tasks and directs memory transfers through the PCIe bus and vice versa. Technically, the CPU tasks should complete quicker due to the IPC and the improved threading topology, so that only leaves the PCIe to DRAM via CPU transfers.

Our best guess, until we get to IDF to analyze what has been changed or a direct explanation from Intel, is that part of the FIFO buffer arrangement between the CPU and PCIe might have changed with a hint of additional latency. That being said, a minor increase in PCIe overhead (a rise in latency or a drop in bandwidth) should be masked by the workload, so there might be something more fundamental at play, such as bus requests being accidentally duplicated or resent due to signal breakdown. There might also be a tertiary answer of an internal bus not running at full speed. To be sure, we retested some benchmarks on a different i7-6700K and a different motherboard, but saw the same effect. We’ll see how this plays out on the full-speed tests.

477 Comments


mkozakewich - Thursday, August 6, 2015 - link

It's unlikely you'll be seeing doubles and doubles anymore. If you look at what's been going on for the past several years, we're moving to more efficient processes instead of improving performance. I'm sure Intel's end goal is to make powerful CPUs for devices that fit into people's pockets. At that point you might see more start going into raw performance.

edlee - Wednesday, August 5, 2015 - link

i am not sure why conclusion to the review makes it seem i7-2600k users should upgrade to this.

If you are a gamer, there is no 25% improvement in average or minimum frame rate, its 3-6% at best.

Is this the future of intel's tock strategy, to give very little improvement to gamers?

VeauX - Wednesday, August 5, 2015 - link

On the gaming side, you'll never see a speed bump if you are not CPU Limited. Once you have a decent CPU, just put your money in GPU, period.

Nagorak - Wednesday, August 5, 2015 - link

CPU is almost irrelevant for games at this point. As games start to take advantage of more cores, older processors are utilized more efficiently, further negating the need to upgrade. DX12 may improve this further.

I sort of wonder if Intel isn't on a path to some trouble here. There's basically no point for anyone to upgrade their CPU anymore, not even gamers. Other than a few specialized applications the increase in performance just doesn't really matter, if it exists at all.

AndrewJacksonZA - Thursday, August 6, 2015 - link

I also sometimes think that, but then I remember that we developers *WILL* find a way to make use of more computing power.

Having said that, I still can't quite justify me upgrading from my E6750 and 6670 @ 1240 x 1024. I slapped in an SSD in February last year and it was like I got a brand new machine.

Chrome and Edge on Win10 lag a teeny tiny bit though, maybe I can use that as my justification... Perhaps a 5960X or a 5930K though - more cores FTW? Or perhaps a 6700K and get it to 5GHz for the rights to claim some "5GHz Daily Driver" epeen... ;-)

Zoomer - Friday, August 14, 2015 - link

Exactly, I reached the opposite conclusion as Ian. There is no point in upgrading even from SB. If you do, stick to DDR3. Only GTA5 benefits from DDR4.

It's interesting to see if regular DDR3 sticks can run on Skylake, perhaps by bumping the Vsa, voltages. Not clear if Ian's overclocking tests were with the IGP disabled - would be interesting to see if disabling the IGP / reducing Fgt, Vgt helps overclocking any.

StevoLincolnite - Wednesday, August 5, 2015 - link

I'm still happily sitting with my Sandy-Bridge-E. Still handles everything you could throw at it just fine... And still gives Intel's $1,000 chips a run for their money whilst sitting at 5Ghz.

mapesdhs - Wednesday, August 12, 2015 - link

Yup, that's why my most recent gaming build was a R4E & 3930K, cost less than a new HW build, much quicker overall. My existing SB gaming PC is 5GHz aswell (every 2700K I've tried handles 5.0 just fine).

leexgx - Monday, August 10, 2015 - link

hmm maybe i can upgrade from my i7-920 now (really any of the newer intel cpus are faster then it)

sheeple - Thursday, October 15, 2015 - link

DON'T BE STUPID SHEEPLE!!! NEW DOES NOT ALWAYS = BETTER!