The Intel 6th Gen Skylake Review: Core i7-6700K and i5-6600K Tested

by Ian Cutress on August 5, 2015 8:00 AM EST

Comparing IPC on Skylake: Memory Latency and CPU Benchmarks

The following explanation of IPC has been previously used in our Broadwell review.

Being able to do more with less, in the processor space, allows a task to be completed both more quickly and often with less power. While devices with multiple cores allow many programs to run at once, and allow purely parallel compute such as graphics to run faster, we are all still limited by the fact that a lot of software still relies on one line of code after another. This is referred to as the serial part of the software, and is the basis for many early programming classes – getting the software to compile and complete is more important than speed. But the truth is that having a few fast cores helps more than several thousand super slow cores. This is where IPC (instructions per clock) comes into play.
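To put rough numbers on the point about a few fast cores, here is a minimal sketch using Amdahl's law. The text above does not name it, but it is the standard way to model a workload with a serial part. The 50% parallel fraction, the core counts and the 10x slower cores are all made-up illustrative values, not measurements.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Speedup over one core when only part of the work can run in parallel."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def relative_throughput(parallel_fraction: float, cores: int, per_core_speed: float) -> float:
    """Throughput relative to a single core of speed 1.0."""
    return per_core_speed * amdahl_speedup(parallel_fraction, cores)


# Hypothetical workload: half of the work is serial.
PARALLEL_FRACTION = 0.5

# Hypothetical machines: 4 fast cores versus 4000 cores that are each 10x slower.
few_fast = relative_throughput(PARALLEL_FRACTION, cores=4, per_core_speed=1.0)
many_slow = relative_throughput(PARALLEL_FRACTION, cores=4000, per_core_speed=0.1)

print(f"4 fast cores:    {few_fast:.2f}x a single fast core")
print(f"4000 slow cores: {many_slow:.2f}x a single fast core")
```

Under these assumptions the serial half of the work dominates, so the thousands of slow cores finish well behind the four fast ones. With a workload that is almost entirely parallel the result flips, which is why graphics scales so well across many simple cores.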

The principles behind extracting IPC are quite complex, as one might imagine. Ideally every instruction a CPU gets should be read, executed and finished in one cycle; however, that is never the case. The processor has to take the instruction, decode it, gather the data (how long this takes depends on where the data is), perform work on the data, then decide what to do with the result. Moving data has never been more complicated, and the ability of a processor to hide latency, pre-prepare data by predicting future events, or keep hold of previous events for potential future use is all part of the plan. All the while there is an external focus on making sure power consumption is low and that the frequency of the processor can scale depending on what the target device actually is.
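As a rough illustration of why IPC matters, the time a fixed piece of work takes can be written as the instruction count divided by IPC times frequency. The sketch below is a simplification (real IPC changes from moment to moment with the workload), and the instruction count and both IPC values are hypothetical. It does show why locking every processor to the same clock, as is done for the tests on this page, turns a runtime difference into an IPC difference.

```python
def runtime_seconds(instructions: float, ipc: float, frequency_hz: float) -> float:
    """Time to retire `instructions` at a constant average IPC and clock."""
    cycles = instructions / ipc
    return cycles / frequency_hz


def ipc_gain_pct(old_runtime_s: float, new_runtime_s: float) -> float:
    """IPC gain implied by two runtimes of the same work at the same clock."""
    return (old_runtime_s / new_runtime_s - 1.0) * 100.0


FREQ_HZ = 3.0e9        # the 3.0 GHz used for the clock-for-clock tests
INSTRUCTIONS = 3.0e9   # hypothetical amount of work

# Hypothetical average IPC values for an older and a newer core.
older = runtime_seconds(INSTRUCTIONS, ipc=1.0, frequency_hz=FREQ_HZ)
newer = runtime_seconds(INSTRUCTIONS, ipc=1.1, frequency_hz=FREQ_HZ)

print(f"older: {older:.3f} s, newer: {newer:.3f} s")
print(f"implied IPC gain: {ipc_gain_pct(older, newer):.1f}%")
```

Because the frequency term is identical on both sides, it cancels out of the ratio, leaving only the difference in IPC.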

For the most part, Intel has successfully increased IPC with every generation of processor. In most cases this has meant 5-10% with a node change and 5-25% with an architecture change, with the most recent large jumps coming with the Core and Sandy Bridge architectures, which ushered in new waves of super-fast computational power. As Broadwell to Skylake is an architecture change with what should be large updates, we should expect some good gains.

| Intel Desktop Processor Cache Comparison | L1-D | L1-I | L2 | L3 | L4 |
|---|---|---|---|---|---|
| Sandy Bridge i7 | 4 x 32 KB | 4 x 32 KB | 4 x 256 KB | 8 MB | |
| Ivy Bridge i7 | 4 x 32 KB | 4 x 32 KB | 4 x 256 KB | 8 MB | |
| Haswell i7 | 4 x 32 KB | 4 x 32 KB | 4 x 256 KB | 8 MB | |
| Broadwell i7 (Desktop / Iris Pro 6200) | 4 x 32 KB | 4 x 32 KB | 4 x 256 KB | 6 MB | 128 MB eDRAM |
| Skylake i7 | 4 x 32 KB | 4 x 32 KB | 4 x 256 KB | 8 MB | |

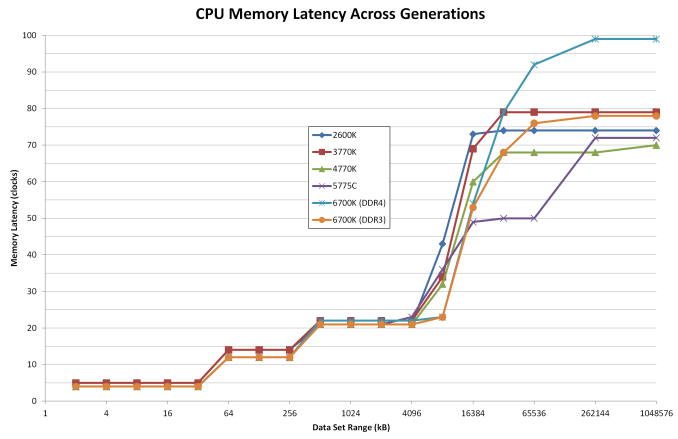

For this test we took Intel’s most recent high-end i7 processors from the last five generations and set them to 3.0 GHz with HyperThreading disabled. As each platform uses DDR3, we set the memory on each to DDR3-1866 with a CAS latency of 9. For Skylake we also ran at DDR4-2133 C15 as a default speed. From a pure cache standpoint, here is how each of the processors performed:

If we ignore Broadwell and its eDRAM (the purple line), especially from 16MB to 128MB, both of the Skylake lines stay at low latencies until 4MB. Between 4MB and 8MB, Skylake's cache latency still seems to be substantially lower than that of the previous generations.

Normally in this test, despite all of the CPUs having 8MB of L3 cache, the 8MB test has to spill out to main memory because some of the cache is already filled. If you have a more efficient caching and pre-fetch algorithm here, then the latency ‘at 8MB’ will be lower. So an update for Skylake, as shown in both the DDR4 and DDR3 results, is that the L3 caching algorithms or hardware resources have been upgraded.

At this point I would also compare the DDR3 and DDR4 results on Skylake above 16MB. The DDR4 latency in this region seems to be a lot higher than the others, showing nearly 100 clocks as we move up to 1GB. But it is worth remembering that these tests are against a memory clock of 2133 MHz, whereas the others are at 1866 MHz. As a result, the two lines are more or less equal in terms of absolute time, as we would expect.
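The clock-versus-time argument can be sketched with a simple unit conversion. This takes the 2133 MHz and 1866 MHz figures from the text at face value as the reference clocks, and uses a round 100 clocks as an illustrative count rather than a value read from the chart.

```python
def cycles_to_ns(cycles: float, clock_mhz: float) -> float:
    """Convert a cycle count at a given clock into nanoseconds."""
    return cycles / clock_mhz * 1000.0


def ns_to_cycles(time_ns: float, clock_mhz: float) -> float:
    """Convert a time in nanoseconds into cycles of a given clock."""
    return time_ns * clock_mhz / 1000.0


DDR4_CLOCK_MHZ = 2133.0   # reference clock quoted for the DDR4 run
DDR3_CLOCK_MHZ = 1866.0   # reference clock quoted for the DDR3 runs

ddr4_cycles = 100.0       # illustrative: "nearly 100 clocks"
time_ns = cycles_to_ns(ddr4_cycles, DDR4_CLOCK_MHZ)
ddr3_equiv = ns_to_cycles(time_ns, DDR3_CLOCK_MHZ)

print(f"{ddr4_cycles:.0f} clocks at {DDR4_CLOCK_MHZ:.0f} MHz = {time_ns:.1f} ns")
print(f"the same time at {DDR3_CLOCK_MHZ:.0f} MHz = {ddr3_equiv:.1f} clocks")
```

The same absolute delay simply counts as more clocks when the reference clock is faster, so a higher line on a clock-based chart does not by itself mean a longer wait.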

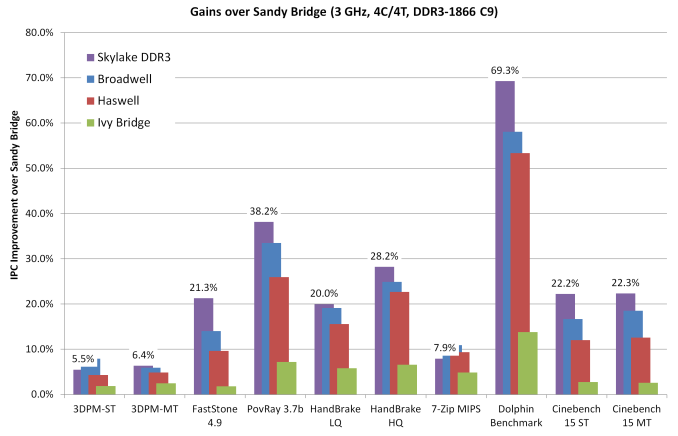

Here are the generational CPU results at 3.0 GHz:

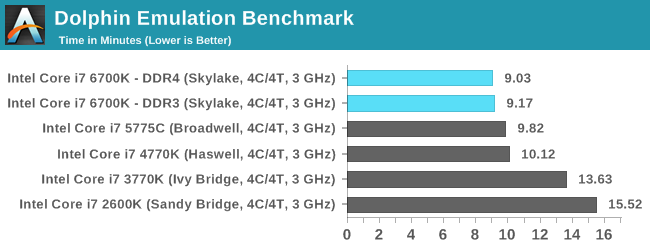

Dolphin Benchmark: link

Many emulators are bound by single-thread CPU performance, and general reports tended to suggest that Haswell provided a significant boost to emulator performance. This benchmark runs a Wii program that raytraces a complex 3D scene inside the Dolphin Wii emulator. Performance on this benchmark is a good proxy for the speed of Dolphin CPU emulation, which is an intensive single-core task using most aspects of a CPU. Results are given in minutes, where the Wii itself scores 17.53 minutes.

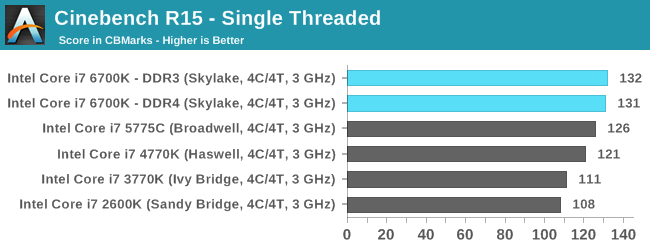

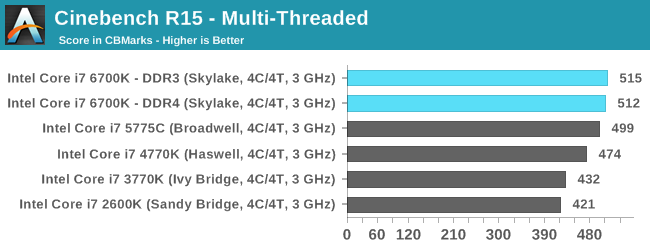

Cinebench R15

Cinebench is a benchmark based around Cinema 4D, and is fairly well known among enthusiasts for stressing the CPU for a provided workload. Results are given as a score, where higher is better.

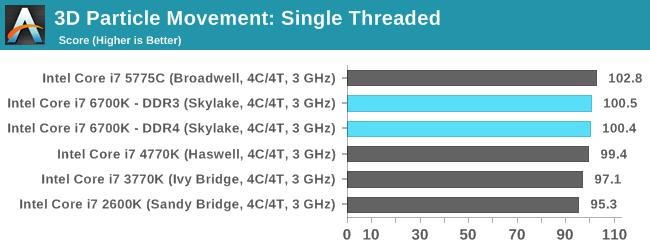

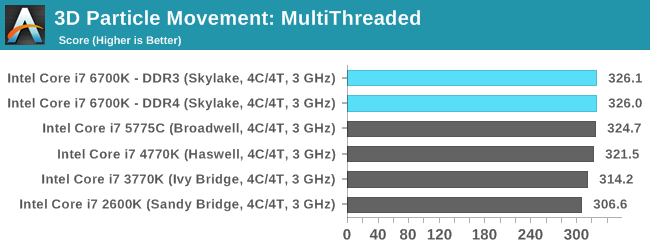

Point Calculations – 3D Movement Algorithm Test: link

3DPM is a self-penned benchmark, taking basic 3D movement algorithms used in Brownian Motion simulations and testing them for speed. High floating point performance, MHz and IPC win in the single thread version, whereas the multithread version has to handle the threads and loves more cores. For a brief explanation of the platform agnostic coding behind this benchmark, see my forum post here.

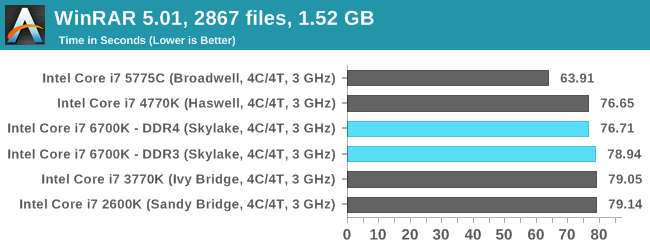

Compression – WinRAR 5.0.1: link

Our WinRAR test from 2013 is updated to the latest version of WinRAR at the start of 2014. We compress a set of 2867 files across 320 folders totaling 1.52 GB in size – 95% of these files are small typical website files, and the rest (90% of the size) are small 30 second 720p videos.

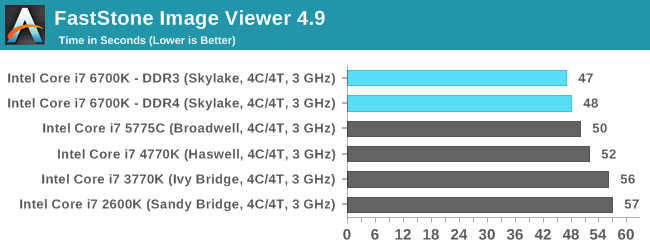

Image Manipulation – FastStone Image Viewer 4.9: link

Similarly to WinRAR, the FastStone test is updated for 2014 to the latest version. FastStone is the program I use to perform quick or bulk actions on images, such as resizing, adjusting for color and cropping. In our test we take a series of 170 images in various sizes and formats and convert them all into 640x480 .gif files, maintaining the aspect ratio. FastStone does not use multithreading for this test, and thus single threaded performance is often the winner.

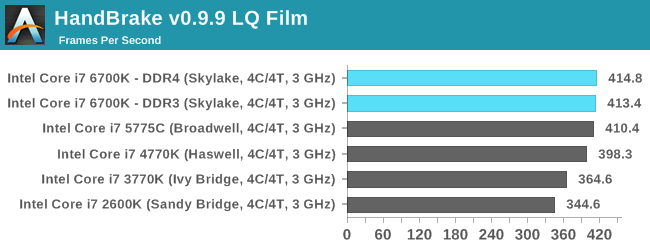

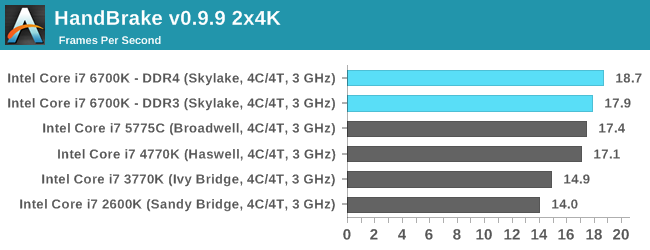

Video Conversion – Handbrake v0.9.9: link

Handbrake is a media conversion tool that was initially designed to help convert DVD ISOs and Video CDs into more common video formats. The principle today is still the same, primarily as an output for H.264 + AAC/MP3 audio within an MKV container. In our test we use the same videos as in the Xilisoft test, and results are given in frames per second.

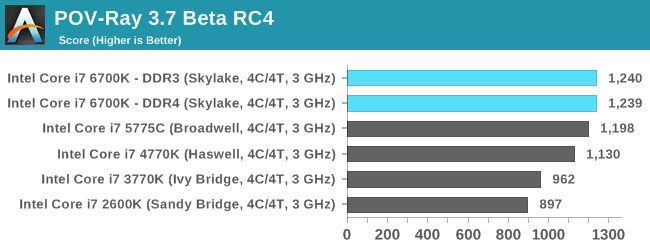

Rendering – PovRay 3.7: link

The Persistence of Vision RayTracer, or PovRay, is a freeware package for, as the name suggests, ray tracing. It is a pure renderer, rather than modeling software, but the latest beta version contains a handy benchmark for stressing all processing threads on a platform. We have been using this test in motherboard reviews to test memory stability at various CPU speeds to good effect – if it passes the test, the IMC in the CPU is stable for a given CPU speed. As a CPU test, it runs for approximately 2-3 minutes on high end platforms.

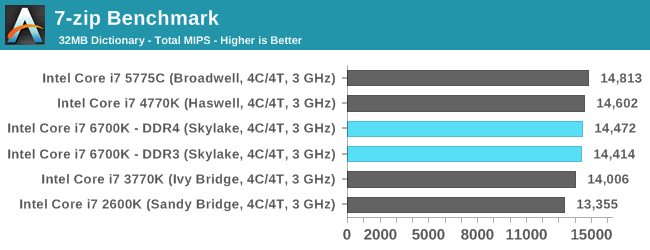

Synthetic – 7-Zip 9.2: link

As an open source compression tool, 7-Zip is a popular tool for making sets of files easier to handle and transfer. The software offers up its own benchmark, for which we report the result.

Overall: CPU IPC

Removing WinRAR as a benchmark because it gets boosted by the eDRAM in Broadwell, we get an interesting look at how each generation has evolved over time. Taking Sandy Bridge (i7-2600K) as the base, we have the following:

From a pure upgrade perspective, the IPC gain here for Skylake does not look great. In fact, in two benchmarks the IPC seems to have decreased – 3DPM in single thread mode and 7-Zip. What makes 3DPM interesting is that the multithread version still has some improvement at least, if only minor. This difference between MT and ST is more nuanced than first appearances suggest. Throughout the testing, it was noticeable that multithreaded results seem to (on average) get a better kick out of the IPC gain than single threaded. If this is true, it would suggest that Intel has somehow improved its thread scheduler or offered new internal hardware to deal with thread management. We’ll probably find out more at IDF later in the year.

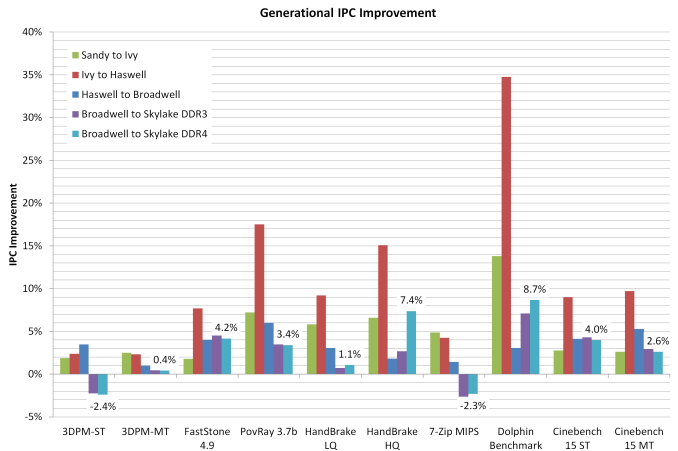

If we adjust this graph to show generation to generation improvement and include the DDR4 results:

This graph shows that:

Sandy Bridge to Ivy Bridge: Average ~5.8% Up

Ivy Bridge to Haswell: Average ~11.2% Up

Haswell to Broadwell: Average ~3.3% Up

Broadwell to Skylake (DDR3): Average ~2.4% Up

Broadwell to Skylake (DDR4): Average ~2.7% Up

Oh dear. Typically with an architecture update we see a bigger increase in performance than 2.7% IPC. Looking at matters purely from this perspective, Skylake does not come out well. These results suggest that Skylake is merely another minor upgrade in the performance metrics, and that a clock for clock result compared to Broadwell is not favorable. However, consider that very few people actually invested in Broadwell. If anything, Haswell was the last major mainstream processor generation that people actually purchased, which means that:

Haswell to Skylake (DDR3): Average ~5.7% Up.

This is more of a bearable increase, and it takes advantage of the fact that Broadwell on the desktop was a niche-focused launch. The other results in the review will be interesting to see.
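As a quick cross-check on the figures above, the per-generation averages can be compounded by multiplying the individual gains together. This is only an approximation: the averages quoted above were taken across benchmarks, so multiplying rounded averages will not reproduce them exactly. The variable and function names below are illustrative.

```python
from math import prod

# Average clock-for-clock gains quoted above, in percent (DDR3 results).
GEN_GAINS_PCT = {
    "Sandy Bridge -> Ivy Bridge": 5.8,
    "Ivy Bridge -> Haswell": 11.2,
    "Haswell -> Broadwell": 3.3,
    "Broadwell -> Skylake (DDR3)": 2.4,
}


def compound_gain_pct(gains_pct) -> float:
    """Combine successive percentage gains multiplicatively."""
    factor = prod(1.0 + g / 100.0 for g in gains_pct)
    return (factor - 1.0) * 100.0


haswell_to_skylake = compound_gain_pct(
    [GEN_GAINS_PCT["Haswell -> Broadwell"], GEN_GAINS_PCT["Broadwell -> Skylake (DDR3)"]]
)
sandy_to_skylake = compound_gain_pct(GEN_GAINS_PCT.values())

print(f"Haswell -> Skylake (DDR3), compounded:      ~{haswell_to_skylake:.1f}%")
print(f"Sandy Bridge -> Skylake (DDR3), compounded: ~{sandy_to_skylake:.1f}%")
```

Compounding the two most recent steps lands within about a tenth of a percentage point of the ~5.7% Haswell to Skylake average quoted above, and the same arithmetic puts four generations of clock-for-clock gains at roughly a quarter over Sandy Bridge.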

477 Comments


vdek - Thursday, August 6, 2015 - link

I'm still running my x58 motherboard. I ended up upgrading to a Xeon 5650 for $75, which is a 6 core 32nm CPU compatible with the x58. Overclocked at 4.2ghz on air, the thing has excellent gaming performance, I see absolutely no reason to upgrade to Skylake.

bischofs - Thursday, August 6, 2015 - link

Absolutely agree, My overclocked 920 still runs like a watch after 8 years. Not sure what Intel is doing these days, but lack of competition is really impacting this market.

stux - Friday, August 7, 2015 - link

I upgraded my 920 to a 990x, it runs at about 4.4ghz on air in an XPC chassis! and has 6/12 cores.

I bought it off ebay cheap, and with an SSD on a SATA3 card I see no reason to upgrade. It works fantastically well, and is pretty much as fast as any modern 4 core machine.

Samus - Sunday, October 25, 2015 - link

If you single GPU and don't go ultra-high-end then gaming is still relevant on x58, but it really isn't capable of SLI due to PCIe 2.0 and the lanes being reduced to 8x electrical when more than one 16x length slot is used. QPI also isn't very efficient by todays standards and at the time, AMD still had a better on-die memory controller, but Intel's first attempt was commendable, but completely overhauled with Sandy Bridge which offered virtually the same performance from 2 channels. Anybody who has run dual channel on X58 knows how bad it actually is and why triple channel is needed to keep it competitive with todays platforms.

I loved X58. It is undoubtedly the most stable platform I'd had since the 440BX. But as I said, by todays standards, it makes Sandy Bridge seem groundbreaking, not because of the IPC, but because of the chipset platform. The reduced power consumption, simplicity and overall smaller-size and lower cost of 60/70 series chipsets, then the incredibly simplified VRM layout in 80/90 chipsets (due to the ondie FIVR of Haswell) makes X58 "look" ancient, but as I said, still relevant.

Just don't load up the PCIe bus. A GPU, sound card and USB 3.0 controller is about as far as you want to go, and for the most part, as far as you need too!

vdek - Thursday, August 6, 2015 - link

Get a Xeon 5650, 6 core CPU, 32nm, will run at 4-4.2ghz all day on air. I upgraded my i7 920 the X5650 and I couldn't be happier. They go for about $70-80 on amazon or ebay. I'm planning on keeping my desktop for another 2-3 years, I upgraded the GPU to a GTX970 and it maxes out most of what I can throw at it. I don't really see my CPU as a bottleneck here.

mdw9604 - Tuesday, August 11, 2015 - link

Can you OC a Xeon 5650?

mapesdhs - Wednesday, August 12, 2015 - link

Of course, back then the main oc'ing method was still bclk-based, though X58 was a little more involved than that compared to P55 (uncore, etc.)

LCTR - Saturday, August 15, 2015 - link

I'd been pondering the 6700K until I saw these posts from 920 users :)

I use mine for gaming / video editing, it's running non-hyperthreaded at 4.2GHz on air (about 4Ghz with HT on)

I also upgraded my GPU to a 970 and have seen decent gaming performance - if I could jump to a X5650 and stretch things for 1-2 years that'd be great...

What sort of performance do you see from the X5650? Would it win 4GHz with HT enabled?

The Xeon 5650's don't need any special mobo support or anything, do they? I have a gigabyte GA-EX58-UD5

Nfarce - Wednesday, August 5, 2015 - link

Well sadly, ever since SB (which I have one that's 4 years old, a 2500K, alongside a newer Haswell 4690K), each new tick/tock has not been much. The days of getting 50% boost in performance between a few generations are long gone, let alone 100% boost, or doubling performance. Also keep in mind that there is a reason for this decrease in increased performance: as dies shrink, physics with electrons start becoming an issue. Intel has been focusing more on decreased power usage. At some point CPU manufacturers will need to look at an entirely different manufacturing material and design as silicon and traditional PCB design is coming to its limit.

Mr Perfect - Wednesday, August 5, 2015 - link

It's not even 30% in high-end gaming. There is a clear improvement between SB and Skylake, but why should I build a whole new PC for 5FPS? I can't justify that expense.

I'd be curious to see the high-end gaming benchmarks rerun with the next generation of GPUs. Will next gen GPUs care more about the CPU, or does DX12 eliminate the difference altogether?