The Intel 6th Gen Skylake Review: Core i7-6700K and i5-6600K Tested

by Ian Cutress on August 5, 2015 8:00 AM EST

Intel’s 6th Gen Skylake Conclusions

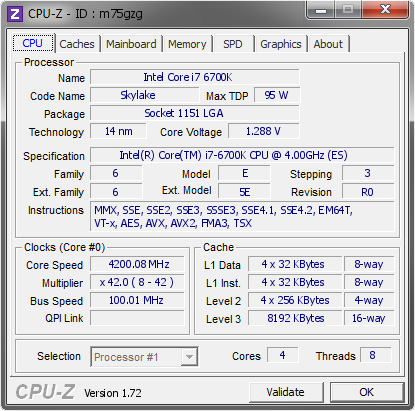

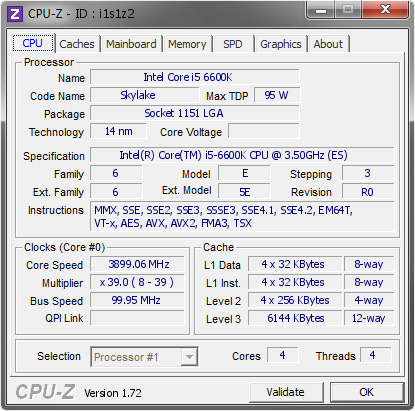

The new 6th Generation Skylake platform from Intel has launched today, and as part of the package there are two new processors, the Core i7-6700K and the Core i5-6600K, a new Z170 motherboard chipset with corresponding motherboards, and also a new market for DDR4 DRAM. The purpose of the Skylake platform launch is to update the mainstream PC market segment with better performance and enhanced connectivity.

What is Skylake?

Skylake is a new processor architecture based on Intel’s 14nm process using the newest generation of their FinFET lithographic technology, and the two overclockable K series CPUs are the first processors to be released into the wild. For some this is mildly confusing, given that Intel launched Broadwell for desktop (a die shrink "tick" from 22nm to 14nm) only a couple of months ago. Broadwell has only acted as a minor stopgap measure, fulfilling the requirements of an upgradable platform and taking the crown of the best integrated graphics processor for a socket. As a result of that minor role, a number of users will see Skylake more as an upgrade from Haswell, the last mainstream processor family that had a long market shelf life.

For this launch and review, details about Skylake have been relatively rare to come by even in Intel’s own press documents, and we are set to hear more details at Intel’s Developer Forum in mid-August. Nonetheless, we have been able to determine that Skylake has a raft of updates, focusing on fixed function hardware to accelerate certain workloads as well as decoupling the CPU and PCIe frequency domains on the silicon to allow for more precise overclocking.

The platform changes as well. The voltage regulators inside Haswell CPUs move back out on to the motherboard as they generated too much heat inside the CPU. This is good for overclockers, but it means the component count on Z170 motherboards, and with it the price, has the potential to go up. The Z170 chipset is the sole chipset being released today, with others coming later in the year. Z170 features an upgrade to DMI 3.0, giving faster CPU-to-chipset bandwidth and enabling a 26-lane high speed IO hub. Of these lanes, 20 can be configured as PCIe lanes under a strict set of rules, opening up the potential for more functionality on board. Most motherboards being launched today will use these lanes to provide M.2 slots, USB 3.1 Gen 2 ports via controllers, extra networking, extra PCIe slots and SATA Express connectivity. In our motherboard overview we have details on over 55 motherboards to look through.

The Results

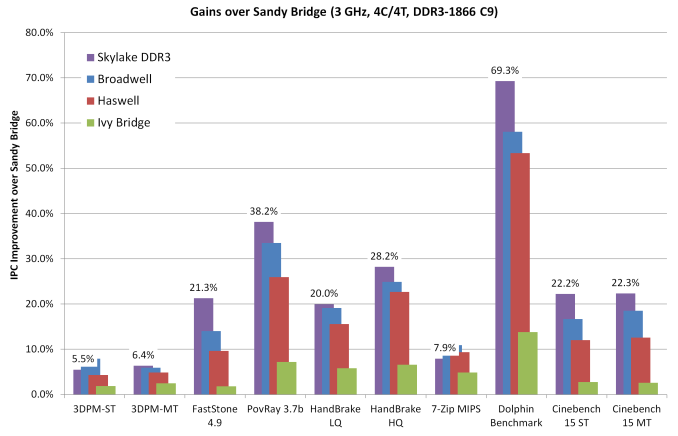

Overall, Skylake is not an earth shattering leap in performance. In our IPC testing, with CPUs at 3 GHz, we saw a 5.7% increase in performance over a Haswell processor at the same clockspeed and ~ 25% gains over Sandy Bridge. That 5.7% value masks the fact that between Haswell and Skylake, we have Broadwell, marking a 5.7% increase for a two generation gap.
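To put that two-generation figure in per-generation terms, here is a rough sketch of the arithmetic. It assumes the gain compounds in equal steps across Broadwell and Skylake, which our data does not establish, and the function name is ours purely for illustration.

```python
def per_generation_gain(total_gain: float, generations: int) -> float:
    """Average compounded gain per generation.

    Assumes each generation contributes an equal multiplicative step,
    which is a simplification rather than a measured result.
    """
    return (1.0 + total_gain) ** (1.0 / generations) - 1.0


# 5.7% Haswell -> Skylake at 3 GHz, spanning two generations (figure from the text).
gain = per_generation_gain(0.057, 2)
print(f"{gain * 100:.2f}% per generation")
```

Under that even-split assumption, the result works out to roughly 2.8% per generation.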

In our discrete gaming benchmarks, at 3 GHz Skylake actually performs worse than Haswell at an equivalent clockspeed, giving up an average of 1.3% performance. We don’t have much from Intel with which to analyze the architecture and see why this happens, and it is arguable whether the difference is noticeable, but it is there. Hopefully this is just a teething issue with the new platform.

When we ratchet the CPUs back up to their regular, stock clockspeeds, we see a gap worth discussing. Overall at stock, the i7-6700K is an average 37% faster than Sandy Bridge in CPU benchmarks, 19% faster than the i7-4770K, and 5% faster than the Devil’s Canyon based i7-4790K. Certain benchmarks like HandBrake, Hybrid x265, and Google Octane get substantially bigger gains, suggesting that Skylake’s strengths may lie in fixed function hardware under the hood.

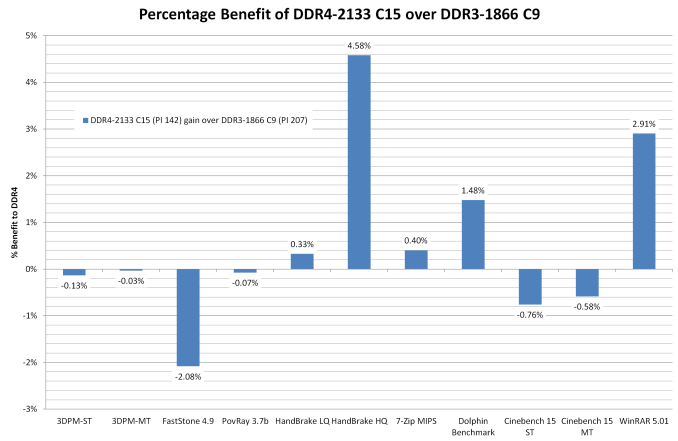

In full speed gaming benchmarks we have some situations that benefit from Skylake (GRID on high end graphics cards) and others that drop (Mordor on GTX 770), but the important aspect to consider is that, although Skylake supports both DDR3L and DDR4 memory, our results show that even against a fast DDR3 kit, a default-speed DDR4 kit is still worth upgrading to. On average there’s a small change in performance in favor of DDR4 (especially in integrated graphics), but DDR4 also confers benefits such as more memory per module and lower voltages to aid power consumption.

A quick turn to the overclocking situation on Skylake: from our small sample set, most of the CPUs achieved 4.6 GHz when pushed and 4.5 GHz comfortably, including the retail sample, which managed 4.5 GHz. Speaking to other reviewers, their results match or even slightly better our own, with one report of a 5.0 GHz gem of a processor. From our testing, Skylake seems to have more of a temperature barrier than a voltage barrier, so improving the cooling seems to be the task of the day to reach the high frequencies.

With all that, I’m going to make a bold statement.

Sandy Bridge, Your Time Is Up.

A large number of users invested in Intel based platforms during the Core 2 Quad, Nehalem and Sandy Bridge releases. Sandy Bridge was notable because it conferred a large performance gain at stock speeds, and with a good processor anyone could reach 4.7 GHz and even higher using a good high end cooler. With that, Intel has had a problem enticing these users to upgrade because their performance has been constantly matched by Ivy Bridge, Haswell and Broadwell – for every 5% IPC increase from the CPU, an average 200 MHz was lost on the good overclock and they would have to find a good overclocking CPU again. There was no great reason to upgrade, apart from chipset functionality.

That changes with Skylake.

From a clock-to-clock performance perspective, Skylake gives an average ~25% better performance in CPU based benchmarks, and when running both generations of processors at their stock speeds that increase jumps up to 37%. In specific tests, it is even higher. When you scale up to a 4.5 GHz Skylake against a 4.7 GHz Sandy Bridge, the 4% frequency difference is only a tiny portion of that. There are other added benefits, such as the move to a DDR4 memory topology that has denser memory modules, as well as PCIe storage and even PCIe 3.0 graphics connectivity.
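As a back-of-the-envelope check on that overclocked comparison, the sketch below multiplies the clock-to-clock advantage by the frequency ratio. It assumes performance scales linearly with clockspeed, which real workloads only approximate, and the function name is hypothetical.

```python
def relative_performance(ipc_ratio: float, freq_ghz: float, base_freq_ghz: float) -> float:
    """Estimated performance relative to the baseline CPU.

    Assumes performance = IPC x frequency, i.e. perfectly linear clock scaling.
    """
    return ipc_ratio * (freq_ghz / base_freq_ghz)


# ~25% clock-to-clock advantage, 4.5 GHz Skylake vs 4.7 GHz Sandy Bridge (figures from the text).
skylake_vs_sandy = relative_performance(1.25, 4.5, 4.7)
freq_deficit = 1.0 - 4.5 / 4.7

print(f"Frequency deficit: {freq_deficit * 100:.1f}%")
print(f"Estimated Skylake advantage: {(skylake_vs_sandy - 1.0) * 100:.1f}%")
```

Under that linear-scaling assumption, the overclocked Skylake keeps most of its clock-to-clock lead, since the frequency deficit is small next to the IPC gain.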

As I said above, Skylake is not necessarily the most ground breaking architecture over Haswell. It affords a 19% CPU performance gain over the i7-4770K and 5% over the i7-4790K. There is a minor issue with gaming that disappears when you use synthetics, but only to the tune of a couple of percentage points. For that minor hit, the package combo of processor, chipset and DDR4 should intrigue Sandy Bridge (and older) owners.

What Happens Now?

Next on the list for us is Intel’s Developer Forum in mid-August. Here Intel has said they will present details on their Skylake architecture and it will hopefully shed some further insight into what is going on under the hood with Skylake. We will have a full write up for you after the event.

There is still the rest of the Skylake stack to be released – non overclocking processors, low power processors, and the B/H/Q chipsets too. There is no official date on these except ‘later in the year’. We will also get to these when we have more information.

477 Comments


SkOrPn - Tuesday, December 13, 2016 - link

Well if you were paying attention to AMD news today, maybe you partially got your answer finally. Jim Keller yet again to the rescue. Ryzen up and take note... AMD is back...

CaedenV - Wednesday, August 5, 2015 - link

Agreed, seems like the only way to get a real performance boost is to up the core count rather than waiting for dramatically more powerful single-core parts to hit the market.

kmmatney - Wednesday, August 5, 2015 - link

If you have an overclocked SandyBridge, it seems like a lot of money to spend (new motherboard and memory) for a 30% gain in speed. I personally like to upgrade my GPU and CPU when I can get close the double the performance of the previous hardware. It's a nice improvement here, but nothing earth-shattering - especially considering you need a new motherboard and memory.

Midwayman - Wednesday, August 5, 2015 - link

And right as dx12 is hitting as well. That sandy bridge may live a couple more generations if dx12 lives up to the hype.

freaqiedude - Wednesday, August 5, 2015 - link

agreed I really don't see the point of spending money for a 30% speedbump in general, (as its not that much) when the benefit in games is barely a few percent, and my other workloads are fast enough as is.

If Intel would release a mainstream hexa/octa core I would be all over that, as the things I do that are heavy are all SIMD and thus fully multithreaded, but I can't justify a new pc for 25% extra performance in some area's. with CPU performance becoming less and less relevant for games that atleast is no reason for me to upgrade...

Xenonite - Thursday, August 6, 2015 - link

"If Intel would release a mainstream hexa/octa core I would be all over that, as the things I do that are heavy are all SIMD and thus fully multithreaded, but I can't justify a new pc for 25% extra performance in some area's."

SIMD actually has absolutely nothing to do with multithreading. SIMD refers to instruction-level parallellism, and all that has to be done to make use of it, for a well-coded app, is to recompile with the appropriate compiler flag. If the apps you are interested in have indeed been SIMD optimised, then the new AVX and AVX2 instructions have the potential to DOUBLE your CPU performance. Even if your application has been carefully designed with multi-threading in mind (which very few developers can, let alone are willing to, do) the move from a quad core to a hexa core CPU will yield a best-case performance increase of less than 50%, which is less than half what AVX and AVX2 brings to the table (with AVX-512 having the potential to again provide double the performance of AVX/AVX2).

Unfortunately it seems that almost all developers simply refuse to support the new AVX instructions, with most apps being compiled for >10 year old SSE or SSE2 processors.

If someone actually tried, these new processors (actually Haswell and Broadwell too) could easily provide double the performance of Sandy Bridge on integer workloads. When compared to the 900-series Nehalem-based CPUs, the increase would be even greater and applicable to all workloads (integer and floating point).

boeush - Thursday, August 6, 2015 - link

Right, and wrong. SIMD are vector based calculations. Most code and algorithms do not involve vector math (whether FP or integer). So compiling with or without appropriate switches will not make much of a difference for the vast majority of programs. That's not to say that certain specialized scenarios can't benefit - but even then you still run into a SIMD version of Amdahl's Law, with speedup being strictly limited to the fraction of the code (and overall CPU time spent) that is vectorizable in the first place. Ironically, some of the best vectorizable scenarios are also embarrassingly parallel and suitable to offloading on the GPU (e.g. via OpenCL, or via 3D graphics APIs and programmable shaders) - so with that option now widely available, technologically mature, and performant well beyond any CPU's capability, the practical utility of SSE/AVX is diminished even further. Then there is the fact that a compiler is not really intelligent enough to automatically rewrite your code for you to take good advantage of AVX; you'd actually have to code/build against hand-optimized AVX-centric libraries in the first place. And lastly, AVX 512 is available only on Xeons (Knights Landing Phi and Skylake) so no developer targeting the consumer base can take advantage of AVX 512.

Gonemad - Wednesday, August 5, 2015 - link

I'm running an i7 920 and was asking myself the same thing, since I'm getting near 60-ish FPS on GTA 5 with everything on at 1080p (more like 1920 x 1200), running with a R9 280. It seems the CPU would be holding the GFX card back, but not on GTA 5.

Warcraft - who could have guessed - is getting abysmal 30 FPS just standing still in the Garrison. However, system resources shows GFX card is being pushed, while the CPU barely needs to move.

I was thinking perhaps the multicore incompatibility on Warcraft would be an issue, but then again the evidence I have shows otherwise. On the other hand, GTA 5, that was created in the multicore era, runs smoothly.

Either I have an aberrant system, or some i7 920 era benchmarks could help me understand what exactly do I need to upgrade. Even specific Warcraft behaviour on benchmarks could help me, but I couldn't find any good decisive benchmarks on this Blizzard title... not recently.

Samus - Wednesday, August 5, 2015 - link

The problem now with nehalem and the first gen i7 in general isn't the CPU, but the x58 chipset and its outdated PCI express bus and quickpath creating a bottleneck. The triple channel memory controller went mostly unsaturated because of the other chipset bottlenecks which is why it was dropped and (mostly) never reintroduced outside of enthusiast x99 quad channel interface.

For certain applications the i7 920 is, amazingly, still competitive today, but gaming is not one of them. An SLI GTX 570 configuration saturates the bus, I found out first hand that is about the most you can get out of the platform.

D. Lister - Thursday, August 6, 2015 - link

Well said. The i7 9xx series had a good run, but now, as an enthusiast/gamer in '15, you wouldn't want to go any lower than Sandy Bridge.