The Intel 6th Gen Skylake Review: Core i7-6700K and i5-6600K Tested

by Ian Cutress on August 5, 2015 8:00 AM EST

DDR4 vs DDR3L on the CPU

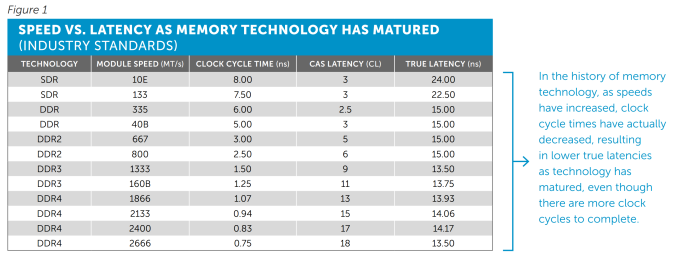

One of the big questions when DDR4 launched was how it compared to DDR3. Was it better, or worse? By default, DDR4 drops the operating voltage to 1.2 volts from 1.5 volts, making it more power efficient, and the standard increases the maximum capacity of an unbuffered memory module. There are other enhancements as well, such as per-IC voltage drop control and a design that aids DRAM placement on motherboards. But there was one big, scary number: a CAS Latency of 15 (known as C15 or CL15).

Let’s do a quick memory recap on frequency (technically the transfer rate, but the two are used interchangeably here) against latency.

The CAS latency is the number of clocks between an access request from the memory controller and the memory actually acting on that request. So a CL of 15 means there are 15 clocks between the request and getting access. Generally, a lower CL is better.

The frequency is the rate at which those clocks occur. DDR stands for Double Data Rate, which means there are two data transfers per clock cycle, one on the rising edge and one on the falling edge of the clock signal. The number quoted for a kit (e.g. DDR4-2133) is therefore the transfer rate, and the actual clock runs at half of it, so the time taken for one clock is two divided by the transfer rate.

But the important thing here is that the latency is a number of clocks, and thus is just a number, while the frequency determines how fast those clocks go. On its own, the CAS Latency value doesn’t say much. The important metric comes from using the two together: the true latency is the CAS Latency multiplied by the time taken per clock. Here are the true latency values from Crucial’s recent whitepaper on the subject:

DDR3-1600 C11: 13.75 nanoseconds

DDR4-2133 C15: 14.06 nanoseconds
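The true latency calculation can be reproduced with a small helper. This is a minimal sketch, assuming the number in the kit name is the transfer rate in MT/s; because DDR makes two transfers per clock, one clock lasts 2000 / rate nanoseconds. The function name is hypothetical.

```python
def true_latency_ns(transfer_rate_mts: float, cas_latency: int) -> float:
    """Return the CAS latency in nanoseconds.

    transfer_rate_mts is the number in the kit name (e.g. 2133 for DDR4-2133),
    in mega-transfers per second. DDR moves data on both clock edges, so the
    clock runs at half this rate and one clock lasts 2000 / rate nanoseconds.
    """
    clock_period_ns = 2000.0 / transfer_rate_mts
    return cas_latency * clock_period_ns


for name, rate, cl in [("DDR3-1600 C11", 1600, 11), ("DDR4-2133 C15", 2133, 15)]:
    print(f"{name}: {true_latency_ns(rate, cl):.2f} ns")
# DDR3-1600 C11: 13.75 ns
# DDR4-2133 C15: 14.06 ns
```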

In fact, despite the development of new memory interfaces, the true latency of DRAM at default specifications has stayed roughly the same since the original DDR. As memory modules get faster, the CAS Latency rises to keep higher frequency memory stable, but the true latency stays about the same.

Normally in our DRAM reviews I refer to the performance index (PI), the transfer rate divided by the CAS Latency, which serves a similar purpose in gauging general performance:

DDR3-1600 C11: 1600/11 = 145.5

DDR4-2133 C15: 2133/15 = 142.2

A faster transfer rate gives a bigger number, and so does a lower CL. When comparing memory kits, if the difference in PI is greater than 10, the kit with the bigger performance index tends to win out, though for kits with a similar PI the one with the higher frequency is preferred.
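The rule of thumb above can be written down as a short comparison function. This is a hypothetical sketch: the `Kit` type and function names are illustrative, the PI is the transfer rate divided by the CAS latency, and the threshold of 10 and the frequency tie-break come straight from the paragraph above.

```python
from typing import NamedTuple


class Kit(NamedTuple):
    name: str
    rate_mts: int  # transfer rate, the number in the kit name (MT/s)
    cl: int        # CAS latency, in clocks


def performance_index(kit: Kit) -> float:
    """Performance index: transfer rate divided by CAS latency."""
    return kit.rate_mts / kit.cl


def preferred_kit(a: Kit, b: Kit, threshold: float = 10.0) -> Kit:
    """A PI gap above `threshold` decides it; otherwise prefer the faster kit."""
    pi_a, pi_b = performance_index(a), performance_index(b)
    if abs(pi_a - pi_b) > threshold:
        return a if pi_a > pi_b else b
    return a if a.rate_mts >= b.rate_mts else b


ddr3 = Kit("DDR3-1600 C11", 1600, 11)
ddr4 = Kit("DDR4-2133 C15", 2133, 15)
print(round(performance_index(ddr3), 1), round(performance_index(ddr4), 1))  # 145.5 142.2
print(preferred_kit(ddr3, ddr4).name)  # DDR4-2133 C15
```

Here the two PIs differ by only about 3, so the tie-break on transfer rate picks the DDR4 kit.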

“But who uses DDR3-1600 C11? Isn’t most memory like DDR3-1866 C9?”

This is a valid point: as DDR3 has matured, kits running faster than default specifications have become normal. The performance index for this kit is:

DDR3-1866 C9: 1866/9 = 207.3

In the grand scheme of things, a PI of 207 is quite large, and very high for DDR3L. A few DDR3 memory kits go beyond this, up to a PI of 220, and an overclock beyond normal voltages might reach 240, but a value of 207 shows the maturity of the DDR3 market. In the current DDR4 market, we can pick up DDR4-3000 C15 kits, which similarly sit in the 200 bracket (3000/15 = 200).
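Because the performance index and true latency are built from the same two numbers, one converts directly into the other. A short derivation, assuming $R$ is the transfer rate in MT/s (the number in the kit name) and that one clock lasts $2000/R$ ns because DDR makes two transfers per clock:

$$
\text{PI} = \frac{R}{\text{CL}}, \qquad
t_{\text{true}} = \text{CL} \times \frac{2000}{R}\ \text{ns} = \frac{2000}{\text{PI}}\ \text{ns}
$$

So DDR3-1866 C9 (PI 207.3) works out to about 9.65 ns, DDR4-3000 C15 (PI 200) to 10.00 ns, and DDR4-2133 C15 (PI 142.2) to about 14.06 ns: a higher PI is simply a lower true latency.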

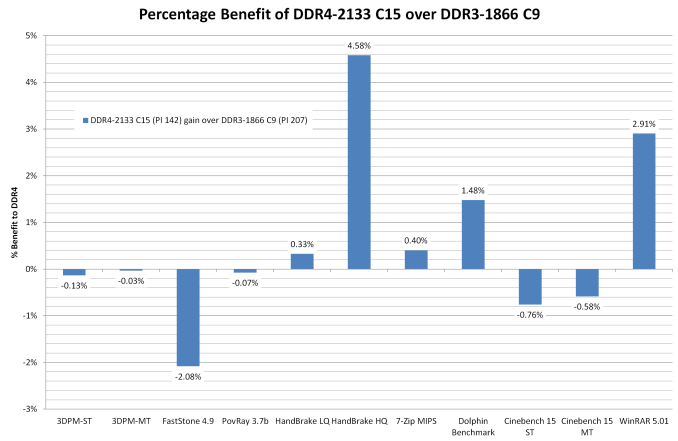

I’ve prefaced our DDR3L vs DDR4 testing with all this as a response to ‘large CL = bad’. In reality, you have to compare both numbers. Now that we have a platform that supports both, and we were able to source a beta DDR3L/DDR4 combination motherboard to test them on, we can see how ‘regular DDR4’ squares up against ‘high performance DDR3(L)’.

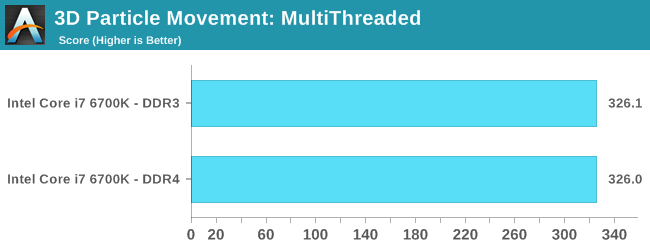

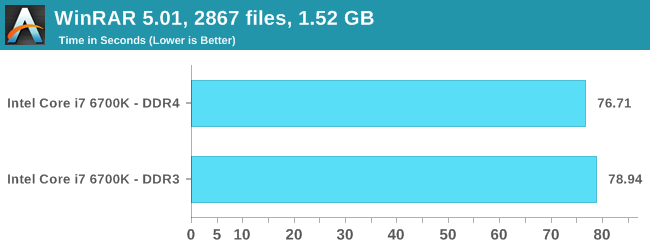

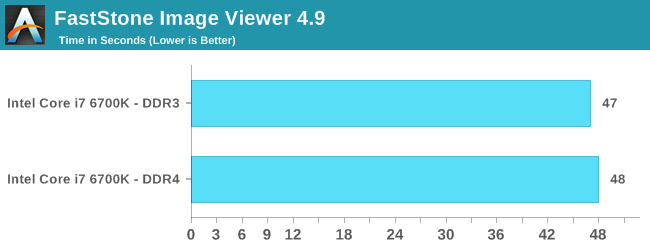

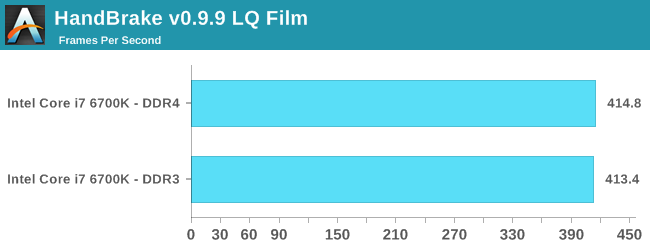

For these tests, both memory configurations were run with the CPU at 3.0 GHz and hyperthreading disabled. Memory settings were DDR4-2133 C15 and DDR3-1866 C9 respectively.

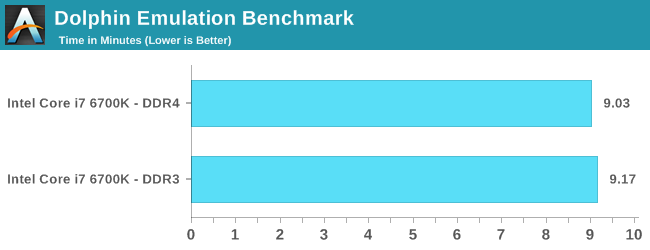

Dolphin Benchmark

Many emulators are bound by single-thread CPU performance, and general reports suggested that Haswell provided a significant boost to emulator performance. This benchmark runs a Wii program that raytraces a complex 3D scene inside the Dolphin Wii emulator. Performance on this benchmark is a good proxy for the speed of Dolphin CPU emulation, which is an intensive single-core task using most aspects of a CPU. Results are given in minutes (lower is better), where the Wii itself scores 17.53 minutes.

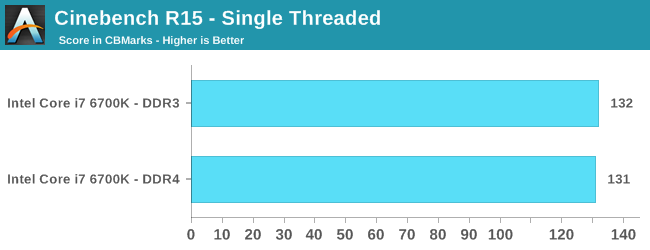

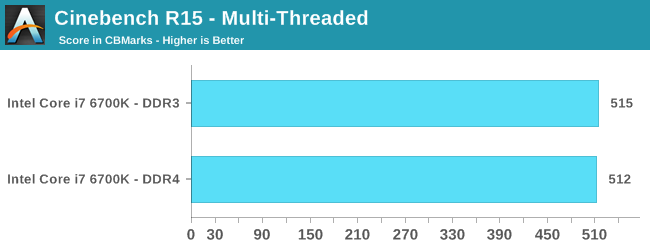

Cinebench R15

Cinebench is a benchmark based around Cinema 4D, and is well known among enthusiasts for stressing the CPU with a fixed workload. Results are given as a score, where higher is better.

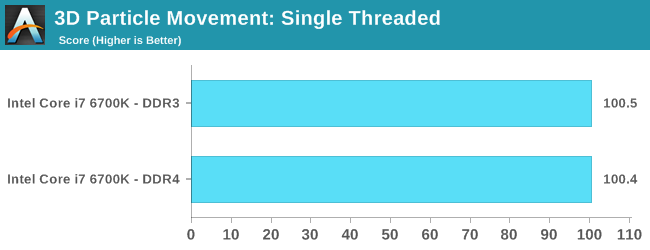

Point Calculations – 3D Movement Algorithm Test

3DPM is a self-penned benchmark that takes basic 3D movement algorithms used in Brownian motion simulations and tests them for speed. High floating point performance, MHz and IPC win in the single-threaded version, whereas the multithreaded version has to handle the threads and benefits from more cores. For a brief explanation of the platform-agnostic coding behind this benchmark, see my forum post here.
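The 3DPM source is not reproduced here, but a hypothetical sketch of one basic 3D movement step gives a feel for the kind of work involved: each particle repeatedly takes a fixed-length step in a uniformly random direction. This assumes the direction is drawn by normalizing a 3D Gaussian sample, which is only one of several ways to do it; the function names are illustrative and not taken from 3DPM.

```python
import math
import random


def random_unit_vector(rng: random.Random) -> tuple[float, float, float]:
    """Uniformly random direction: normalize a 3D Gaussian sample."""
    while True:
        x, y, z = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        norm = math.sqrt(x * x + y * y + z * z)
        if norm > 0.0:  # guard against the (vanishingly rare) zero vector
            return x / norm, y / norm, z / norm


def brownian_walk(steps: int, step_length: float = 1.0, seed: int = 0) -> tuple[float, float, float]:
    """Move one particle from the origin by `steps` fixed-length random steps."""
    rng = random.Random(seed)
    px = py = pz = 0.0
    for _ in range(steps):
        dx, dy, dz = random_unit_vector(rng)
        px += step_length * dx
        py += step_length * dy
        pz += step_length * dz
    return px, py, pz


# Each particle is independent, so a multithreaded version can hand
# different particles (seeds) to different threads.
final_positions = [brownian_walk(10_000, seed=s) for s in range(4)]
```

The inner loop is almost entirely floating point math, which is why clock speed and IPC dominate the single-threaded result.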

Compression – WinRAR 5.0.1

Our WinRAR test from 2013 was updated to the latest version of WinRAR at the start of 2014. We compress a set of 2867 files across 320 folders, totaling 1.52 GB: 95% of these files are small, typical website files, and the rest (90% of the total size) are 30-second 720p videos.
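Those percentages imply a very lopsided data set: thousands of tiny files plus a little over a hundred much larger videos. A rough back-of-the-envelope estimate, treating 95% and 90% as exact and assuming decimal units (1 GB = 1000 MB); the helper name is hypothetical:

```python
def dataset_breakdown(total_files: int, total_gb: float,
                      small_file_share: float = 0.95,
                      video_size_share: float = 0.90) -> dict:
    """Approximate split of the WinRAR test set from the stated percentages."""
    small_files = round(total_files * small_file_share)
    videos = total_files - small_files
    video_gb = total_gb * video_size_share
    small_gb = total_gb - video_gb
    return {
        "small_files": small_files,
        "videos": videos,
        "avg_video_mb": video_gb * 1000 / videos,
        "avg_small_file_kb": small_gb * 1_000_000 / small_files,
    }


info = dataset_breakdown(2867, 1.52)
print(info["small_files"], info["videos"])  # 2724 143
print(f'{info["avg_video_mb"]:.1f} MB per video, {info["avg_small_file_kb"]:.0f} KB per small file')
# 9.6 MB per video, 56 KB per small file
```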

Image Manipulation – FastStone Image Viewer 4.9

Similarly to WinRAR, the FastStone test was updated to the latest version for 2014. FastStone is the program I use to perform quick or bulk actions on images, such as resizing, adjusting color and cropping. In our test we take a series of 170 images in various sizes and formats and convert them all into 640x480 .gif files, maintaining the aspect ratio. FastStone does not use multithreading for this test, so single-threaded performance usually decides the winner.
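Keeping the aspect ratio while targeting 640x480 amounts to scaling each image by the smaller of the two axis ratios. A minimal sketch, assuming each image is fitted inside the 640x480 box; FastStone's exact rounding and upscaling behavior is an assumption here (this version also scales smaller images up), and the helper name is hypothetical:

```python
def fit_within(width: int, height: int, max_w: int = 640, max_h: int = 480) -> tuple[int, int]:
    """Scale (width, height) to fit inside max_w x max_h, preserving aspect ratio."""
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    scale = min(max_w / width, max_h / height)  # the tighter axis decides
    return max(1, round(width * scale)), max(1, round(height * scale))


print(fit_within(4000, 3000))  # (640, 480): already 4:3
print(fit_within(1920, 1080))  # (640, 360): width-limited 16:9
print(fit_within(3000, 4000))  # (360, 480): height-limited portrait
```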

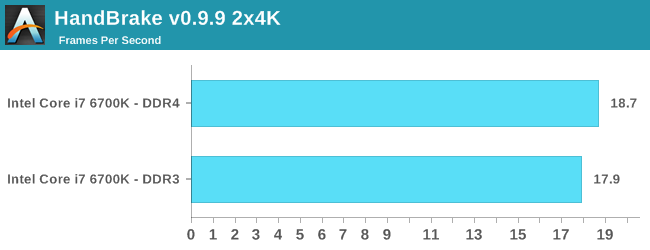

Video Conversion – Handbrake v0.9.9

Handbrake is a media conversion tool that was initially designed to help convert DVD ISOs and Video CDs into more common video formats. The principle today is still the same, primarily outputting H.264 video with AAC/MP3 audio in an MKV container. In our test we use the same videos as in the Xilisoft test, and results are given in frames per second.

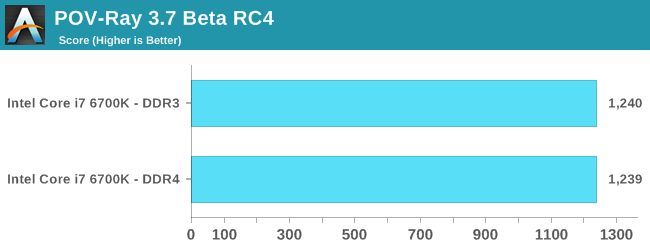

Rendering – PovRay 3.7

The Persistence of Vision RayTracer, or PovRay, is a freeware package for, as the name suggests, ray tracing. It is a pure renderer rather than modeling software, but the latest beta version contains a handy benchmark for stressing all processing threads on a platform. We have been using this test in motherboard reviews to test memory stability at various CPU speeds to good effect: if it passes the test, the IMC in the CPU is stable for a given CPU speed. As a CPU test, it runs for approximately 2-3 minutes on high end platforms.

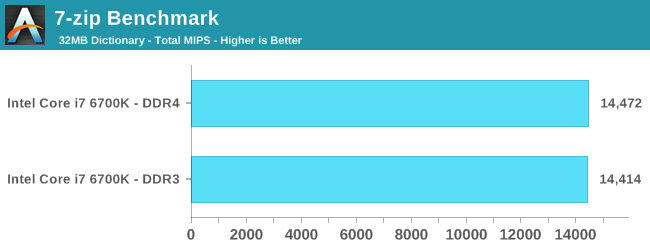

Synthetic – 7-Zip 9.2

As an open source compression tool, 7-Zip is a popular way of making sets of files easier to handle and transfer. The software offers its own built-in benchmark, and we report its result.

Overall: DDR4 vs DDR3L on the CPU

Pretty sure the results speak for themselves.

Comparing default DDR4 to a high performance DDR3 memory kit is almost an equal contest. The faster frequency helps in large frame video encoding (HandBrake HQ) as well as in WinRAR, which is normally memory intensive. The only real benchmark loss was FastStone, which regressed by one second out of 48 (roughly 2%).

The end result, looking at the CPU test scores, is that upgrading to DDR4 doesn’t degrade performance relative to your high end DDR3 kit, and you get the added benefits of a future upgrade path, faster speeds, lower power consumption thanks to the lower voltage, and higher density modules.

477 Comments


medi03 - Thursday, August 6, 2015

Yeah. Blaming Intel because HP didn't want to use FASTER AMD CPUs FOR FREE, fearing Intel's illegal revenge, is just nuts.

AMD Athlon 64s beat Intel in all regards: they were faster, cheaper and less power hungry. Yet Intel was selling several times more Prescotts.

Not being able to profit even in a situation where you have a superior product (despite a much more modest R&D budget), yeah, why blame Intel.

MrBungle123 - Sunday, August 9, 2015

In the Athlon 64 days, yes, AMD had a better product, but the cold hard truth behind the curtain was that AMD didn't have the manufacturing capacity to supply everyone Intel was feeding chips to.

silverblue - Thursday, August 6, 2015

A "tweaked 8-core Ph2"? Putting aside the significant changes that would've been required to the fetch and retire hardware (the integer units themselves were very capable but were underutilised), plus a better IMC and all the modern instruction sets that K10 didn't support, AMD had already developed its replacement. It probably would've buried them to have to shelve Bulldozer (twice, it turns out) and redevelop what was essentially a 12-year-old microarchitecture.

AMD were under pressure to deliver Bulldozer, hence the cutting of corners and the decision to go with GF's poor 32nm process, as they simply didn't have any alternative (plus I imagine they were promised far more than GF could deliver). Phenom II was not enough against Nehalem, let alone Sandy Bridge.

Blaming Intel doesn't help either, as AMD couldn't exactly saturate the market with their products even when they were fabbing them themselves. However, I think the huge drop in mainstream CPU prices when Core 2 was released, along with the huge price paid for ATi, did more damage than any bribing of retailers and systems manufacturers.

nikaldro - Wednesday, August 5, 2015

40% over Excavator, with 8 cores, good clock speeds and good pricing doesn't sound that bad. I'll wait till Zen comes out, then decide.

Spoelie - Thursday, August 6, 2015

The IPC difference between Piledriver and Skylake amounts to 80%... Let's hope Excavator's IPC is better than anticipated and 40% is sandbagging it a bit.

Given AMD's track record of overpromising and underdelivering, I'm afraid Zen will massively disappoint.

Asomething - Thursday, August 6, 2015

Well, it will only be behind by something like 15-25% if the difference between Piledriver and Skylake is 80%, since Piledriver to Excavator is supposed to be a good 20% jump. If AMD can manage to catch up in any meaningful way and make chips that can touch 5 GHz, then things might turn out OK.

mapesdhs - Thursday, August 6, 2015

Catching up will not be good enough. They need to be usefully competitive to pull people away from Intel into a platform switch, especially businesses, who have to think about this sort of thing for the long haul, and AMD's track record has been pretty woeful in this regard. I hope they can bring it to the table with Zen, but I'll believe it when I see it. It's highly unlikely Intel isn't planning to either splat its prices or shove up performance, etc., if they need to when Zen comes out, especially for consumer CPUs. We know what's really possible based on how many cores, TDP, clock rates, etc. are used for the Xeons, but that potential just hasn't been put into a consumer chip yet.

Remember, Intel could have released an 8-core for X79, but they didn't because there was no need; indeed the 3930K *is* an 8-core, just with 2 cores disabled (read the reviews). Ever since then, again and again, Intel has held back what it's perfectly capable of producing if it wanted to. The low clock of the 5960X is yet another example; it could easily be much higher.

MapRef41N93W - Friday, August 7, 2015

You're assuming it's going to be a flat 40% over Excavator and not a best-case-scenario 40% (like every single AMD future performance projection always is...). It's more than likely a flat 20% IPC increase, which puts it even behind Nehalem IPC-wise.

On top of that, it's AMD's first FinFET part (look at the penalty Intel paid in clock speed with the transition to FinFET with IB/HW) and a transition to a new scalable uArch (again, look at the clock speed hit Intel took when going from NetBurst to the scalable Core arch, very similar to what AMD is doing now actually), so I can see Zen parts clocking horribly. Being on a Samsung node that is designed with low power in mind won't help their case either.

You may get an 8-core Zen part for $300-$400, but it probably won't clock worth a damn and will end up at 3.5-4GHz on average. So it would be a much worse choice than a 5820K for most people.

mapesdhs - Wednesday, August 12, 2015

Btw, I wasn't assuming anything about Zen; I really haven't a clue how it'll compare to Intel's offerings of the day. I hope it's good, but with all that's happened before, I hope for the best but expect the worst, though I'd like to be wrong.

Azix - Friday, August 21, 2015

You guys are being pretty negative on AMD. AMD tried to do an 8-core chip on 32nm; maybe that was their mistake. The market wasn't even ready, considering how long ago that was and where we are now. I do think Intel got them pretty badly with their cheating.

The next processors are on a much better process. Based on the process alone, we would expect significantly more performance than some seem willing to allow. Not to mention the original architecture was designed on a 32nm process. It's no surprise it would fall that far behind Intel, who is currently on 14nm. As time progresses, though, those process jumps will take Intel longer and longer, and AMD will be much closer. Next year will be the first time in a long while that these two are on the same process (or a similar one, anyway), and that will last till at least 2017. AMD should be able to pick up some CPU sales next year and hopefully return to profitability. Intel also enjoys DDR4 support.

Stop pushing old 32nm architectures and crappy motherboards.