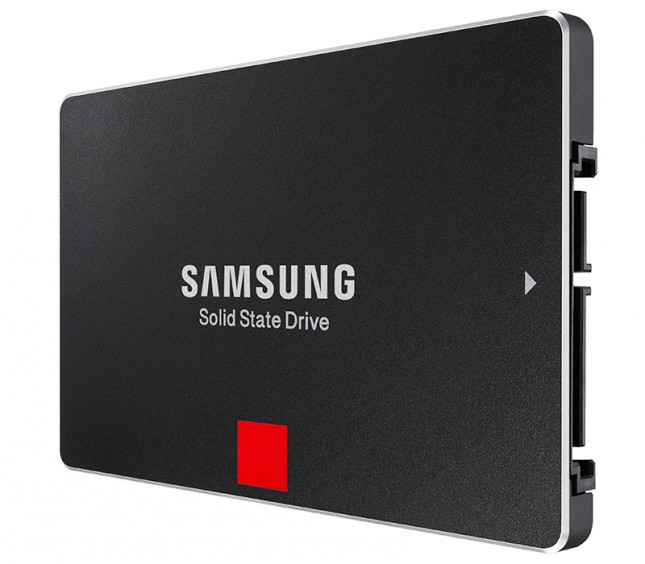

The 2TB Samsung 850 Pro & EVO SSD Review

by Kristian Vättö on July 23, 2015 10:00 AM EST

During the early days of SSDs, we saw rather quick development in capacities. The very first SSDs were undoubtedly small, generally 32GB or 64GB, but there was a need for higher capacities to make SSDs more usable in client environments. MLC NAND caused a rapid decline in prices and the capacities quickly increased to 128GB and 256GB. 512GB also came along fairly soon, but for a long while the 512GB drives cost more than a decent gaming PC with prices being over $1000.

I would argue that the 512GB drives were introduced too early: adoption was minimal due to the absurd price. The industry learned from that. Instead of pushing 1TB SSDs to market at over $1000, it waited until 2013, when Crucial introduced the M500 with the 960GB model priced reasonably at $600. Nowadays 1TB has become a common capacity in almost every OEM's lineup, thanks to both lower NAND prices and controllers being sophisticated enough to manage 1TB of NAND. The next milestone is obviously the multi-terabyte era, which we are entering with the release of the 2TB Samsung 850 Pro and EVO models.

Breaking capacity thresholds involves work on both the NAND and the controller side. All controllers have a fixed number of dies they can talk to, and for modern 8-channel controllers with eight chip enables (CEs) per channel the limit is typically 64 dies. With 128Gbit (16GB) being the common NAND die capacity today, 64 dies yield 1,024GB or 1TB (as it's often marketed). It's possible to use a single CE to manage more than one die (which is what e.g. Silicon Motion does to achieve 1TB with a 4-channel controller), but that adds complexity to the firmware design and has a negative performance impact, as two dies on the same chip enable can't be accessed simultaneously.
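The die-count arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function name and the `dies_per_ce` parameter are made up here, and the 4-channel layout is a hypothetical configuration, not a vendor specification.

```python
def max_raw_capacity_gb(channels, ces_per_channel, die_gbit, dies_per_ce=1):
    """Raw NAND capacity (in binary GB) that a controller can address."""
    total_dies = channels * ces_per_channel * dies_per_ce
    return total_dies * die_gbit / 8  # 8 Gbit per GB


# Figures from the text: 8 channels, 8 CEs per channel, 128Gbit dies.
eight_channel = max_raw_capacity_gb(8, 8, 128)

# Hypothetical 4-channel layout that shares each CE between two dies.
# The 8 CEs per channel used here is an assumption for illustration.
four_channel_shared = max_raw_capacity_gb(4, 8, 128, dies_per_ce=2)

print(eight_channel, four_channel_shared)
```

Both layouts reach the same raw capacity, but in the shared-CE case the two dies behind each chip enable cannot be accessed at the same time, which is the performance cost described above.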

Increasing NAND capacity per die is one way to work around the channel/CE limitation, but it's generally not the most efficient way. First off, doubling the capacity of the die increases complexity substantially because you are effectively dealing with twice the number of transistors per die. The second drawback is reduced write performance, especially at smaller capacities: SSDs rely heavily on parallelism for performance, so doubling the capacity per die halves the number of dies, and hence the parallelism, at any given drive capacity. That reduces the usability of the die in capacity-sensitive applications such as eMMC storage, which don't have many dies to begin with. This matters because the same dies are often used in applications ranging from mobile to enterprise.

The new MHX controller

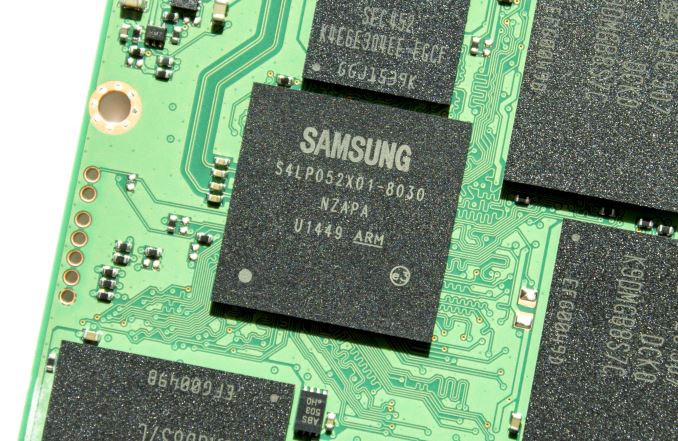

The real bottleneck, however, is the DRAM controller. Today's NAND mapping table designs tend to require about 1MB of DRAM per 1GB of NAND for optimal performance, so breaking the 1TB limit requires a DRAM controller capable of supporting 2GB of DRAM. From a design standpoint, implementing a beefier DRAM controller isn't a massive challenge, but it eats both die and PCB area and hence increases cost. Given that 2TB SSDs are currently a relatively small niche, embedding a DRAM controller with 2GB support in a mainstream controller isn't very economical, which is why today's client-grade SSD controllers usually support up to 1GB to increase cost efficiency.
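As a rough sketch of that rule of thumb, the snippet below estimates the mapping-table DRAM for a given NAND capacity and checks it against the maximum DRAM figures listed in this article's controller comparison table. The function names are illustrative, and the MGX limit is marked "(?)" in the table, so it should be treated as unconfirmed.

```python
MB_PER_GB_OF_NAND = 1  # rule of thumb quoted in the text

# Maximum supported DRAM in MB, per the controller comparison table.
# The MGX value is marked "(?)" in the source and is unconfirmed.
MAX_DRAM_MB = {"MDX": 1024, "MEX": 1024, "MGX": 512, "MHX": 2048}


def mapping_dram_mb(nand_gb):
    """Approximate DRAM (MB) needed for the mapping table."""
    return nand_gb * MB_PER_GB_OF_NAND


def controllers_for(nand_gb):
    """Controllers whose DRAM limit covers the estimated requirement."""
    needed = mapping_dram_mb(nand_gb)
    return [name for name, limit in MAX_DRAM_MB.items() if limit >= needed]


print(mapping_dram_mb(2048))   # DRAM estimate for 2TB of NAND, in MB
print(controllers_for(1024))   # candidates for a 1TB drive
print(controllers_for(2048))   # candidates for a 2TB drive
```

Under these assumptions only the MHX has enough DRAM headroom for a 2TB drive, which matches the reasoning above.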

Initially the 850 EVO was supposed to carry a 2TB SKU at the time of launch, but Samsung didn't consider the volume to be high enough. As Samsung is the number one manufacturer of client SSDs and supplies millions of drives to PC OEMs, the company is not really in the business of making low-volume niche products, so the release of 2TB client SSDs was postponed until lower pricing could bring higher demand.

Comparison of Samsung SSD Controllers

| | MDX | MEX | MGX | MHX |
| --- | --- | --- | --- | --- |
| Core Architecture | ARM Cortex R4 | ARM Cortex R4 | ARM Cortex R4 | ARM Cortex R4 |
| # of Cores | 3 | 3 | 2 | 3 |
| Core Frequency | 300MHz | 400MHz | 550MHz | 400MHz |
| Max DRAM | 1GB | 1GB | 512MB (?) | 2GB |
| DRAM Type | LPDDR2 | LPDDR2 | LPDDR2 | LPDDR3 |

The new 2TB versions of the 850 Pro and EVO both use Samsung's new MHX controller. I was told it's otherwise identical to the MEX besides the DRAM controller supporting up to 2GB of LPDDR3, whereas the MEX only supports 1GB of LPDDR2. The MGX is the lighter version of MEX with two higher clocked cores instead of three slower ones, and it's found in the 120GB, 250GB and 500GB EVOs.

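Samsung SSD 850 Pro Specifications

| Capacity | 128GB | 256GB | 512GB | 1TB | 2TB |
| --- | --- | --- | --- | --- | --- |
| Controller | MEX | MEX | MEX | MEX | MHX |
| NAND | Samsung 32-layer MLC V-NAND | Samsung 32-layer MLC V-NAND | Samsung 32-layer MLC V-NAND | Samsung 32-layer MLC V-NAND | Samsung 32-layer MLC V-NAND |
| NAND Die Capacity | 86Gbit | 86Gbit | 86Gbit | 86Gbit | 128Gbit |
| DRAM | 256MB | 512MB | 512MB | 1GB | 2GB |
| Sequential Read | 550MB/s | 550MB/s | 550MB/s | 550MB/s | 550MB/s |
| Sequential Write | 470MB/s | 520MB/s | 520MB/s | 520MB/s | 520MB/s |
| 4KB Random Read | 100K IOPS | 100K IOPS | 100K IOPS | 100K IOPS | 100K IOPS |
| 4KB Random Write | 90K IOPS | 90K IOPS | 90K IOPS | 90K IOPS | 90K IOPS |
| DevSleep Power | 2mW | 2mW | 2mW | 2mW | 5mW |
| Slumber Power | Max 60mW | Max 60mW | Max 60mW | Max 60mW | Max 60mW |
| Active Power (Read/Write) | Max 3.3W / 3.4W | Max 3.3W / 3.4W | Max 3.3W / 3.4W | Max 3.3W / 3.4W | Max 3.3W / 3.4W |
| Encryption | AES-256, TCG Opal 2.0 & IEEE-1667 (eDrive supported) | AES-256, TCG Opal 2.0 & IEEE-1667 (eDrive supported) | AES-256, TCG Opal 2.0 & IEEE-1667 (eDrive supported) | AES-256, TCG Opal 2.0 & IEEE-1667 (eDrive supported) | AES-256, TCG Opal 2.0 & IEEE-1667 (eDrive supported) |
| Endurance | 150TB | 150TB | 300TB | 300TB | 300TB |
| Warranty | 10 years | 10 years | 10 years | 10 years | 10 years |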

Specification-wise, the 2TB 850 Pro is almost identical to its 1TB sibling. The performance on paper is an exact match, although the 2TB model draws a bit more power in DevSleep mode, which is likely due to the additional DRAM, even though LPDDR3 is more power efficient than LPDDR2. Initially the 850 Pro was rated at 150TB of write endurance across all capacities, but Samsung changed that sometime after the launch, and the 512GB, 1TB and 2TB versions now carry a 300TB endurance rating along with a 10-year warranty.

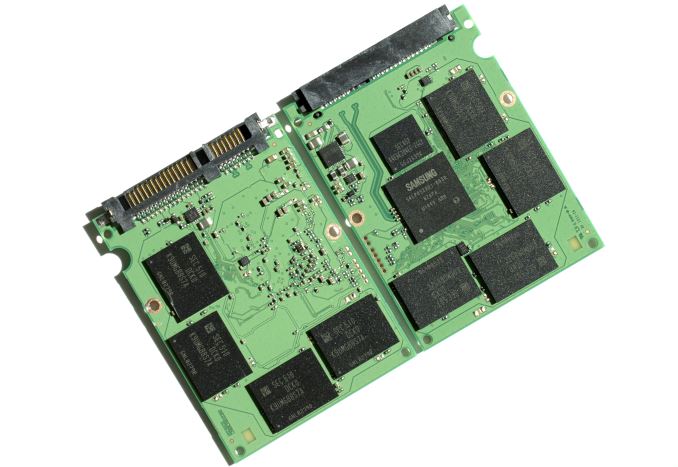

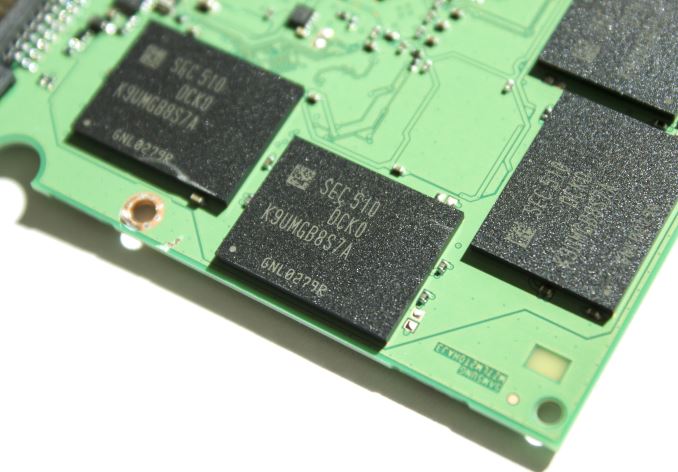

There's another hardware change in addition to the new MHX controller as the NAND part number suggests that the 2TB 850 Pro uses 128Gbit dies instead of the 86Gbit dies found in the other capacities. The third character, which is a U in this case, refers to the type of NAND (SLC, MLC or TLC) and the number of dies, and Samsung's NAND part number decoder tells us that U stands for a 16-die MLC package. With eight NAND packages on the PCB, each die must be 128Gbit (i.e. 16GiB) to achieve raw NAND capacity of 2,048GiB. 2,048GB out of that is user accessible space, resulting in standard ~7% over-provisioning due to GiB (1024^3 bytes) to GB (1000^3 bytes) translation.
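The die-capacity inference in this paragraph is plain arithmetic, and a minimal Python sketch makes it explicit. The function names are illustrative, and 86Gbit is used as the nominal capacity of the older die.

```python
def die_capacity_gbit(raw_gib, packages, dies_per_package):
    """Per-die capacity (binary Gbit) implied by the raw NAND total."""
    total_dies = packages * dies_per_package
    return raw_gib / total_dies * 8  # GiB per die -> Gbit per die


def raw_capacity_gib(packages, dies_per_package, die_gbit):
    """Raw NAND capacity (GiB) for a given package layout and die size."""
    return packages * dies_per_package * die_gbit / 8


# Eight 16-die packages holding 2,048GiB of raw NAND, as in the 2TB 850 Pro.
print(die_capacity_gbit(2048, packages=8, dies_per_package=16))

# The same layout populated with nominal 86Gbit dies falls well short of 2TB.
print(raw_capacity_gib(8, 16, 86))
```

With the package count and die count fixed by the part number, only a 128Gbit die adds up to 2,048GiB, which is why the older 86Gbit die can be ruled out.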

According to Samsung, this is still a 32-layer die, which would imply that Samsung has simply developed a higher capacity die using the same process. It's logical that Samsung decided to go with a lower capacity die at first because it's less complex and yields better performance at smaller capacities. In turn, a larger die results in additional cost savings due to peripheral circuitry scaling, so despite still being a 32-layer part the 128Gbit die should be more economical to manufacture than its 86Gbit counterpart.

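Samsung SSD 850 EVO Specifications

| Capacity | 120GB | 250GB | 500GB | 1TB | 2TB |
| --- | --- | --- | --- | --- | --- |
| Controller | MGX | MGX | MGX | MEX | MHX |
| NAND | Samsung 32-layer 128Gbit TLC V-NAND | Samsung 32-layer 128Gbit TLC V-NAND | Samsung 32-layer 128Gbit TLC V-NAND | Samsung 32-layer 128Gbit TLC V-NAND | Samsung 32-layer 128Gbit TLC V-NAND |
| DRAM | 256MB | 512MB | 512MB | 1GB | 2GB |
| Sequential Read | 540MB/s | 540MB/s | 540MB/s | 540MB/s | 540MB/s |
| Sequential Write | 520MB/s | 520MB/s | 520MB/s | 520MB/s | 520MB/s |
| 4KB Random Read | 94K IOPS | 97K IOPS | 98K IOPS | 98K IOPS | 98K IOPS |
| 4KB Random Write | 88K IOPS | 88K IOPS | 90K IOPS | 90K IOPS | 90K IOPS |
| DevSleep Power | 2mW | 2mW | 2mW | 4mW | 5mW |
| Slumber Power | Max 50mW | Max 50mW | Max 50mW | Max 50mW | Max 60mW |
| Active Power (Read/Write) | Max 3.7W / 4.4W | Max 3.7W / 4.4W | Max 3.7W / 4.4W | Max 3.7W / 4.4W | Max 3.7W / 4.7W |
| Encryption | AES-256, TCG Opal 2.0, IEEE-1667 (eDrive) | AES-256, TCG Opal 2.0, IEEE-1667 (eDrive) | AES-256, TCG Opal 2.0, IEEE-1667 (eDrive) | AES-256, TCG Opal 2.0, IEEE-1667 (eDrive) | AES-256, TCG Opal 2.0, IEEE-1667 (eDrive) |
| Endurance | 75TB | 75TB | 150TB | 150TB | 150TB |
| Warranty | Five years | Five years | Five years | Five years | Five years |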

As with the Pro, the 2TB EVO's performance characteristics are similar to those of the 1TB model. Only power consumption is higher, but that was expected given the increase in NAND and DRAM capacities.

As the 32-layer TLC V-NAND die was 128Gbit to begin with, Samsung didn't need to develop a new higher-capacity die to bring the capacity to 2TB. The EVO also uses eight 16-die packages, the only difference from the Pro being TLC NAND, which is more economical to manufacture since storing three bits in one cell yields higher density than storing two. Out of the 2,048GiB of raw NAND, 2,000GB is user-accessible, which is 48GB less than in the 2TB 850 Pro. That is because the TurboWrite SLC cache eats a portion of the NAND, and TLC tends to require a bit more over-provisioning to keep write amplification low for endurance reasons.
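To put numbers on the over-provisioning difference, here is a small sketch that converts the raw GiB figure to bytes and compares it with the user-accessible GB figure for both 2TB drives. It assumes over-provisioning is expressed as a share of raw NAND; some vendors instead quote it relative to user capacity, which gives a slightly higher percentage.

```python
GIB = 1024**3  # bytes in a gibibyte
GB = 1000**3   # bytes in a (decimal) gigabyte


def over_provisioning(raw_gib, user_gb):
    """Spare area as a fraction of raw NAND (assumed definition)."""
    raw_bytes = raw_gib * GIB
    user_bytes = user_gb * GB
    return (raw_bytes - user_bytes) / raw_bytes


pro_2tb = over_provisioning(2048, 2048)  # 2TB 850 Pro
evo_2tb = over_provisioning(2048, 2000)  # 2TB 850 EVO

print(f"850 Pro 2TB: {pro_2tb:.1%}")
print(f"850 EVO 2TB: {evo_2tb:.1%}")
```

The Pro lands at roughly 7% purely from the GiB-to-GB translation, while the EVO's 48GB of additional reserved space pushes it to roughly 9%.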

| AnandTech 2015 SSD Test System | |
| --- | --- |
| CPU | Intel Core i7-4770K running at 3.5GHz (Turbo & EIST enabled, C-states disabled) |
| Motherboard | ASUS Z97 Deluxe (BIOS 2205) |
| Chipset | Intel Z97 |
| Chipset Drivers | Intel 10.0.24 + Intel RST 13.2.4.1000 |
| Memory | Corsair Vengeance DDR3-1866 2x8GB (9-10-9-27 2T) |
| Graphics | Intel HD Graphics 4600 |
| Graphics Drivers | 15.33.8.64.3345 |
| Desktop Resolution | 1920 x 1080 |
| OS | Windows 8.1 x64 |

- Thanks to Intel for the Core i7-4770K CPU

- Thanks to ASUS for the Z97 Deluxe motherboard

- Thanks to Corsair for the Vengeance 16GB DDR3-1866 DRAM kit, RM750 power supply, Hydro H60 CPU cooler and Carbide 330R case

66 Comments

Kristian Vättö - Thursday, July 23, 2015

The move to Windows 8 broke compatibility with that method since HD Tach no longer works. I do have another idea, though, but I just haven't had the time to try it out and implement it to our test suite.

editorsorgtfo - Thursday, July 23, 2015

Kristian, what would you consider the best SATA 6Gbps drive(s) with power-loss protection?

editorsorgtfo - Thursday, July 23, 2015

Judging from the 850 Pro and EVO PCBs, they don't even guard their NAND mappings. Or my eyesight is giving.

Meegul - Thursday, July 23, 2015

It's mentioned at the end of 'The Destroyer' test that the 2TB 850 Pro uses less power than the 512GB variant, with one reason being cited as possibly a more efficient process node for the controller. Wouldn't it be more likely that the move to LPDDR3 in the 2TB variant was the cause for the increase in efficiency?

MikhailT - Thursday, July 23, 2015

He said that on the final words page as well: "I'm very glad to see improved power efficiency in the 2TB models. A part of that is explained by the move from LPDDR2 to LPDDR3, but it's also possible that the MHX is manufactured using a more power efficient process node."

MrCommunistGen - Thursday, July 23, 2015

Kristian, I like that you give a little bit of love to the Mixed Sequential Read/Write graphs.

Honestly this is the 1 area that I still find myself tearing out my hair waiting for on my Mid 2014 rMBP 1TB. I do a lot of work with large VMs in VMware and from time to time I have to copy one.

Peak read and Peak write speeds on this SSD are quite good, often approaching 1GB/s, but mixed sequential read/write is capped to an aggregate total of 1GB/s (yes I realize that this is bus limited on a x2 PCI-E 2.0 SSD).

This is one area that I really look forward to seeing improvements in with x4 PCI-E 3.0 SSDs.

jas.brooks - Sunday, March 13, 2016

Hey MrCommunistGen,

Just wondered if you could shed some light onto the Mixed Sequential Read/Write significance for you. I'm not super-techy personally, so it doesn't mean very much to me in those words alone.

BUT, I have been experiencing some very frustrating behaviour on my rMBP late-2013 (with 1TB built-in SSD storage). When I'm using Premiere Pro CC2015 with video projects over a certain size (I'm a pro cameraman and editor, so am using heavy XAVC-I video from a Sony FS-7), then I get crazy lags waiting for a sequence to open, or specifically when making copy&paste commands. I have noticed that my (16GB) RAM is often near full in these situations, and there is a swap file in action too (between 1-16GB).

Any thoughts on my problem? More specifically, any possible ideas/suggestions of a config adjustment that could improve my experience? Or, is it simply the case that I'm pushing my machine too hard, and need to get a 32GB-RAM-capable laptop ASAP?

Thanks!

jason

jcompagner - Thursday, July 23, 2015

So they now have a way larger package/die for the Pro version?

With the 1TB EVO and 1TB Pro, I got the picture that both are exactly the same hardware, only the EVO stores 3 bits in one cell and the Pro 2 (128Gbit / 3 * 2 = 86Gbit for the Pro).

But now they both have 128Gbit. For the EVO, I guess this just means more of the same stuff. But for the Pro this has to mean that it has way more cells, 50% more. So the die of the 2TB has to be 50% bigger than one of the 1TB, right?

Kristian Vättö - Saturday, July 25, 2015

The 86Gbit MLC and 128Gbit TLC dies are not identical: the TLC die is actually smaller (68.9mm^2 vs 87.4mm^2) due to it being a single-plane design. A lot more than the number of memory transistors goes into the die size, so estimating the die size based on the 50% increase in memory capacity alone isn't really possible.

karakarga - Friday, July 24, 2015

Why are the read and write speeds not increasing? The 540-550MB/s read and 520MB/s write speeds could reach 600MB/s; there is still headroom!