Tick Tock On The Rocks: Intel Delays 10nm, Adds 3rd Gen 14nm Core Product "Kaby Lake"

by Brett Howse & Ryan Smith on July 16, 2015 10:15 AM EST

For almost as long as this website has been existence, there has been ample speculation and concern over the future of Moore’s Law. The observation, penned by Intel’s co-founded Gordon Moore, has to date correctly predicted the driving force behind the rapid growth of the electronics industry, with massive increases in transistor counts enabling faster and faster processors over the generations.

The heart of Moore’s Law, that transistor counts will continue to increase, is for the foreseeable future still alive and well, with plans for transistors reaching out to 7nm and beyond. However in the interim there is greater concern over whether the pace of Moore’s Law is sustainable and whether fabs can continue to develop smaller processes every two years as they have for so many years in the past.

The challenge facing semiconductor fabs is that the complexity of the task – consistently etching into silicon at smaller and smaller scales – increases with every new node, and trivial physics issues at larger nodes have become serious issues at smaller nodes. This in turn continues to drive up the costs of developing the next generation of semiconductor fabs, and even that is predicated on the physics issues being resolved in a timely manner. No other industry is tasked with breaking the laws of physics every two years, and over the years the semiconductor industry has been increasingly whittled down as firms have been pushed out by the technical and financial hurdles in keeping up with the traditional front-runners.

The biggest front runner in turn is of course Intel, who has for many years now been at the forefront of semiconductor development, and by-and-large the bellwether for the semiconductor fabrication industry as a whole. So when Intel speaks up on the challenges they face, others listen, and this was definitely the case for yesterday’s Intel earnings announcement.

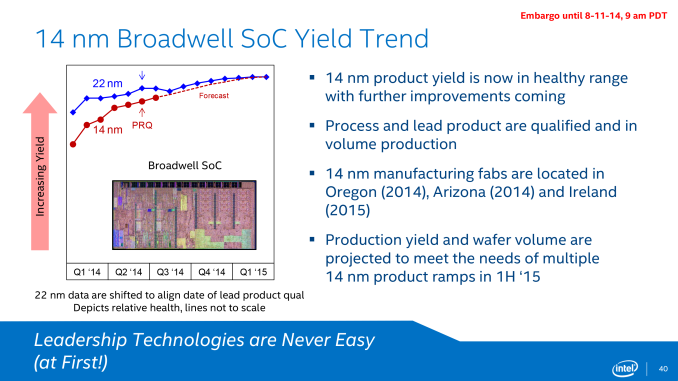

As part of their call, Intel has announced that they have pushed back their schedule for the deployment of their 10nm process, and in turn it has affected their product development roadmap. Acknowledging that the traditional two year cadence has become (at best) a two and a half year cadence for Intel, the company’s 10nm process, originally scheduled to go into volume production in late 2016, is now scheduled to reach volume production in the second half of 2017, a delay of near a year. This delay means that Intel’s current 14nm node will in effect become a three year node for the company, with 10nm not entering volume production until almost three years after 14nm hit the same point in 2014.

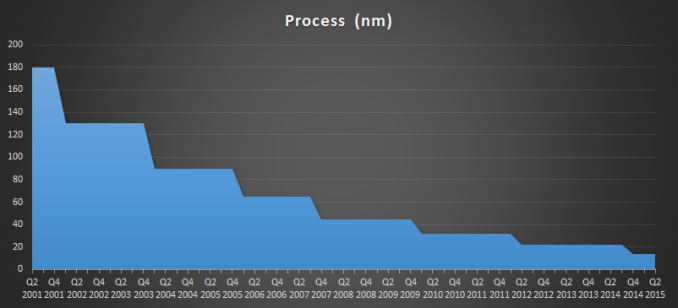

Intel’s initial struggles with 14nm have been well publicized, with the company having launched their 14nm process later than they would have liked. With their 22nm process having launched in Q2 of 2012, 14nm didn’t reach the same point until Q4 of 2014, and by traditional desktop standards the delay has been even longer. Ultimately Intel was hoping that the delays they experienced with 14nm would be an aberration, but that has not been the case.

Intel’s latest delay ends up being part of a larger trend in semiconductor manufacturing, which has seen the most recent nodes stick around much longer than before. Intel’s leading node for desktop processors in particular has been 22nm for close to three years. Meanwhile competitors TSMC and Samsung have made much greater use of their 28nm nodes than expected, as their planar 20nm nodes have seen relatively little usage due to leakage, causing some customers to wait for their respective 16nm/14nm FinFET nodes, which offer better electrical characteristics at these small geometries than planar transistors. Observationally then there’s nothing new from Intel’s announcement that we haven’t already seen, but it confirms the expected and all too unfortunate news that even the industry’s current bellwether isn’t going to be able to keep up with a traditional two year cadence.

Intel Historical Development Cadence

Meanwhile the fact that 14nm is going to be around for another year at Intel presents its own challenges for Intel’s product groups as well as their fabrication groups, which brings us to the second part of Intel’s announcement. Intel’s traditional development model for processors over the last decade has been the company’s famous tick-tock model – releasing processors built on an existing architecture and a new manufacturing node (tick), and then following that up with a new architecture built on the then-mature manufacturing node (tock), and repeating the cycle all over again – which in turn is built on the two year development cadence. Intel wants to have new products every year, and alternating architectures and manufacturing nodes was the sanest, safest way to achieve that. However with the delay of 10nm, it means that Intel now has an additional year to fill in their product lineup, and that means tick-tock is on the rocks.

Previously rumored and now confirmed by Intel, the company will be developing a 3rd generation 14nm Core product, to fit in between the company’s forthcoming 14nm Skylake (2015) and 10nm Cannonlake (2017) processor families.

| Intel Core Family Roadmap | ||||

| Previous Roadmap | New Roadmap | |||

| 2014 | Broadwell | Broadwell | ||

| 2015 | Skylake | Skylake | ||

| 2016 | Cannonlake | Kaby Lake (New) | ||

| 2017 | (10nm New Architecture) | Cannonlake | ||

| 2018 | N/A | (10nm New Architecture) | ||

The new processor family is being dubbed Kaby Lake. It will be based on the preceding Skylake micro-architecture but with key performance enhancements to differentiate it from Skylake and to offer a further generation of performance improvements in light of the delay of Intel’s 10nm process. Intel hasn’t gone into detail at this time over just what those enhancements will be for Kaby Lake, though we are curious over just how far in advance Intel has been planning for the new family. Intel has several options here, including back-porting some of their planned Cannonlake enhancements, or looking at smaller-scale alternatives, depending on just how long Kaby Lake has been under development.

Kaby Lake in turn comes from Intel’s desire to have yearly product updates, but also to meet customer demands for predictable product updates. The PC industry as a whole is still strongly tethered to yearly hardware cycles, which puts OEMs in a tight spot if they don’t have anything new to sell. Intel has already partially gone down this route once with the Haswell Refresh processors for 2014, which served to cover the 14nm delay, and Kaby Lake in turn is a more thorough take on the process.

Finally, looking at a longer term perspective, while Intel won’t be able to maintain their two year development cadence for 10nm, the company hasn’t given up on it entirely. The company is still hoping for a two year cadence for the shift from 10nm to 7nm, which ideally would see 7nm hit volume production in 2019. Given the longer timeframes Intel has required for both 14nm and 10nm, a two year cadence for 7nm is definitely questionable at this time, though not impossible.

For the moment at least this means tick-tock isn’t quite dead at Intel – it’s merely on the rocks. What happens from here may more than anything else depend on the state of the long in development Extreme Ultra-Violet (EUV) technology, which Intel isn’t implementing for 10nm, but if it’s ready for 7nm would speed up the development process. Ultimately with any luck we should hear about the final fate of tick-tock as early as the end of 2016, when Intel has a better idea of when their 7nm process will be ready.

Source: Intel Earnings Call via Seeking Alpha (Transcript)

138 Comments

View All Comments

boeush - Sunday, July 19, 2015 - link

'Wasting' is a value judgement based on usage. Having extra cores in reserve for when you actually need them - that is, to run applications actually capable of scaling over them, or to run many heavy single-core power viruses simultaneously - is a nice capability to have in the back pocket. Finding yourself in occasional need yet lacking such resources can be an exercise in head-on-brick frustration.That said, in my view the real abject waste presently evident is the iGPU taking up more than half of typical die area and TDP while almost guaranteed to remain unused in desktop builds (that include discrete GPUs). Moreover, all the circuitry and complexity for race-to-sleep, multiple clock domains and power backplanes, power monitoring and management, etc. - are totally useless on *desktops*. When typical users regularly outfit their machines with 200+ W GPUs (and more than one), why are their CPUs limited to sub-150 or even 100 W? Why not a 300 W CPU that is 2x+ faster?

Lastly, with Intel we've witnessed hardly any change in absolute performance over a shift from 32 nm to 14. By Moore's Law alone current CPUs should be at least 4x (that's 300%) faster than Sandy Bridge. By all means, beef them up (while getting rid of unnecessary power management overhead) - but even then, by virtue of process shrink, you'd still have plenty of space left over to add more cores, more cache, and wider fabric.

All that in total is my argument for bringing relevance (and demand) back to the desktop market. IOW make the PC into a personal supercomputer rather than a glorified laptop in a large and heavy box, with a giant GPU attached

jeffkibuule - Thursday, July 16, 2015 - link

You need to keep in mind that Intel's chips with hyper threading already effectively allow 8 simultaneous hardware threads to run in parallel with each other without the OS being aware of it. In fact, I'm pretty sure they are exposed to the OS as 8 cores. They each have their own set of registers, stack pointer, frame pointer, etc.. and the things that aren't duplicated aren't used often enough to warrant being on each core (floating point math is not what a CPU spends most of its time doing).boeush - Friday, July 17, 2015 - link

Yeah, and the fact that the caches are constantly being trashed by the co-located, competing 'threads' doesn't impact performance one bit. And the ALU/FPU and load/store contention are totally inconsequential. I mean there's no reason at all why some applications actually drop in performance when hyperthreading is enabled.Look, I'm all for optimizing overall resource utilization, but it's not a substitute for raw performance through more actual hardware.

My answer to the cannibalization of the desktop market by mobile is simple enough: bring back the differentiation! Desktops have much larger space/heat/power budgets. Correspondingly, a desktop CPU that consumes 30 times the power of a mobile one, should be able to offer performance that is at least 10 times greater.

boeush - Friday, July 17, 2015 - link

Also, regarding floating point math... There might be a reason not enough applications (and especially games) are FP-heavy: it's because general CPU performance on FP code sucks. So they stay away from it. It's chicken vs. egg - if CPUs with lots of cores and exceptional FP performance became the norm, suddenly you might notice a whole bunch of software (especially games) eating it up and begging for seconds. Any sort of physics-driven simulation; anything involving neural networks; anything involving ray-tracing or 3D geometry - all would benefit immensely from any boost to FP resources. Granted, office workers, movie pirates, and web surfers couldn't care less -- but they aren't the whole world, and they aren't the halo product audience.psyq321 - Friday, July 17, 2015 - link

Actually, ever since Pentium II or so, floating point performance on Intel CPUs is not handicapped compared to integer performance.In fact, Sandy Bridge and Ivy Bridge SKUs could do floating point calculations faster due to the fact that AVX (first generation) only had floating point ops.

boeush - Friday, July 17, 2015 - link

Vector extensions are cool and everything, but they are not universally applicable and they are hard to optimize for. I'm not saying let's get rid of AVX, SSE, etc. - but in addition to those, why not boost the regular FPU to 128 bits, clock it higher or unroll/parallelize it further to make it work faster, and have a pair of them per core for better hyperthreading? Yeah, it would all take serious chip real estate and power budget increases - but having gotten rid of the monkey on the back of the CPU that is the iGPU, and considering the advantages of 14nm process over previous generations, it should all be doable with proper effort and a change in design priorities (pushing performance over power efficiency - which makes perfect sense for desktops but not mobile or data center use cases.)Oxford Guy - Thursday, July 16, 2015 - link

You mean AMD FX? That was what AMD did back in 2012 or whatever. Big cache, lots of threads, no GPU, more PCI-e lanes.boeush - Thursday, July 16, 2015 - link

Exactly so, or otherwise what Intel already does with their Xeon line. Instead of reserving real performance for the enterprise market only while trying to sell mobile architectures to desktop customers, why not have a distinct desktop architecture for desktops, a separate one (with the iGPU and the power optimizations) for mobile, and a third one for the enterprise (even if it's only the desktop chips with enterprise-specific features enabled)? Surely that would help revitalize the desktop market interest/demand, don't you think?boeush - Thursday, July 16, 2015 - link

Sorry for multi-post; wish I could edit the previous one to add this - we all celebrated when Intel's mobile performance narrowed the gap with desktop. But perhaps we should have been mourning instead. It signalled the end of disparate mobile/desktop architectures - the slbeginning of the era of unified design. So now desktop chips are held hostage to mobile constraints. I have no doubt whatsoever that a modern architecture on a modern process designed singly with desktop in mind would have far superior performance (at much higher power - but who cares?) - than the current mongrel generations of CPU designs.Gigaplex - Sunday, July 19, 2015 - link

You just described the current enthusiast chips on the LGA 2011 socket (or equivalent for the given generation). The mass market is more interested in the iGPU chips. If nobody wanted them, nobody would be buying them.