The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

The State of Mantle, The Drivers, & The Test

Before diving into our long-awaited benchmark results, I wanted to quickly touch upon the state of Mantle now that AMD has given us a bit more insight into what’s going on.

With the Vulkan project having inherited and extended Mantle, Mantle’s external development is at an end for AMD. AMD has already told us in the past that they are essentially taking it back inside, and will be using it as a platform for testing future API developments. Externally then AMD has now thrown all of their weight behind Vulkan and DirectX 12, telling developers that future games should use those APIs and not Mantle.

In the meantime there is the question of what happens to existing Mantle games. So far there are about half a dozen games that support the API, and for these games Mantle is the only low-level API available to them. Should Mantle disappear, these games would no longer be able to render at such a low level.

The situation then is that in discussing the performance results of the R9 Fury X with Mantle, AMD has confirmed that while they are not outright dropping Mantle support, they have ceased all further Mantle optimization. Of particular note, the Mantle driver has not been optimized at all for GCN 1.2, which includes not just R9 Fury X, but R9 285, R9 380, and the Carrizo APU as well. Mantle titles will probably still work on these products – and for the record we can’t get Civilization: Beyond Earth to play nicely with the R9 285 via Mantle – but performance is another matter. Mantle is essentially deprecated at this point, and while AMD isn’t going out of their way to break backwards compatibility they aren’t going to put resources into helping it either. The experiment that is Mantle has come to an end.

This will in turn impact our testing somewhat. For our 2015 benchmark suite we began using low-level APIs when available, which in the current game suite includes Battlefield 4, Dragon Age: Inquisition, and Civilization: Beyond Earth. We were not counting on AMD ceasing Mantle optimization quite so soon. As a result we’re in the uncomfortable position of having to backtrack somewhat on our policies so that we do not base our recommendations on settings that no longer make sense.

Starting with this review we’re going to use low-level APIs when available, and when using them makes performance sense. That means we’re not going to use Mantle in the cases where performance has clearly regressed due to a lack of optimizations, but will use it for games where it still works as expected (which essentially comes down to Civ: BE). Ultimately everything will move to Vulkan and DirectX 12, but in the meantime we will need to be more selective about where we use Mantle.
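To make the revised policy concrete, here is a minimal sketch of the selection rule in Python. It is only an illustration and not our actual test harness: the `ApiResult` type, the `choose_api` function, the 3% tolerance, and the frame rates in the usage lines are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ApiResult:
    """Average frame rate measured for one game under one rendering API."""
    api: str
    avg_fps: float


def choose_api(default: ApiResult,
               low_level: Optional[ApiResult],
               tolerance: float = 0.03) -> str:
    """Pick the API to benchmark with.

    Use the low-level API when one is available and its performance has not
    clearly regressed against the default API. The tolerance (an assumed
    value) absorbs ordinary run-to-run variance.
    """
    if low_level is None:
        # No low-level path exists for this game.
        return default.api
    if low_level.avg_fps >= default.avg_fps * (1.0 - tolerance):
        # Low-level path performs as expected, so keep using it.
        return low_level.api
    # Low-level path has clearly regressed; fall back to the default API.
    return default.api


# Hypothetical numbers, for illustration only.
regressed = choose_api(ApiResult("Direct3D 11", 60.0), ApiResult("Mantle", 45.0))
healthy = choose_api(ApiResult("Direct3D 11", 60.0), ApiResult("Mantle", 62.0))
```

With these made-up inputs, the first call falls back to Direct3D 11 and the second keeps Mantle.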

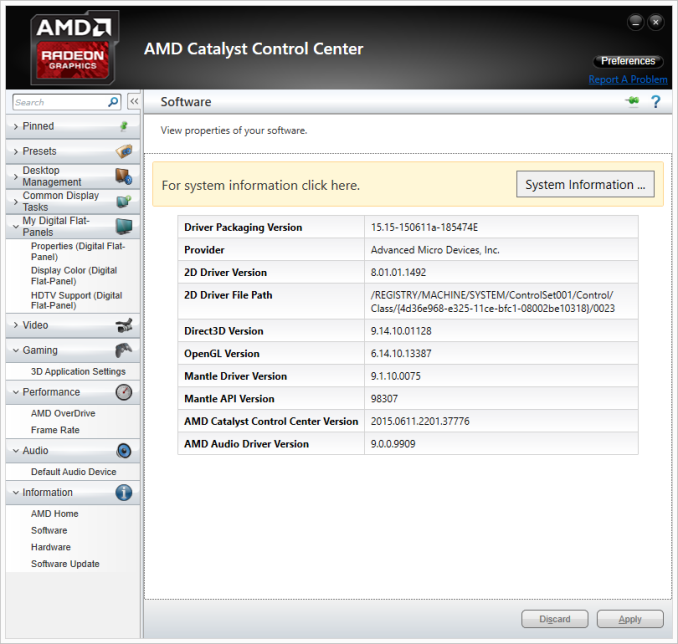

The Drivers

For the launch of the 300/Fury series, AMD has taken an unexpected direction with their drivers. The launch driver for these parts is the Catalyst 15.15 driver, AMD’s next major driver branch, which includes everything from Fiji support to WDDM 2.0 support. However, in launching these parts AMD has bifurcated their drivers; the new cards get Catalyst 15.15, the old cards get Catalyst 15.6 (driver version 14.502).

Eventually AMD will bring these cards back together in a later driver release, after they have done more extensive QA against their older cards. In the meantime it’s possible to use a modified version of Catalyst 15.15 to enable support for some of these older cards, but unsigned drivers and Windows do not get along well, and it introduces other potential issues. Considering that these new drivers do include performance improvements for existing cards, we are not especially happy with the current situation. Existing Radeon owners are essentially having performance held back from them, if only temporarily. Small tomes could be written on AMD’s driver situation – they clearly don’t have the resources to do everything they’d like to at once – but this is perhaps the most difficult situation they’ve put Radeon owners in yet.

The Test

Finally, let’s talk testing. For our benchmarking we have used AMD’s Catalyst 15.15 beta drivers for the R9 Fury X, and their Catalyst 15.5 beta drivers for all other AMD cards. Meanwhile for NVIDIA cards we are on release 352.90.

From a build standpoint we’d like to remind everyone that installing a GPU radiator in our closed-case test bed does require reconfiguring the test bed slightly; a 120mm rear exhaust fan must be removed to make room for the GPU radiator.

| Component | Configuration |
| --- | --- |
| CPU: | Intel Core i7-4960X @ 4.2GHz |
| Motherboard: | ASRock Fatal1ty X79 Professional |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Samsung SSD 840 EVO (750GB) |
| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon R9 Fury X, AMD Radeon R9 295X2, AMD Radeon R9 290X, AMD Radeon R9 285, AMD Radeon HD 7970, NVIDIA GeForce GTX Titan X, NVIDIA GeForce GTX 980 Ti, NVIDIA GeForce GTX 980, NVIDIA GeForce GTX 780 Ti, NVIDIA GeForce GTX 680, NVIDIA GeForce GTX 580 |
| Video Drivers: | NVIDIA Release 352.90 Beta, AMD Catalyst Cat 15.5 Beta (All Other AMD Cards), AMD Catalyst Cat 15.15 Beta (R9 Fury X) |
| OS: | Windows 8.1 Pro |

458 Comments


bennyg - Saturday, July 4, 2015

Marketing performance. Exactly.

Except efficiency was not good enough across the generations of 28nm GCN in an era where efficiency + thermal/power limits constrain performance, and look what Nvidia did over a similar era from Fermi (which was at market when GCN 1.0 was released) to Kepler to Maxwell. Plus efficiency is kind of the ultimate marketing buzzword in all areas of tech, and not having any ability to mention it (plus having generally inferior products) hamstrung their marketing all along.

xenol - Monday, July 6, 2015

Efficiency is important because of three things:

1. If your TDP is through the roof, you'll have issues with your cooling setup. Any time you introduce a bigger cooling setup because your cards run that hot, you're going to be mocked for it and people are going to be wary of it. With 22nm or 20nm nowhere in sight for GPUs, efficiency had to be a priority, otherwise you're going to ship cards that take up three slots or ship with water coolers.

2. You also can't just play to the desktop market. Laptops are still the preferred computing platform and even if people are going for a desktop, AIOs are looking much more appealing than a monitor/tower combo. So if you want to have any shot in either market, you have to build an efficient chip. And you have to convince people they "need" this chip, because Intel's iGPUs do what most people want just fine anyway.

3. Businesses and such with "always on" computers would like it if their computers ate less power. Even if you only save a handful of watts per machine, multiply that by thousands of machines and it adds up to an appreciable amount of savings.

xenol - Monday, July 6, 2015

(Also by "computing platform" I mean the platform people choose when they want a computer)

medi03 - Sunday, July 5, 2015

ATI is the reason both Microsoft and Sony use AMD's APUs to power their consoles.

It might be the reason why APUs even exist.

tipoo - Thursday, July 2, 2015

That was then, this is now. Now AMD, together with the acquisition, has a lower market cap than Nvidia.

Murloc - Thursday, July 2, 2015

yeah, no.

ddriver - Thursday, July 2, 2015

ATI wasn't bigger, AMD just paid a preposterous and entirely unrealistic amount of money for it. Soon after the merger, AMD + ATI was worth less than what they paid for the latter, ultimately leading to the loss of its foundries, putting it in an even worse position. Let's face it, AMD was, and historically has always been betrayed, its sole purpose is to create the illusion of competition so that the big boys don't look bad for running unopposed, even if this is what happens in practice.

Just when AMD got lucky with Athlon a mole was sent to make sure AMD stays down.

testbug00 - Sunday, July 5, 2015

foundries didn't go because AMD bought ATI. That might have accelerated it by a few years however.

Foundry issue and cost to AMD dates back to the 1990's and 2000-2001.

5150Joker - Thursday, July 2, 2015

True, AMD was at a much better position in 2006 vs NVIDIA, they just got owned.

3DVagabond - Friday, July 3, 2015

When was Intel the underdog? Because that's who's knocked them down (They aren't out yet.).