The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Today’s Review: Radeon R9 Fury X

Now that we’ve had a chance to cover all of the architectural and design aspirations of the Fiji GPU and its constituting cards, let’s get down to the business end of this article: the product we’ll be reviewing today.

Having launched last week and being reviewed today is AMD’s Radeon R9 Fury X, the company’s new flagship single-GPU video card. Featuring a fully enabled Fiji GPU, the R9 Fury X is Fiji at its finest, and a safe bet to be the grandest video card AMD releases built on TSMC’s 28nm process. Fiji is clocked high, cooled with overkill, and priced to go right up against the only GM200 GeForce card from NVIDIA that anyone cares about: the GeForce GTX 980 Ti.

AMD GPU Specification Comparison

| | AMD Radeon R9 Fury X | AMD Radeon R9 Fury | AMD Radeon R9 290X | AMD Radeon R9 290 |
|---|---|---|---|---|
| Stream Processors | 4096 | (Fewer) | 2816 | 2560 |
| Texture Units | 256 | (How much) | 176 | 160 |
| ROPs | 64 | (Depends) | 64 | 64 |
| Boost Clock | 1050MHz | (On Yields) | 1000MHz | 947MHz |
| Memory Clock | 1Gbps HBM | (Memory Too) | 5Gbps GDDR5 | 5Gbps GDDR5 |
| Memory Bus Width | 4096-bit | 4096-bit | 512-bit | 512-bit |
| VRAM | 4GB | 4GB | 4GB | 4GB |
| FP64 | 1/16 | 1/16 | 1/8 | 1/8 |
| TrueAudio | Y | Y | Y | Y |
| Transistor Count | 8.9B | 8.9B | 6.2B | 6.2B |
| Typical Board Power | 275W | (High) | 250W | 250W |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Architecture | GCN 1.2 | GCN 1.2 | GCN 1.1 | GCN 1.1 |
| GPU | Fiji | Fiji | Hawaii | Hawaii |
| Launch Date | 06/24/15 | 07/14/15 | 10/24/13 | 11/05/13 |
| Launch Price | $649 | $549 | $549 | $399 |

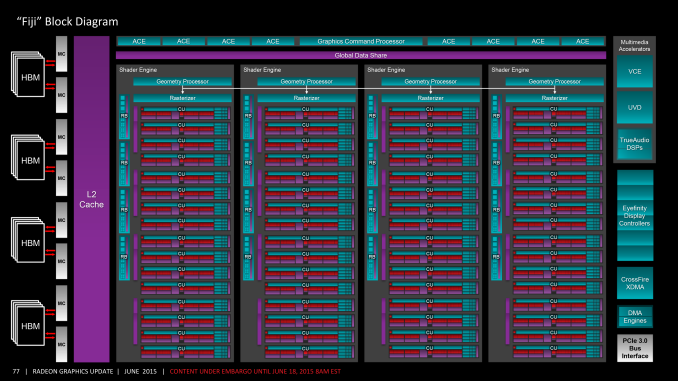

With a maximum boost clockspeed of 1050MHz and with 4096 SPs organized into 64 CUs, R9 Fury X has been designed to deliver more shading/compute performance than ever before. Hawaii by comparison topped out at 2816 SPs (44 CUs), giving R9 Fury X a 1280 SP (~45%) advantage in raw shading hardware. Meanwhile as a result of scaling up the number of CUs, the number of texture units has also scaled up to 256 texture units, a new high-water mark for the number of texture units in a single GPU from any vendor.
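The shader and texture unit counts quoted here follow directly from the CU counts. As a rough sketch, assuming 64 SPs and 4 texture units per CU (ratios implied by the figures above: 4096 / 64 and 256 / 64), with a helper name that is purely illustrative:

```python
from typing import Tuple

# Per-CU resource counts implied by the article's figures.
# Treated here as an assumption that holds for both Fiji and Hawaii.
SPS_PER_CU = 64
TEX_UNITS_PER_CU = 4


def cu_resources(compute_units: int) -> Tuple[int, int]:
    """Return (stream processors, texture units) for a given CU count."""
    return compute_units * SPS_PER_CU, compute_units * TEX_UNITS_PER_CU


fury_x_sps, fury_x_tex = cu_resources(64)   # fully enabled Fiji
hawaii_sps, hawaii_tex = cu_resources(44)   # fully enabled Hawaii (R9 290X)

# Raw shading hardware advantage of R9 Fury X over R9 290X.
sp_advantage = fury_x_sps - hawaii_sps
sp_advantage_pct = 100 * sp_advantage / hawaii_sps

print(fury_x_sps, fury_x_tex, hawaii_sps, hawaii_tex)
print(sp_advantage, round(sp_advantage_pct, 1))
```

The same ratios reproduce the 176 texture units listed for the R9 290X in the specification table.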

Getting away from the CUs for a second, the R9 Fury X features less dramatic changes at its front-end and back-end relative to Hawaii. Like Hawaii, R9 Fury X features 4 geometry engines on the front-end and 64 ROPs on the back-end, so from a theoretical standpoint Fiji does not have any additional resources to work with on those portions of the rendering pipeline. That said, what the raw specifications do not cover are the architectural optimizations we have covered in past pages, which should see Fiji’s ROPs and geometry engines both perform better per unit and per clock than Hawaii’s. Meanwhile the other significant influence here is the extensive memory bandwidth enabled by using High Bandwidth Memory, which combined with a larger 2MB L2 cache should leave the ROPs far better fed on R9 Fury X than they were on AMD’s Hawaii cards.

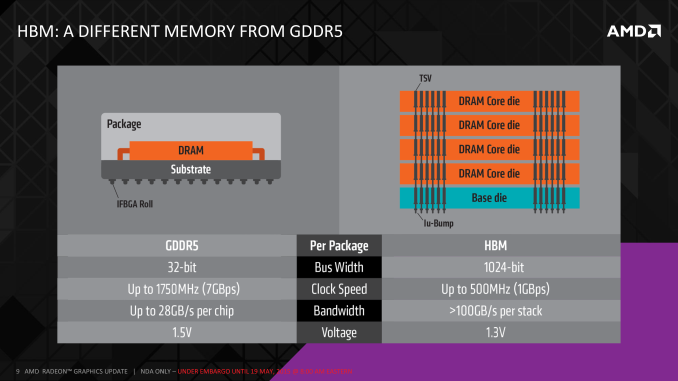

As for High Bandwidth Memory, the next-generation memory technology gives AMD more memory bandwidth than ever before. Featuring an ultra-wide 4096-bit memory bus clocked at 1Gbps (500MHz DDR), the R9 Fury X has a whopping 512GB/sec of memory bandwidth, fed by 4GB of HBM organized in 4 stacks of 1GB each. Relative to R9 290X, this represents a 60% increase in memory bandwidth, a true generational jump that we will not see again in an AMD GPU for some number of years to come.
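The bandwidth figures above can be checked with simple arithmetic: peak bandwidth is bus width times per-pin data rate, divided by 8 to convert bits to bytes. A minimal sketch, where the function name is illustrative and the inputs come from the specification table:

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak memory bandwidth in GB/sec.

    bus_width_bits: width of the memory bus in bits
    data_rate_gbps: per-pin data rate in Gbps
    """
    return bus_width_bits * data_rate_gbps / 8  # 8 bits per byte


fury_x_bw = peak_bandwidth_gb_s(4096, 1.0)   # 4096-bit HBM at 1Gbps
r9_290x_bw = peak_bandwidth_gb_s(512, 5.0)   # 512-bit GDDR5 at 5Gbps

# Relative increase of R9 Fury X over R9 290X, in percent.
increase_pct = 100 * (fury_x_bw / r9_290x_bw - 1)

print(fury_x_bw, r9_290x_bw, round(increase_pct))
```

This is a theoretical peak only; it says nothing about how much of that bandwidth a given workload can actually use.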

Consequently the performance expectations for R9 Fury X will significantly vary with the nature of the rendering workload. For pure compute workloads, between the 45% increase in SPs and 5% clockspeed increase, R9 Fury X will be up to 53% faster than the R9 290X. Meanwhile for ROP-bound scenarios the difference can be anywhere between 5% and 120%, depending on how bandwidth-bound the task is and how effective delta compression is in shrinking the bandwidth requirements. Real world expectations are 30-40% over R9 290X, depending on the game and the resolution, with R9 Fury X extending its gains at higher resolutions.
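The "up to 53%" compute figure is the product of the SP and clockspeed increases. As a hedged sketch, assuming each SP retires one fused multiply-add (2 FLOPs) per clock and that both cards sustain their boost clocks (which real workloads may not allow), theoretical FP32 throughput compares as follows; the function name is illustrative:

```python
FLOPS_PER_SP_PER_CLOCK = 2  # assumption: one fused multiply-add per SP per clock


def peak_fp32_gflops(stream_processors: int, boost_clock_mhz: float) -> float:
    """Theoretical peak single-precision throughput in GFLOPS."""
    return stream_processors * FLOPS_PER_SP_PER_CLOCK * boost_clock_mhz / 1000


fury_x_gflops = peak_fp32_gflops(4096, 1050)   # R9 Fury X
r9_290x_gflops = peak_fp32_gflops(2816, 1000)  # R9 290X

# Theoretical compute advantage of R9 Fury X over R9 290X, in percent.
speedup_pct = 100 * (fury_x_gflops / r9_290x_gflops - 1)

print(round(fury_x_gflops, 1), round(r9_290x_gflops, 1), round(speedup_pct, 1))
```

Because the FLOPs-per-clock factor cancels in the ratio, the percentage depends only on the SP counts and clocks from the specification table.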

For AMD, the Radeon R9 Fury X is a critically important card for a number of reasons. From a technology perspective this is the very first HBM card, and consequently the missteps AMD makes and the lessons they learn here will be important for future generations of cards. At the same time from a competitive perspective, the importance of a flagship cannot be ignored. While flagship card sales are only a tiny part of overall card sales for NVIDIA and AMD, the PC video card industry is (in)famous for its window shopping and the emphasis put on which card holds the performance crown. Most buyers cannot (or will not) buy a card like R9 Fury X, but the sales impact of holding the crown is undeniable, as buyers as a whole will favor whoever can hold the crown. After seeing their consumer discrete market share fall to the lowest level in years, AMD is gunning to get the crown back, along with the halo effect that comes with it and spurs on so many additional sales of lower-end cards.

The competition for the R9 Fury X is of course NVIDIA’s recently released GeForce GTX 980 Ti. Based on a cut-down version of NVIDIA’s GM200 GPU, the GTX 980 Ti is an odd card that comes entirely too close to their official flagship GTX Titan X in performance (~95%), to the point where although the GTX Titan X is the de jure flagship for NVIDIA, it is the GTX 980 Ti that is the de facto flagship for the company. Meanwhile, although only NVIDIA knows for sure, given the timing of the GTX 980 Ti’s launch, there is every reason to believe that the company launched it with the specific intent of countering the R9 Fury X before it even launched, so AMD does not enjoy a first-mover advantage here.

Price-wise the R9 Fury X has launched at $649, the same price as the GTX 980 Ti, so between these two cards this is a straight-up fist fight. There is no price spoiler effect in play here; the question simply comes down to which card is the better card. The only advantage for either party in this case is that NVIDIA is offering a free copy of Batman: Arkham Knight with GTX 980 Ti cards, not that the PC port of the game is an asset at this time given its poor state.

Finally, as far as launch quantities are concerned, AMD has declined to comment on how many R9 Fury X cards were available for launch. What we do know is that the cards sold out on the first day and we have yet to see a massive restocking take place, though at just a week post-launch restocks typically don’t come quite this soon. In any case whether due to demand, supply, or a mix of the two, the initial launch allocations of R9 Fury X did sell out, and for the moment getting another card is easier said than done.

Summer 2015 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| Radeon R9 Fury X | $649 | GeForce GTX 980 Ti |
| | $499 | GeForce GTX 980 |
| Radeon R9 390X | $429 | |
| Radeon R9 290X, Radeon R9 390 | $329 | GeForce GTX 970 |
| Radeon R9 290 | $250 | |
| Radeon R9 380 | $200 | GeForce GTX 960 |
| Radeon R7 370, Radeon R9 270 | $150 | |
| | $130 | GeForce GTX 750 Ti |
| Radeon R7 360 | $110 | |

458 Comments


bennyg - Saturday, July 4, 2015 - link

Marketing performance. Exactly.

Except efficiency was not good enough across the generations of 28nm GCN in an era where efficiency + thermal/power limits constrain performance, and look what Nvidia did over a similar era from Fermi (which was at market when GCN 1.0 was released) to Kepler to Maxwell. Plus efficiency is kind of the ultimate marketing buzzword in all areas of tech and not having any ability to mention it (plus having generally inferior products) hamstrung their marketing all along

xenol - Monday, July 6, 2015 - link

Efficiency is important because of three things:

1. If your TDP is through the roof, you'll have issues with your cooling setup. Any time you introduce a bigger cooling setup because your cards run that hot, you're going to be mocked for it and people are going to be wary of it. With 22nm or 20nm nowhere in sight for GPUs, efficiency had to be a priority, otherwise you're going to ship cards that take up three slots or ship with water coolers.

2. You also can't just play to the desktop market. Laptops are still the preferred computing platform and even if people are going for a desktop, AIOs are looking much more appealing than a monitor/tower combo. So if you want to have any shot in either market, you have to build an efficient chip. And you have to convince people they "need" this chip, because Intel's iGPUs do what most people want just fine anyway.

3. Businesses and such with "always on" computers would like it if their computers ate less power. Even if you can only save a handful of watts, multiply that by thousands and it adds up to an appreciable amount of savings.

xenol - Monday, July 6, 2015 - link

(Also by "computing platform" I mean the platform people choose when they want a computer)

medi03 - Sunday, July 5, 2015 - link

ATI is the reason both Microsoft and Sony use AMD's APUs to power their consoles.

It might be the reason why APUs even exist.

tipoo - Thursday, July 2, 2015 - link

That was then, this is now. Now, AMD together with the acquisition, has a lower market cap than Nvidia.

Murloc - Thursday, July 2, 2015 - link

yeah, no.

ddriver - Thursday, July 2, 2015 - link

ATI wasn't bigger, AMD just paid a preposterous and entirely unrealistic amount of money for it. Soon after the merger, AMD + ATI was worth less than what they paid for the latter, ultimately leading to the loss of its foundries, putting it in an even worse position. Let's face it, AMD was, and historically has always been betrayed, its sole purpose is to create the illusion of competition so that the big boys don't look bad for running unopposed, even if this is what happens in practice.

Just when AMD got lucky with Athlon a mole was sent to make sure AMD stays down.

testbug00 - Sunday, July 5, 2015 - link

foundries didn't go because AMD bought ATI. That might have accelerated it by a few years however.

Foundry issue and cost to AMD dates back to the 1990's and 2000-2001.

5150Joker - Thursday, July 2, 2015 - link

True, AMD was at a much better position in 2006 vs NVIDIA, they just got owned.

3DVagabond - Friday, July 3, 2015 - link

When was Intel the underdog? Because that's who's knocked them down (They aren't out yet.).