The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Compute

Shifting gears, we have our look at compute performance. The R9 Fury X only offers the bare minimum FP64 performance for a GCN product, so we won’t see anything great on that front. On the other hand, with a theoretical FP32 performance of 8.6 TFLOPS, AMD could really clean house on our more regular FP32 workloads.

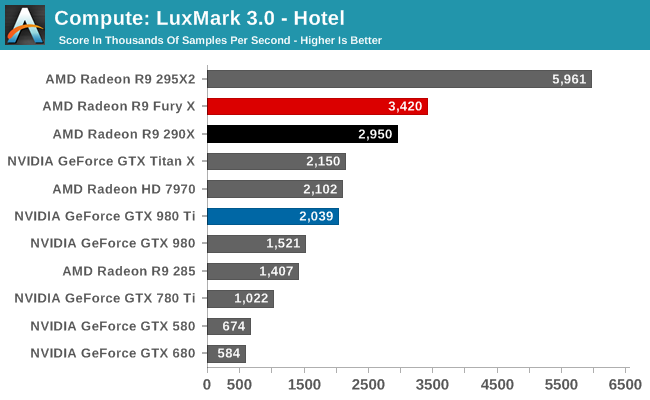

Starting us off for our look at compute is LuxMark 3.0, the latest version of the official benchmark of LuxRender 2.0. LuxRender’s GPU-accelerated rendering mode is an OpenCL-based ray tracer that forms a part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years as it maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.

The results with LuxMark ended up being quite a surprise, and not for a good reason. Compute workloads are shader workloads, and these are the workloads that should best illustrate the performance improvements of the R9 Fury X over the R9 290X. And yet while the R9 Fury X is the fastest single-GPU AMD card, it’s only some 16% faster than the R9 290X, a far cry from the 50%+ that it should be able to attain.

Right now I have no reason to doubt that the R9 Fury X is capable of utilizing all of its shaders. It just can’t do so very well with LuxMark. Given the fact that the R9 Fury X is first and foremost a gaming card, and OpenCL 1.x traction continues to be low, I am wondering whether we’re seeing a lack of OpenCL driver optimizations for Fiji.
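To put the 50%+ expectation in concrete terms, here is a rough back-of-the-envelope sketch. The shader counts and engine clocks are the cards' published specifications rather than figures from this page, and the helper name `peak_fp32_tflops` is purely illustrative. It assumes peak throughput of one fused multiply-add (2 FLOPs) per shader per clock, which real workloads rarely sustain.

```python
# Published specs (assumed, not stated on this page): (shader count, engine clock in MHz)
SPECS = {
    "R9 Fury X": (4096, 1050),
    "R9 290X": (2816, 1000),
}


def peak_fp32_tflops(shaders, clock_mhz):
    """Theoretical peak: one FMA (counted as 2 FLOPs) per shader per clock."""
    return shaders * 2 * clock_mhz * 1e6 / 1e12


fury_x = peak_fp32_tflops(*SPECS["R9 Fury X"])
r9_290x = peak_fp32_tflops(*SPECS["R9 290X"])

# Fractional gain the Fury X has on paper over the 290X.
theoretical_gain = fury_x / r9_290x - 1

# LuxMark gain reported in the text above.
luxmark_gain = 0.16

print(f"R9 Fury X peak FP32: {fury_x:.2f} TFLOPS")
print(f"R9 290X peak FP32:   {r9_290x:.2f} TFLOPS")
print(f"Theoretical gain:    {theoretical_gain:.1%}")
print(f"LuxMark realizes {luxmark_gain / theoretical_gain:.0%} of that gain")
```

On paper the gap works out to a little over 50%, so a 16% LuxMark gain captures only about a third of what the added shaders should provide.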

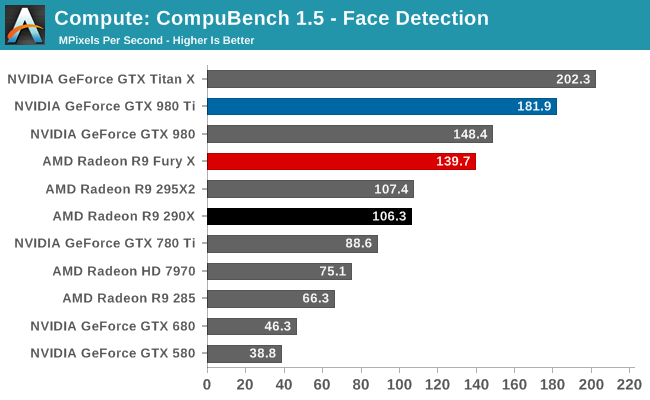

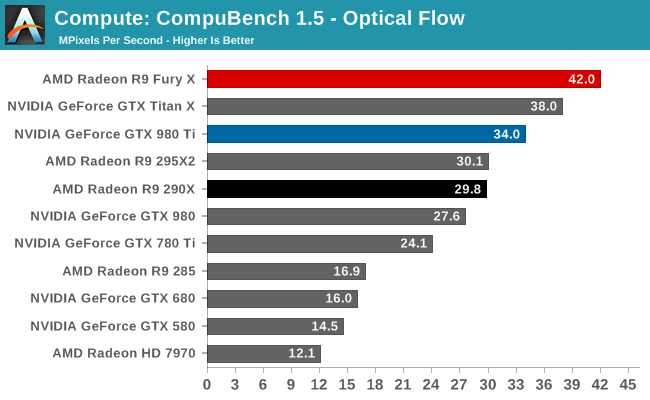

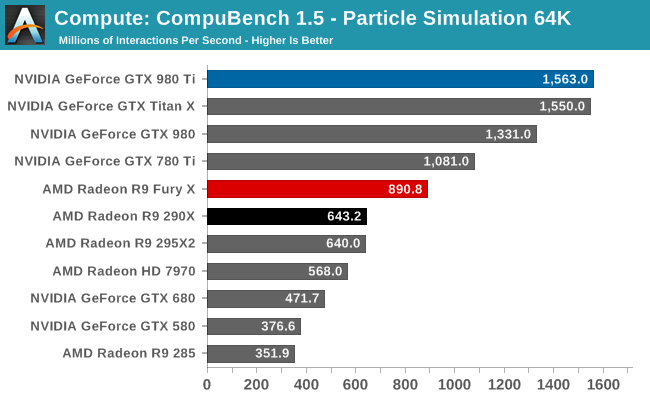

For our second set of compute benchmarks we have CompuBench 1.5, the successor to CLBenchmark. CompuBench offers a wide array of different practical compute workloads, and we’ve decided to focus on face detection, optical flow modeling, and particle simulations.

Quickly taking some of the air out of our driver theory, the R9 Fury X’s performance on CompuBench is quite a bit better, and much closer to what we’d expect given its hardware. The Fury X takes the overall win only at Optical Flow, a somewhat memory-bandwidth heavy test that to no surprise favors AMD’s HBM additions, but otherwise the performance gains across all of these tests are 40-50%. Overall, then, who wins is heavily test-dependent, though this is nothing new.

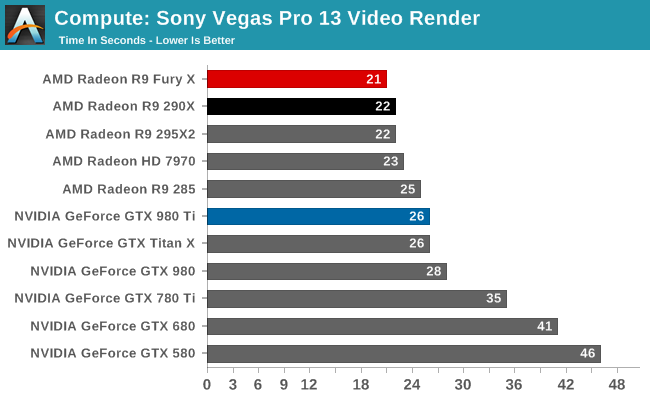

Our 3rd compute benchmark is Sony Vegas Pro 13, an OpenGL and OpenCL video editing and authoring package. Vegas can use GPUs in a few different ways, the primary uses being to accelerate the video effects and compositing process itself, and in the video encoding step. With video encoding being increasingly offloaded to dedicated DSPs these days we’re focusing on the editing and compositing process, rendering to a low CPU overhead format (XDCAM EX). This specific test comes from Sony, and measures how long it takes to render a video.

At this point Vegas is becoming increasingly CPU-bound and will be due for replacement. The Fury X nonetheless shaves off an additional second of rendering time, bringing it down to 21 seconds.

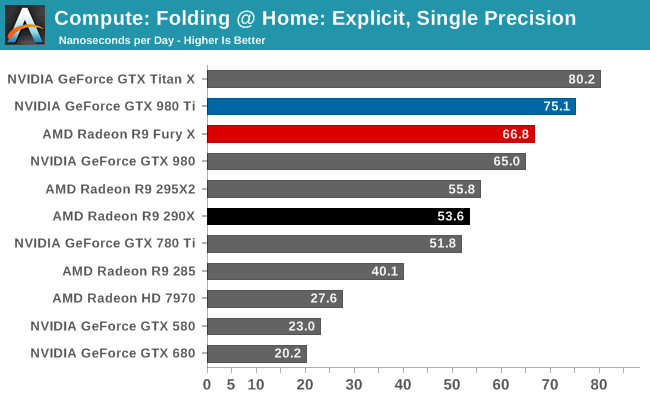

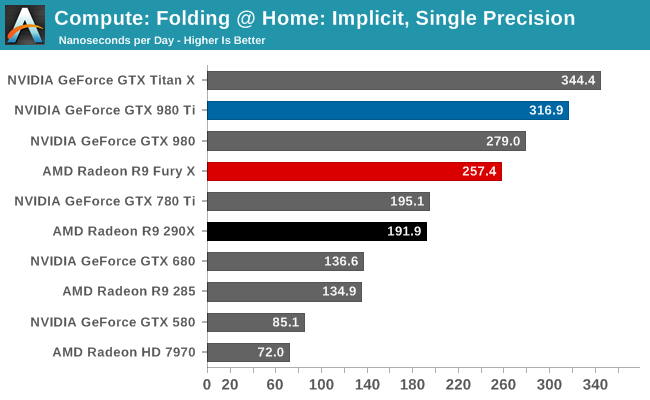

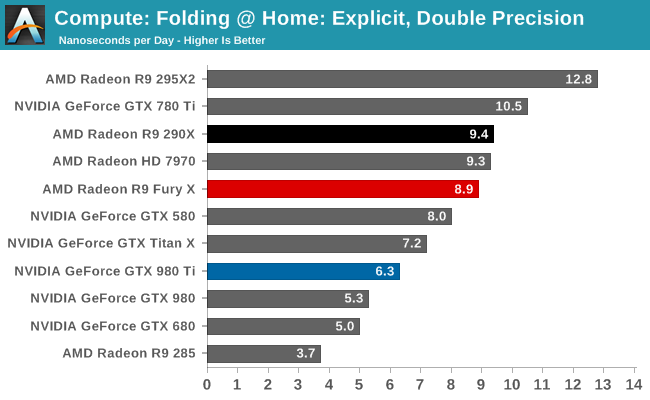

Moving on, our 4th compute benchmark is FAHBench, the official Folding @ Home benchmark. Folding @ Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance. Each precision has two modes, explicit and implicit, the difference being whether water atoms are included in the simulation, which adds quite a bit of work and overhead. This is another OpenCL test, utilizing the OpenCL path for FAHCore 17.

Both of the FP32 tests for FAHBench show smaller than expected performance gains given the fact that the R9 Fury X has such a significant increase in compute resources and memory bandwidth. 25% and 34% respectively are still decent gains, but they’re smaller gains than anything we saw on CompuBench. This does lend a bit more support to our theory about driver optimizations, though FAHBench has not always scaled well with compute resources to begin with.

Meanwhile FP64 performance dives as expected. With a 1/16 rate it’s not nearly as bad as the GTX 900 series, but even the Radeon HD 7970 is beating the R9 Fury X here.
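The FP64 ordering described above follows directly from each card's FP64 rate. The sketch below is only illustrative: shader counts, clocks, and the HD 7970's 1/4 and the GTX 900 series' 1/32 FP64 rates are published specifications rather than figures from this page, the GTX 980 Ti is picked here as a stand-in for the GTX 900 series, and boost clocks are ignored.

```python
# Published specs (assumed, not stated on this page):
# (shader count, base clock in MHz, FP64 rate as a fraction of FP32 rate)
CARDS = {
    "R9 Fury X": (4096, 1050, 1 / 16),
    "Radeon HD 7970": (2048, 925, 1 / 4),
    "GTX 980 Ti": (2816, 1000, 1 / 32),  # stand-in for the GTX 900 series
}


def peak_fp64_gflops(shaders, clock_mhz, fp64_rate):
    """Theoretical FP64 peak: FP32 peak (2 FLOPs per shader per clock) scaled by the FP64 rate."""
    return shaders * 2 * clock_mhz * fp64_rate / 1000


fp64 = {name: peak_fp64_gflops(*spec) for name, spec in CARDS.items()}

# Print the cards from fastest to slowest theoretical FP64 throughput.
for name, gflops in sorted(fp64.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:<15} {gflops:7.1f} GFLOPS FP64")
```

Despite having half the shaders and a lower clock, the HD 7970's 1/4 rate gives it a higher theoretical FP64 peak than the Fury X at 1/16, which matches the ordering in the results.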

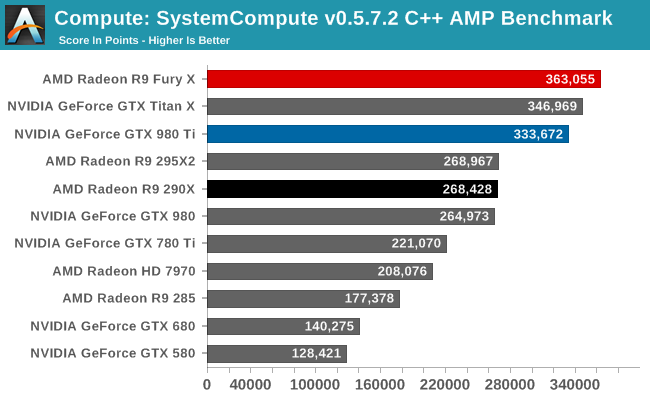

Wrapping things up, our final compute benchmark is an in-house project developed by our very own Dr. Ian Cutress. SystemCompute is our first C++ AMP benchmark, utilizing Microsoft’s simple C++ extensions to allow the easy use of GPU computing in C++ programs. SystemCompute in turn is a collection of benchmarks for several different fundamental compute algorithms, with the final score represented in points. DirectCompute is the compute backend for C++ AMP on Windows, so this forms our other DirectCompute test.

Our C++ AMP benchmark is another case of decent, though not amazing, GPU compute performance gains. The R9 Fury X picks up 35% over the R9 290X. And in fact this is enough to vault it over NVIDIA’s cards to retake the top spot here, though not by a great amount.

458 Comments

chizow - Monday, July 6, 2015 - link

Oh, and also forgot his biggest mistake was vastly overpaying for ATI, leading both companies on this downward spiral of crippling debt and unrealized potential.

chizow - Monday, July 6, 2015 - link

Uh...Bulldozer happened on Ruiz's watch, and he also wasn't able to capitalize on K8's early performance leadership. Beyond that he orchestrated the sale of their fabs to ATIC culminating in the usurious take or pay WSA with GloFo that still cripples them to this day. But of course, it was no surprise why he did this, he traded AMD's fabs for a position as GloFo's CEO which he was forced to resign from in shame due to insider trading allegations. Yep, Ruiz was truly a crook but AMD fanboys love to throw stones at Huang. :D

tipoo - Thursday, July 2, 2015 - link

Nooo please put it back, it was so much better with Anandtech referring to AMD as the taint :P

HOOfan 1 - Thursday, July 2, 2015 - link

At least he didn't spell it "perianal"

Wreckage - Thursday, July 2, 2015 - link

It's silly to paint AMD as the underdog. It was not that long ago that they were able to buy ATI (a company that was bigger than NVIDIA). I remember at the time a lot of people were saying that NVIDIA was doomed and could never stand up to the might of a combined AMD + ATI. AMD is not the underdog, AMD got beat by the underdog.

Drumsticks - Thursday, July 2, 2015 - link

I mean, AMD has a market cap of ~2B, compared to 11B of Nvidia and ~140B of Intel. They also have only ~25% of the dGPU market I believe. While I don't know a lot about stocks and I'm sure this doesn't tell the whole story, I'm not sure you could ever sell Nvidia as the underdog here.

Kjella - Thursday, July 2, 2015 - link

Sorry but that is plain wrong as nVidia wasn't just bigger than ATI, they were bigger than AMD. Their market cap in Q2 2006 was $9.06 billion, on the purchase date AMD was worth $8.84 billion and ATI $4.2 billion. It took a massive cash/stock deal worth $5.6 billion to buy ATI, including over $2 billion in loans. AMD stretched to the limit to make this happen, three days later Intel introduced the Core 2 processor and it all went downhill from there as AMD couldn't invest more and struggled to pay interest on falling sales. And AMD made an enemy of nVidia, which Intel could use to boot nVidia out of the chipset/integrated graphics market by not licensing QPI/DMI with nVidia having nowhere to go. It cost them $1.5 billion, but Intel has made back that several times over since.

kspirit - Thursday, July 2, 2015 - link

That was pretty savage of Intel, TBH. I'm impressed.

Iketh - Monday, July 6, 2015 - link

or you could say AMD purposely finalized the purchase just before Core2 was introduced... after Core2, the purchase would have been impossible

Wreckage - Thursday, July 2, 2015 - link

http://money.cnn.com/2006/07/24/technology/nvidia_...

AMD was worth $8.5B and ATI was worth $5B at the time of the merger making them worth about twice what NVIDIA was worth at the time ($7B)

In 2004 NVIDIA had a market cap of $2.4B and ATI was at $4.3B nearly twice.

http://www.tomshardware.com/news/nvidias-market-sh...

NVIDIA was the underdog until the combined AMD+ATI collapsed and lost most of their value. They are Goliath brought down by David.