LG 34UM67: UltraWide FreeSync Review

by Jarred Walton on March 31, 2015 3:00 PM EST

LG 34UM67 Display Uniformity

Given the size of the display, creating good uniformity can be difficult. Most of the display does reasonably well, with the corners tending to vary a bit more than the center. The left side of our sample in particular looks a bit dim, but it’s only something you really notice when you look for it.

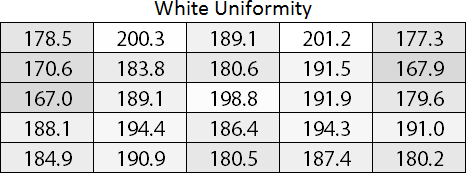

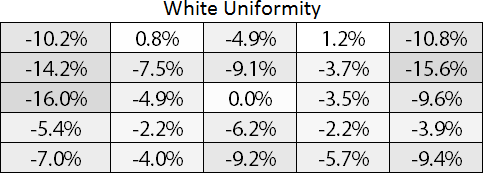

Starting with white uniformity, the center ends up being close to the brightest area, with most other sectors dropping off slightly. The center portion along with the bottom are all within 10%, which is a good result, but the top left and right corners fall off by up to 15%. Professionals would appreciate better uniformity overall, but for gaming the LG 34UM67 works well.
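For readers who want to see how a "within 10%" or "off by up to 15%" figure falls out of a grid of readings, here is a minimal sketch. The 3x3 grid and its values are hypothetical placeholders, not the measurements from our charts, and the helper name `deviation_from_center` is made up for illustration.

```python
def deviation_from_center(grid):
    """Return each zone's percent deviation from the center zone's luminance."""
    rows, cols = len(grid), len(grid[0])
    center = grid[rows // 2][cols // 2]
    return [[(value - center) / center * 100.0 for value in row] for row in grid]


# Hypothetical 3x3 white-level readings in cd/m² (NOT the review's data).
sample = [
    [170.0, 190.0, 172.0],
    [185.0, 200.0, 188.0],
    [184.0, 195.0, 186.0],
]

deviations = deviation_from_center(sample)
worst = min(min(row) for row in deviations)  # most negative zone, i.e. the dimmest

for row in deviations:
    print("  ".join(f"{value:+6.1f}%" for value in row))
print(f"Largest drop-off from center: {worst:.1f}%")
```

In this made-up grid the top-left zone sits 15% below the center, which is the kind of corner fall-off described above.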

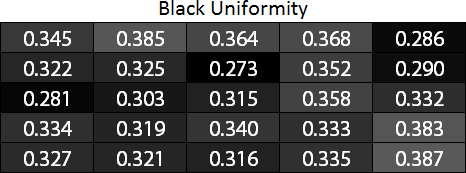

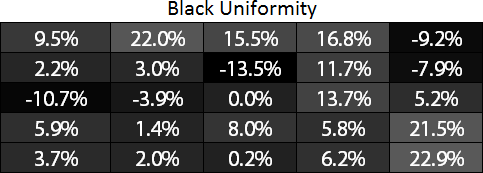

Black uniformity, interestingly, is a bit of a reverse from the white, with many areas showing slightly higher black levels than the center. However, our i1 Pro is not the best device for measuring black levels, and the actual difference between 0.315 cd/m² and 0.387 cd/m² isn’t all that great to the naked eye, even though it’s a 23% difference. There’s a lot of variability in the charts, but mostly the corners seem to be the biggest outliers.
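The 23% figure is simply the relative difference between the two black levels quoted above, taking the lower reading as the reference. A quick sketch of that arithmetic (the function name is just for illustration):

```python
def percent_difference(reference, value):
    """Relative difference of value versus reference, in percent."""
    return (value - reference) / reference * 100.0


# The two black levels quoted in the text, in cd/m².
pct = percent_difference(0.315, 0.387)
print(f"{pct:.1f}%")  # 22.9%, which rounds to the 23% quoted
```

The absolute gap is only 0.072 cd/m², which is why a large percentage can still be hard to see.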

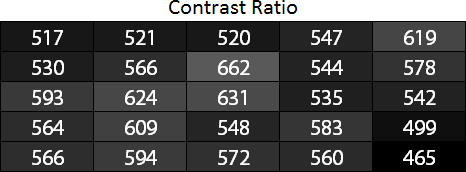

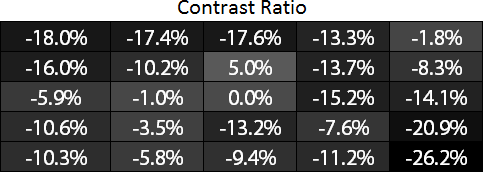

Compared to our earlier calibrated results, our uniformity contrast measurements have all fallen off quite a bit. Our measured contrast this time ranges from 465:1 in the bottom-right corner to as high as 662:1 just above the center, but I think most of the black levels were measured too high, so the contrast results are only moderately useful.
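Contrast ratio is white luminance divided by black luminance, so a black level that reads too high directly drags the ratio down. The sketch below uses the two black levels from the black uniformity discussion with an assumed 200 cd/m² white level; that white level is a hypothetical value chosen for illustration, not a figure from these charts.

```python
def contrast_ratio(white, black):
    """Contrast ratio as white luminance divided by black luminance."""
    return white / black


ASSUMED_WHITE = 200.0  # cd/m², hypothetical white level for illustration only

for black in (0.315, 0.387):  # black levels quoted earlier, in cd/m²
    ratio = contrast_ratio(ASSUMED_WHITE, black)
    print(f"black {black:.3f} cd/m² -> {ratio:.0f}:1")
```

Under that assumption, the 0.072 cd/m² difference in black level alone moves the result from roughly 635:1 to roughly 517:1, so small errors in the black reading account for a lot of the spread.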

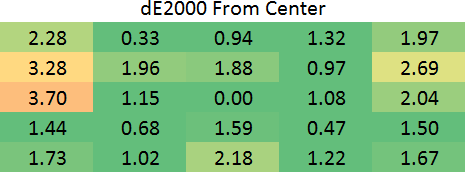

Delta E shows similar uniformity again. The top-left edge and top-right seem to have the greatest variance, but for a non-professional display most of this discussion is academic.

The short summary is that uniformity on the LG 34UM67 is good but not exceptional. There will obviously be differences between panels, so where we had problems primarily on the corners and left/right edges, other displays may show more or fewer issues. Perhaps the most telling aspect is that prior to testing uniformity, I looked carefully over the display with a variety of solid background images to see if I could detect any problems. There are some very minor discolorations that show up primarily when viewing pure white, but the size of the display puts the corners at a more acute viewing angle, so that often feels like as big a problem as display uniformity.

96 Comments


dragonsqrrl - Wednesday, April 1, 2015 - link

"FreeSync actually has a far wider range than G-Sync so when a monitor comes out that can take advantage of it it will probably be awesome."

That's completely false. Neither G-Sync nor the Adaptive-Sync spec have inherent limitations to frequency range. Frequency ranges are imposed due to panel specific limitations, which vary from one to another.

bizude - Thursday, April 2, 2015 - link

Price Premium?! It's 50$ cheaper than it's predeccessor, the 34UM65, for crying out loud, and has a higher refresh rate as well.

AnnonymousCoward - Friday, April 3, 2015 - link

The $ goes on the left of the number.

gatygun - Tuesday, June 30, 2015 - link

1) 27 hz range isn't a issue, you just have to make sure you game runs at 48+ fps at any time, which means you need to drop settings until you hit 60+ on average in less action packed games and 75 average on fast paced packed action games which have a wider gap with low fps.

The 75hz upper limit isn't a issue as you can simple use msi afterburner to lock it towards 75 fps.

The 48hz should actually have been 35 or 30, it would make it easier for the 290/290x for sure and you can push better visuals. But the screen is a 75hz screen and that's where you should be aiming for.

This screen will work perfectly in games like diablo 3 / path of exile / mmo's which are simplistic gpu performance games and will push 75 fps without a issue.

For newer games like witcher 3, yes you need to trade off a lot of settings to get that 48 fps minimum, but at the same time you can just enable v-sync and deal with the additional controlled lag from those few drops you get in stressing situations. You can see them as your gpu not being up to par. crossfire will happen at some point.

2) Extra features will cost extra money, as they will have to write additional stuff down, write additional software functions etc. It's never free, it's just free that amd gpu's handle the hardware side of things instead of having to buy licenses and hardware and plant them into the screens. So technically specially in comparison towards nvidia it can be seen as free.

The 29um67 is atm the cheapest freesync monitor on top of it, it's the little brother of this screen, but for the price and what it brings it's extremely sharp priced for sure.

I'm also wondering why nobody made any review on that screen tho, the 34inch isn't great ppi wise while the 29inch is perfect for that resolution. But oh well.

3) In my opinion the 34 isn't worth it, the 29um67 is where people should be looking at, with a price tag of 330 atm, it's basically 2x cheaper if not 3x then the swift. There is no competition.

I agree that input lag is really needed for gaming monitors and it's a shame they didn't spend much attention towards it anymore.

All with all the 29um67 is a solid screen for what you get, the 48 minimum is indeed not practical, but if you like your games hitting high framerates before anything else this will surely work.

twtech - Wednesday, April 1, 2015 - link

It seems like the critical difference between FreeSync and GSync is that FreeSync will likely be available on a wide-range of monitors at varying price points, whereas GSync is limited to very high-end monitors with high max refresh rates, and they even limit the monitors to a single input only for the sake of minimizing pixel lag.

I like AMD's approach here, because most people realistically aren't going to want to spend what it costs for a GSync-capable monitor, and even if the FreeSync experience isn't perfect with the relatively narrow refresh rate range that most ordinary monitors will support, it's better than nothing.

If somebody who currently has an nVidia card buys a monitor like this one just because they want a 34" ultrawide, maybe they will be tempted to go AMD for their next graphics upgrade, because it supports adaptive refresh rate with the display that they already have.

I think ultimately that's why nVidia will have to give in and support FreeSync. If they don't, they risk effectively losing adaptive sync as a feature to AMD for all but the extreme high end users.

Ubercake - Thursday, April 2, 2015 - link

Right now you can get a G-sync monitor anywhere between $400 and $800.

AMD originally claimed adding freesync tech to a monitor wouldn't add to the cost, but somehow it seems to.

Ubercake - Thursday, April 2, 2015 - link

Additionally, it's obvious by the frequency range limitation of this monitor that the initial implementation of the freesync monitors is not quite up to par. If this technology is so capable, why limit it out of the gate?

Black Obsidian - Thursday, April 2, 2015 - link

LG appears to have taken the existing 34UM65, updated the scaler (maybe a new module, maybe just a firmware update), figured out what refresh rates the existing panel would tolerate, and kicked the 34UM67 out the door at the same initial MSRP as its predecessor.

And that's not necessarily a BAD approach, per se, just one that doesn't fit everybody's needs. If they'd done the same thing with the 34UM95 as the basis (3440x1440), I'd have cheerfully bought one.

bizude - Thursday, April 2, 2015 - link

Actually the MSRP is $50 cheaper than the UM65

gatygun - Tuesday, June 30, 2015 - link

Good luck getting 48 minimums on a 3440x1440 resolution on a single 290x as crossfire isn't working with freesync.