The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

Introduction to FreeSync and Adaptive Sync

The first time anyone talked about adaptive refresh rates for monitors – specifically applying the technique to gaming – was when NVIDIA demoed G-SYNC back in October 2013. The idea seemed so logical that I had to wonder why no one had tried to do it before. Certainly there are hurdles to overcome, e.g. what to do when the frame rate is too low, or too high; getting a panel that can handle adaptive refresh rates; supporting the feature in the graphics drivers. Still, it was an idea that made a lot of sense.

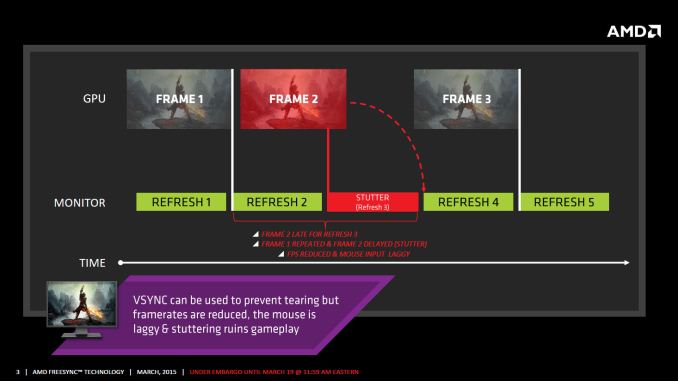

The impetus behind adaptive refresh is to overcome visual artifacts and stutter caused by the normal way of updating the screen. Briefly, the display is updated with new content from the graphics card at set intervals, typically 60 times per second. While that’s fine for normal applications, when it comes to games there are often cases where a new frame isn’t ready in time, causing a stall or stutter in rendering. Alternatively, the screen can be updated as soon as a new frame is ready, but that often results in tearing – where the top part of the screen shows the previous frame and the bottom part shows the next frame (or frames in some cases).
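To make the stutter case concrete, here is a minimal Python sketch of when frames reach the screen under a fixed refresh with V-Sync versus an adaptive refresh. It is an illustration under simplifying assumptions, not a model of any real driver or panel: the function names are hypothetical, the GPU is assumed to start the next frame only after the previous one is displayed, the panel's minimum refresh rate is ignored, and tearing (V-Sync off) is not modeled.

```python
import math


def vsync_present_times(render_ms, refresh_hz=60.0):
    """Fixed refresh with V-Sync: a finished frame waits for the next refresh tick."""
    interval = 1000.0 / refresh_hz
    start = 0.0
    shown = []
    for render in render_ms:
        done = start + render
        # Round up to the next refresh tick (small epsilon guards float noise).
        ticks = math.ceil(done / interval - 1e-9)
        present = ticks * interval
        shown.append(present)
        start = present  # assumption: next frame starts after the flip
    return shown


def adaptive_present_times(render_ms, max_hz=144.0):
    """Adaptive refresh: the panel refreshes when the frame is ready,
    but never faster than its maximum refresh rate."""
    min_interval = 1000.0 / max_hz
    start = 0.0
    last = None
    shown = []
    for render in render_ms:
        done = start + render
        present = done if last is None else max(done, last + min_interval)
        shown.append(present)
        last = present
        start = present  # same simplifying assumption as above
    return shown


def frame_intervals(present_times):
    """Time between consecutive displayed frames, starting from t = 0."""
    intervals = []
    prev = 0.0
    for t in present_times:
        intervals.append(t - prev)
        prev = t
    return intervals


if __name__ == "__main__":
    # Hypothetical render times in milliseconds; one frame misses the 60 Hz deadline.
    renders = [14.0, 18.0, 14.0, 15.0]
    fixed = frame_intervals(vsync_present_times(renders, refresh_hz=60.0))
    adaptive = frame_intervals(adaptive_present_times(renders, max_hz=144.0))
    print("V-Sync intervals (ms):  ", [round(x, 1) for x in fixed])
    print("Adaptive intervals (ms):", [round(x, 1) for x in adaptive])
```

With V-Sync, any frame that takes slightly longer than one refresh interval is held for a full extra interval, which is the stutter described above; with adaptive refresh the displayed interval simply tracks the render time.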

Neither input lag/stutter nor image tearing is desirable, so NVIDIA set about creating a solution: G-SYNC. Perhaps the most difficult aspect for NVIDIA wasn’t creating the core technology but rather getting display partners to create and sell what would ultimately be a niche product – G-SYNC requires an NVIDIA GPU, so that rules out a large chunk of the market. Not surprisingly, the result was that G-SYNC took a bit of time to reach the market as a mature solution, with the first displays that supported the feature requiring modification by the end user.

Over the past year we’ve seen more G-SYNC displays ship that no longer require user modification, which is great, but pricing of the displays so far has been quite high. At present the least expensive G-SYNC displays are 1080p144 models that start at $450; similar displays without G-SYNC cost about $200 less. Higher spec displays like the 1440p144 ASUS ROG Swift cost $759 compared to other WQHD displays (albeit not 120/144Hz capable) that start at less than $400. And finally, 4Kp60 displays without G-SYNC cost $400-$500 whereas the 4Kp60 Acer XB280HK will set you back $750.

When AMD demonstrated their alternative adaptive refresh rate technology and cleverly called it FreeSync, it was a clear jab at the added cost of G-SYNC displays. As with G-SYNC, it has taken some time from the initial announcement to actual shipping hardware, but AMD has worked with the VESA group to implement FreeSync as an open standard that’s now part of DisplayPort 1.2a, and they aren’t getting any royalties from the technology. That’s the “Free” part of FreeSync, and while it doesn’t necessarily guarantee that FreeSync enabled displays will cost the same as non-FreeSync displays, the initial pricing looks quite promising.

There may be some additional costs associated with making a FreeSync display, though mostly these costs come in the way of using higher quality components. The major scaler companies – Realtek, Novatek, and MStar – have all built FreeSync (DisplayPort Adaptive Sync) into their latest products, and since most displays require a scaler anyway there’s no significant price increase. But if you compare a FreeSync 1440p144 display to a “normal” 1440p60 display of similar quality, the support for higher refresh rates inherently increases the price. So let’s look at what’s officially announced right now before we continue.

350 Comments


medi03 - Thursday, March 19, 2015 - link

nVidia's royalty cost for GSync is infinity. They've stated they were not going to license it to anyone.

chizow - Thursday, March 19, 2015 - link

It's actually nil, they have never once said there is a royalty fee attached to G-Sync.

Creig - Friday, March 20, 2015 - link

TechPowerup

"NVIDIA Sacrifices VESA Adaptive Sync Tech to Rake in G-SYNC Royalties"

WCCF Tech

"AMD FreeSync, unlike Nvidia G-Sync is completely and utterly royalty free"

The Tech Report

"Like the rest of the standard—and unlike G-Sync—this "Adaptive-Sync" feature is royalty-free."

chizow - Friday, March 20, 2015 - link

@Creig

Again, please link confirmation from Nvidia that G-Sync carries a penny of royalty fees. BoM for the G-Sync module is not the same as a Royalty Fee, especially because as we have seen, that G-Sync module may very well be the secret sauce FreeSync is missing in providing an equivalent experience as G-Sync.

Indeed, a quote from someone who didn't just take AMD's word for it: http://www.pcper.com/reviews/Displays/AMD-FreeSync...

"I have it from people that I definitely trust that NVIDIA is not charging a licensing fee to monitor vendors that integrate G-Sync technology into monitors. What they do charge is a price for the G-Sync module, a piece of hardware that replaces the scalar that would normally be present in a modern PC display."

JarredWalton - Friday, March 20, 2015 - link

Finish the quote:

"It might be a matter of semantics to some, but a licensing fee is not being charged, as instead the vendor is paying a fee in the range of $40-60 for the module that handles the G-Sync logic."

Of course, royalties for using the G-SYNC brand are the real question -- not royalties for using the G-SYNC module. But even if NVIDIA doesn't charge "royalties" in the normal sense, they're charging a premium for a G-SYNC scaler compared to a regular scaler. Interestingly, if the G-SYNC module is only $40-$60, that means the LCD manufacturers are adding $100 over the cost of the module.

chizow - Friday, March 20, 2015 - link

Why is there a need to finish the quote? If you get a spoiler and turbo charger in your next car, are they charging you a royalty fee? It's not semantics to anyone who actually understands the simple notion: better tech = more hardware = higher price tag.

AnnihilatorX - Sunday, March 22, 2015 - link

Nvidia is making profit over the Gsync module, how's that different from a royalty?

chizow - Monday, March 23, 2015 - link

@AnnihilatorX, how is "making profit from" suddenly the key determining factor for being a royalty? Is AMD charging you a royalty every time you buy one of their graphics cards? Complete and utter rubbish. Honestly, as much as some want to say it is "semantics", it really isn't, it comes down to clear definitions that are specific in usage particularly in legal or contract contexts.

A Royalty is a negotiated fee for using a brand, good, or service that is paid continuously per use or at predetermined intervals. That is completely different than charging a set price for Bill of Material for an actual component that is integrated into a product you can purchase at a premium. It is obvious to anyone that additional component adds value to the product and is reflected in the higher price. This is in no way, a royalty.

Alexvrb - Monday, March 23, 2015 - link

Jarred, Chizow is the most diehard Nvidia fanboy on Anandtech. There is nothing you could say to him to convince him that Freesync/Adaptive Sync is in any way better than G-Sync (pricing or otherwise). Just being unavailable on Nvidia hardware makes it completely useless to him. At least until Nvidia adopts it. Then it'll suddenly be a triumph of open standards, all thanks to VESA and Nvidia and possibly some other unimportant company.

chizow - Monday, March 23, 2015 - link

And Alexvrb is one of the staunchest AMD supporters on Anandtech. There is quite a lot that could convince me FreeSync is better than G-Sync, that it actually does what it sets out to do without issues or compromise, but clearly that isn't covered in this Bubble Gum Review of the technology. Unlike the budget-focused crowd that AMD targets, Price is not going to be the driving factor for me especially if one solution is better than the other at achieving what it sets out to do, so yes, while Freesync is cheaper, to me it is obvious why, it's just not as good as G-Sync.

But yes, I'm honestly ambivalent to whether or not Nvidia supports Adaptive Sync, as long as they continue to support G-Sync as their premium option then it's np. Supporting Adaptive Sync as their cheap/low-end solution would just be one less reason for anyone to buy an AMD graphics card, which would probably be an unintended consequence for AMD.